Welcome to the SQL showdown series!

What is this and how does it work?

I'm committing to publishing a SQL challenge every day for 30 days. In each post I'll describe my solution to the last day's challenge. I'll follow that up with a full description of the challenge for the next day.

Write your own solution in the comments! Let's see who can come up with the most creative solutions.

Challenge #12: Greatest Discount

For today's challenge I'll pose a problem that involves finding date overlaps in the data. Here's the challenge itself:

Suppose you have a table of discount rates, each of which is only valid for a limited time, but discounts are cumulative. Can you find the greatest possible cumulative discount rate?

Here's an example of the data we have to play with:

The greatest cumulative discount, given the data above, would be 0.35.

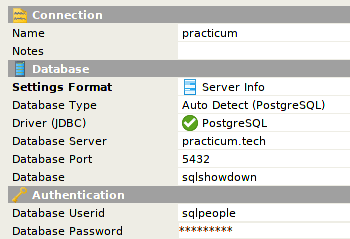

Sandbox Connection Details

I have a PostgreSQL database ready for you to play with.

The password is the same as the username! To query the discount table:

SELECT * FROM day12.discount;

Solution to Challenge #11: Soft Deletes

If you've read yesterday's challenge, you'll know I opted to change things up a bit, and instead of asking for an answer in the form of a query, I posed a more open-ended design question.

The challenge focused on how we could implement the soft delete pattern in a database, while asking a number of questions about possible issues that might affect the design:

What happens to related child data when a parent is soft deleted? If a user deletes an "account" record, what happens to all of the related "order" records?

The entire application is now responsible for making sure that queries filter out all "deleted" records. Write enough queries, and sooner or later someone will forget to filter out the deleted records.

What affect will deleted records have on constraints that might be in place? If I have a unique constraint on a field called name, should users be able to create new records that re-use an existing name on a soft-deleted record?

As I thought through this problem, I realized that there are many complications that come with adding soft deletes. In fact, if you look at the most popular StackOverflow answer regarding soft deletes, quite a few people advocate avoiding this pattern if at all possible.

Assuming we believe the complexity is worthwhile, how can we implement soft deletes in a way that doesn't add a great deal of bug-prone overhead?

Let's talk through some possible solutions.

Adding a deleted_on column

In this scenario a deleted_on date-time column would be added to any table that allows soft deletes. A null value indicates a currently active record.

The pros:

- Solution is easy to implement.

- Unique constraints can still function if the database supports partial indexes.

The cons:

- Querying the table becomes more complex. Every query needs an additional criteria, or a view must be created to handle the filtering. This may not be an attractive solution because ORMs don't tend to have great support for creating, maintaining, or accessing data through views.

- The behavior of relationships between tables is not very well defined. Foreign keys lose meaning if deleting a record does not really mean deleting it.

Archive tables

Instead of adding a deleted flag or timestamp, we can introduce archive tables that are (nearly) identical to the original version. Any deleted records are first inserted into an associated archive table prior to removal from the original.

Archive tables should have the same columns as their associated original, but would not have unique constraints. Copying records that are about to be deleted would need to be done in application code, or in the database itself via a trigger.

The pros:

- Queries can operate without knowledge of soft deletes.

- Foreign keys function as they normally would. Any cascading deletes or updates result in the expected behavior.

The cons:

- Depending on how many tables need the soft delete pattern, the size of the schema can balloon. Maintaining the archive tables as schema migrations occur would be painful to do by hand. Some databases, such as PostgreSQL, support table inheritance, which could make maintenance easier.

Good luck!

Have fun, and I can't wait to see what you come up with! I'll be back tomorrow with a solution to this problem and a new challenge.

Top comments (0)