I will explain this with an example:

Scenario: A gaming company collects petabytes of game logs produced by games in the cloud.

Business Requirements: The company wants to analyze these logs to gain insights into customer preferences, demographics, and usage behavior. It also wants to identify up-sell and cross-sell opportunities, develop compelling new features, drive business growth, and provide a better experience to its customers.

Tech Requirements:

1. To analyze these logs, the company needs to use reference data such as customer information, game information, and marketing campaign information that is in an on-premises data store.

2. The company wants to utilize this data from the on-premises data store, combining it with additional log data that it has in a cloud data store.

3. To extract insights, it hopes to process the joined data by using a Spark cluster in the cloud (Azure HDInsight).

4. It wants to publish the transformed data into a cloud data warehouse such as Azure Synapse Analytics to easily build a report on top of it.

5. It wants to automate this workflow, and monitor and manage it on a daily schedule. It also wants to execute it when files land in a blob store container.

Solution using ADF:

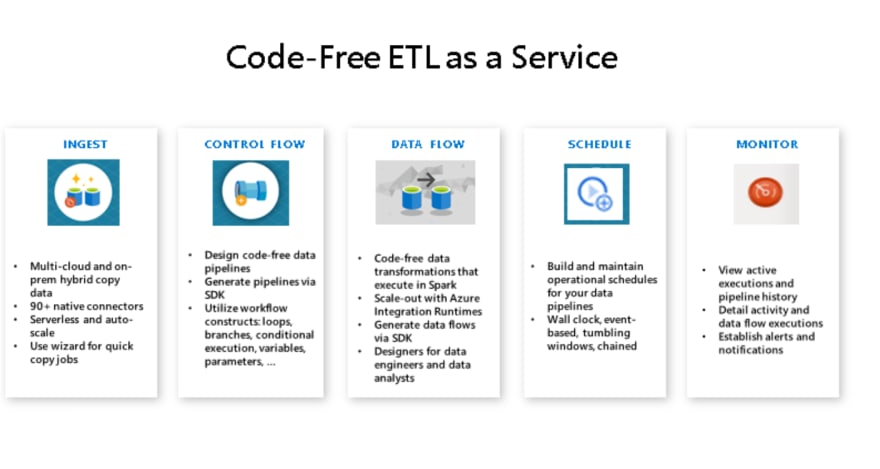

Using Azure Data Factory, you can create and schedule data-driven workflows (called pipelines) that ingest data from disparate data stores. You can build complex ETL processes that transform data visually with data flows or by using compute services such as Azure HDInsight Hadoop, Azure Databricks, and Azure SQL Database.
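To make the idea of a pipeline more concrete, here is a minimal sketch in Python that models the scenario's workflow as a dictionary loosely shaped like a Data Factory pipeline definition (a list of activities chained with `dependsOn`). The pipeline and activity names are hypothetical, and `typeProperties` is left empty on purpose: a real definition would also need datasets, linked services, and source/sink settings. The small helper only derives the order in which the activities would run.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def activity(name, activity_type, depends_on=()):
    """Build one activity entry; each dependency must succeed before this one runs."""
    return {
        "name": name,
        "type": activity_type,
        "dependsOn": [
            {"activity": dep, "dependencyConditions": ["Succeeded"]}
            for dep in depends_on
        ],
        # Left empty in this sketch; real activities need source/sink or job settings.
        "typeProperties": {},
    }


# Hypothetical names, chosen only to mirror the scenario in this article.
pipeline = {
    "name": "GameLogsPipeline",
    "properties": {
        "activities": [
            # Bring on-premises reference data into the cloud.
            activity("CopyReferenceData", "Copy"),
            # Join and process the data on an HDInsight Spark cluster.
            activity("ProcessLogsWithSpark", "HDInsightSpark",
                     depends_on=["CopyReferenceData"]),
            # Load the transformed data into the data warehouse.
            activity("PublishToSynapse", "Copy",
                     depends_on=["ProcessLogsWithSpark"]),
        ]
    },
}


def execution_order(pipeline_def):
    """Return activity names in an order that respects every dependsOn entry."""
    graph = {
        act["name"]: {dep["activity"] for dep in act["dependsOn"]}
        for act in pipeline_def["properties"]["activities"]
    }
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(json.dumps(pipeline, indent=2))
    print(execution_order(pipeline))
```

The point of the sketch is that a pipeline is declarative: you describe the activities and their dependencies, and the service works out when each step can run.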

Additionally, you can publish your transformed data to data stores such as Azure Synapse Analytics for business intelligence (BI) applications to consume. Ultimately, through Azure Data Factory, raw data can be organized into meaningful data stores and data lakes for better business decisions.
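The automation requirement from the scenario (run daily, and also run when files land in a blob container) maps to triggers in Azure Data Factory. The sketch below builds two trigger definitions as Python dictionaries, loosely following the JSON shape of a schedule trigger and a blob event trigger. The trigger names, pipeline name, start time, container path, and the storage account placeholder are all assumptions for illustration, not values from a real factory.

```python
import json


def pipeline_ref(name):
    """Reference to the pipeline a trigger should start."""
    return {"pipelineReference": {"type": "PipelineReference", "referenceName": name}}


PIPELINE_NAME = "GameLogsPipeline"  # hypothetical pipeline name

# Runs the pipeline once a day.
daily_trigger = {
    "name": "DailyTrigger",  # hypothetical
    "properties": {
        "type": "ScheduleTrigger",
        "typeProperties": {
            "recurrence": {
                "frequency": "Day",
                "interval": 1,
                "startTime": "2024-01-01T00:00:00Z",  # arbitrary example value
                "timeZone": "UTC",
            }
        },
        "pipelines": [pipeline_ref(PIPELINE_NAME)],
    },
}

# Runs the pipeline when a new blob is created under the given path.
blob_trigger = {
    "name": "NewLogFileTrigger",  # hypothetical
    "properties": {
        "type": "BlobEventsTrigger",
        "typeProperties": {
            "blobPathBeginsWith": "/gamelogs/blobs/",  # hypothetical container
            "events": ["Microsoft.Storage.BlobCreated"],
            "scope": "<storage-account-resource-id>",  # placeholder, fill in your own
        },
        "pipelines": [pipeline_ref(PIPELINE_NAME)],
    },
}

if __name__ == "__main__":
    for trigger in (daily_trigger, blob_trigger):
        print(json.dumps(trigger, indent=2))
```

Both triggers point at the same pipeline, so the workflow itself is defined once and can be started either on a schedule or by an event.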

Check out the next article in the series:

https://dev.to/swatibabber/how-does-azure-data-factory-work-j5n
