I contributed new features to the just-released Django 3.2, related to compressed fixtures and fixtures compression. In this article I explore the topic and present some sample benchmarks.

Management Commands

As reported in the documentation, the changes concern two management commands:

- loaddata now supports fixtures stored in XZ archives (.xz) and LZMA archives (.lzma).

- dumpdata now can compress data in the bz2, gz, lzma, or xz formats.

loaddata

The loaddata command searches for and loads the contents of the named fixture into the database.

Compressed fixtures

Django 3.2 added support for xz archives (.xz) and lzma archives (.lzma).

Fixtures may be compressed in zip, gz, bz2, lzma, or xz format.

For example, `django-admin loaddata mydata.json` would look for any of mydata.json, mydata.json.zip, mydata.json.gz, mydata.json.bz2, mydata.json.lzma, or mydata.json.xz.

The first file contained within a compressed archive is used.
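As a rough illustration of the lookup described above, here is a minimal sketch using only the Python standard library. The `READERS` mapping and the `candidate_names` helper are hypothetical names chosen for this example, not Django's internal code; the sketch only mirrors the documented behaviour (the suffixes that are tried, and the first file of a zip archive being used).

```python
import bz2
import gzip
import lzma
import os
import tempfile
import zipfile


def _read_zip(path):
    # A zip archive can hold several files; only the first one is read.
    with zipfile.ZipFile(path) as archive:
        return archive.read(archive.namelist()[0])


def _read_with(opener):
    # Build a reader for single-stream formats (plain, gz, bz2, lzma, xz).
    def read(path):
        with opener(path, "rb") as handle:
            return handle.read()
    return read


# Suffix -> reader. Hypothetical mapping, for illustration only.
READERS = {
    "": _read_with(open),
    ".zip": _read_zip,
    ".gz": _read_with(gzip.open),
    ".bz2": _read_with(bz2.open),
    ".lzma": _read_with(lzma.open),  # lzma.open auto-detects .lzma and .xz
    ".xz": _read_with(lzma.open),
}


def candidate_names(fixture_name):
    """Return the file names tried for a fixture, uncompressed first."""
    return [fixture_name + suffix for suffix in READERS]


print(candidate_names("mydata.json"))

# A zip archive with two members: only the first one is returned.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "mydata.json.zip")
    with zipfile.ZipFile(path, "w") as archive:
        archive.writestr("first.json", "[]")
        archive.writestr("second.json", "[1]")
    first = READERS[".zip"](path)
```

In a real project none of this is needed: loaddata performs the lookup and decompression itself.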

dumpdata

The dumpdata command outputs all data in the database associated with some or all installed applications. The output of dumpdata can be used as input for loaddata.

Fixtures compression

Django 3.2 added support for dumping data directly to a compressed file.

The output file can be compressed with one of the bz2, gz, lzma, or xz formats by ending the filename with the corresponding extension.

For example, to output the data as a compressed JSON file: `django-admin dumpdata -o mydata.json.gz`
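The idea of picking the compression format from the file extension can be sketched with the standard library alone. The `WRITERS` mapping, the `write_fixture` helper, and the sample record below are assumptions made for this example; Django's own implementation may differ.

```python
import bz2
import gzip
import json
import lzma
import os
import tempfile

# Extension -> (opener, extra keyword arguments). Hypothetical mapping,
# for illustration only.
WRITERS = {
    ".bz2": (bz2.open, {}),
    ".gz": (gzip.open, {}),
    # The legacy .lzma container must be requested explicitly when writing.
    ".lzma": (lzma.open, {"format": lzma.FORMAT_ALONE}),
    ".xz": (lzma.open, {}),
}


def write_fixture(records, filename):
    """Serialize records as JSON, compressing if the extension asks for it."""
    extension = os.path.splitext(filename)[1]
    # Unknown extensions (such as .json) fall back to a plain text file.
    opener, kwargs = WRITERS.get(extension, (open, {}))
    with opener(filename, "wt", encoding="utf-8", **kwargs) as stream:
        json.dump(records, stream)


# Made-up record in the shape of a serialized model instance.
records = [{"model": "app.item", "pk": 1, "fields": {"name": "example"}}]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "mydata.json.gz")
    write_fixture(records, path)
    # Read the file back to check that the round trip preserves the data.
    with gzip.open(path, "rt", encoding="utf-8") as stream:
        restored = json.load(stream)

print(restored == records)
```

Dispatching on the extension keeps the command-line interface unchanged: the only thing the user has to do is choose the output file name.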

Benchmarks

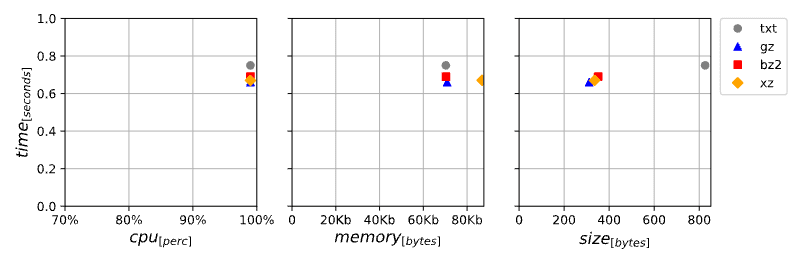

After developing the new fixtures compression feature, I ran benchmarks for the supported file formats on different databases, from small projects to larger ones.

The benchmarks were run on my PC and are only examples of the relationship between the time, file size, memory, and CPU usage needed to export data directly to the different types of compressed files.
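For readers who want a quick comparison on their own machine, here is a minimal sketch that times the standard library compressors on the same payload. It is not the procedure used for the tables in this article: it compresses a synthetic, in-memory payload (an assumption made for this example) instead of running dumpdata, and it measures only time and size.

```python
import bz2
import gzip
import json
import lzma
import time

# Synthetic, repetitive payload standing in for a real dumpdata export.
payload = json.dumps(
    [
        {"model": "app.item", "pk": pk, "fields": {"name": f"item {pk}"}}
        for pk in range(10_000)
    ]
).encode("utf-8")

# "txt" is the uncompressed baseline, the others use default settings.
COMPRESSORS = {
    "txt": lambda data: data,
    "gz": gzip.compress,
    "bz2": bz2.compress,
    "xz": lzma.compress,
}

results = {}
for name, compress in COMPRESSORS.items():
    start = time.perf_counter()
    compressed = compress(payload)
    elapsed = time.perf_counter() - start
    results[name] = (elapsed, len(compressed))

# Print the results in the same table layout used in this article.
print("| type | time | size |")
print("|---|---|---|")
for name, (elapsed, size) in results.items():
    print(f"| {name} | {elapsed:.4f} | {size} |")
```

The absolute numbers depend on the machine and on the data, so only the relative differences between formats are meaningful.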

System info

```python
import os
import platform

# Print basic information about the machine used for the benchmarks.
# os.sysconf is only available on Unix-like systems.
print(f"Architecture:\t{platform.architecture()[0]}")
print(f"Machine type:\t{platform.machine()}")
print(f"System glibc:\t{platform.libc_ver()[1]}")
print(f"System memory:\t{os.sysconf('SC_PAGE_SIZE') * os.sysconf('SC_PHYS_PAGES')}")
print(f"System release:\t{platform.release()}")
print(f"System type:\t{platform.system()}")
print(f"Python impl.:\t{platform.python_implementation()}")
print(f"Python version:\t{platform.python_version()}")
```

```
Architecture: 64bit
Machine type: x86_64
System glibc: 2.32
System memory: 33402449920
System release: 5.8.0-48-generic
System type: Linux
Python impl.: CPython
Python version: 3.8.6
```

Benchmark 01

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 0.75 | 70300 | 99 | 826 |
| gz | 0.66 | 70920 | 99 | 312 |
| bz2 | 0.69 | 70372 | 99 | 351 |
| xz | 0.67 | 86832 | 99 | 336 |

Benchmark 02

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 0.67 | 70260 | 99 | 1202 |
| gz | 0.66 | 70868 | 99 | 501 |
| bz2 | 0.66 | 70560 | 99 | 538 |
| xz | 0.68 | 86860 | 99 | 532 |

Benchmark 03

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 1.03 | 72856 | 98 | 872126 |
| gz | 1.08 | 72904 | 99 | 30446 |
| bz2 | 1.21 | 79024 | 99 | 20664 |
| xz | 1.14 | 96608 | 99 | 23252 |

Benchmark 04

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 1.53 | 71304 | 98 | 2138437 |
| gz | 1.60 | 72004 | 98 | 257593 |
| bz2 | 1.71 | 77732 | 98 | 198347 |
| xz | 2.42 | 107252 | 99 | 164072 |

Benchmark 05

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 2.10 | 74240 | 98 | 5252952 |
| gz | 2.25 | 74236 | 98 | 405580 |
| bz2 | 2.69 | 80592 | 98 | 334556 |
| xz | 3.22 | 137004 | 99 | 238432 |

Benchmark 06

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 55.31 | 87012 | 73 | 12092981 |
| gz | 71.97 | 87200 | 71 | 845193 |
| bz2 | 53.74 | 93372 | 74 | 688968 |
| xz | 73.19 | 180936 | 73 | 768812 |

Benchmark 07

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 118.74 | 86344 | 74 | 36035128 |
| gz | 183.11 | 86572 | 71 | 3936656 |
| bz2 | 158.76 | 93272 | 73 | 2719186 |
| xz | 220.65 | 181636 | 73 | 2586748 |

Benchmark 08

| type | time | memory | cpu | size |
|---|---|---|---|---|
| txt | 532.92 | 89192 | 79 | 394846146 |
| gz | 711.72 | 89944 | 77 | 94789125 |
| bz2 | 673.47 | 96284 | 78 | 73823620 |
| xz | 1217.50 | 184724 | 79 | 64908128 |

Conclusions

The benchmarks, which export datasets of various sizes directly to compressed files, show that:

- the xz format produces the smallest files in most of the larger benchmarks (04, 05, 07, and 08), at the cost of higher memory usage and longer execution times

- the gz and bz2 formats usually have execution times close to those of an uncompressed text export (at most about 55% longer in these runs), while greatly reducing the space occupied

- the space saved by the compressed files compared to the uncompressed text file ranges from 55% to 98%

- in the worst case (xz) a compressed export takes about twice as long as an uncompressed one, and in the best case (gz) it is about a tenth faster
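The figures in the list above can be rechecked from the benchmark tables with a few lines of Python. The values below are copied from the tables; the variable names are chosen only for this sketch.

```python
# Values copied from the benchmark tables, in (txt, gz, bz2, xz) order.
FORMATS = ("txt", "gz", "bz2", "xz")
SIZES = {
    "01": (826, 312, 351, 336),
    "02": (1202, 501, 538, 532),
    "03": (872126, 30446, 20664, 23252),
    "04": (2138437, 257593, 198347, 164072),
    "05": (5252952, 405580, 334556, 238432),
    "06": (12092981, 845193, 688968, 768812),
    "07": (36035128, 3936656, 2719186, 2586748),
    "08": (394846146, 94789125, 73823620, 64908128),
}
TIMES = {
    "01": (0.75, 0.66, 0.69, 0.67),
    "02": (0.67, 0.66, 0.66, 0.68),
    "03": (1.03, 1.08, 1.21, 1.14),
    "04": (1.53, 1.60, 1.71, 2.42),
    "05": (2.10, 2.25, 2.69, 3.22),
    "06": (55.31, 71.97, 53.74, 73.19),
    "07": (118.74, 183.11, 158.76, 220.65),
    "08": (532.92, 711.72, 673.47, 1217.50),
}

# Space saved by each compressed export relative to the uncompressed one.
savings = [
    1 - size / sizes[0]
    for sizes in SIZES.values()
    for size in sizes[1:]
]

# Run time of each compressed export relative to the uncompressed one.
ratios = [
    elapsed / times[0]
    for times in TIMES.values()
    for elapsed in times[1:]
]

# Format with the smallest output file in each benchmark.
smallest = {
    name: FORMATS[sizes.index(min(sizes))]
    for name, sizes in SIZES.items()
}

print(f"space saved: {min(savings):.0%} to {max(savings):.0%}")
print(f"time ratio: {min(ratios):.2f}x to {max(ratios):.2f}x")
print(smallest)
```

Keeping the raw numbers in a small script like this makes it easy to recompute the summary if new benchmarks are added.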

Exporting fixtures directly to compressed files therefore greatly reduces the space occupied, at the cost of a small increase in the time and resources needed to create them.

In addition, users can choose the file type that best fits their use case, opting for maximum compression (xz) or for greater portability (gz).

External links

- PR #12871: Added tests for loaddata with gzip/bzip2 compressed fixtures.

- Ticket #31552: Loading lzma compressed fixtures.

- PR #12879: Fixed #31552 -- Added support for LZMA and XZ fixtures to loaddata.

- Ticket #32291: Add support for fixtures compression in dumpdata.

- PR #13797: Fixed #32291 -- Added fixtures compression support to dumpdata.

License

This article and the related presentation are released under the Creative Commons Attribution-ShareAlike license (CC BY-SA).

Original

Originally posted on my blog:

https://www.paulox.net/2021/04/06/django-32-news-on-compressed-fixtures-and-fixtures-compression/
