In recent years, natural language processing (NLP) has seen immense progress through the development of large language models (LLMs) like GPT-3, which can generate human-like text and engage in natural conversations. However, a major limitation of these models is that they operate solely in the text domain - they cannot perceive or reason about visual inputs like images. Enabling LLMs to handle multimodal visual tasks alongside language would be a major leap towards more capable artificial intelligence.


A new paper from researchers at Tsinghua University, Tencent AI Lab, and Chinese University of Hong Kong introduces an exciting new method that can efficiently teach existing LLMs to utilize visual tools and models for comprehending and generating images. Their approach, called GPT4Tools, demonstrates that we may not need proprietary models with inaccessible architectures and datasets to impart visual capabilities to language models. This could expand the horizons of what is possible with LLMs using available resources.

Why Visual Grounding Matters for Language Models

Up until now, advanced proprietary models like ChatGPT have shown impressive performance on language tasks through self-supervision on vast amounts of text data. However, their understanding is entirely based on statistical patterns in language corpora - they have no way to ground symbols and words to real-world visual concepts.

This limits their reasoning ability and makes them unreliable for tasks that connect language to vision. For instance, they cannot tell whether a statement like "the balloon is green" is true without seeing the actual image of the balloon. Nor can they generate plausible new images from textual descriptions.

Grounding language in vision ties words to the things they refer to, which gives models some of the common sense they otherwise lack. It also unlocks many applications at the intersection of computer vision and NLP, like visual question answering, image captioning, multimodal search, assistive technology for visually impaired users, and more.

That's why techniques to impart visual capabilities to LLMs could be transformative. The GPT4Tools approach demonstrates an efficient way to do this with available resources.

Overview of Technical Approach

The researchers' key insight is to leverage advanced LLMs like ChatGPT as powerful "teacher models" to provide visual grounding data for training other LLMs.

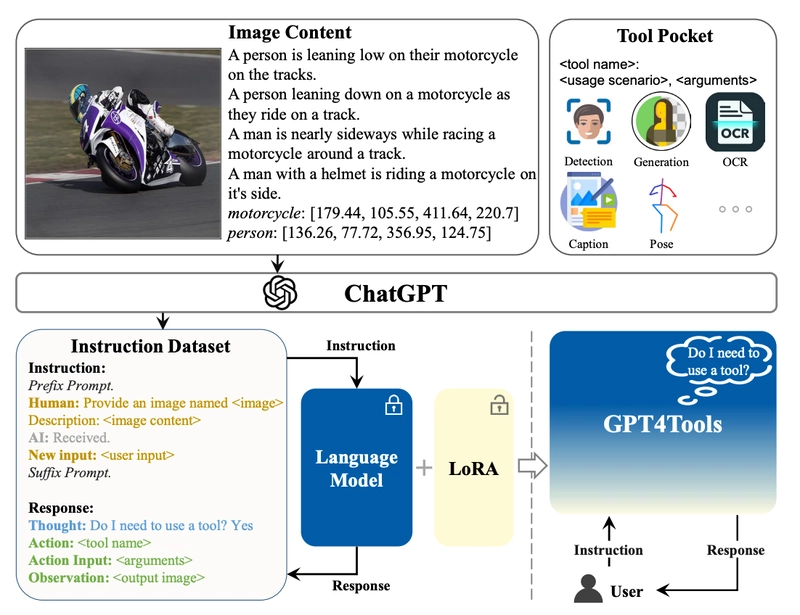

How GPT4Tools works (from the paper)

Here are the main steps of their technical approach:

They first prompt ChatGPT with image captions and tool definitions to generate a dataset of 41k instruction-response pairs that use 23 defined visual tools (for tasks such as segmentation and depth prediction).

Next, they augment this data by adding negative samples (to avoid overfitting on tool usage) and context samples (for complete conversations).

They fine-tune existing smaller LLMs such as Vicuna and OPT on the resulting three-part dataset using Low-Rank Adaptation (LoRA): the base model's weights stay frozen, and only small rank-decomposition matrices are updated. This specializes the model for tool usage efficiently without erasing its language capabilities.

They propose metrics to evaluate whether models can accurately determine when tools should be used, choose the right tools, and provide proper arguments. Test sets are constructed with seen and unseen tools.
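
To make the first two steps above more concrete, here is a minimal sketch of how the teacher prompt and the two kinds of augmented samples might be assembled. Everything in it is an assumption for illustration: the tool names and descriptions, the prompt wording, the dictionary fields, and the helper names are hypothetical and are not taken from the paper's code.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Tool:
    name: str
    description: str


# Hypothetical tool definitions. The paper uses 23 tools; two are sketched here.
TOOLS = [
    Tool("Segment Image", "segments the objects in an image; input is an image path"),
    Tool("Predict Depth", "estimates a depth map for an image; input is an image path"),
]


def build_teacher_prompt(caption: str, tools: List[Tool]) -> str:
    """Combine tool definitions and an image caption into one prompt for the teacher."""
    tool_lines = "\n".join(f"- {t.name}: {t.description}" for t in tools)
    return (
        "Available tools:\n"
        f"{tool_lines}\n\n"
        f"Image content: {caption}\n\n"
        "Write an instruction a user might give about this image, "
        "and the tool call that would satisfy it."
    )


def make_negative_sample(instruction: str, reply: str) -> Dict:
    """A sample where the right behavior is to answer directly, without any tool."""
    return {"instruction": instruction, "use_tool": False, "tool": None, "response": reply}


def make_context_sample(history: List[Dict], current: Dict) -> Dict:
    """Attach earlier turns so the sample shows a tool call inside a conversation."""
    return {**current, "history": list(history)}


prompt = build_teacher_prompt("a dog running on a beach", TOOLS)
negative = make_negative_sample("Hi, how are you?", "I'm doing well, thanks!")
positive = {"instruction": "Segment the dog", "use_tool": True,
            "tool": "Segment Image", "response": "image/dog.png"}
with_context = make_context_sample([negative], positive)
```

The negative sample teaches the model that some turns need no tool at all, while the context sample places a tool call after earlier dialogue turns.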

This approach allows repurposing the knowledge of advanced LLMs to rapidly impart visual grounding abilities to other models without extensive training resources.
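
The Low-Rank Adaptation step mentioned above can be illustrated with a small, dependency-free sketch. It is not the authors' training code. It only shows the core idea on a single weight matrix: the original weights stay frozen, and a low-rank product of two small matrices is added on top. The class name, the `alpha` scaling parameter, and the initialization values follow the common LoRA formulation and are assumptions here.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


class LoRALinear:
    """A frozen weight W (d_out x d_in) plus a trainable low-rank update B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen: never updated during fine-tuning
        # A (rank x d_in) starts with small random values.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        # B (d_out x rank) starts at zero, so the update is zero before training.
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def effective_weight(self):
        """Return W + scale * (B @ A)."""
        delta = matmul(self.B, self.A)
        return [[w + self.scale * d for w, d in zip(w_row, d_row)]
                for w_row, d_row in zip(self.weight, delta)]

    def forward(self, x):
        """Apply the adapted layer to a vector x of length d_in."""
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.effective_weight()]

    def trainable_params(self):
        """Count only the entries of A and B, the parts that would be trained."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# An 8x8 identity matrix stands in for one frozen layer of the base model.
W = [[1.0 if i == j else 0.0 for j in range(8)] for i in range(8)]
layer = LoRALinear(W, rank=2, alpha=4.0)
x = [float(i) for i in range(8)]
output_before_training = layer.forward(x)
full_params = len(W) * len(W[0])
lora_params = layer.trainable_params()
```

Even in this toy case the adapter trains half as many numbers as the full matrix. The saving grows quickly with layer size, because the adapter scales with `rank * (d_in + d_out)` rather than `d_in * d_out`.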

Key Results

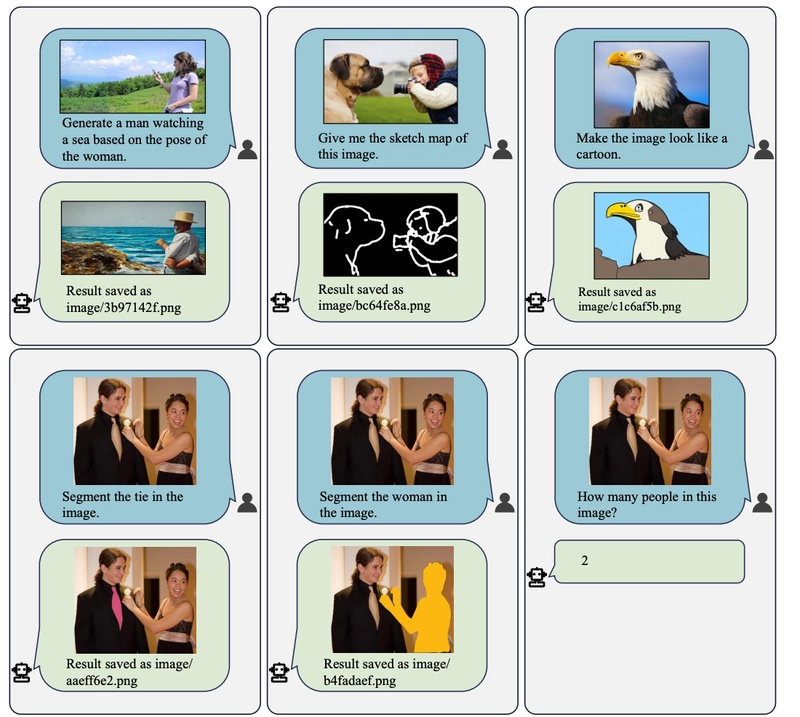

From the paper: Cases of invoking tools from Vicuna-13B fine-tuned on GPT4Tools.

The experiments validate that GPT4Tools can successfully teach existing LLMs to handle visual tasks in a zero-shot manner:

After fine-tuning, the 13B-parameter Vicuna matched or exceeded the 175B-parameter GPT-3.5 on seen tools, with a reported 9.3% improvement in success rate. This suggests that knowledge transferred from a teacher model can compensate for a much smaller model size.

Augmenting the instructional data with negative and context samples was crucial for high success rates. Without them, models easily overfit to basic tool usage.

Fine-tuned Vicuna also generalized well to unseen tools, achieving a 90.6% success rate compared to 91.5% for GPT-3.5. This demonstrates an ability to invoke tools it was never trained on.

The proposed metrics offered a robust assessment on different facets of tool usage - deciding when to use tools, choosing the correct tool, and providing proper arguments.
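
As a rough sketch of how the three facets above could be scored, the snippet below computes one success rate per facet plus an overall rate that requires all three to be right. The field names, the result keys, and the exact-match comparison of arguments are simplifying assumptions made for illustration. The paper's own metric definitions may differ, especially in how arguments are checked.

```python
from typing import Dict, List


def evaluate_tool_usage(predictions: List[Dict], references: List[Dict]) -> Dict[str, float]:
    """Score tool-usage predictions against references.

    Each item is a dict with the (hypothetical) keys:
      'use_tool': bool, 'tool': str or None, 'args': str or None
    """
    if not references or len(predictions) != len(references):
        raise ValueError("predictions and references must be non-empty and equal in length")

    decision = tool = args = overall = 0
    for pred, ref in zip(predictions, references):
        decision_ok = pred["use_tool"] == ref["use_tool"]  # was the tool/no-tool call right?
        tool_ok = pred["tool"] == ref["tool"]              # was the right tool chosen?
        args_ok = pred["args"] == ref["args"]              # exact match, a simplification
        decision += decision_ok
        tool += tool_ok
        args += args_ok
        overall += decision_ok and tool_ok and args_ok

    n = len(references)
    return {
        "decision": decision / n,
        "tool": tool / n,
        "args": args / n,
        "overall": overall / n,
    }


references = [
    {"use_tool": True, "tool": "Segment Image", "args": "image/a.png"},
    {"use_tool": True, "tool": "Predict Depth", "args": "image/b.png"},
    {"use_tool": False, "tool": None, "args": None},
    {"use_tool": True, "tool": "Segment Image", "args": "image/c.png"},
]
predictions = [
    {"use_tool": True, "tool": "Segment Image", "args": "image/a.png"},  # fully correct
    {"use_tool": True, "tool": "Segment Image", "args": "image/b.png"},  # wrong tool
    {"use_tool": False, "tool": None, "args": None},                     # correct refusal
    {"use_tool": False, "tool": None, "args": None},                     # missed a tool call
]
scores = evaluate_tool_usage(predictions, references)
```

Reporting the facets separately shows where a model fails: a model can decide correctly that a tool is needed and still pick the wrong one, which an overall score alone would hide.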

Some limitations are that the success rate is not yet 100%, and verbose prompting for tools reduces efficiency. But overall, these results are very promising.

Implications

The GPT4Tools method offers a promising direction for imbuing existing LLMs with visual capabilities without the expensive training of massive models on inaccessible proprietary data.

Some key implications are:

With this approach, even smaller 13B parameter models can gain impressive zero-shot performance on visual tasks by transferring knowledge from teacher LLMs like ChatGPT. This greatly reduces computing requirements.

Data efficiency is improved by repurposing the knowledge learned by advanced LLMs instead of training from scratch. Generated instructions provide a natural way to align vision and language.

The techniques open up many new possibilities for multimodal research and applications with available LLMs.

This can also inspire new human-AI collaboration methods, with humans providing visual context and instructions for models to follow.

Systematic evaluation methodologies for tool usage are important to rigorously benchmark progress, as done in this work.

Of course, there are still challenges to address. The success rate needs to improve, prompting efficiency should be enhanced, and advances must translate to benefits for real users. But overall, enabling LLMs to dynamically invoke tools and skills could be a crucial milestone towards more general artificial intelligence.

Conclusion

The GPT4Tools approach demonstrates that self-instruction from advanced teacher LLMs provides an efficient way to impart visual capabilities to existing language models without extensive proprietary resources. The techniques could open up new possibilities at the intersection of vision and language AI. Through instructional learning and tool invocation, language models may learn to dynamically acquire skills as needed for multimodal reasoning and generation.
