TL;DR The post below outlines a few of the key search algorithms in AI, why they are important, what and what they are used for.

While in recent years, search and planning algorithms have taken a back seat to machine and deep learning methods, better understanding these algorithms can boost the performance of your models. Additionally as more powerful computational technologies such as quantum computing emerge it is very likely that search based AI will make a comeback .

What is a Search Algorithm in AI?

Before we get started lets define what search in AI means.

Search in AI is the process of navigating from a starting state to a goal state by transitioning through intermediate states.

Almost any AI problem can be defined in these terms.

- State — A potential outcome of a problem

- Transition — The act of moving between states.

- Starting State — Where to start searching from.

- Intermediate State - The states between the starting state and the goal state that we need to transition to.

- Goal State — The state to stop searching.

- Search Space — A collection of states.

Uninformed Search

Uninformed search is used when there is no information about the cost of navigating between states.

There are three main classical algorithms for uninformed search:

- DFS — Traverse the search space using a LIFO Stack to determine the next node. Advantages : Good for deep graphs, memory efficient. Disadvantages: Can get stuck in loops.

- IDFS — Traverse the search space, using a LIFO Stack, to determine the next node when it reaches a certain depth then it clears the stack, increments the depth limit and starts the search again.

Iterative deepening depth-first search - Wikipedia

- BFS- Traverse the search space using a queue FIFO to determine the next node.

Breadth-first search - Wikipedia

Informed Search

An informed search is used when we know the cost or have a solid estimate of the cost between states.

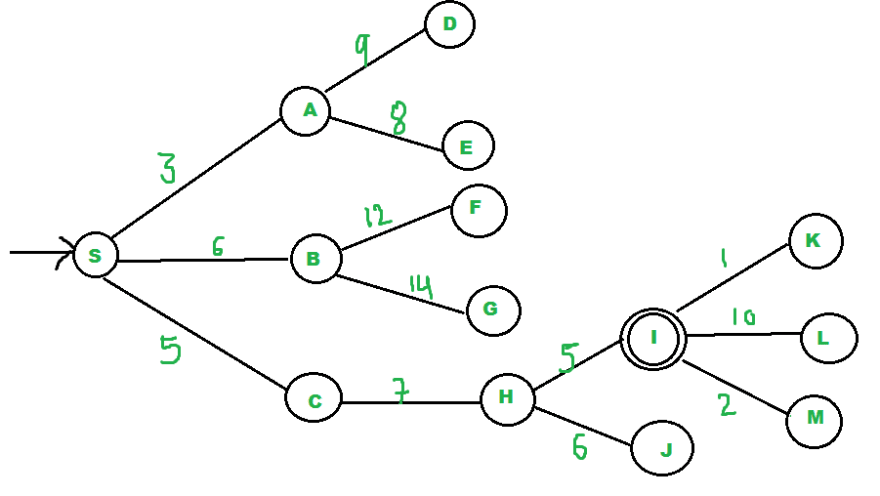

UCF- Traverse the search space with a priority queue and a score. The score for a given state is calculated by the cost of getting to the state from the parent along the path it was discovered.

Artificial Intelligence - Uniform Cost Search(UCS)

A* — Traverse the search space with a priority queue and a score. The score of a state is calculated by the cost of getting to the state from the parent along the path it was discovered, combined with a heuristic value for the given state.

The admissible value of heuristic must abide by the two following properties.

- A state’s heuristic value must be less than the lowest cost path of getting from a state to the goal node.

- The heuristic value must be less than the value of the cost between the path to the state and the heuristic value of each parent node.

A* search algorithm - Wikipedia

IDA* — An IDFS version of A*

Iterative deepening A* - Wikipedia

Local Search

We use local search algorithms when there is more than one possible goal state but some outcomes are better than others and we need to discover the best. Used a lot in optimization of machine learning algorithms.

Hill Climbing- A greedy search method that detriments the next state based on the value thats the smallest until it hits a local maximum.

Simulated annealing- Starts with a hill climb until it hits a local maximum then uses a temperature function to decide whether to stop or continue to a worse state with the hopes of finding a better

Simulated annealing - Wikipedia

GSAT — An implementation of Hill Climbing for the CNF domain. Chooses a random set of boolean values for each possible parameter, if the values match all the preconditions goal state return else we flip the values to satisfy the largest amount of possible preconditions of the goal state and then repeat this process with a new random set of values for each of the boolean values we flipped on the last iteration.

Genetic Search - Generate initial population of states, prune those under a threshold that have the lowest values using a fitness function. Randomly combine the survivors, then mutate a couple of bits and evaluate fitness and repeat.

Beam Search - Preform uniform cost search with the top n values likelyhood values of the current and previous outputs of a model.

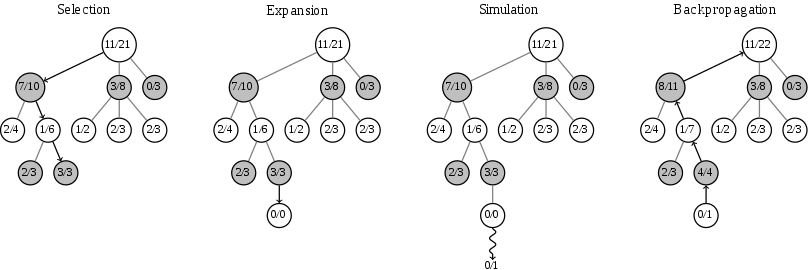

Monte Carlo Search — A randomized search algorithm that will return the best estimate of the correct search result when terminated. Monte Carlo algorithms are always fast, but only probably correct.

Monte Carlo tree search - Wikipedia

Las Vegas Search Is a randomized search algorithm unlike Monte Carlo it will only return when the correct result is found. Las Vegas algorithms are always correct, but only probably fast.

_// Las Vegas algorithm_

2 repeat:

3 k = RandInt(n)

4 **if** A[k] == 1,

5 **return** k;

Las Vegas algorithm - Wikipedia

Atlantic City Search — Is a bounded probabilistic polynomial time search algorithm that combines the strengths and weaknesses of the Las Vegas and Monte Carlos Search algorithms.

Atlantic City algorithm - Wikipedia

Next Steps

If you enjoyed this article, keep your eyes out for new content shortly and follow me here on medium or twitter @pythiccoder. To get started experimenting with these algorithms check out Azure Notebooks as well as the graph data base features of CosmosDB on Azure.

If you don’t have one yet you can pick up a free Azure Subscription below.

Create your Azure free account today | Microsoft Azure

About the Author

Aaron (Ari) Bornstein is an avid AI enthusiast with a passion for history, engaging with new technologies and computational medicine. As an Open Source Engineer at Microsoft’s Cloud Developer Advocacy team, he collaborates with Israeli Hi-Tech Community, to solve real world problems with game changing technologies that are then documented, open sourced, and shared with the rest of the world.

Top comments (1)

Thank you.