In my previous post I explained how to build your own data pipeline from scratch. I mentioned that in order to use my GitHub repository you need to have Python 3 and pip installed.

After building the pipeline, I wanted to run my software on a brand new server, where I hadn't set up Python or the tools and libraries my program needs, like pip, pandas and so on.

I was wondering how I could make sure my program would work exactly the same way on the server, or on some other developer's laptop, without having to worry about which version of Python they have. This is where Docker containers are very useful, and I thought it would be a great opportunity to combine Docker with my project.

There are some amazing tutorials on Docker that are great for beginners, like this video crash course, and this article that focuses on Docker in Data Science. Since I still have a lot to learn myself, my post is not going to be a complete Docker guide, and I recommend that you read more about it and get comfortable with the concept.

Let's cover the basics briefly:

- Image = a blueprint of our application. Imagine you could take a snapshot of your program and everything around it right after clicking the "run" button. The image is built from a Dockerfile, which lists all the commands needed to run your program and to install the necessary libraries and dependencies. Inside the image the application has everything it needs in order to work properly (operating system, application code, system tools, etc.).

- Container = an instance of our image. As explained on the Docker website:

"Container images become containers at runtime...[and they] isolate software from its environment..."

This post will teach you:

- How to write a Dockerfile that copies everything needed to run your Python project.

- How to create an image from that Dockerfile.

- How to run this image in a Docker container.

Let's get started:

All the instructions follow on from my previous post about the weather data pipeline that I created.

Now the only thing you have to install is Docker Desktop on your computer.

Writing the Dockerfile:

The Dockerfile is the recipe of commands that build our image.

I created a file called "Dockerfile", without any extension:

- Line 1: Base image. This preconfigured Docker image has Python and pip pre-installed and configured.

FROM python:3

- Line 3: Set the working directory in the container:

WORKDIR /usr/src/app

- Line 5: Copy your project's requirements.txt, which lists the dependencies, into the image. Keep in mind that Docker caches each instruction of a Dockerfile as a separate layer. Docker knows when the input to any given layer has changed, so when your application code changes but your requirements do not, Docker reuses the cached layers and doesn't waste time re-installing everything in requirements.txt every time you build the image.

COPY requirements.txt ./

- Line 6: Install the dependencies while keeping the Docker image small. The --no-cache-dir flag disables pip's cache, so the downloaded package files are not stored in the image.

RUN pip install --no-cache-dir -r requirements.txt

- Line 8: Copy the project's folder into an image layer. My Python files are stored locally in a folder called src.

COPY src/ .

- Line 9: Make a new directory called data_cache. This is where all the current weather data will be stored after retrieving it.

RUN mkdir data_cache

- Line 11: Run main.py by default when the container is started. CMD sets the default command that Docker executes when a container starts.

CMD [ "python", "main.py" ]

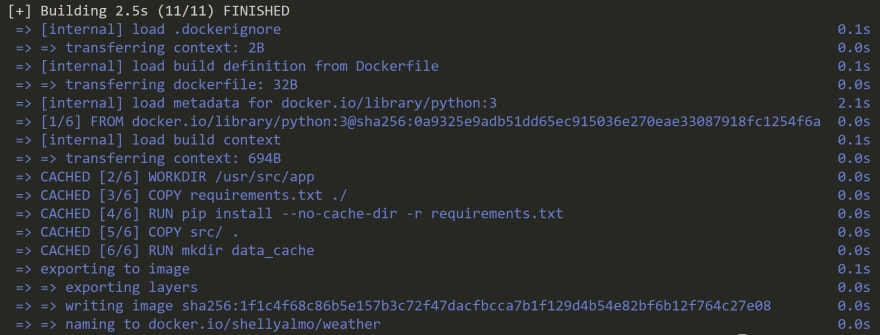
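To make the layer-caching point from Line 5 more concrete, here is a toy Python model. It is not how Docker is actually implemented; it only assumes that each build step gets a cache key derived from the previous step's key, the instruction text, and (for COPY) the content of the copied files. The file contents and the helper names `step_key` and `build` are made up for illustration.

```python
import hashlib


def step_key(parent_key: str, instruction: str, inputs: bytes = b"") -> str:
    """Toy cache key: hash of the parent key, the instruction and its inputs."""
    digest = hashlib.sha256()
    digest.update(parent_key.encode())
    digest.update(instruction.encode())
    digest.update(inputs)
    return digest.hexdigest()


def build(files: dict, cache: set) -> list:
    """Walk the Dockerfile steps and report which ones hit the cache."""
    steps = [
        ("FROM python:3", b""),
        ("WORKDIR /usr/src/app", b""),
        ("COPY requirements.txt ./", files["requirements.txt"]),
        ("RUN pip install --no-cache-dir -r requirements.txt", b""),
        ("COPY src/ .", files["src/main.py"]),
        ("RUN mkdir data_cache", b""),
    ]
    report = []
    key = ""
    for instruction, inputs in steps:
        # A step's key depends on every step before it, so one change
        # invalidates that step and all the steps that follow.
        key = step_key(key, instruction, inputs)
        report.append("cached" if key in cache else "rebuilt")
        cache.add(key)
    return report


cache = set()
# Hypothetical file contents, for illustration only.
files = {"requirements.txt": b"pandas\n", "src/main.py": b"print('v1')\n"}
first = build(files, cache)  # empty cache: every step is built

files["src/main.py"] = b"print('v2')\n"  # only the application code changes
second = build(files, cache)

print(first)
print(second)
```

In this model the second build reuses the steps up to and including the pip install, and only rebuilds from `COPY src/ .` onward, which is why requirements.txt is copied and installed before the application code.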

Building the image from the Dockerfile:

Run this to build the Docker image:

$ docker build . -t <yourDockerID>/weather

Name your local image using your Docker Hub username. In this case, weather is the name of the image.
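As a rough illustration of how that name is put together, the sketch below splits an image name into its Docker ID, image name and tag. It is a simplified assumption of the naming scheme (it ignores registry hosts and ports), `split_image_name` is a hypothetical helper, and `exampleuser` is a placeholder Docker ID. When no tag is given, Docker uses `latest`.

```python
def split_image_name(name: str) -> tuple:
    """Split '<dockerID>/<image>[:<tag>]' into (docker_id, image, tag)."""
    repository, _, tag = name.partition(":")
    docker_id, _, image = repository.rpartition("/")
    # Docker falls back to the "latest" tag when none is specified.
    return docker_id, image, tag or "latest"


# "exampleuser" is a placeholder, not a real Docker ID.
print(split_image_name("exampleuser/weather"))
print(split_image_name("exampleuser/weather:1.0"))
```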

Let's look at the output:

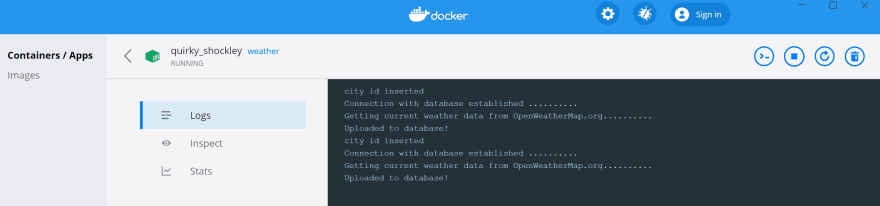

Running the image on a Docker container:

The following command will run your image. As I mentioned before, the API key you get from OpenWeatherMap has to remain private, and that's why I showed previously how to make it an environment variable.

Now, instead of using the .env file to set the environment variable, we can use Docker's --env flag.

$ docker run --env api-token=<yourapifromOpenWeatherMap> -it <yourDockerID>/weather
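Inside the container, the Python code can then read that value from the process environment. The following is a minimal sketch of how this might look, not necessarily what my main.py does: the variable name `api-token` is taken from the command above, while `read_api_token` is a hypothetical helper.

```python
import os

ENV_NAME = "api-token"  # same name as in the docker run --env flag


def read_api_token(environ=os.environ) -> str:
    """Return the API key from the environment, or fail with a clear message."""
    token = environ.get(ENV_NAME, "").strip()
    if not token:
        raise RuntimeError(
            f"Environment variable {ENV_NAME!r} is not set; "
            f"pass it with: docker run --env {ENV_NAME}=<key> ..."
        )
    return token


# Demo with a fake environment, so the sketch runs without a real key.
print(read_api_token({ENV_NAME: "demo-key"}))
```

Failing early with a readable error makes it obvious when the container was started without the --env flag.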

We can see our weather image on the Docker Desktop:

Another way to see our image is to run the following on the command line:

$ docker images

And we can see our container on Docker Desktop:

Now you can proudly say that you have built your own image of your Python application. I uploaded mine to Docker Hub, so you can just download it and use it for retrieving weather data from the web API.

Top comments (5)

Docker is an amazing product, it solves a lot of issues, and has so many "go to production" options. No more someone logged into the server and started running adhoc analysis then went on vacation with full memory. No more someone running some adhoc analysis installing the wrong versions of dependencies that your project depends on.

Nice article, but moving away from Python will help you as well, a lot more than you think. Have you considered the Go language? Its deployment model doesn't need anything Docker-like.

No need to hate on any language. @shellyalmo is super productive in Python, she is probably on a team using Python, she is in an industry that lives on Python. The effort that it would take to move her, her team, and the entire industry over to Go would significantly detract from getting real work done.

I have to say Go and Rust both have super cool deployment though. I do get a bit jealous when I am pushing up a 4GB Docker image, then go to use a Go/Rust CLI app and it's only 200kb and everything is wrapped up in a nice binary.

Thanks for the nice words! I really appreciate it. I'm actually looking for my first job as a developer :)

Well explained, thanks for sharing with the community of Python devs :)