This is the second post in the TensorFlow 101 series, so I assume you have
already installed conda, TensorFlow and Jupyter (preferably JupyterLab).

First Jupyter Notebook

Let's create our first Jupyter notebook. To do that we need to start the Jupyter
server. There are two commands for this, depending on whether you want the
JupyterLab interface or the classic one.

If you want to use JupyterLab, just run:

jupyter lab

and without it:

jupyter notebook

If you're using PyCharm or VS Code you don't have to launch it manually from
the terminal. You can run it inside the IDE/editor.

Protip: if you're using PyCharm, the Scientific view is very useful. It
displays the IPython REPL, all your variables, documentation and your notebook
at the same time.

Once Jupyter is running we can create a new notebook. In the classic Jupyter
interface, use the New menu on the right.

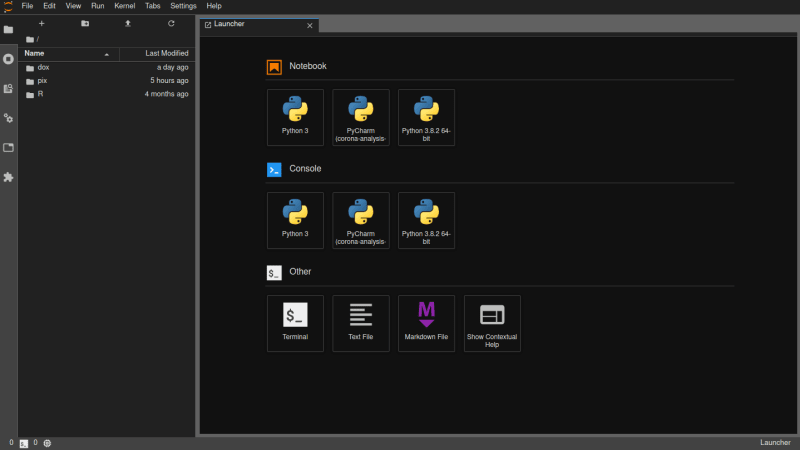

In JupyterLab it should be visible in the Launcher, in the Notebook category.
Otherwise you can find it in the menu bar under File > New > Notebook.

In PyCharm you can create it by right-clicking in the file list, through the
File menu, or with the Alt+Insert shortcut.

What can we do in here?

Basically any valid Python code should run just fine. Jupyter notebooks are more
convenient than regular scripts for experimenting, because you can run your code
piece by piece, one block (cell) at a time. They also let you write comment
blocks in Markdown.

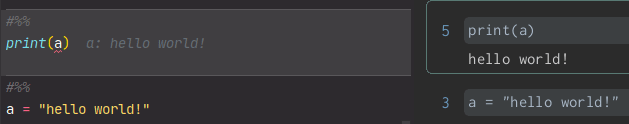

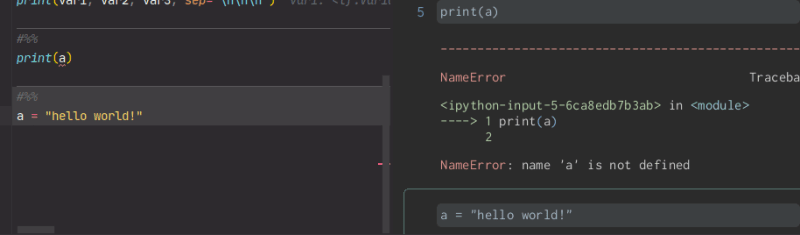

As you can see in the picture, this code works perfectly fine if you run
block [3] before block [5], but there's a catch. If you run the whole notebook
at once, from top to bottom, it'll throw an error because a is defined AFTER
print(a).
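The catch can be reproduced outside a notebook too. The sketch below is only an assumption about what the cells in the picture contain (the value 5 and the helper `run_in_order` are made up for illustration). It runs the two statements in a fresh namespace, first top to bottom and then in the order they were run by hand.

```python
# Hypothetical cell contents; the value 5 is made up for illustration.
cells_top_to_bottom = [
    "print(a)",  # appears first in the notebook, but uses `a`
    "a = 5",     # appears second, but defines `a`
]


def run_in_order(cells):
    """Run cells one after another in a fresh namespace, like "Run All"."""
    namespace = {}
    for source in cells:
        try:
            exec(source, namespace)
        except NameError as err:
            return f"NameError: {err}"
    return "ok"


# Top to bottom: print(a) runs before `a` exists, so it fails.
result_run_all = run_in_order(cells_top_to_bottom)
print(result_run_all)

# Definition first, as in the manual run from the picture: this works.
result_manual = run_in_order(reversed(cells_top_to_bottom))
print(result_manual)
```

A simple habit that avoids this is to restart the kernel and run all cells from time to time, so the notebook is checked in the order it is written.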

TensorFlow basics

First we have to import TensorFlow. It's usually aliased as tf.

import tensorflow as tf

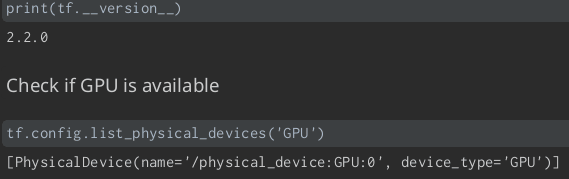

Now we can check the installed version and whether a GPU is available. Of
course, if you installed the CPU-only version you won't be able to access the
GPU, and the second command will print an empty list.

print(tf.__version__)

print(tf.config.list_physical_devices("GPU"))

I've done that in separate blocks, so for me it looks like this.

Now we can create our first tensors (but not flow!)

Creating tensors

We can create a tensor of ones with:

tf.ones([2,3,4])

Its argument is a list of dimensions (the shape). In this example the tensor has
shape 2x3x4. It's very similar to nested lists in Python. If we treat the tensor
(for now) as a list, it contains 2 lists, each holding 3 sub-lists of 4 elements.
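To make the nested-list picture concrete, here is a minimal sketch that builds the same tensor and converts it to plain Python lists. The variable names are only for illustration.

```python
import tensorflow as tf

# A 2x3x4 tensor filled with ones
t = tf.ones([2, 3, 4])
print(t.shape)

# Convert to nested Python lists to compare the structure
nested = t.numpy().tolist()
outer = len(nested)         # number of lists at the top level
middle = len(nested[0])     # sub-lists inside each of them
inner = len(nested[0][0])   # elements inside each sub-list
print(outer, middle, inner)
```

The three lengths match the three numbers passed to tf.ones(), in the same order.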

By default TF uses the float32 type. Yes, it's important to understand how
types work. Every tensor has a single data type, for example float16 (half
precision), float32 (single precision), float64 (double precision), integers
of various sizes, strings, complex numbers, and many others. One of them is a
bit special: bfloat16. It's a bit more tricky, because it's not an IEEE 754
format. It has an 8-bit exponent and a 7-bit fraction. Half precision floats
have a 5-bit exponent and a 10-bit fraction, so the use case is a bit
different: bfloat16 keeps the same exponent width as float32 at the cost of
precision, while float16 is more precise but covers a much smaller range.
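The difference between the two 16-bit types is easier to see on actual numbers. The sketch below is a small experiment, not an official recipe: it casts one value that needs precision (1.001) and one that needs range (70000.0) to both types, then casts them back to float32 for printing. The sample values are chosen only for illustration.

```python
import tensorflow as tf

# tf.ones() without an explicit dtype gives float32
default = tf.ones([2, 3])
print(default.dtype)

# One value that needs precision, one that needs range
x = tf.constant([1.001, 70000.0], dtype=tf.float32)
as_half = tf.cast(x, tf.float16)
as_bf16 = tf.cast(x, tf.bfloat16)

# Cast back to float32 so both results print in the same format
half_values = tf.cast(as_half, tf.float32).numpy()
bf16_values = tf.cast(as_bf16, tf.float32).numpy()

# float16: 1.001 stays close, 70000 overflows to inf (max is 65504)
print(half_values)
# bfloat16: 1.001 rounds to 1.0, 70000 stays finite but is rounded coarsely
print(bf16_values)
```

With only 7 fraction bits, bfloat16 cannot tell 1.001 from 1.0, but its 8-bit exponent handles 70000 without overflowing. float16 behaves the other way around.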

There are more functions like tf.ones(), but the most important one is
tf.constant(). With it we can create a tensor from any fixed values we provide.
There's also tf.Variable(), whose values, as the name suggests, can be changed
after creation.
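A minimal sketch of both, with made-up values. It assumes eager execution, which is the default in TensorFlow 2.

```python
import tensorflow as tf

# A constant tensor built from a nested Python list
c = tf.constant([[1, 2], [3, 4]])
print(c.dtype, c.shape)

# A variable holds a tensor whose values can be updated in place
v = tf.Variable([1.0, 2.0])
v.assign([3.0, 4.0])       # overwrite the values
v.assign_add([1.0, 1.0])   # add to the current values
print(v.numpy())
```

Python integers become int32 and Python floats become float32 unless you pass dtype explicitly. A tensor created with tf.constant() cannot be updated in place; operations on it return new tensors.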

That's all for now, folks. If you've got any questions or something is unclear,
feel free to ask. I'll do my best to explain everything.

Stay tuned!

PS. You can also subscribe to my newsletter
