Hi All, this is my first post and it is designed to create a talking point. I figured that is as good an intro as any. These are some very hard metrics to measure.

In this post I want to slowly lead you to the tangible cost of having a web presence, and to what we can all do to improve the world wide web. The benefits of the web are undeniable and there is no going back, but we can reduce our carbon footprint, provide better services and be better devs.

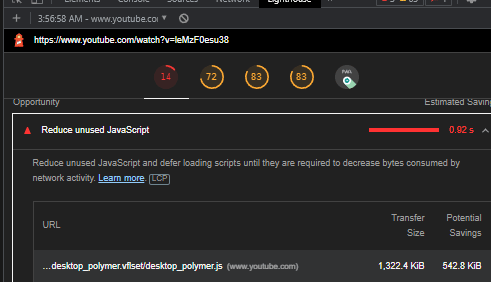

I will start with YouTube - it is obviously a very heavy website, used by billions of people every day. Let's take a deep dive with a Lighthouse test.

My desktop testing shows that they could save around a megabyte on every single page's first interaction. Please take a look for yourself; this image shows just a few of the many scripts and styles they could minify. They make the rookie error of not purging all their unused styles and scripts.

JavaScript injection, even when used for good, is a dangerous toy which feels very much like a resurrection of Flash - "blazingly fast" but not designed to make sites or be crawled by search engine bots. That doesn't stop bootcamps programming people with the how but not the why of React.

Here is where we hit the first bottleneck. People on 3G networks are really where the tangible cost of our gluttony comes in and, worse, programmers often ignore accessibility.

We have so many frameworks for making apps, but a good website is lightweight and uses as much HTML and CSS as possible. Ideally no scripting is required. Client side rendering seems almost laughable: we use a heavy scripting language to say "build this site each time you visit it" just so our virtual/heavily modified DOM can work. Client side hydration can be, and has been, improved on.

As you get more advanced you learn about packaging and caching. Now this is where things start to get dangerous - Gulp is no longer a task manager looking over our shoulders, purging and minifying, then spitting out prebuilt sites ready for FTP upload at a massive reduction in size.

Webpack blew it out of the water. Webpack is undeniably a friendly tool, but it can be hard to use, and again it is a question of scale whether you actually need packaging or not. Then there is Vite et al. That is another post, please let me know if this interests you.

Sizing the internet.

I am an SEO, and I like to say that means balancing the payoff between accessibility and performance. With the right hardware, severely disabled people can access sites (if the sites grant them access). SEO is not about linkspam, it is about delivering the best content you can, as quickly as you can, to as many people as you can.

I decided to roughly calculate the size of the web. The average desktop page is 2 MB, with many sites significantly higher than that. "There are around two billion websites [in 2022]".

That would make roughly 4bn megabytes (about 4 petabytes) sat on active servers and in the cloud, and that is counting only one average page per site. It gets worse.
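As a rough check on that arithmetic, here is a minimal Python sketch. It uses only the two figures quoted in the post (two billion websites, 2 MB per average page) and assumes, purely for illustration, one average page per site; `web_size_mb` and `pages_per_site` are names made up for this example.

```python
# Back-of-envelope estimate; both inputs are the post's figures, not measurements.
WEBSITES = 2_000_000_000   # "around two billion websites"
AVG_PAGE_MB = 2            # average desktop page weight, in megabytes


def web_size_mb(sites: int, page_mb: float, pages_per_site: int = 1) -> float:
    """Total megabytes if every site held `pages_per_site` average pages."""
    return sites * page_mb * pages_per_site


total_mb = web_size_mb(WEBSITES, AVG_PAGE_MB)
total_pb = total_mb / 1_000_000_000  # 1 PB = 10^9 MB in decimal units

print(f"{total_mb:,.0f} MB = {total_pb:g} PB")  # prints "4,000,000,000 MB = 4 PB"
```

Raising `pages_per_site` to something more realistic scales the total linearly, which is why this number is best read as a floor rather than an estimate.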

- 4.4bn YouTube videos are watched daily.

- circa 3bn searches are made on Google per day - people watch more videos than they make searches.

- 100bn+ emails are sent per day - think spam. [https://www.domo.com/learn/infographic/data-never-sleeps-5]

For me the worst culprit is that "32 billion people are active on Facebook daily" - that's more than four times the actual population of the world, undeniably something is wrong.

Want the real kicker? These stats are from 2017.

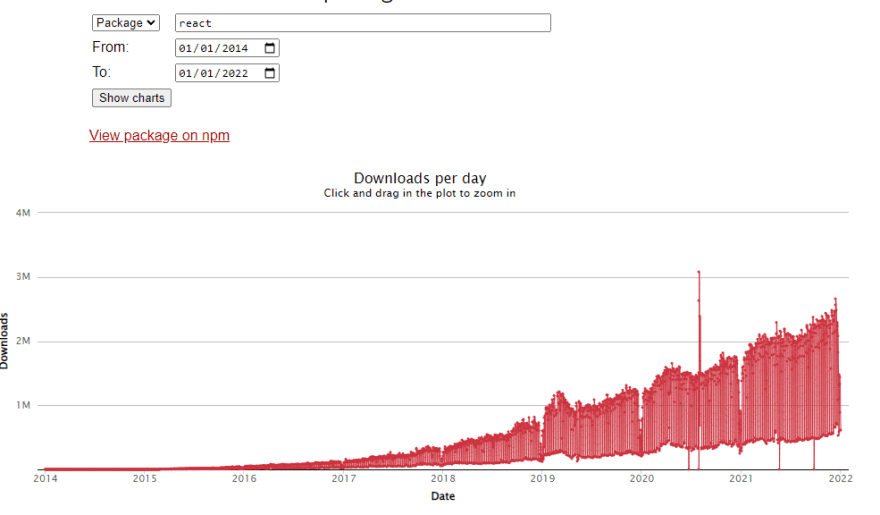

Facebook maintains React. It makes sense for a business with traffic like that to create reactive content. I feel it was a bit too successful. We also have Node vs Deno - another argument where we are starting to see the stress that the success of modern JS is causing. The faker facade was a good example of an inherent problem with Node, yet the first step people often take is still npm init -y.

Exponential growth of popular package downloads is an inherent problem with Node - these files, and all their requirements, are included in your final build. This is such an obvious statement it seems laughable to even mention, but in 2014 I can assure you we didn't expect such an explosion of packages and requirements.
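To make "all their requirements" concrete, here is a small Python sketch that walks a dependency graph and counts everything reachable from a project. The package names and the `DEPS` graph are entirely hypothetical, and this is not how npm resolves versions; it only illustrates how a short list of direct dependencies fans out.

```python
from collections import deque

# Hypothetical dependency graph: package -> its direct dependencies.
DEPS = {
    "my-site": ["ui-lib", "date-utils"],
    "ui-lib": ["dom-helpers", "string-pad"],
    "date-utils": ["string-pad"],
    "dom-helpers": [],
    "string-pad": [],
}


def transitive_deps(root, graph):
    """Return every package reachable from `root`, excluding `root` itself."""
    seen = set()
    queue = deque(graph.get(root, []))
    while queue:
        pkg = queue.popleft()
        if pkg in seen:
            continue  # shared dependencies are only counted once
        seen.add(pkg)
        queue.extend(graph.get(pkg, []))
    return seen


direct = DEPS["my-site"]
everything = transitive_deps("my-site", DEPS)
print(len(direct), "direct,", len(everything), "pulled in overall")
```

Even in this toy graph two direct dependencies become four packages. How much of that reaches the shipped bundle depends on your bundler and on tree shaking, so treat the count as what gets pulled in, not what gets served.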

Always consider - are you making an app or are you making a site? What does that mean for users, and what does that mean to peaceful web crawlers?

Frameworks and libraries have started to be written for the web dev rather than the end user. There is a war of frameworks and libraries going on, which means convenience of use is paramount. React is winning because it has the largest user base, not because it is the fastest, the lightest, or the best by any other metric.

What form of rendering do you use? How many times do requests ping back and forth from the server before your site loads? There are 4.2bn sites live today and double that amount of active servers.

The real cost of doing business

[In 2017 the Guardian predicted that the internet would account for 20% of the world's electricity in 2025.]

The real conclusion is: how long is a piece of string? What is the internet? Do we count all our devices? How do we measure, and does it matter?

What is paramount is your visitors with their 3G phones. Time to interaction is the secret to keeping visitors happy. If each page you have is 2 MB, the browser is left chugging away on that payload, and it costs your user more than just a poor experience. You are slowly polluting the world and costing them money in the form of data.
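To put a number on that 2 MB page, here is a minimal Python sketch of the raw transfer time. The 1.6 Mbit/s downlink is an assumed figure for a reasonable 3G connection, not a measurement, and `transfer_seconds` is a name invented for this example. Latency, handshakes, parsing and rendering are all ignored, so real load times will be longer.

```python
def transfer_seconds(page_bytes: int, downlink_bits_per_s: float) -> float:
    """Seconds to move `page_bytes` over the link, ignoring latency and handshakes."""
    return page_bytes * 8 / downlink_bits_per_s


PAGE_BYTES = 2_000_000      # the post's 2 MB page (decimal megabytes)
ASSUMED_3G_BPS = 1_600_000  # assumption: 1.6 Mbit/s downlink

print(f"{transfer_seconds(PAGE_BYTES, ASSUMED_3G_BPS):.1f} s")  # prints "10.0 s"
```

Under those assumptions every megabyte you trim is worth about five seconds to that visitor, before the browser has even started running your scripts.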

So why am I making this post? Obviously there was a point where the internet overtook standard media, and this page costs far less carbon than a piece of paper. dev.to delivers the images I use minified from a central cache, however each visitor I shamelessly push this post on still has a small carbon footprint.

These stats are important to think about, as are accessibility and performance. Get people on your site and give them the best experience possible. Don't get lazy; learn your stack inside out so you know what you can hack away if you are bleeding data.

Please leave some comments on what you feel - obviously the title is clickbait but it is also the truth. If something uses an incalculable amount of energy, all optimisation is good optimisation.

Regards

Dave

Optimise-U

Top comments (2)

Good post. I think these are important ideas for individuals/developers to be thinking about! And for companies who claim energy use & climate impact is important, to instil those intentions in their devs (likewise for devs to work for those companies that share their values).

Tech companies such as Microsoft and Vodafone signed a pact last year to drive green digital solutions, and the UNEP are confident that tech has the "speed and scale" to make a difference... with many companies pledging to be carbon neutral by 2040. I'm not a fan of that time scale personally, but as you say it's hard to cut what you can't measure. I hope they over-shoot and become carbon negative long before then.

Perhaps tech will create the solution to its own energy problem?

Relevant article from UNEP about the pact I mention above, with World Environment Day next month:

unep.org/news-and-stories/story/ne...

I hope you got the "do what you can" message. I am a very cynical person. The internet, much as I hate the how, brings people together. It uses less carbon than a paper system, but we can do better, so we should do better.

Very contentious area. Thanks for your reply.