I've been wondering for a while about how to start this article, but I think I'll just jump into it.

In 2018 I made a Brain-Computer Interface (BCI) game called PsyBreaker and I think that this might be the project that I'm most proud of, so naturally I decided to write about it.

Let's start with the tools I've used:

- Unity - the budding game developer's favorite tool

- Muse Headband 2014 - a headband that people normally use to meditate, together with the Muse App

- MuseLab - software that acted as a data middleman between the headband and Unity

- Terminal of choice - I used iTerm. I like iTerm.

Now, I'll let you in on something: I had no clue how I was gonna pull this off. I initially wanted to just make a simple game, but my tutor said, "Nah, that's boring. I've got this headband, can you do something with that?" And of course I went, "Yeah, ez m8."

It was not.

Muse had very little support (at the time) for connecting the headband to either a PC or a Mac. The only thing Muse offered me was MuseLab, a tool that helps you visualise your brainwaves and send the data on to a set IP address as UDP packets, which, fair enough, helped me quite a lot later on. Still, it wasn't the best start to my project, so I decided to put the whole "How the hell am I gonna connect this headband to my game and actually use it" problem on the backburner and focus on making the game first.

Design and game creation

The game had to be something simple. Something that had, at most, only two inputs. I initially thought of using Pong, but I just couldn't be bothered to create an AI to play against, so I went with another paddle-type classic.

That's right, I'm talking about Atari's Breakout, baby. Everyone knows and has played this game in some form or another. Hell, I even had it on one of my old Nokia phones in the early 2000s. It was perfect: the player just had to move left and right, and that's it.

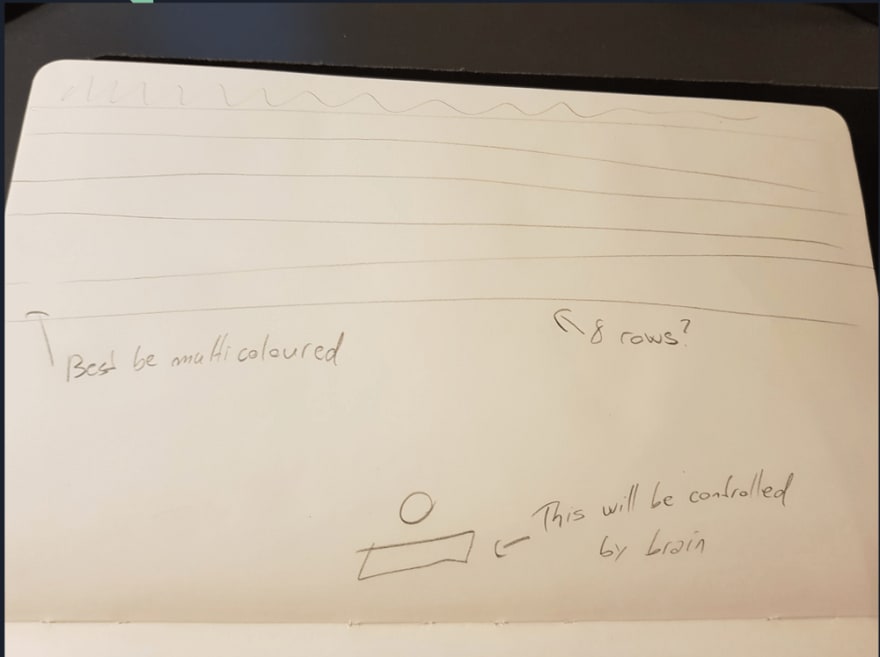

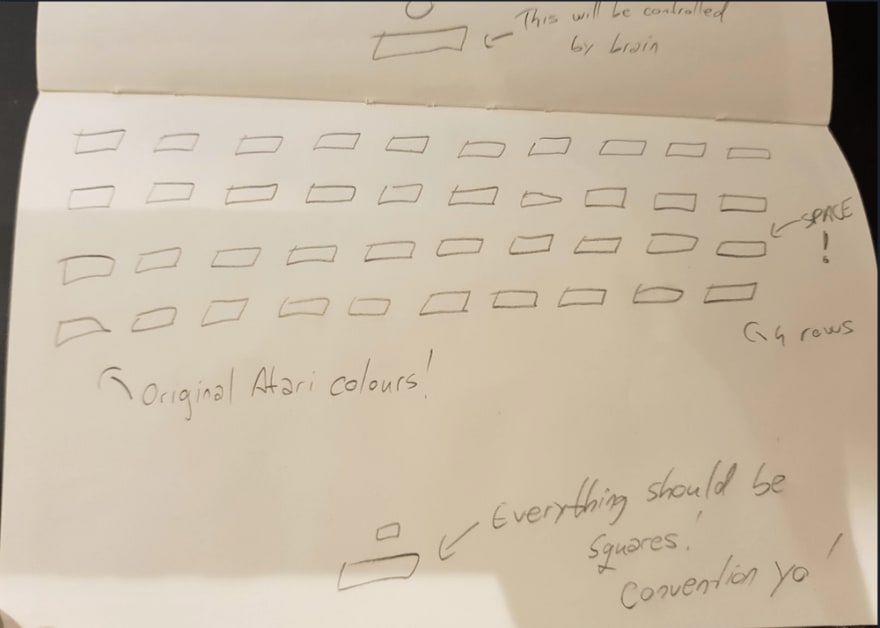

So once I had the game in mind, I moved on to sketching, because umm... one of the requirements of my dissertation was to provide low-fidelity prototypes. Usually I'd just go straight into Unity, but I can see the benefits of sketching your ideas first. In this case, though, it didn't really help me. I mean, look at these:

Yeah. Not my best art, I'll give you that. But redemption strikes, because this is how it actually looked in Unity:

The design is based on the original colours and layout of Atari's Breakout, as a tiny homage to the old-timer and a way of keeping things simple. I did take a more minimalistic approach, though, so the player has some breathing space. Less is more, as some designers say! Afterwards, I implemented the core movement mechanics and tested them with the normal arrow keys, to check that the colliders worked and nothing clipped through. After several tests, I tweaked the speed of both the paddle and the ball until I found a sweet spot that was neither too fast nor too slow. I also kept the speeds as variables, so I could change the values on the fly whenever I needed to.
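The original game was a Unity (C#) project. The snippet below is therefore only a Python illustration of the idea in the paragraph above: a paddle whose speed is a plain, tweakable variable, and whose movement is kept inside the play area. The `Paddle` class, its fields and the wall bounds are hypothetical names, not code from PsyBreaker.

```python
from dataclasses import dataclass


@dataclass
class Paddle:
    """Hypothetical paddle model (illustrative names, not from the original project)."""
    x: float           # centre of the paddle, in world units
    half_width: float  # half of the paddle's width
    speed: float       # units per second; can be changed at any time

    def move(self, direction: int, dt: float, left_wall: float, right_wall: float) -> None:
        """Move by direction (-1 = left, 0 = still, +1 = right) for dt seconds."""
        self.x += direction * self.speed * dt
        # Keep the whole paddle between the walls so it can't clip through them.
        self.x = max(left_wall + self.half_width,
                     min(self.x, right_wall - self.half_width))


paddle = Paddle(x=0.0, half_width=1.0, speed=8.0)
paddle.move(+1, 0.5, left_wall=-5.0, right_wall=5.0)  # x goes from 0.0 to 4.0
paddle.speed = 2.0                                   # tweak the speed on the fly
paddle.move(-1, 1.0, left_wall=-5.0, right_wall=5.0)  # x goes from 4.0 to 2.0
```

Clamping the position is just a simple stand-in here. In Unity, the physics colliders are what stop the paddle and ball from clipping through the walls.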

Now that the easy bit was done, it was time to face the moment I'd been dreading.

Connecting the headband

I actually got a bit lucky here. I went through the Muse docs on their website and on forums, and found out that the headband streams its data as OSC (Open Sound Control) messages, sent over TCP by default.

You know what else people commonly hook up through OSC? A Nintendo Wiimote!

Finally, the gears started to move and I could see the light at the end of the tunnel. A flicker of hope.
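OSC itself is a small binary format. Each message consists of:

- an address path, such as `/muse/concentration`;
- a type-tag string, such as `,i` for "one int32 argument";
- the arguments themselves.

Everything is big-endian, and strings are null-terminated and padded to 4-byte boundaries. The stdlib-only Python sketch below encodes and decodes OSC 1.0 messages with int, float and string arguments, to show what "breaking the data into paths" means. It is an illustration of the format, not the Unity OSC plugin used in the project. It ignores bundles and all other type tags. The function names are hypothetical.

```python
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_message(address: str, *args) -> bytes:
    """Encode an OSC message with int ('i'), float ('f') or string ('s') arguments."""
    tags, payload = ",", b""
    for arg in args:
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)
        elif isinstance(arg, str):
            tags += "s"
            payload += _pad(arg.encode("ascii"))
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return _pad(address.encode("ascii")) + _pad(tags.encode("ascii")) + payload


def _read_string(data: bytes, offset: int) -> tuple[str, int]:
    """Read a padded OSC string at offset; return it and the offset just past its padding."""
    end = data.index(b"\x00", offset)
    next_offset = end + 1
    next_offset += -next_offset % 4  # skip padding up to the next 4-byte boundary
    return data[offset:end].decode("ascii"), next_offset


def decode_message(data: bytes) -> tuple[str, list]:
    """Split a raw OSC message into its address path and a list of arguments."""
    address, offset = _read_string(data, 0)
    tags, offset = _read_string(data, offset)
    if not tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in tags[1:]:
        if tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
            offset += 4
        elif tag == "s":
            text, offset = _read_string(data, offset)
            args.append(text)
        else:
            raise ValueError(f"unsupported type tag {tag!r}")
    return address, args


packet = encode_message("/muse/concentration", 200)
print(decode_message(packet))  # ('/muse/concentration', [200])
```

The `/muse/concentration` address with a value of 200 is just the half-remembered example from later in this post, not a verified Muse path.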

So what I did next might shock you... I typed "how to use unity with wiimote" into Google. From there on, it was smooth sailing. I gotta thank heaversm for the tutorial on setting up the OSC plugin for Unity.

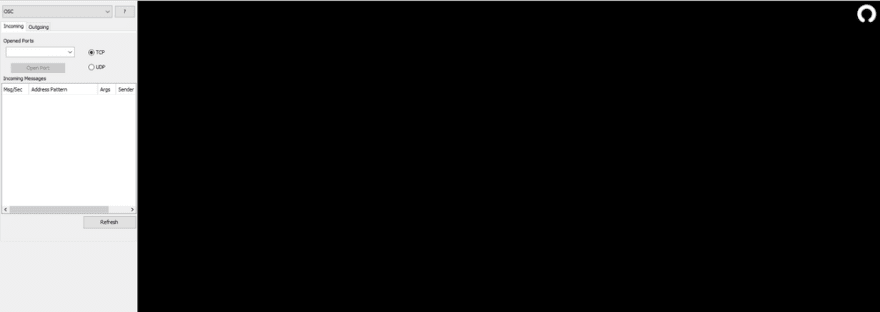

Now, this is where MuseLab comes into play. The data I was getting into Unity through OSC was all over the place: I couldn't properly read it or break it into paths. Also, for some reason, the headband refused to stay connected to my Windows PC. It was all a bit annoying. So I swapped to my Mac and made MuseLab the middleman, flexing some of my computer networking skills (that was a pun on networking as in socialising and networking as in computers; thank you for coming, I'll be here every Tuesday).

Right, so it was time to bring it all home. To connect the headband and open a default port, I installed a command-line tool called muse-io. Typing "muse-io" in the terminal connects the headband and opens a connection on the local loopback address (127.0.0.1), port 5000.

If you want to open the connection on a specific port, you need to specify it in the command, e.g. "muse-io --osc osc.tcp://127.0.0.1:5001".
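TCP is a byte stream, so the receiving side has to work out where one OSC packet ends and the next begins. The OSC 1.0 spec's convention for stream transports is to prefix each packet with its size as a big-endian int32. OSC 1.1 recommends SLIP framing instead.

The sketch below is a minimal listener standing in for MuseLab's TCP input. It assumes that muse-io uses size-prefixed framing (not verified here) and that muse-io connects out to the address passed with `--osc`. `split_frames` and `receive_packets` are hypothetical names.

```python
import socket
import struct


def split_frames(buffer: bytes) -> tuple[list[bytes], bytes]:
    """Split a TCP byte buffer into complete size-prefixed OSC packets.

    Returns (complete packets, leftover bytes belonging to a packet still arriving).
    """
    packets = []
    offset = 0
    while len(buffer) - offset >= 4:
        (size,) = struct.unpack_from(">i", buffer, offset)
        start = offset + 4
        if len(buffer) - start < size:
            break  # the rest of this packet hasn't arrived yet
        packets.append(buffer[start:start + size])
        offset = start + size
    return packets, buffer[offset:]


def receive_packets(host: str = "127.0.0.1", port: int = 5000):
    """Listen on host:port, accept one sender (e.g. muse-io) and yield its raw OSC packets."""
    with socket.create_server((host, port)) as server:
        conn, _ = server.accept()
        with conn:
            pending = b""
            while True:
                chunk = conn.recv(4096)
                if not chunk:
                    break  # the sender closed the connection
                packets, pending = split_frames(pending + chunk)
                yield from packets
```

For example, `for packet in receive_packets(port=5001): ...` would pair with the "muse-io --osc osc.tcp://127.0.0.1:5001" command above. Each packet could then be handed to an OSC decoder.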

(Image courtesy of MuseIO)


Once the port is open, MuseLab receives the data when you open the TCP port shown in the terminal, and the stream of OSC messages shows up again. From there, you open an outgoing port to send the data on over UDP. This project needed two outgoing ports, "127.0.0.1:5005" and "127.0.0.1:5006", since the headband controls both the paddle and other parts of the game, like screen navigation. In MuseLab you can select exactly which OSC messages to send, and Unity accepts that data and uses it without any problems.

And the cool thing is that it sent the data through as simple addresses with values attached (e.g. /muse/concentration with a value of 200 or something like that; I don't remember, it's been 3 years, ok?). To give you a visual, MuseLab looks like this:
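MuseLab's job in this setup can be described as: look at each incoming OSC message, keep only the selected addresses, and forward each one unchanged over UDP to the port the game listens on. The Python sketch below is a hypothetical stand-in for that routing step, not how MuseLab works internally. Three things in it are assumptions:

- The two addresses follow muse-io's naming (`/muse/elements/jaw_clench`, `/muse/acc`); the names MuseLab actually lists may differ.
- The choice of which address goes to 5005 and which to 5006 is illustrative.
- `ROUTES`, `osc_address`, `destination` and `forward` are hypothetical names.

```python
import socket
from typing import Iterable, Optional, Tuple

# Hypothetical routing table: OSC address -> UDP port the game listens on.
ROUTES = {
    "/muse/elements/jaw_clench": 5005,  # assumed address, paddle control
    "/muse/acc": 5006,                  # assumed address, menu/screen navigation
}


def osc_address(packet: bytes) -> str:
    """Return the null-terminated address path at the start of a raw OSC message."""
    return packet[: packet.index(b"\x00")].decode("ascii")


def destination(packet: bytes, host: str = "127.0.0.1") -> Optional[Tuple[str, int]]:
    """Pick where a packet should go, or None if it isn't one of the selected messages."""
    port = ROUTES.get(osc_address(packet))
    return None if port is None else (host, port)


def forward(packets: Iterable[bytes], host: str = "127.0.0.1") -> int:
    """Send each selected packet on over UDP unchanged; return how many were sent."""
    sent = 0
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for packet in packets:
            target = destination(packet, host)
            if target is not None:
                sock.sendto(packet, target)
                sent += 1
    return sent
```

Splitting the streams across two ports means the paddle logic and the menu logic can each listen for just their own messages. Note that OSC bundles start with `#bundle` rather than an address, so this sketch would simply drop them.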

And bam! Unity can now read your brainwaves. This was honestly the hardest part of the whole project, and it took me a hell of a long time to pull off.

Gameplay

Now, the initial plan was to use something like the player's concentration value to control the paddle. However, that proved a little... unreliable. Getting the player to reach a specific concentration value, let alone maintain it, is not as easy as it sounds. Not to mention tiring.

So I'd hit yet another wall. How could I prove that you can play a game with just your mind?

Luckily, while I was looking through the API and exploring the different endpoints I could hit, I found something called "jawClench". And it clicked! When I was trying to reach those concentration values I was talking about, I tended to clench my jaw quite a bit or make a tense face. So I searched for a link between jaw clenching and concentration, and found a paper/article/I don't remember what it was (I'll link it if I find it again) claiming that clenching your jaw works kinda like a trigger for the brain to go from a relaxed state to an alert state. With that in mind, I implemented some basic controls: the paddle moves to the left by itself, and if the player clenches their jaw, thus triggering an alert state, the paddle moves to the right.
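The control scheme can be sketched as follows: the paddle always drifts left, and while the most recent jaw-clench reading says "clenched", it moves right instead. The class below is a hypothetical Python stand-in for the Unity script, not the original code. It assumes the jaw-clench value arrives as 1 (clenched) or 0 (relaxed). The class name, fields and speed are illustrative.

```python
from dataclasses import dataclass


@dataclass
class JawClenchController:
    """Hypothetical controller: drift left unless the latest jaw-clench reading is 1."""
    left_wall: float
    right_wall: float
    speed: float          # units per second
    x: float = 0.0        # paddle position
    clenched: bool = False

    def on_jaw_clench(self, value: int) -> None:
        """Handle a jaw-clench OSC value (assumed: 1 = clenched, 0 = relaxed)."""
        self.clenched = value == 1

    def update(self, dt: float) -> float:
        """Advance the paddle by one frame of dt seconds and return its new position."""
        direction = 1 if self.clenched else -1
        self.x = max(self.left_wall, min(self.x + direction * self.speed * dt, self.right_wall))
        return self.x


controller = JawClenchController(left_wall=-5.0, right_wall=5.0, speed=2.0)
controller.update(1.0)      # relaxed: drifts left to -2.0
controller.on_jaw_clench(1)
controller.update(1.0)      # clenched: moves right, back to 0.0
```

Making "doing nothing" mean "drift left" is what lets a single on/off signal cover both directions. The player only ever has to trigger one thing.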

And it worked like a charm.

I added some extra flair, like a main menu and a tutorial that you navigate using the headband's accelerometer. Then came some basic game stuff: lives (the player has 5 tries to clear the screen), a timer for those who want to brag about their time, and of course a score, with each brick worth a different number of points. And wouldn't you know it, I had myself a BCI game on my hands. Once everything was done, I went ahead and got myself around 30 people or so to test it out.
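Navigating a menu with the accelerometer boils down to two steps. First, turn a continuous tilt reading into discrete "next" or "previous" steps. Second, require the head to come back to neutral before the next step, so that one nod doesn't scroll through the whole menu.

The Python sketch below is an illustration under stated assumptions, not the project's code:

- The reading is an (x, y, z) triple.
- The x axis reflects the tilt used for navigation.
- The threshold is an arbitrary unitless value, not a real Muse calibration.
- `TiltMenu` and its menu items are hypothetical.

```python
from typing import Optional


class TiltMenu:
    """Hypothetical tilt-driven menu: one step per tilt, re-armed at neutral."""

    def __init__(self, items, threshold: float):
        self.items = list(items)
        self.threshold = threshold
        self.index = 0
        self._armed = True  # becomes False after a step until the head returns to neutral

    def on_accelerometer(self, x: float, y: float, z: float) -> Optional[str]:
        """Feed one reading; return the newly selected item, or None if nothing changed."""
        tilt = x  # assumed navigation axis
        if abs(tilt) < self.threshold:
            self._armed = True
            return None
        if not self._armed:
            return None  # still holding the same tilt; ignore it
        self._armed = False
        step = 1 if tilt > 0 else -1
        self.index = (self.index + step) % len(self.items)  # wrap around the menu
        return self.items[self.index]


menu = TiltMenu(["Play", "Tutorial", "Quit"], threshold=0.5)
menu.on_accelerometer(0.8, 0.0, 1.0)  # -> "Tutorial"
menu.on_accelerometer(0.9, 0.0, 1.0)  # -> None (same tilt held)
```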

Reviews were better than I actually expected! A lot of people enjoyed it and most of the complaints were about their jaws getting tired and their teeth hurting, which, fair enough. It may not be perfect, but I feel like it's a good first step.

Aaand that's pretty much it. I hope you've enjoyed this little brain dump of mine. Maybe one day I'll make the game public, or open-source it, but I feel that first I'd have to properly refine it and fix some bugs (yeah, got some of those too sadly). But till then, I'll leave you with some images and this story.

Thanks for reading!

PS: Please check out my personal website, I write all of my posts there first!
