The adoption of CI/CD tools has made delivering new features to customers faster than ever. It’s a far cry from the weeks-long code freezes and everlasting pipeline stabilization sessions that my team commonly referred to as integration hell.

But yesterday’s solutions have exposed the next big challenge for today’s developers: actually getting code merged.

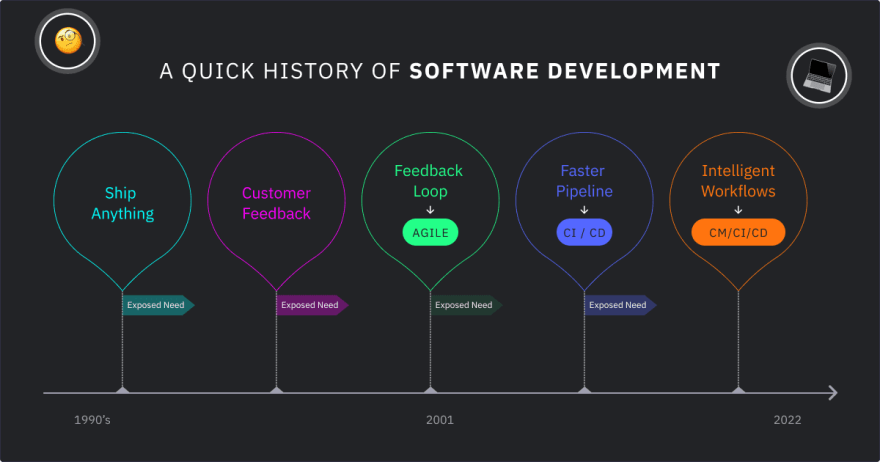

How We Got Here

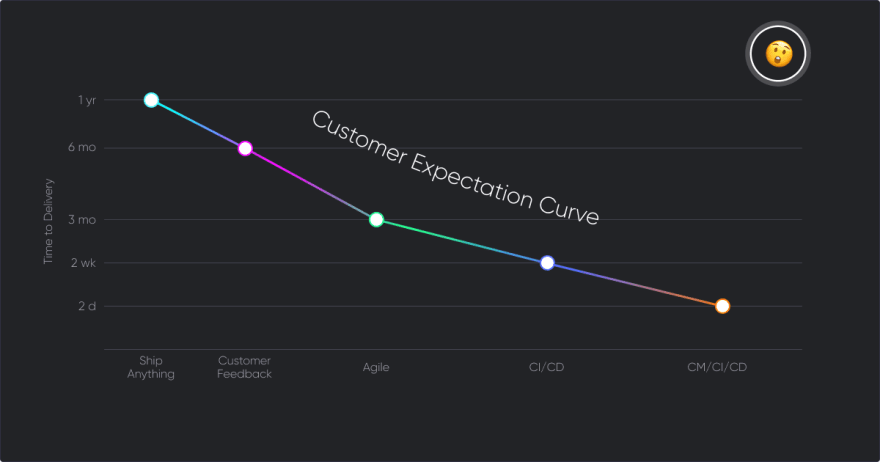

The ‘90s were a unique time in the history of software development. Projects would often take six months to complete, and compared to today’s standards, it was a black box operation. Feedback in a pre-agile world was no small thing. It meant design meetings, architecture changes, and infrastructure development to say the least. And on top of all that, it came with a large side of customer expectation.

“It would have been nice to know about these needs before we built the damn thing to work like this,” was a very regular thought of mine. The need for a feedback loop, shorter iterations, and less siloed work was rapidly exposed.

Then in 2001 the Agile Manifesto came out, forever changing the way software was developed. As agile began taking hold in the industry, customer expectations continued to grow. Using scrum instead of traditional waterfall, we were able to develop faster than our counterparts in ops could deliver. This speed divergence between development and operations exposed another major challenge: building a faster delivery pipeline.

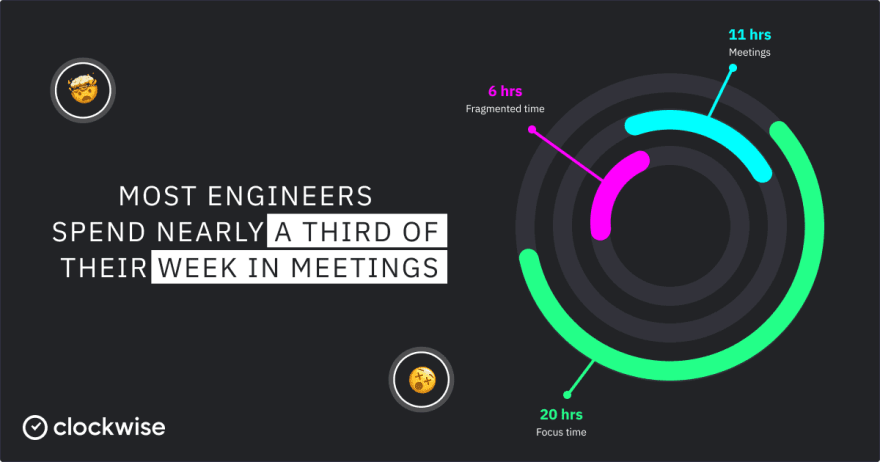

With this need to speed up the delivery pipeline, CI/CD automation and the DevOps movement came to be in the early 2010s. This automation opened the door for new architectures and practices like microservices, integrated security, and GitOps to evolve how software is built and delivered. Each of these advancements promised the same two things: a faster, more reliable delivery pipeline and more time to code for developers. Unfortunately, the “more time to code” part never happened.

Unlocking Developer Productivity

Developers today are more weighed down by non-coding activities than ever before. A recent study by Clockwise showed that developers only have 20 hours of focus time per week. The good news is that businesses are beginning to understand the cost of developer toil and implement real changes to counteract it.

The developer experience (DX) movement has started gaining traction with major tech companies like Netflix, Airbnb, and Meta adopting developer productivity roles. Major publications like Forbes and Fast Company are publishing developer experience & productivity articles. Tech researchers like Nicole Forsgren at GitHub are publishing new measurement frameworks like SPACE.

The ultimate goal of the DX movement is to improve developer happiness and, as a result, productivity. But how do you measure something as complicated as productivity? How do you baseline happiness?

Critics of the movement are quick to tell you that it’s nigh impossible to accurately measure such subjective areas as productivity or happiness. They’ll tell you that in doing so you will only ruin what you’re trying to preserve: developer culture.

I agree with them…to a point. It’s true that developer satisfaction surveys and tracking team velocity are unlikely to help retain engineers. But that doesn’t mean trying to improve the developer experience is a fool's errand.

The Merge Frequency Problem

When I was a developer, I felt the most satisfaction when I could complete a task. That meant merging code to the trunk, or better yet getting it deployed, and starting to work on something new with a clean slate. It was always worse having my work blocked for days without review than it was having a regular stream of meetings. Every hour that my work sat unattended I lost more momentum and became increasingly frustrated.

My happiness and satisfaction as a developer were always based more on solving interesting problems, helping others, and shipping code than they ever were on velocity. If the developer experience movement is truly focused on improving developer happiness, then it’s time to focus on letting us actually get our code merged.

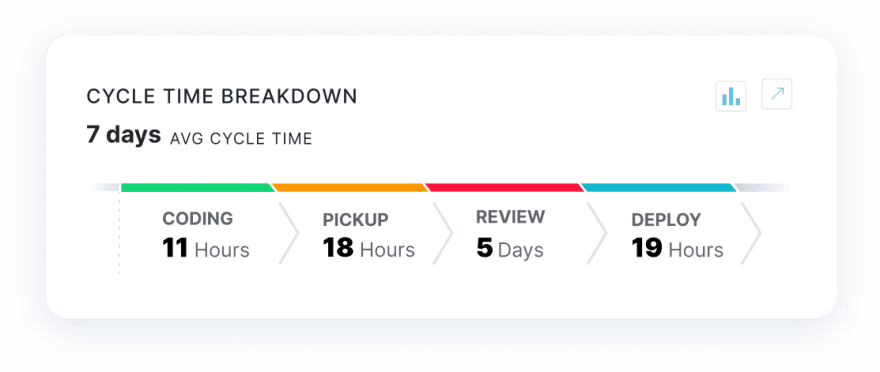

My company’s data analysis team, LinearB Labs, has spent the last 3 years looking at cycle time (aka lead time for changes), the time it takes from when coding begins to when it’s deployed. After analyzing millions of PRs from thousands of dev teams across all sizes of organizations, we found that the average cycle time is about 7 days.

But what is really interesting is that the code, or the PR in this instance, sits in the review process for 5 of those 7 days. Even worse, we found that the majority of those multi-day waits ended in an “LGTM”-type review.

I speak with engineering leaders from companies like Stripe, Slack, Google, Netlify, and more every week on the Dev Interrupted podcast, and we hear the exact same story over and over: the PR review cycle is the main bottleneck they have to overcome. They also tell us another truth: if properly tested code is released soon after it is written, there is a better chance of catching a problem while the change is still fresh in the developer’s head.
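To make the metric concrete, here is a minimal sketch of how cycle time and the share of it spent in review could be computed from four timestamps. The function name, its parameters, and the sample dates are assumptions chosen for illustration; they are not LinearB’s actual implementation or data.

```python
from datetime import datetime, timedelta


def cycle_time_breakdown(coding_started, pr_opened, pr_merged, deployed):
    """Return (total cycle time, time in review, review share of the total).

    Hypothetical helper: cycle time runs from the start of coding to
    deployment, and review time runs from PR opened to PR merged.
    """
    total = deployed - coding_started
    in_review = pr_merged - pr_opened
    # Dividing one timedelta by another yields a plain float ratio.
    return total, in_review, in_review / total


# Illustrative timestamps shaped like the averages quoted above:
# a 7-day cycle in which the PR spends 5 days in review.
start = datetime(2024, 1, 1)
total, in_review, share = cycle_time_breakdown(
    coding_started=start,
    pr_opened=start + timedelta(days=1),
    pr_merged=start + timedelta(days=6),
    deployed=start + timedelta(days=7),
)
print(f"cycle time: {total.days} days, in review: {in_review.days} days ({share:.0%})")
```

Splitting the total into stages like this is what makes the bottleneck visible: the same 7-day number says very little until you see that most of it is waiting.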

All of the data, both qualitative and quantitative, is telling us that the next major problem in the history of software development is focused on getting code merged. I believe it is time we start making moves toward a continuous merge future.

3 Iterations of Continuous Merge

v.1 Context

Scenario: I’m working on an issue, and I get an alert that someone assigned me to review their PR. But I don’t really know anything about that PR, so I continue working on my issue and plan to come back to the review later. When I get to the review, I need context, and by the time I actually start reviewing the PR, the other developer is hard at work on something else. I may have to break their focus and get information from them, forcing both of us into unnecessary context switching.

So the first problem we decided to solve was context.

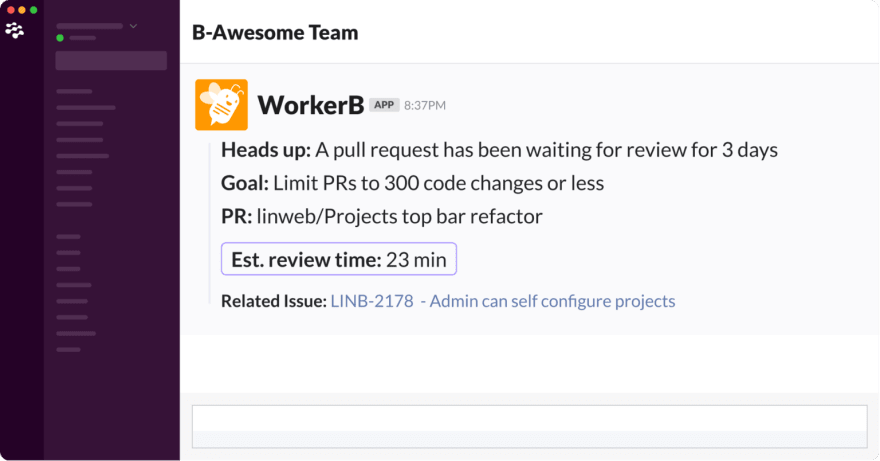

Using our Slack and MS Teams bot, WorkerB, as the vehicle for PR alerts, we started by simply enriching PR notifications. Instead of, “You’ve been assigned this PR to review,” we now provide our users with:

- Estimated Review Time^

- Associated project issue

- The PR owner

^ based on a proprietary learning algorithm

Each of these three pieces of information empowers the developer to make a better decision about when to pick up the review. It answers questions like: Do I have enough time for this review during my next small break or should I queue it? Is this a priority project? Do I owe this person a favor?

Continuous merge isn’t solely about improving the speed to merge. It’s about giving developers the tools and information they need to make better decisions and reduce the cost of PR context switching on them.

v.2 PR Routing

Not all PRs are created equal. Continuing our data analysis, we found that many PRs are incredibly small, while others are much larger. Shocker, I know. Yet they both go through the exact same review process.

Even more so, the small PRs that would wait for days to be reviewed would result in a “Looks good to me” type comment 80% of the time. Why are we waiting days to merge an LGTM PR?

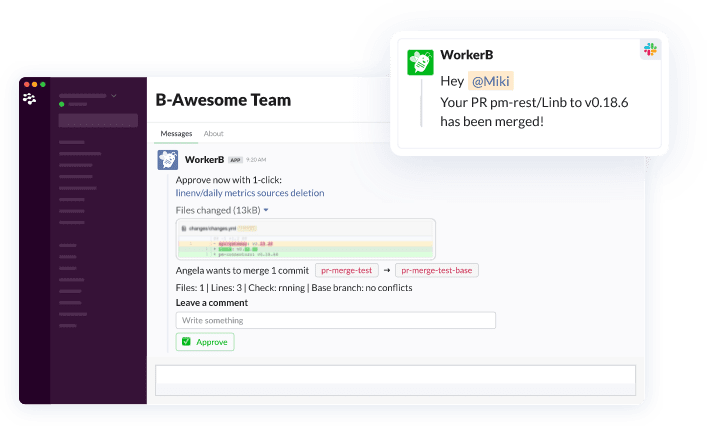

Again we utilized WorkerB to begin routing PRs with <5 lines of code changes into Slack and MS Teams for immediate review and approval. This simple, yet needed, feature takes a snapshot of your PR changes, and sends them via DM to the assigned reviewer so they can approve almost instantly.
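As a rough sketch of the idea, size-based routing can be as simple as a threshold check. The `PullRequest` class, the `route` function, and the channel names below are hypothetical and only illustrate the rule described above; they are not WorkerB’s actual code.

```python
from dataclasses import dataclass

# From the article: PRs with fewer than 5 changed lines are routed to chat.
SMALL_PR_MAX_LINES = 5


@dataclass
class PullRequest:
    title: str
    lines_changed: int
    reviewer: str


def route(pr: PullRequest) -> str:
    """Pick a review channel based on the size of the PR (hypothetical names)."""
    if pr.lines_changed < SMALL_PR_MAX_LINES:
        # Small change: send a snapshot straight to the reviewer's DM.
        return "chat_dm"
    # Everything else goes through the regular review queue.
    return "standard_review"


typo_fix = PullRequest("Fix typo in README", lines_changed=2, reviewer="dana")
refactor = PullRequest("Refactor billing module", lines_changed=240, reviewer="dana")
print(route(typo_fix), route(refactor))
```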

It’s important to stress here that our v.2 solution is the first time any PR has been treated differently based on its unique characteristics. This feature was a hit with users. We found that we were able to route 11% of all PRs for an almost-instant approval. So we began to extrapolate into v.3.

v.3 gitStream

Iterations 1 & 2 taught us that empowering developers with more information allowed them to make better choices with their time. It also proved that we could increase merge frequency by routing PRs based on their characteristics. Knowing this, we started working on the first stand-alone continuous merge tool, gitStream.

Creating new technology is one of the great pleasures of working in the software industry. Spending late nights with passionate people playing the “what if” game.

- What if we could automatically route every PR to a more specific place?

- What if we created automations in the repo that could tell people where PRs would be routed?

- What if the repo owner could create any automated path they wanted?

- What if we could give the developer context on which review automation a commit falls into while coding?

- What if we change the very nature of the PR process today…

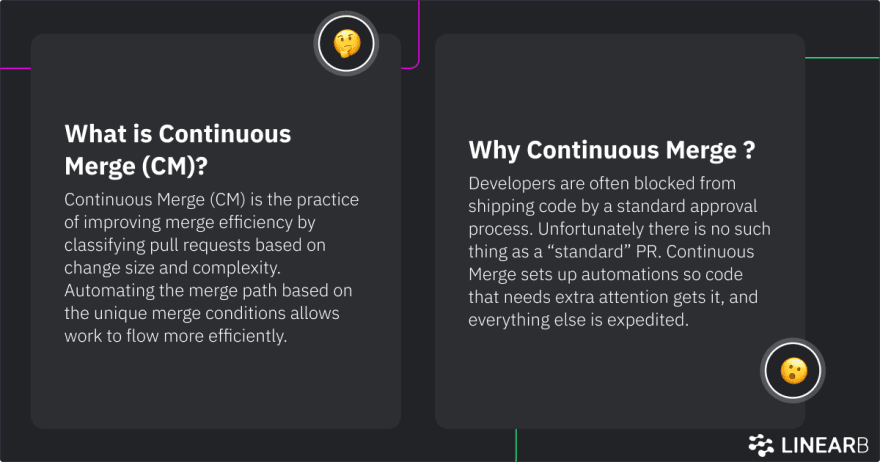

CM/CI/CD

Continuous merge (CM) is a rules-based approach to merging code from an individual developer into the team branch with the level of review reflecting the importance and risk of the code being merged.

In more plain terms, a repo owner can create review automations for the repo (e.g. automatically approve PRs if only image files were changed), and the developer will use an IDE integration to tell them which “lane” their PR will fall into. These repo-based review automations and IDE context do two things:

- Eliminate the “standard” PR review process in favor of routing PRs based on their characteristics.

- Encourage high-frequency merge behaviors from developers by adding context during the coding process.

The tool we’ve created that automates the continuous merge process is called gitStream.

Continuous merge using gitStream starts with the .cm file, which creates the review automations for your code. Not all pull requests are equal. Some PRs are just image or docs changes, while others modify key parts of the services and need special attention. Each of these unique cases is defined in your .cm file, communicating to the developer how to make the code they’re writing qualify for a given level of review.
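To illustrate the kind of rules such a file might encode, here is a small sketch of rule-based review “lanes”. It is deliberately not gitStream’s .cm syntax: the lane names, the file-extension sets, and the sensitive paths are all assumptions made up for this example.

```python
from pathlib import PurePosixPath

# Hypothetical rule inputs; a real repo owner would choose their own.
IMAGE_EXTS = {".png", ".jpg", ".jpeg", ".gif", ".svg"}
DOC_EXTS = {".md", ".rst", ".txt"}
SENSITIVE_PREFIXES = ("services/billing/", "services/auth/")


def review_lane(changed_files):
    """Map the files touched by a PR to a review lane (names are illustrative)."""
    if not changed_files:
        return "standard_review"
    # Changes to key parts of the services always get extra scrutiny.
    if any(path.startswith(SENSITIVE_PREFIXES) for path in changed_files):
        return "special_attention"
    suffixes = {PurePosixPath(path).suffix.lower() for path in changed_files}
    # Only image files changed: the article's example of an auto-approve rule.
    if suffixes <= IMAGE_EXTS:
        return "auto_approve"
    # Only docs and images changed: assumed here to need a lighter review.
    if suffixes <= IMAGE_EXTS | DOC_EXTS:
        return "light_review"
    return "standard_review"


print(review_lane(["assets/logo.png"]))
print(review_lane(["docs/setup.md", "assets/diagram.svg"]))
print(review_lane(["services/billing/invoice.py"]))
```

Checking the riskiest rule first is a deliberate choice in this sketch: a PR that touches a sensitive path should never fall into a faster lane just because it also happens to include an image.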

By using gitStream and having well-defined automations, additional context, and a clear record of changes, several cycles of back and forth are saved and merge velocity is drastically increased.

Get Early Access to gitStream

I'm happy to say that gitStream, the first continuous merge solution, is in beta, and we’re accepting early sign-ups today at gitStream.cm.

Over the next couple of months, as we build, test, and iterate, we hope you will begin to think about what review automations you would want to add to your repos. Even better, sign up for early access and help us test gitStream.

- How might you improve the quality of a review by routing it to a specific person or team?

- What types of PRs can be auto-merged?

- What types of review automations would you want to enable for the entire company?

- How would back-end team review automations be different from front-end team ones?

The automation of continuous merge has an almost unlimited scope. I hope you will join us on our journey as we change the very definition of how software is created and delivered…all over again.

And if you want to learn more about continuous merge, check out an in-depth discussion with my co-founder, Ori Keren, on the Dev Interrupted podcast.

You’re invited to Interact on October 25th!

Want to know how engineers at Slack and Stripe connect their dev teams’ work to the business bottom line? Or how team leads at Shopify and CircleCI keep elite cycle time while minimizing dev burnout and maximizing retention?

These are just two of the topics we’ll tackle at Interact on October 25th.

A free, virtual, community-driven engineering leadership conference, Interact is a one-day event featuring over 25 of the most respected minds in development, all selected by the thousands of engineering leaders in the Dev Interrupted community.

Top comments (1)

why not just do trunk-based development?

Branching was created for zero-trust environments (e.g., OSS), not for office environments.

With trunk-based development, every pair normally pushes to production in under an hour, a cycle time that is not achievable with PRs.

In the end, the important thing is to reduce MTTR, so that if there is any issue it can be solved almost instantly.