Hey, what's up 😁 Today I'm gonna show you how to build a simple neural network in JavaScript on your own, without any AI frameworks. Let's go!

To follow along, you should know these things:

- OOP, JS, ES6;

- basic math;

- basic linear algebra.

Simple theory

A neural network is a collection of neurons connected by synapses. A neuron can be represented as a function that receives some input values and produces an output.

Every single synapse has its own weight. So, the main elements of a neural net are neurons connected into layers in a specific way.

A typical neural net has an input layer, at least one hidden layer and an output layer. When every neuron in a layer is connected to all neurons in the next layer, the network is called a multilayer perceptron (MLP). If a neural net has more than one hidden layer, it's called a deep neural network (DNN).

The picture represents a DNN of type 6–4–3–1, which means 6 neurons in the input layer, 4 in the first hidden layer, 3 in the second one and 1 in the output layer.
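To make the 6–4–3–1 notation concrete, here's a small JavaScript sketch (the variable names are illustrative, not from the original code) that derives the weight-matrix shape between each pair of neighboring layers of a fully connected net and counts the weights:

```javascript
// Layer sizes of the 6-4-3-1 network from the picture.
const layers = [6, 4, 3, 1];

// In a fully connected net, each pair of neighboring layers is joined by a
// weight matrix with (neurons in next layer) x (neurons in previous layer) entries.
const shapes = layers.slice(1).map((next, i) => [next, layers[i]]);
const totalWeights = shapes.reduce((sum, [rows, cols]) => sum + rows * cols, 0);

console.log(shapes);       // [ [ 4, 6 ], [ 3, 4 ], [ 1, 3 ] ]
console.log(totalWeights); // 39 (biases not counted)
```

Biases would add one more parameter per non-input neuron, 4 + 3 + 1 = 8 here.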

Forward propagation

A neuron can have one or more inputs, which can be the outputs of other neurons.

- x1 and x2 - input data;

- w1, w2 - weights;

- f(x1, x2) - activation function;

- Y - output value.

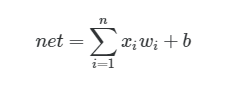

So, we can describe all the stuff above with a mathematical formula:

$$
\text{net} = \sum_{i=1}^{n} x_i w_i + b
$$

The formula describes the neuron's input value, net. In this formula: n - number of inputs, x - input value, w - weight, b - bias (we won't use that feature yet; the only thing you should know about it now is that the bias works like an extra input that always equals 1 and has its own weight).

As you can see, we need to multiply each input value by its weight and sum up the products. The next step is passing that sum, net, through the activation function to get the neuron's output. The same operation is applied to every neuron in our neural net.
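A minimal JavaScript sketch of that computation, assuming a sigmoid activation (the one used later in this article) and hypothetical helper names `neuronOutput` and `layerOutput`:

```javascript
// Logistic sigmoid, used here as the activation function f.
const sigmoid = (x) => 1 / (1 + Math.exp(-x));

// Forward pass of ONE neuron: net = sum(x_i * w_i) + b, then Y = f(net).
// Bias defaults to 0 because the text doesn't use it yet.
function neuronOutput(inputs, weights, bias = 0) {
  const net = inputs.reduce((sum, x, i) => sum + x * weights[i], bias);
  return sigmoid(net);
}

// A layer is the same operation repeated with each neuron's own weights:
// every row of weightRows holds the incoming weights of one neuron.
function layerOutput(inputs, weightRows) {
  return weightRows.map((weights) => neuronOutput(inputs, weights));
}

console.log(neuronOutput([1, 1], [0.5, -0.5]));     // net = 0, so 0.5
console.log(layerOutput([1, 0], [[2, 3], [-2, 1]])); // about [ 0.881, 0.119 ]
```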

Now you know what forward propagation is.

Backward propagation (or backpropagation or just backprop)

Backprop is a powerful algorithm, first introduced in 1970. [Read more about how it works.]

Backprop consists of several steps you need to apply to each neuron in your neural net.

- First of all, calculate the error of the neural net's output layer:

$$
\text{error}_k = \text{target}_k - \text{output}_k
$$

target - true value, output - actual output of the neural net (k indexes the output neurons).

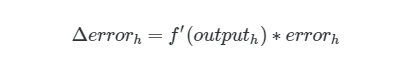

- Second, calculate the delta value:

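$$
\delta_k = \text{error}_k \cdot f'(\text{output}_k)
$$

f' - derivative of the activation function. For the sigmoid, this derivative can be computed from the neuron's output alone as output · (1 - output).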

- Third, calculate the error of the hidden layer neurons:

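$$
\text{error}_j = \sum_{k} \delta_k \cdot \text{synapse}_{jk}
$$

synapse - weight of the synapse connecting hidden neuron j to output neuron k; δ_k is the delta of output neuron k.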

Then calculate the delta again, this time for the hidden layer neurons:

$$
\delta_j = \text{error}_j \cdot f'(\text{output}_j)
$$

output - output value of a neuron in the hidden layer.

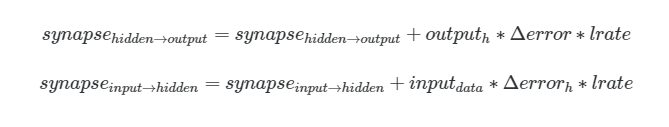

- Finally, it's time to update the weights:

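$$
w \leftarrow w + \text{lrate} \cdot \delta \cdot \text{input}
$$

lrate - learning rate; δ - delta of the neuron the synapse leads into; input - value flowing into that neuron through the synapse.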
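To see steps 1, 2 and 4 in action, here's a small sketch for a single sigmoid neuron with two inputs and no bias (step 3 doesn't apply because there's no hidden layer). The numbers are made up for illustration, and this is not the article's own code, just the rules above applied once:

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));
// Sigmoid derivative written in terms of the neuron's output y = sigmoid(net).
const sigmoidDerivative = (y) => y * (1 - y);

const inputs = [1, 0];
let weights = [0.5, -0.5];
const target = 0;
const lrate = 0.5;

// Forward pass (no bias, as in the text).
const forward = (w) => sigmoid(inputs[0] * w[0] + inputs[1] * w[1]);
const output = forward(weights);

// Step 1: output error. Step 2: delta.
const error = target - output;
const delta = error * sigmoidDerivative(output);

// Step 4: w = w + lrate * delta * input (each weight uses its own input).
weights = weights.map((w, i) => w + lrate * delta * inputs[i]);

// Error after one update, for comparison.
const newError = target - forward(weights);
console.log(error, newError);
```

The error shrinks after the update, and the second weight doesn't move at all because its input is 0.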

Buddies, we've just gone through the simplest version of backprop with gradient descent 😯. If you wanna dive deeper, watch this video.

And that's it. We're done with all the math. Just code it!!!

Practice

So, we'll create an MLP that solves the XOR problem (really, man? 😯).

From the simplest things to the hardest, bro. All in good time.

Inputs and outputs for XOR: the output is 1 when exactly one of the two inputs is 1, and 0 otherwise.
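In code, the XOR training set could be stored as plain arrays, one row per sample. The names `inputs` and `targets` are just illustrative:

```javascript
// XOR truth table: the output is 1 only when exactly one input is 1.
const inputs = [
  [0, 0],
  [0, 1],
  [1, 0],
  [1, 1],
];
const targets = [[0], [1], [1], [0]];

inputs.forEach(([a, b], i) => console.log(`${a} XOR ${b} = ${targets[i][0]}`));
```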

We'll use the Node.js platform and the math.js library (which is similar to NumPy in Python). Run these commands in your terminal:

```shell
mkdir mlp && cd mlp
npm init
npm install babel-cli babel-preset-env mathjs
```

Let's create a file called activations.js that will contain our activation function definitions. In our example we'll use the classic sigmoid function (oldschool, bro).
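A sketch of what activations.js might contain. The derivative is written in terms of the sigmoid's output, since that's the value backprop has at hand. In the Babel/ES6 setup above you'd `export` both functions; here they're plain constants so the snippet runs on its own in Node:

```javascript
// Logistic sigmoid: maps any real number into the range (0, 1).
const sigmoid = (x) => 1 / (1 + Math.exp(-x));

// Derivative of the sigmoid, written in terms of the sigmoid's OUTPUT
// y = sigmoid(x), because sigmoid'(x) = sigmoid(x) * (1 - sigmoid(x)).
// Pass an already-activated value here, not the raw weighted sum.
const sigmoidDerivative = (y) => y * (1 - y);

console.log(sigmoid(0));                    // 0.5
console.log(sigmoidDerivative(sigmoid(0))); // 0.25
```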

Then let’s create nn.js file that contains NeuralNetwork class implementation.

It seems that something is missing... ohh, exactly! We need to make our network trainable.
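A self-contained sketch of the training step as a standalone `train(net, inputs, targets, lrate)` function (a hypothetical signature, not the original method). It follows the four backprop steps from the theory section on a single sample and, as an assumption beyond the text, also updates biases by treating each one as a weight on a constant input of 1:

```javascript
const sigmoid = (x) => 1 / (1 + Math.exp(-x));
const sigmoidDerivative = (y) => y * (1 - y); // y is already sigmoid(net)

const layerForward = (inputs, weights, biases) =>
  weights.map((row, j) =>
    sigmoid(row.reduce((sum, w, i) => sum + w * inputs[i], biases[j])));

// One backprop + gradient-descent step on one sample. Mutates `net` in place
// and returns the output errors measured BEFORE the update.
function train(net, inputs, targets, lrate) {
  // Forward pass, keeping the hidden outputs for later.
  const hidden = layerForward(inputs, net.weightsIH, net.biasH);
  const outputs = layerForward(hidden, net.weightsHO, net.biasO);

  // Steps 1-2: output errors and output deltas.
  const outErrors = targets.map((t, k) => t - outputs[k]);
  const outDeltas = outErrors.map((e, k) => e * sigmoidDerivative(outputs[k]));

  // Step 3: hidden error = sum over k of delta_k * synapse(j -> k), then the
  // hidden delta. Computed before any hidden->output weight changes.
  const hiddenDeltas = hidden.map((h, j) => {
    const err = outDeltas.reduce((sum, d, k) => sum + d * net.weightsHO[k][j], 0);
    return err * sigmoidDerivative(h);
  });

  // Step 4: w = w + lrate * delta * (input flowing through that synapse).
  net.weightsHO.forEach((row, k) => {
    row.forEach((_, j) => { row[j] += lrate * outDeltas[k] * hidden[j]; });
    net.biasO[k] += lrate * outDeltas[k]; // bias input is always 1
  });
  net.weightsIH.forEach((row, j) => {
    row.forEach((_, i) => { row[i] += lrate * hiddenDeltas[j] * inputs[i]; });
    net.biasH[j] += lrate * hiddenDeltas[j];
  });

  return outErrors;
}

// Tiny fixed 2-2-1 network so the example is repeatable.
const net = {
  weightsIH: [[0.5, -0.4], [0.3, 0.8]],
  biasH: [0, 0],
  weightsHO: [[0.7, -0.6]],
  biasO: [0],
};
const before = train(net, [1, 0], [1], 0.5)[0];
const after = train(net, [1, 0], [1], 0.5)[0];
console.log(before, after); // the error on this sample shrinks
```

Computing the hidden deltas before touching any weights matters: backprop's gradients are defined with respect to the weights used in the forward pass.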

And just add a predict method for producing results.

Finally, let’s create index.js file where all the stuff we created above will joined.

Predictions from our neural net:

Conclusions

As you can see, the network's error gets closer to zero with each epoch. But you know what? I'll tell you a secret: it won't reach zero, bro. The sigmoid never outputs exactly 0 or 1, so no matter how long you train, it won't happen. Never.

Finally, we see results that are very close to the expected outputs. The simplest neural net, but it works!

Source code is available on my GitHub.

Original article posted by me in my native language.

Comments

You've got a well-deserved star on GitHub. Very good article, nice demonstration, thank you!

ohh, thank you so much!

Amazing article and really well explained. Congratulations 🎊

And thanks for this!

thank you! 😉

This is awesome! Very easy to follow. It's always fun to try to do things without frameworks.

I haven't understood most of it. I'll be back as soon as I get it, folks.

Same boat but still fascinating

In the next article I'll try to thoroughly explain how neural networks work (with math examples), if you want 😉 And you'll figure it out 😁

Almost unrelated to the post, but I was reading a tutorial about TensorFlow.js, and this video about tensors is very mathy but also very good: youtube.com/watch?v=f5liqUk0ZTw

Hey, check out my new article about math principles of neural networks 😀dev.to/liashchynskyi/how-neural-ne...

Hey, your post widened my perception. JavaScript or not, neural networks are pretty cool. I am willing to research the subject

Thanks!

Understood everything til backprop but if this is the simplest I’m glad for the explanation! Thanks bro 🤪

Wow, nice article and awesome explanation!

Hi, I put the code on a RunKit notebook, for anyone who wants to see it running without having to install anything on their computer: runkit.com/edo9k/tiny-rnn

For some reason I kept getting an error in the `train` function; the error was `output_layer is not a function`, so I just wrapped the `output_layer` variable in an arrow function. Now it's running. Great article, thank you.

Your derivative is wrong. First, check this math.stackexchange.com/questions/7...

And this simple network can solve AND, OR, XOR, whatever. It depends on the data and the labels. Bias is simple, why don't you just google for it? 😅

Luck!
