Apache Kafka is a leading open-source distributed streaming platform first developed at LinkedIn. It consists of several APIs such as the Producer, the Consumer, the Connector and the Streams. Together, those systems act as high-throughput, low-latency platforms for handling real-time data. This is why Kafka is preferred among several of the top-tier tech companies such as Uber, Zalando and AirBnB.

Quite often, we would like to deploy a fully-fledged Kafka cluster in Kubernetes, just because we have a collection of microservices and we need a resilient message broker in the center. We also want to spread the Kafka instances across nodes, to minimize the impact of a failure.

In the following tutorial, the Platform9 technical team presents an example Kafka deployment within the Platform9 Free Tier Kubernetes platform, backed up by some DigitalOcean droplets.

Let’s get started.

Setting Up the Platform9 Free Tier Cluster

Below are the brief instructions to get you up and running with a working Kubernetes Cluster from Platform9:

- Signup with Platform9

- Click the Create Cluster button and inspect the instructions. We need a server to host the Cluster.

- Create a few Droplets with at least 3gb Ram and 2vCPU’s. Follow instructions to install the pf9cli tool and prepping the nodes.

$ bash <(curl -sL http://pf9.io/get_cli)

$ pf9ctl cluster prep-node -i

- Switch to the Platform9 UI and click the refresh button. You should see the new nodes in the list. Assign the first node as a master and the other ones as workers.

- Leave the default values in the next steps. Then create the cluster.

- Wait until the cluster becomes healthy. It will take at least 20 minutes to finish.

Click on the API Access tab and select to download the kubeconfig button:

-

Once downloaded, export the config and test the cluster health:

$ export KUBECONFIG=/Users/itspare/Theo/Projects/platform9/example.yaml

$ kubectl cluster-infoKubernetes master is running at https://134.122.106.235

CoreDNS is running at https://134.122.106.235/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

Metrics-server is running at https://134.122.106.235/api/v1/namespaces/kube-system/services/https:metrics-server:/proxyCreating Persistent Volumes

Before we install Helm and the Kafka chart, we need to create some persistent volumes for storing Kafka replication message files.

This step is crucial to be able to enable persistence in our cluster because without that, the topics and messages would disappear after we shutdown any of the servers, as they live in memory.

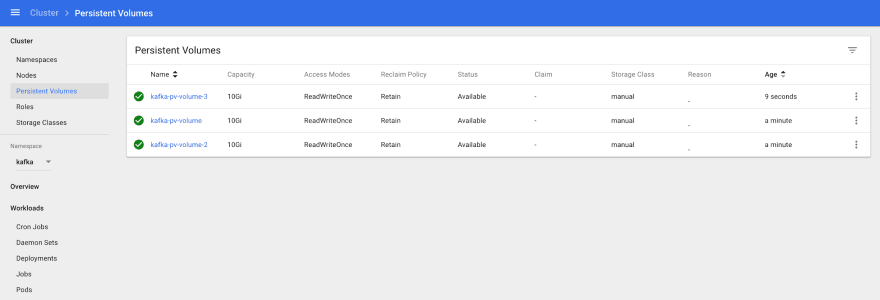

In our example, we are going to use a local file system, Persistent Volume (PV), and we need one persistent volume for each Kafka instance; so if we plan to deploy three instances, we need three PV’s.

Create and apply first the Kafka namespace and the PV specs:

$ cat namespace.yml --- apiVersion: v1 kind: Namespace metadata: name: kafka $ kubectl apply -f namespace.yml namespace/kafka created $ cat pv.yml --- apiVersion: v1 kind: PersistentVolume metadata: name: kafka-pv-volume labels: type: local spec: storageClassName: manual capacity: storage: 10Gi accessModes: - ReadWriteOnce hostPath: path: "/mnt/data" --- apiVersion: v1 kind: PersistentVolume metadata: name: kafka-pv-volume-2 labels: type: local spec: storageClassName: manual capacity: storage: 10Gi accessModes: - ReadWriteOnce hostPath: path: "/mnt/data" --- apiVersion: v1 kind: PersistentVolume metadata: name: kafka-pv-volume-3 labels: type: local spec: storageClassName: manual capacity: storage: 10Gi accessModes: - ReadWriteOnce hostPath: path: "/mnt/data" $ kubectl apply -f pv.ymlIf you are using the Kubernetes UI, you should be able to see the PV volumes on standby:

Installing Helm

We begin by installing Helm on our computer and installing it in Kubernetes, as it’s not bundled by default.

First, we download the install script:

$ curl https://raw.githubusercontent.com/kubernetes/helm/master/scripts/get > install-helm.shMake the script executable with chmod:

$ chmod u+x install-helm.shCreate the tiller service account:

$ kubectl -n kube-system create serviceaccount tillerNext, bind the tiller serviceaccount to the cluster-admin role:

$ kubectl create clusterrolebinding tiller --clusterrole cluster-admin --serviceaccount=kube-system:tillerNow we can run helm init:

$ helm init --service-account tillerNow we are ready to install the Kafka chart.

Deploying the Helm Chart

In the past, trying to deploy Kafka on Kubernetes was a good exercise. You had to deploy a working Zookeeper Cluster, role bindings, persistent volume claims and apply correct configuration.

Hopefully for us, with the use of the Kafka Incubator Chart, the whole process is mostly automated (with a few quirks here and there).

We add the Helm chart:

$ helm repo add incubator http://storage.googleapis.com/kubernetes-charts-incubatorExport the chart values in a file:

$ curl https://raw.githubusercontent.com/helm/charts/master/incubator/kafka/values.yaml > config.ymlCarefully inspect the configuration values, particularly around the parts about persistence and about the number of Kafka stateful sets to deploy.

Then install the chart:

$ helm install --name kafka-demo --namespace kafka incubator/kafka -f values.yml --debugCheck the status of the deployment

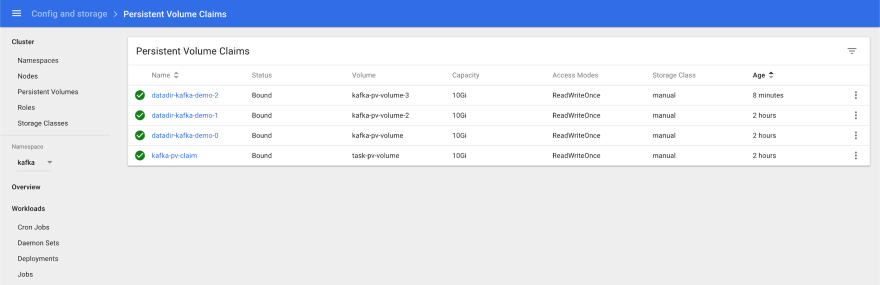

$ helm status kafka-demo LAST DEPLOYED: Sun Apr 19 14:05:15 2020 NAMESPACE: kafka STATUS: DEPLOYED RESOURCES: ==> v1/ConfigMap NAME DATA AGE kafka-demo-zookeeper 3 5m29s ==> v1/Pod(related) NAME READY STATUS RESTARTS AGE kafka-demo-zookeeper-0 1/1 Running 0 5m28s kafka-demo-zookeeper-1 1/1 Running 0 4m50s kafka-demo-zookeeper-2 1/1 Running 0 4m12s kafka-demo-zookeeper-0 1/1 Running 0 5m28s kafka-demo-zookeeper-1 1/1 Running 0 4m50s kafka-demo-zookeeper-2 1/1 Running 0 4m12s ==> v1/Service NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE kafka-demo ClusterIP 10.21.255.214 9092/TCP 5m29s kafka-demo-headless ClusterIP None 9092/TCP 5m29s kafka-demo-zookeeper ClusterIP 10.21.13.232 2181/TCP 5m29s kafka-demo-zookeeper-headless ClusterIP None 2181/TCP,3888/TCP,2888/TCP 5m29s ==> v1/StatefulSet NAME READY AGE kafka-demo 3/3 5m28s kafka-demo-zookeeper 3/3 5m28s ==> v1beta1/PodDisruptionBudget NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE kafka-demo-zookeeper N/A 1 1 5m29sDuring this phase, you may want to navigate to the Kubernetes UI and inspect the dashboard for any issues. Once everything is complete, then the pods and Persistent Volume Claims should be bound and green.

Now we can test the Kafka cluster.

Testing the Kafka Cluster

We are going to deploy a test client that will execute scripts against the Kafka cluster.

Create and apply the following deployment:

$ cat testclient.yml apiVersion: v1 kind: Pod metadata: name: testclient namespace: kafka spec: containers: - name: kafka image: solsson/kafka:0.11.0.0 command: - sh - -c - "exec tail -f /dev/null" $ kubectl apply -f testclientThen, using the testclient, we create the first topic, which we are going to use to post messages:

$ kubectl -n kafka exec -ti testclient -- ./bin/kafka-topics.sh --zookeeper kafka-demo-zookeeper:2181 --topic messages --create --partitions 1 --replication-factor 1 Created topic "messages".Here we need to use the correct hostname for zookeeper cluster and the topic configuration.

Next, verify that the topic exists:

$ kubectl -n kafka exec -ti testclient -- ./bin/kafka-topics.sh --zookeeper kafka-demo-zookeeper:2181 --list MessagesNow we can create one consumer and one producer instance so that we can send and consume messages.

First create one or two listeners, each on its own shell:

$ kubectl -n kafka exec -ti testclient -- ./bin/kafka-console-consumer.sh --bootstrap-server kafka-demo:9092 --topic messages --from-beginningThen create the producer session and type some messages. You will be able to see them propagate to the consumer sessions:

$ kubectl -n kafka exec -ti testclient -- ./bin/kafka-console-producer.sh --broker-list kafka-demo:9092 --topic messages >Hi >How are you? >Hope you're well > Hi How are you? Hope you're wellDestroying the Helm Chart

To clean up our resources, we just destroy the Helm Chart and delete the PVs we created earlier:

$ helm delete kafka-demo --purge $ kubectl delete -f pv.yml -n kafkaNext Steps

Stay put for more tutorials showcasing common deployment scenarios within Platform9’s fully-managed Kubernetes platform.

Top comments (0)