I want to ask why the code we write can be perfectly performant and still be trash.

There is a concept in system design called high availability. A common shorthand for how often a system is expected to be up and performing as expected is "five 9's," or about 99.999% uptime. Many fields shoot for this level of availability or even higher (hospitals, airline equipment, and satellite software).
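To make the shorthand concrete, here is a minimal sketch that turns a number of 9's into a yearly downtime budget. The function name `allowed_downtime_minutes` is just an illustrative choice, and the math assumes a 365-day year.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(nines: int) -> float:
    """Yearly downtime budget, in minutes, for an availability of N nines."""
    unavailability = 10 ** -nines  # e.g. five 9's -> 0.00001
    return MINUTES_PER_YEAR * unavailability


if __name__ == "__main__":
    for n in range(2, 6):
        print(f"{n} nines: {allowed_downtime_minutes(n):.2f} minutes per year")
```

Five 9's works out to a little over five minutes of downtime per year, while four 9's allows a bit under an hour.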

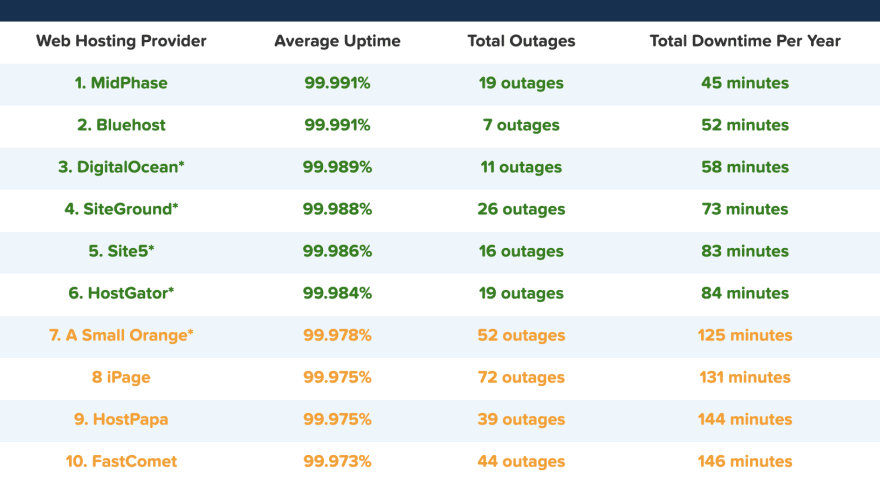

Wow, what a dream, but what about web dev? I want to be able to write code that performs at that level, but I'm working with distributed hardware (servers, routers) and distributed software (packages, links, libraries). Let's start with the data center where our site is hosted.

Whoops, the highest average was just four 9's and we haven't even written any code yet.

At least Google handles its own hosting and has it figured out.

G Suite (@gsuite): Google Calendar is currently experiencing a service disruption. Please stay tuned for updates or follow here: goo.gl/NOZTZ1 (4:39 PM - 18 Jun 2019)

..oh no

Looks like G-Cal was down for a little over three hours, which puts it at three 9's for the year.
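The arithmetic behind that claim can be sketched roughly as follows. The three-hour figure is rounded down from "a little over three hours," the helper names are made up for this example, and it assumes this was the only outage in a 365-day year.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def availability(downtime_hours: float) -> float:
    """Fraction of the year the service was up."""
    return 1 - downtime_hours / HOURS_PER_YEAR


def count_nines(avail: float) -> int:
    """How many leading 9's the availability figure has (0.999 -> 3)."""
    if avail >= 1:
        raise ValueError("100% availability has no finite number of nines")
    # The small epsilon guards against floating-point error at exact boundaries.
    return math.floor(-math.log10(1 - avail) + 1e-9)


if __name__ == "__main__":
    yearly = availability(3.0)  # assumed: one three-hour outage all year
    print(f"{yearly:.4%} uptime, {count_nines(yearly)} nines")
```

A single three-hour outage leaves the year at roughly 99.97% uptime, so the fourth 9 is already gone.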

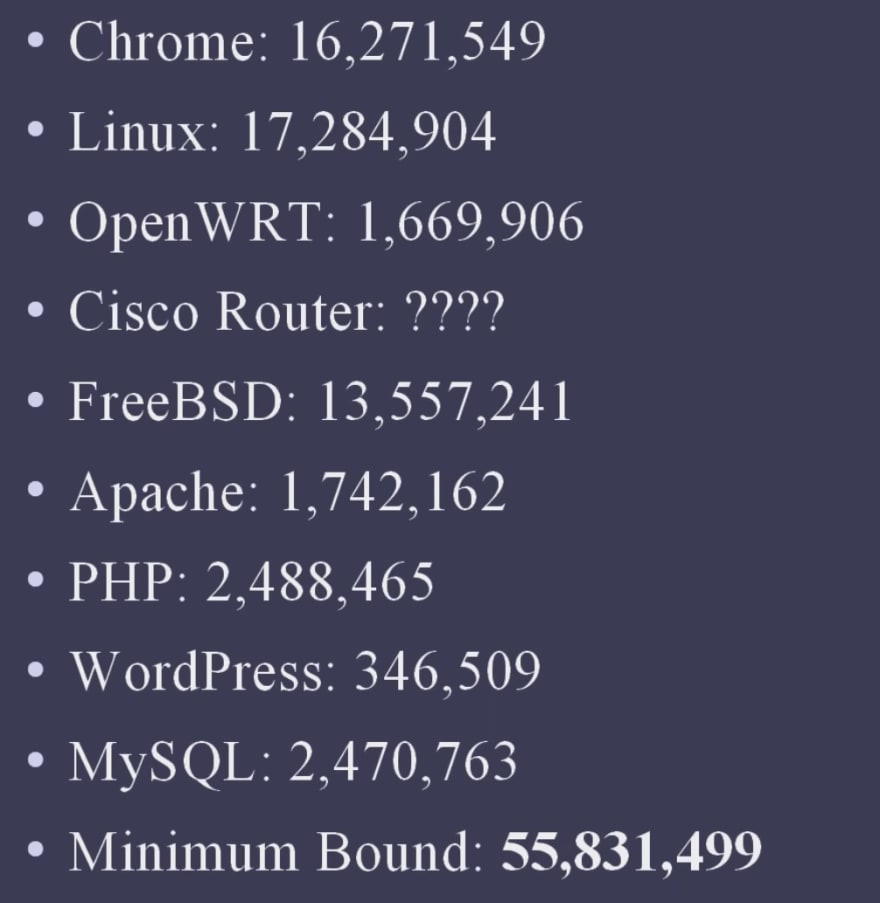

As web devs, we are used to relying on so many dependencies that we can't even properly estimate how many lines of code are running.

One dev, Casey Muratori, tried to figure out how much code is brought in and used by a typical web stack from a few years ago, and stopped counting at 56 million lines. Granted, one wouldn't use all the functions and methods brought in through this stack, but that much code does make some issues difficult or impossible to actually test.

My point is not that we shouldn't use these technologies. Just be aware of what you are bringing into your stack, and try to limit the code you bring to a project if you don't understand how it runs at a deeper level, because as a web dev you are already at four 9's or worse, even assuming your code is perfect.
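One way to see why every extra dependency costs you is to multiply availabilities together. This is a rough sketch that assumes every piece must be up for the site to work and that the pieces fail independently. The component names and numbers are hypothetical, not measurements.

```python
from math import prod


def serial_availability(components):
    """Availability of a chain where every component must be up at once."""
    return prod(components)


if __name__ == "__main__":
    # Hypothetical figures, for illustration only.
    stack = {
        "hosting": 0.9999,
        "dns": 0.9999,
        "cdn": 0.9999,
        "third_party_api": 0.999,
    }
    total = serial_availability(stack.values())
    print(f"combined availability: {total:.4%}")
```

Under those assumptions the combined figure is always lower than the weakest component on its own, which is why a stack built from four 9's parts cannot itself reach four 9's.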

