Intro

Hi! In this series of posts, I'll bring you along on my journey to set up a home server. I intend to use this server for file sharing/backup, hosting git repos, and various other services. The main applications I have in mind right now are Nextcloud, GitLab, Pi-hole, and Prometheus/Grafana. Since I'm planning to run everything under Docker, it should be relatively easy to add more services on demand.

Hardware

So... I have a bad habit of rebuilding my gaming PC somewhat often. On the bright side, this means I have a lot of spare parts for the server!

Parts List:

CPU: AMD Ryzen 2700X

CPU Cooler: Noctua NH-D15 chromax.Black

Motherboard: Asus ROG Strix X470-F

RAM: 4x8GB G.SKILL Trident RGB DDR4-3000

Boot Drive: Samsung 980 PRO 500 GB NVMe SSD

Storage Drives: 2x Seagate BarraCuda 8TB HDD (will be run in RAID 1)

Graphics Card: EVGA GTX 1080 Reference (needed since the 2700X has no integrated graphics)

Power Supply: EVGA SuperNOVA G1 80+ GOLD 650W

Case: NZXT H500i

Case Fans: 2x be quiet! Silent Wings 3 140mm

For my use case, consumer-grade hardware should suffice. Also, I can't decide whether 8TB of storage is overkill or not enough! Guess we'll find out...

Assembling the server was straightforward aside from one minor hiccup with the fans. I had to replace one of the two 140mm fans on the CPU cooler with a 120mm one since my RAM sticks were too tall. Damn those RGB heat spreaders!

Initial Installation

Linux Distribution

I've chosen to use Ubuntu Server for the OS since it's one of the most popular server distributions. I'm more familiar with CentOS, but given that project's recent shift from CentOS Linux to CentOS Stream, Ubuntu seems to be a more stable alternative.

Creating and Booting into the Ubuntu Install Media

- Download the Ubuntu Server ISO from the official website.

- Flash the ISO onto a USB drive (balenaEtcher is a good tool for this).

- Plug the USB drive into the server and boot into the install media.

- Ensure that the server has an Internet connection so that packages and updates can be downloaded during the install. Ethernet is preferred for ease of use and stability.

- NOTE: You may have to change BIOS settings if the server doesn't boot from the USB drive automatically. Refer to your motherboard's docs for more information.

Installation Process

The official installation guide documents the process well, so I won't list all the steps here. Here are some steps I want to emphasize:

Configure storage:

- Make sure that you select the correct disk to install the OS on. For me, the OS disk will be the Samsung 500GB NVMe SSD (this will vary depending on your specific hardware configuration).

- I'm leaving the two storage disks untouched for now and will configure them manually later.

Profile setup:

- The username and password created in this step will be used to log in to the server after installation, so keep note of them!

- The newly created user will have sudo privileges.

- I'm using positron as the hostname for my server, in case you see it later.

SSH Setup:

- Make sure to install OpenSSH so you can log in to the machine remotely.

- You can also choose to import SSH keys from GitHub/Launchpad. If you do this, it's better to disable password authentication for SSH since it won't be needed. If anything goes wrong, you can always log in to the server directly as long as you have physical access.

Snaps:

- I'm not installing any extra packages during the install since I'll do that later using Ansible.

After the installer does its thing, reboot the server and log in with the username and password you created earlier.

Initial Configuration

Remote Login

Get the IP address of the server so we can log in remotely:

jaswraith@positron:~$ ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: enp7s0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 04:92:26:c0:e1:28 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.140/24 brd 192.168.1.255 scope global dynamic enp7s0

valid_lft 85934sec preferred_lft 85934sec

inet6 fe80::692:26ff:fec0:e128/64 scope link

valid_lft forever preferred_lft forever

The IPv4 address of interface enp7s0 is the part of the inet field before the /24 prefix length: 192.168.1.140. Your server's interface name might be different depending on its networking hardware.
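If you'd rather not eyeball the output, the address can be pulled out with awk. This is a minimal sketch: ipv4_of is a hypothetical helper name, and it assumes the ip addr layout shown above (ADDRESS/PREFIX in the second field of each inet line, interface name in the last field).

```shell
# Print the IPv4 address(es) of one interface, reading "ip addr" output on stdin.
# Usage (hypothetical helper): ip addr | ipv4_of enp7s0
ipv4_of() {
    # An IPv4 line looks like:
    #   inet 192.168.1.140/24 brd 192.168.1.255 scope global dynamic enp7s0
    # Field 2 is ADDRESS/PREFIX and the last field is the interface label.
    awk -v ifc="$1" '
        $1 == "inet" && $NF == ifc {
            split($2, parts, "/")   # drop the "/24" prefix length
            print parts[1]
        }'
}
```

For the output above, ip addr | ipv4_of enp7s0 should print 192.168.1.140.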

Now we can log in from a different machine using ssh:

jaswraith@photon[~]=> ssh jaswraith@192.168.1.140

Welcome to Ubuntu 20.04.2 LTS (GNU/Linux 5.4.0-65-generic x86_64)

...

jaswraith@positron:~$

Note that I didn't have to enter my password since:

- I imported my public SSH key from GitHub during installation.

- I have the corresponding private key on my laptop (photon) at /home/jaswraith/.ssh/id_ed25519.

I'm adding the entry 192.168.1.140 positron to /etc/hosts on my laptop so I can log in using the hostname instead of the IP address:

jaswraith@photon[~]=> cat /etc/hosts

# Static table lookup for hostnames.

# See hosts(5) for details.

127.0.0.1 localhost

::1 localhost

127.0.1.1 photon.localdomain photon

192.168.1.140 positron
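Appending the entry a second time would leave a duplicate line in /etc/hosts. Below is a minimal sketch of an idempotent version; add_host_entry is a hypothetical helper, and it assumes the usual hosts format (address first, then whitespace-separated hostnames). Writing to the real /etc/hosts still needs root, so call it from a root shell.

```shell
# Append "ADDRESS HOSTNAME" to a hosts file unless HOSTNAME is already listed.
# Usage (hypothetical helper): add_host_entry 192.168.1.140 positron /etc/hosts
add_host_entry() {
    addr=$1 name=$2 file=${3:-/etc/hosts}
    # Skip comment lines; fields 2..NF of a hosts line are hostnames/aliases.
    if awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"
    then
        echo "$name already present in $file"
    else
        printf '%s %s\n' "$addr" "$name" >> "$file"
    fi
}
```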

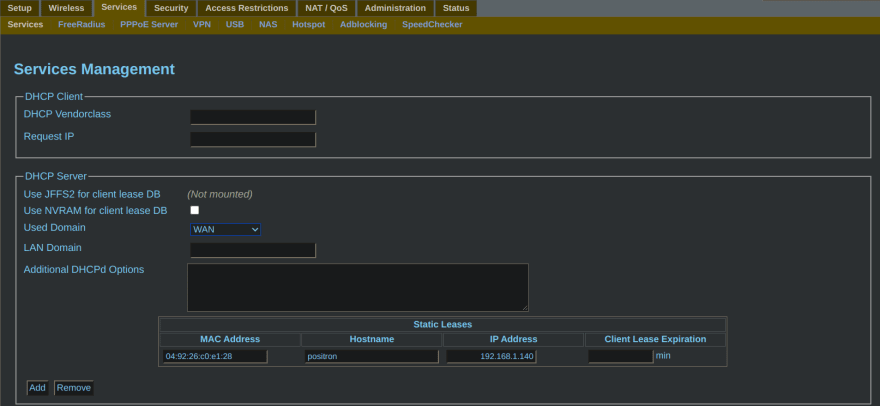

The router also needs to be configured to reserve the server's IP address so that it doesn't change later (after a reboot, for example). My router is running dd-wrt, so I'll use that as an example (refer to your own router's documentation on how to set a non-expiring DHCP lease).

dd-wrt specific steps:

- Navigate to 192.168.1.1 in a browser.

- Click on the "Services" tab.

- Under the "DHCP Server" section, click on "Add" under "Static Leases".

- Enter the server's MAC address, IP address, and hostname (you can get this info from the router's "Status" page or by running ip addr on the server). Leave the "Client Lease Expiration" field blank so that the DHCP lease never expires.
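In the ip addr output, the MAC address is the second field of the link/ether line (04:92:26:c0:e1:28 for enp7s0 above). A small awk sketch to extract it follows, with mac_of as a hypothetical helper name; on Linux, cat /sys/class/net/enp7s0/address prints the same value directly.

```shell
# Print the MAC address of one interface, reading "ip addr" output on stdin.
# Usage (hypothetical helper): ip addr | mac_of enp7s0
mac_of() {
    awk -v ifc="$1" '
        # Header lines look like "2: enp7s0: <...>"; remember the interface name.
        $1 ~ /^[0-9]+:$/ { cur = $2; sub(/:$/, "", cur) }
        # The hardware address is the second field of the "link/ether" line.
        $1 == "link/ether" && cur == ifc { print $2 }'
}
```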

System Upgrade

Upgrade all installed packages on the server using apt:

jaswraith@positron:~$ sudo apt update

Hit:1 http://us.archive.ubuntu.com/ubuntu focal InRelease

...

Reading package lists... Done

Building dependency tree

Reading state information... Done

13 packages can be upgraded. Run 'apt list --upgradable' to see them.

jaswraith@positron:~$ sudo apt upgrade

Reading package lists... Done

Building dependency tree

Reading state information... Done

Calculating upgrade... Done

The following packages will be upgraded:

dirmngr friendly-recovery gnupg gnupg-l10n gnupg-utils gpg gpg-agent gpg-wks-client gpg-wks-server gpgconf gpgsm gpgv open-vm-tools

13 upgraded, 0 newly installed, 0 to remove and 0 not upgraded.

Need to get 3,133 kB of archives.

After this operation, 114 kB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 http://us.archive.ubuntu.com/ubuntu focal-updates/main amd64 gpg-wks-client amd64 2.2.19-3ubuntu2.1 [97.6 kB]

...

- apt update downloads the latest package information from all configured sources on the server. It also informs you how many packages can be upgraded.

- apt upgrade actually upgrades the packages.

Storage Drive Configuration

Time for the fun stuff, let's configure those storage drives!

Check if the drives are recognized by the system with lsblk:

jaswraith@positron:~$ sudo lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

loop0 7:0 0 55.4M 1 loop /snap/core18/1944

loop1 7:1 0 31.1M 1 loop /snap/snapd/10707

loop2 7:2 0 69.9M 1 loop /snap/lxd/19188

sda 8:0 0 7.3T 0 disk

sdb 8:16 0 7.3T 0 disk

nvme0n1 259:0 0 465.8G 0 disk

├─nvme0n1p1 259:1 0 512M 0 part /boot/efi

├─nvme0n1p2 259:2 0 1G 0 part /boot

└─nvme0n1p3 259:3 0 464.3G 0 part

└─ubuntu--vg-ubuntu--lv 253:0 0 200G 0 lvm /

The two storage drives are listed as sda and sdb. NOTE: Drive names can be different depending on the type and number of drives in your system.

Creating a RAID Array

I'm going to run the two storage drives in a RAID 1 array. Essentially, the two drives will be exact copies of one another and act as a single device on the system. If one drive fails, the data is still accessible from the good drive, and the array can be repaired by replacing the bad drive and running a few commands. The downside is that the array can only be as large as the smallest member disk (8TB in my case), so half of the 16TB of raw capacity goes to redundancy.

On Linux, mdadm is the utility used to manage software RAID arrays. Use the following command to create the array md0 from the two disks:

jaswraith@positron:~$ sudo mdadm --create --verbose /dev/md0 --level=1 --raid-devices=2 /dev/sda /dev/sdb

mdadm: Note: this array has metadata at the start and

may not be suitable as a boot device. If you plan to

store '/boot' on this device please ensure that

your boot-loader understands md/v1.x metadata, or use

--metadata=0.90

mdadm: size set to 7813894464K

mdadm: automatically enabling write-intent bitmap on large array

Continue creating array? yes

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

NOTE: Make sure you use the correct drive names and DON'T use the OS drive!
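Since mdadm --create overwrites whatever is on the member disks, a quick sanity check beforehand doesn't hurt. The sketch below (check_unmounted is a hypothetical helper) refuses a disk if it or any of its partitions shows up as a mount source in /proc/mounts. It's only a heuristic: it won't notice disks used solely through LVM, swap, or another array, although on this server the OS drive would still be caught because /boot and /boot/efi are mounted from nvme0n1 partitions.

```shell
# Refuse a disk if it, or one of its partitions, is a mount source.
# Heuristic only: disks used indirectly (LVM, swap, md) are not detected.
# Usage (hypothetical helper):
#   for d in /dev/sda /dev/sdb; do check_unmounted "$d" || exit 1; done
check_unmounted() {
    dev=$1 mounts=${2:-/proc/mounts}
    # Match /dev/sda, /dev/sda1, /dev/nvme0n1p2, ... but not /dev/sdab.
    if awk -v d="$dev" '
        index($1, d) == 1 && substr($1, length(d) + 1) ~ /^(p?[0-9]+)?$/ { hit = 1 }
        END { exit !hit }' "$mounts"
    then
        echo "REFUSING: $dev (or a partition on it) is mounted" >&2
        return 1
    fi
    echo "$dev is not mounted"
}
```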

Check that the array was successfully created:

jaswraith@positron:~$ sudo mdadm -D /dev/md0

/dev/md0:

Version : 1.2

Creation Time : Tue Feb 16 02:51:43 2021

Raid Level : raid1

Array Size : 7813894464 (7451.91 GiB 8001.43 GB)

Used Dev Size : 7813894464 (7451.91 GiB 8001.43 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Intent Bitmap : Internal

Update Time : Tue Feb 16 02:56:33 2021

State : clean, resyncing

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Consistency Policy : bitmap

Resync Status : 0% complete

Name : positron:0 (local to host positron)

UUID : dfe41661:16eb9d7f:ed891288:904746b0

Events : 58

Number Major Minor RaidDevice State

0 8 0 0 active sync /dev/sda

1 8 16 1 active sync /dev/sdb

The resync process is going to take a loooong time. We can track the progress by running watch cat /proc/mdstat:

jaswraith@positron:~$ watch cat /proc/mdstat

Every 2.0s: cat /proc/mdstat positron: Tue Feb 16 03:03:05 2021

Personalities : [linear] [multipath] [raid0] [raid1] [raid6] [raid5] [raid4] [raid10]

md0 : active raid1 sdb[1] sda[0]

7813894464 blocks super 1.2 [2/2] [UU]

[>....................] resync = 1.4% (116907456/7813894464) finish=739.5min speed=173457K/sec

bitmap: 59/59 pages [236KB], 65536KB chunk

unused devices: <none>

740 minutes?! This is going to take over 12 hours!
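The finish= estimate can be turned into hours with a little awk. This sketch (md_progress is a hypothetical helper) assumes the /proc/mdstat progress-line format shown above; it also matches the "recovery =" lines that appear while an array rebuilds after a disk replacement.

```shell
# Summarise resync/recovery progress from /proc/mdstat-style text on stdin.
# Usage (hypothetical helper): md_progress < /proc/mdstat
md_progress() {
    awk '
        /resync =|recovery =/ {
            # Pull "NN.N%" and "finish=NNN.Nmin" out of the progress line.
            if (match($0, /[0-9.]+%/)) pct = substr($0, RSTART, RLENGTH)
            if (match($0, /finish=[0-9.]+min/)) {
                mins = substr($0, RSTART + 7, RLENGTH - 10)  # strip "finish=" and "min"
                printf "%s done, about %.1f hours left\n", pct, mins / 60
            }
        }'
}
```

For the output above it prints 1.4% done, about 12.3 hours left.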

We can tweak some kernel parameters with sysctl to make this go faster:

jaswraith@positron:~$ sudo sysctl -w dev.raid.speed_limit_min=100000

dev.raid.speed_limit_min = 100000

jaswraith@positron:~$ sudo sysctl -w dev.raid.speed_limit_max=500000

dev.raid.speed_limit_max = 500000

Or I guess not... it turns out that the reported speed of ~173,000K/s (about 178MB/s) is already close to the documented read speed of my Seagate drives (190MB/s), so the disks themselves are the bottleneck. Fortunately, we can still write to the md0 device during this process.
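The units explain why the sysctl tweak had no effect. Both the speed= value in /proc/mdstat and the dev.raid.speed_limit_* settings are in KiB/s, while drive spec sheets use decimal MB/s. A minimal conversion sketch (kib_to_mb is a hypothetical helper):

```shell
# Convert a kernel md speed in KiB/s to decimal MB/s (spec-sheet units).
# Usage (hypothetical helper): kib_to_mb 173457
kib_to_mb() {
    awk -v k="$1" 'BEGIN { printf "%.1f\n", k * 1024 / 1000000 }'
}
```

kib_to_mb 173457 gives 177.6, so the resync was already running at roughly 178MB/s against a 190MB/s spec, while the new ceiling of 500000 works out to 512.0MB/s, far above what the drives can deliver.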

Configuring the md0 Array

Use mkfs to format md0:

jaswraith@positron:~$ sudo mkfs.ext4 -F /dev/md0

mke2fs 1.45.5 (07-Jan-2020)

/dev/md0 contains a ext4 file system

created on Tue Feb 16 04:07:25 2021

Creating filesystem with 1953473616 4k blocks and 244187136 inodes

Filesystem UUID: 3e8b5548-14c5-4fac-8e90-98cdbd71123f

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,

4096000, 7962624, 11239424, 20480000, 23887872, 71663616, 78675968,

102400000, 214990848, 512000000, 550731776, 644972544, 1934917632

Allocating group tables: done

Writing inode tables: done

Creating journal (262144 blocks): done

Writing superblocks and filesystem accounting information: done

NOTE: The -F flag is needed since md0 is not a partition on a block device.

I opted to use the classic ext4 filesystem type instead of alternatives such as XFS or ZFS.

In order to use the newly created filesystem, we have to mount it. Create a mountpoint with mkdir and use mount to mount it:

jaswraith@positron:~$ sudo mkdir -p /data

jaswraith@positron:~$ sudo mount /dev/md0 /data

Confirm that the filesystem has been mounted:

jaswraith@positron:~$ df -h /data

Filesystem Size Used Avail Use% Mounted on

/dev/md0 7.3T 93M 6.9T 1% /data
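You may notice that df reports only 6.9T available on a nearly empty 7.3T filesystem. Most of that gap is the ext4 reserved-block pool: by default mke2fs reserves 5% of the blocks for the root user. The sketch below (reserved_tib is a hypothetical helper) estimates the reserve from the block count and block size printed by mkfs.ext4 above. The exact figure appears as "Reserved block count" in the output of sudo tune2fs -l /dev/md0, and tune2fs -m can change the percentage on an existing filesystem.

```shell
# Estimate ext4 root-reserved space in TiB.
# Arguments: block count, block size in bytes, reserve percent (default 5).
# Usage (hypothetical helper): reserved_tib 1953473616 4096
reserved_tib() {
    awk -v n="$1" -v bs="$2" -v pct="${3:-5}" \
        'BEGIN { printf "%.2f\n", n * bs * pct / 100 / (1024 ^ 4) }'
}
```

reserved_tib 1953473616 4096 prints 0.36, i.e. about 0.36TiB held back for root.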

The /etc/mdadm/mdadm.conf file needs to be edited so that the array is automatically assembled at boot. We can append the output of mdadm --detail --scan --verbose to it by piping it through sudo tee -a (a plain >> redirect would be performed by the unprivileged shell and fail):

jaswraith@positron:~$ sudo mdadm --detail --scan --verbose | sudo tee -a /etc/mdadm/mdadm.conf

ARRAY /dev/md0 metadata=1.2 name=positron:0 UUID=dfe41661:16eb9d7f:ed891288:904746b0

Confirm that the md0 array configuration has been added to the end of /etc/mdadm/mdadm.conf:

jaswraith@positron:~$ cat /etc/mdadm/mdadm.conf

# mdadm.conf

#

# !NB! Run update-initramfs -u after updating this file.

# !NB! This will ensure that initramfs has an uptodate copy.

#

# Please refer to mdadm.conf(5) for information about this file.

...

ARRAY /dev/md0 level=raid1 num-devices=2 metadata=1.2 name=positron:0 UUID=dfe41661:16eb9d7f:ed891288:904746b0

devices=/dev/sda,/dev/sdb
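Re-running the tee -a command would add a second ARRAY entry for the same array. Below is a sketch of a duplicate-safe append; append_new_arrays is a hypothetical helper that reads mdadm --detail --scan output (without --verbose, so each array is a single line) and skips arrays whose UUID is already in the file. It needs write access to the config file, so run it from a root shell, and run update-initramfs -u afterwards as usual.

```shell
# Append ARRAY lines read on stdin to an mdadm.conf file, skipping any
# array whose UUID is already recorded there.
# Usage (hypothetical helper): mdadm --detail --scan | append_new_arrays /etc/mdadm/mdadm.conf
append_new_arrays() {
    conf=$1
    while IFS= read -r line; do
        case $line in
            ARRAY*) ;;          # only single-line ARRAY entries are handled
            *) continue ;;
        esac
        # Extract the value of the UUID=... token.
        uuid=$(printf '%s\n' "$line" | sed -n 's/.*UUID=\([0-9a-fA-F:]*\).*/\1/p')
        if [ -n "$uuid" ] && grep -q "UUID=$uuid" "$conf"; then
            echo "array $uuid already in $conf"
        else
            printf '%s\n' "$line" >> "$conf"
        fi
    done
}
```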

We also need to regenerate the initramfs image with our RAID configuration:

jaswraith@positron:~$ sudo update-initramfs -u

update-initramfs: Generating /boot/initrd.img-5.4.0-65-generic

The last step is to add an entry for md0 in /etc/fstab so that the array is automatically mounted after boot:

jaswraith@positron:~$ echo '/dev/md0 /data ext4 defaults,nofail,discard 0 0' | sudo tee -a /etc/fstab

/dev/md0 /data ext4 defaults,nofail,discard 0 0

jaswraith@positron:~$ cat /etc/fstab

# /etc/fstab: static file system information.

...

/dev/md0 /data ext4 defaults,nofail,discard 0 0

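Note that I used the same mountpoint for md0 that was created earlier (/data). The nofail option lets the server finish booting even if the array can't be mounted, and discard only has an effect on devices that support TRIM (SSDs), so it does nothing on these hard drives.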
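Device names like /dev/md0 are not guaranteed to stay the same across reboots, so an fstab entry keyed on the filesystem UUID (3e8b5548-14c5-4fac-8e90-98cdbd71123f, as printed by mkfs.ext4 and also reported by blkid -s UUID -o value /dev/md0) is a common alternative. The sketch below uses a hypothetical add_fstab_entry helper that only appends an entry if the mountpoint isn't already in the file, so an existing /dev/md0 line for /data is left alone. The default options mirror the ones used above.

```shell
# Add an ext4 fstab entry for a mountpoint unless one already exists.
# Arguments: source (UUID=... or /dev/md0), mountpoint, fstab path, options.
# Usage (hypothetical helper, run as root):
#   add_fstab_entry "UUID=$(blkid -s UUID -o value /dev/md0)" /data /etc/fstab
add_fstab_entry() {
    src=$1 mnt=$2 fstab=${3:-/etc/fstab} opts=${4:-defaults,nofail,discard}
    # Field 2 of each non-comment fstab line is the mountpoint.
    if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { hit = 1 } END { exit !hit }' "$fstab"; then
        echo "$mnt already has an fstab entry"
        return 0
    fi
    printf '%s %s ext4 %s 0 0\n' "$src" "$mnt" "$opts" >> "$fstab"
}
```

Running sudo findmnt --verify afterwards checks /etc/fstab for mistakes before the next reboot.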

Conclusion

So we've completed the initial setup of the server and created a RAID 1 array for storage. This server looks ready to run some services! Stay tuned for the next post, where we'll define and run those services using Docker and Ansible.
