Author: Brecht DeRooms

Date: February 6, 2020

Originally posted on the Fauna blog.

New Function-as-a-Service (FaaS) providers are emerging and gaining adoption. How do these newcomers differentiate themselves from the established big players? We will try to answer this question by exploring the capabilities of both the most popular FaaS offerings and the new players: where their focus lies, what their limitations are, which programming languages they support, and how they are priced. The goal is to build a reference for anyone choosing between providers. Since this should be a collaborative effort, feel free to contact us if you would like to see something added.

Before we dive in, let’s briefly discuss the advantages of FaaS over other solutions. Why would you choose to go serverless?

How does FaaS compare to PaaS and CaaS?

Function as a Service (FaaS) vs. Platform as a Service (PaaS)

At the highest level, the choice between PaaS and FaaS is a choice between control and ease of use, and between architectures (monolith versus microservices). A platform such as Heroku is the first choice for many proofs of concept because getting started is easy and its tooling makes a developer’s life easier. Typically, such applications are monoliths that scale by adding nodes that each run another instance of the whole application (in Heroku terms: adding more dynos). An application can go a long way on such a platform without running into performance problems. Still, if one part of your code becomes a bottleneck, the monolithic approach makes scaling harder, because you have to scale the entire application to relieve that one part. Once the load on your application increases, PaaS providers also typically become expensive (1).

FaaS, on the other hand, requires you to break up applications into functions, resulting in a microservices architecture. In a FaaS-based application, scaling happens at the function level, and new function instances are spawned as they are needed. This finer granularity avoids bottlenecks, but it also has a downside: many small components (functions) require orchestration to make them cooperate. Orchestration is the extra configuration work needed to define how functions discover and talk to each other.

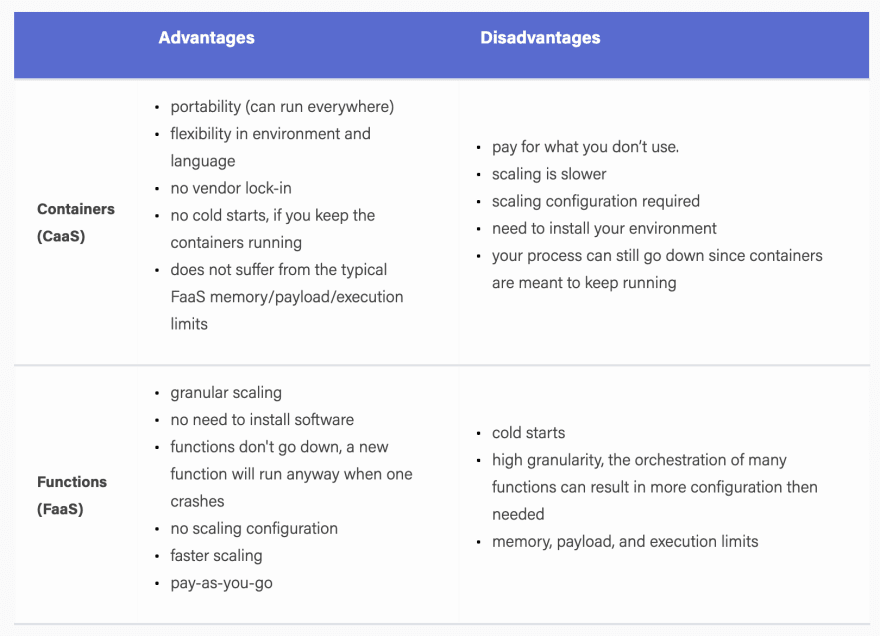

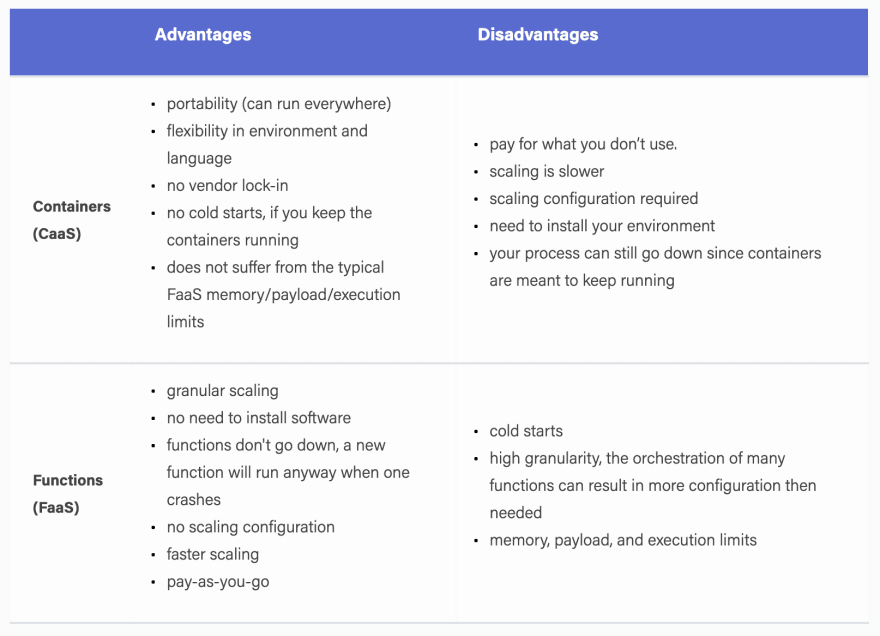

Containers (CaaS) vs Functions (FaaS)

Although containers and functions solve very different problems, they are often compared because the applications built with them can be very similar. Both provide ways to easily deploy a piece of code. With containers, one typically still has to decide how many instances need to run, while functions scale automatically. Typically, at least one container is always running for a given service, waiting until it receives a task, whereas a function only runs on request. Containers start up fairly quickly, but not quickly enough to absorb sudden bursts in traffic. Functions, in contrast, simply scale along with the burst.

In general, containers require much more configuration, especially because scaling rules must be tuned to avoid over- or underprovisioning. In FaaS, provisioning becomes the provider’s concern, while clients get a pay-as-you-go model. Pay-as-you-go is quickly becoming the definition of “serverless” since it abstracts away the last trace of servers from the developer. Of course, the servers that execute your functions still exist, and the provider needs to make sure there are enough of them when a client launches new functions. To keep this manageable, providers typically impose memory, payload, and execution time limits. These limits make it easier for FaaS providers to estimate the load at a given time, and they are the price we pay for running code without caring about servers.

FaaS providers compared

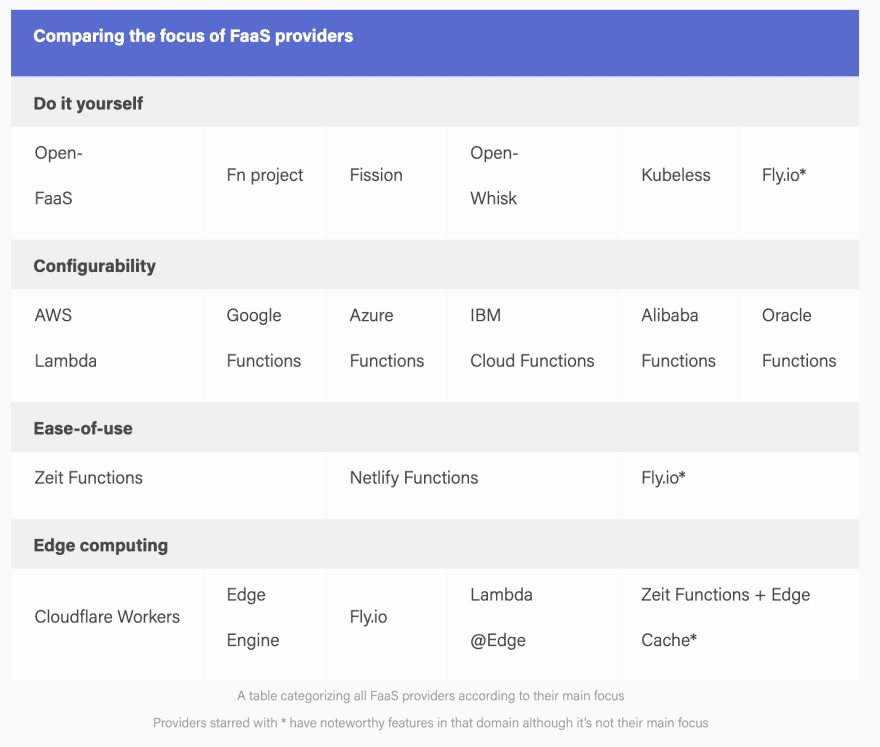

Focus: ease of use vs. configurability vs. edge computing

Of course, people choose a cloud ecosystem for different reasons (e.g., free Azure credits, deployment via CloudFormation, or a specific service like Google Dataflow), and the logical choice is usually the provider you already use. When the ecosystem is not the deciding factor, the focus of a FaaS provider is often what makes a company choose it.

Generality and configurability:

The biggest providers, such as Azure, Google, and AWS, focus on configurability. Their function offerings are often a vital link between the other services in their ecosystems. The trigger mechanism is therefore separated from the function, allowing the function to be activated by many sources, such as database triggers, queue triggers, custom events based on logs, scheduled events, or load balancers. If all you need is a REST API based on functions, this might seem cumbersome. For example, to write an API based on AWS Lambda functions, you need to write the function, deploy it, set up security rules that allow it to be invoked, and configure how it is triggered (e.g., via a load balancer). The sheer number of configuration options can make the documentation seem daunting.
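To make the separation between function and trigger concrete, here is a minimal Python sketch of a Lambda function meant to sit behind API Gateway. `lambda_handler(event, context)` follows Lambda’s Python handler convention, and the returned dictionary uses the response shape of API Gateway’s Lambda proxy integration. The `name` parameter and the greeting are illustrative assumptions. Note what is missing: nothing in the code says how it is invoked; the trigger, permissions, and deployment are all configured separately.

```python
import json


def lambda_handler(event, context):
    """Minimal AWS Lambda handler; how it is triggered is configured outside the code."""
    # API Gateway proxy events put query parameters under this key; it may be
    # missing or None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")  # hypothetical parameter, for illustration

    # Response shape expected by API Gateway's Lambda proxy integration.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
        "isBase64Encoded": False,
    }


if __name__ == "__main__":
    # Local smoke test with a hand-written event; no AWS account is needed.
    print(lambda_handler({"queryStringParameters": {"name": "FaaS"}}, None))
```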

Ease of use and developer experience:

Other providers, such as Netlify and Zeit, respond to that by focusing on a narrower use case and on ease of use. Here, a single command is often enough to turn a function into a REST or GraphQL API that is ready to be consumed. The barrier to entry and the learning curve are greatly reduced because the functions serve a more specific purpose (APIs). Beyond that, both provide an impressive toolchain to easily debug, deploy, and version your functions. For example, both support file system routing: you simply drop a function into a folder and it becomes accessible as an API under the same path, which also works locally. And since the functions live on the same origin as your frontend code, you do not have to deal with CORS issues.
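File system routing can be pictured as a simple mapping from file paths to URL paths. The Python sketch below is a hypothetical model of that idea, not Zeit’s or Netlify’s actual implementation: it assumes functions live under `api/`, that files with the listed extensions are handlers, and that an `index` file serves its parent folder’s path.

```python
from pathlib import PurePosixPath
from typing import Dict, Iterable, Optional

# Assumed for illustration: which files count as function handlers.
HANDLER_SUFFIXES = {".js", ".ts", ".py", ".go"}


def route_for(file_path: str) -> Optional[str]:
    """Map a file under api/ to the URL path it would be served at, or None."""
    path = PurePosixPath(file_path)
    if not path.parts or path.parts[0] != "api" or path.suffix not in HANDLER_SUFFIXES:
        return None  # not a function file
    route = path.with_suffix("")  # api/users/list.py -> api/users/list
    if route.name == "index":     # api/users/index.py -> api/users
        route = route.parent
    return "/" + route.as_posix()


def build_routes(files: Iterable[str]) -> Dict[str, str]:
    """Build a URL path -> source file table, as a local dev server might."""
    routes: Dict[str, str] = {}
    for file_path in files:
        route = route_for(file_path)
        if route is not None:
            routes[route] = file_path
    return routes


if __name__ == "__main__":
    print(build_routes(["api/hello.ts", "api/users/index.py", "public/index.html"]))
```

Because the mapping is derived purely from the folder layout, the same table can be built locally and in production, which is what makes this style of routing feel effortless.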

Their function offerings also integrate tightly with the rest of their products, which are aimed at JAMstack applications and typical Single Page Applications (SPAs). JAMstack sites are largely static sites that make heavy use of serverless offerings to populate their smaller dynamic parts.

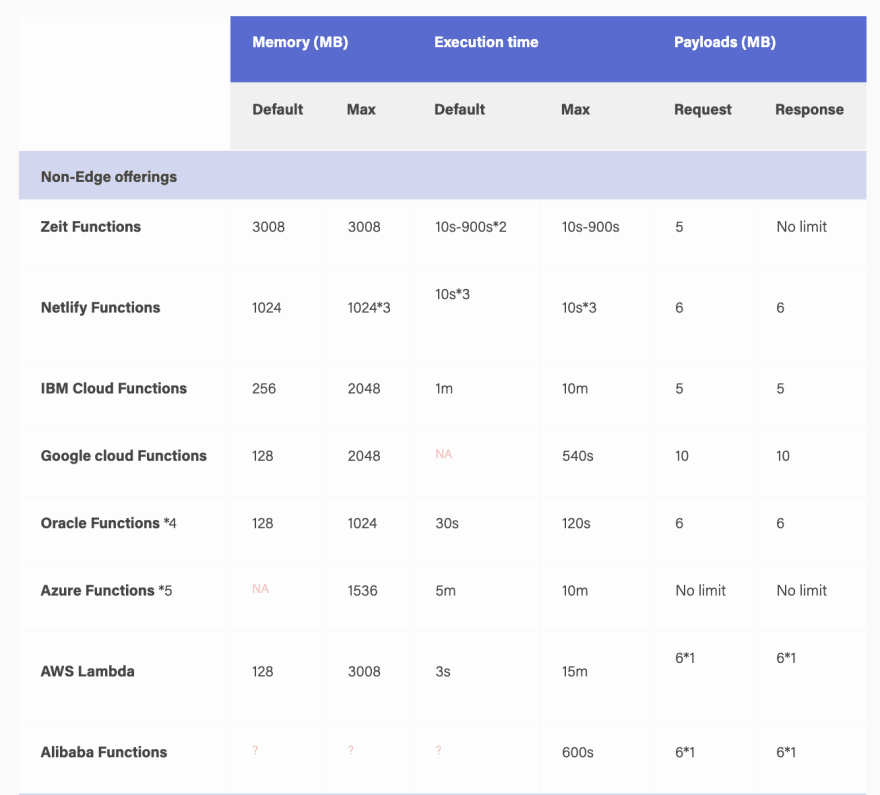

Zeit Functions: Deploy with the Now ecosystem and you have a scalable API under the /api endpoint that can also be simulated locally. When using Functions inside a Next.js app, you get the benefits of Webpack and Babel, and you do not have to deal with response payload limits, since Zeit Functions do not impose them.
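Zeit’s Python runtime for Now, as documented around the time of writing, picks up `.py` files under `api/` that define a class named `handler` extending the standard library’s `BaseHTTPRequestHandler`. A minimal sketch of such a file, assumed here to be `api/time.py` and returning the current UTC time, might look like this; the file name and response body are illustrative.

```python
import json
from datetime import datetime, timezone
from http.server import BaseHTTPRequestHandler


class handler(BaseHTTPRequestHandler):
    """Would live in api/time.py; the runtime looks for a class named `handler`."""

    def do_GET(self):
        # Build a small JSON payload with the current UTC time.
        body = json.dumps({"now": datetime.now(timezone.utc).isoformat()}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)
```

Because it is a plain `BaseHTTPRequestHandler`, the same class can also be exercised locally with `http.server.HTTPServer`, although Zeit’s own CLI is the intended way to simulate the /api endpoint.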

Netlify Functions: Deploy through Netlify’s CI by simply pushing your code to GitHub. Through the integration with their Identity product (similar to Auth0), functions automatically receive information about the logged-in user. Netlify also provides one-click add-ons, such as databases, whose environment variables are injected automatically.

One could say that Netlify and Zeit relate to AWS Lambda, Azure Functions, and Google Cloud Functions the way a PaaS like Heroku relates to AWS: they abstract away the complexities of functions to provide a very smooth developer experience with less setup and overhead. Under the hood, both rely on AWS to run their functions. Their free tiers include not only function invocations but also everything you need to deploy a website, such as hosting, CI builds, and CDN bandwidth.

Edge computing

If low latency is essential and data always needs to be real-time, then Cloudflare Workers, EdgeEngine, Lambda@Edge, or Fly.io are probably the best choices. Their focus is edge computing: bringing functions as close as possible to end users to reduce latency to an absolute minimum. At the time of writing (November 2019), Cloudflare Workers have 194 points of presence, EdgeEngine has 45+ locations, and Fly.io has 16.
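A back-of-the-envelope calculation shows why proximity matters. Light in optical fiber travels at roughly 200,000 km/s (about two thirds of its speed in a vacuum), so distance alone puts a floor under round-trip latency that only moving closer to the user can lower. The Python sketch below computes that floor; the distances are illustrative round numbers, and real latencies are higher because of routing, queuing, and processing.

```python
# Light in optical fiber covers roughly 200,000 km/s (about two thirds of its
# speed in a vacuum), i.e. about 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time; ignores routing, queuing, and processing."""
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Illustrative straight-line distances; real fiber routes are longer.
    for label, km in [("same metro area", 50), ("across a continent", 4000),
                      ("roughly transatlantic", 6000)]:
        print(f"{label}: at least {min_round_trip_ms(km):.1f} ms")
```

A user 6,000 km away can never see a round trip below about 60 ms, whereas a point of presence in the same metro area brings that floor under a millisecond.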

Apart from Lambda@Edge, which runs on Node.js, these providers run functions directly on the V8 engine instead of Node.js, which allows for lower cold start latency than their competitors. Because of that, they initially supported only JavaScript, but all of them have recently added support for WebAssembly, which should (theoretically) allow most languages. Fly.io differentiates itself with a more powerful API and an open-source runtime that lets you build and test your apps locally. EdgeEngine and Cloudflare Workers, however, are probably not meant for very CPU-intensive tasks, since their runtime limits are expressed in CPU time. A function can stay alive for a long time, as long as it does not actively use the CPU for longer than the limit. For example, an idle function that is waiting for the result of a network call is not spending any CPU time.
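The difference between CPU time and wall-clock time is easy to observe locally. This Python sketch (not Workers code, which is JavaScript) times a toy handler that mostly waits; `time.sleep` stands in for a network call, an assumption that keeps the example self-contained. On a platform that limits CPU time, it is roughly the `cpu_s` figure, not `wall_s`, that counts against the budget.

```python
import time


def handle_request(simulated_network_wait_s: float = 0.2) -> dict:
    """Toy handler: waits on a (simulated) network call, then does a little work."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()

    # Stand-in for awaiting an upstream fetch: the process is idle, so it burns no CPU.
    time.sleep(simulated_network_wait_s)

    # A small amount of actual computation, which does consume CPU time.
    checksum = sum(i * i for i in range(100_000))

    return {
        "wall_s": time.perf_counter() - wall_start,
        "cpu_s": time.process_time() - cpu_start,
        "checksum": checksum,
    }


if __name__ == "__main__":
    stats = handle_request()
    print(f"wall clock: {stats['wall_s']:.3f} s, CPU: {stats['cpu_s']:.3f} s")
```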

Notably, Zeit provides a unique Edge Caching system that approximates the serverless edge experience. It caches the responses of your serverless functions for configurable intervals, which gives users fast data access, although the data is not real-time.
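Conceptually, this kind of caching trades freshness for speed. The minimal in-memory Python sketch below models that trade-off with a time-to-live (TTL) cache in front of a slow function; it is a hypothetical illustration of the idea, not how Zeit’s caching layer is implemented. The injectable `clock` parameter is only there to make the behavior easy to test.

```python
import time
from typing import Any, Callable, Optional, Tuple


class TTLCache:
    """Serve a cached result until it is older than ttl_s, then recompute it."""

    def __init__(self, compute: Callable[[], Any], ttl_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.compute = compute
        self.ttl_s = ttl_s
        self.clock = clock
        self._entry: Optional[Tuple[float, Any]] = None  # (timestamp, value)

    def get(self) -> Any:
        now = self.clock()
        if self._entry is None or now - self._entry[0] >= self.ttl_s:
            # Cache miss or expired entry: invoke the (slow) function once.
            self._entry = (now, self.compute())
        # Otherwise the stored value is returned, even if the source has changed.
        return self._entry[1]
```

Within the TTL window, every caller gets the stored value immediately, even if the underlying data has already changed; that is why cached responses are fast but not real-time.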

Do it yourself

Finally, there is a whole new set of offerings that are starting to move away from the serverless aspect of FaaS. Many developers have run into the limits of functions (payload, memory) and then tried to get around them, typically by running Docker containers. Frameworks like OpenFaaS, the Fn Project, Fission, OpenWhisk, and Kubeless aim to let you deploy your own FaaS solution. They try to deliver an experience similar to a true FaaS provider, but it is important to note that this kind of FaaS is far from serverless, since you are once again responsible for managing clusters and scaling. One can argue that deploying Kubeless on a managed Kubernetes service comes very close to serverless, and it would not be surprising if these open-source frameworks motivated other companies to start their own FaaS offerings. In fact, some of them already serve as the basis for commercial services: OpenWhisk powers IBM’s FaaS offering, while a fork of the Fn Project is the technology behind Oracle Functions. Fly.io also deserves a place in this category, since it has released an open-source runtime. The future will certainly be interesting; we expect to see FaaS offerings for every niche.
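The programming model of these frameworks can still be very lightweight. OpenFaaS’s classic `python3` template, for instance, has you implement a single `handle(req)` function in `handler.py` that receives the request body as a string, and the template packages it into a container image. The greeting logic below is our own illustrative assumption; what the sketch cannot show is the part that makes this do-it-yourself: you still run and scale the cluster that executes it.

```python
import json


def handle(req):
    """Handle a request to the function.

    Args:
        req (str): the raw request body, passed in by the template's wrapper.
    """
    # Illustrative logic (an assumption): greet the "name" from a JSON payload.
    try:
        payload = json.loads(req) if req else {}
    except json.JSONDecodeError:
        payload = {}
    name = payload.get("name", "world") if isinstance(payload, dict) else "world"
    return json.dumps({"message": f"Hello, {name}!"})
```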

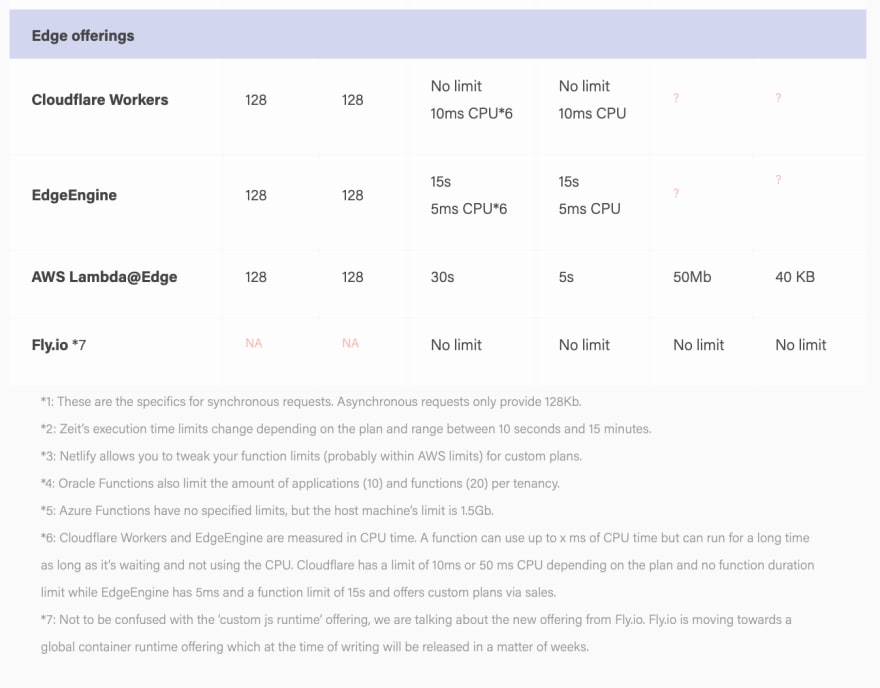

Limits

One of the main pitfalls of Functions as a Service is their limits. Some developers are not aware of them when they get started, or they underestimate how easily an application can run into them over time. The many workarounds found online, in which results are temporarily stored elsewhere (such as in AWS S3), show how often developers hit these limits. Do-it-yourself solutions are left out of this comparison, since they typically allow the limits to be configured extensively. The limits exist for a reason: they keep the load predictable for the provider, which allows for lower latencies and better scaling. Azure is the only provider without payload limits, which might explain why it lags slightly behind performance-wise. Programmers have found several creative workarounds, and some providers came up with their own solutions to improve the developer experience. For example, Zeit builds on AWS Lambda, yet does not have Lambda’s response payload limit.
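The S3 workaround mentioned above usually follows one pattern: if a result is too large to return directly, write it to an object store and return a reference instead. The Python sketch below assumes a configurable size limit and uses a plain dictionary as a hypothetical stand-in for S3; a real version would upload the object and typically hand back a pre-signed URL.

```python
import json
import uuid
from typing import Dict

# Assumed limit for illustration; use your provider's documented value
# (AWS Lambda, for example, caps synchronous response payloads at 6 MB).
MAX_RESPONSE_BYTES = 6 * 1024 * 1024

# Hypothetical stand-in for an object store such as S3.
OBJECT_STORE: Dict[str, bytes] = {}


def respond(result: dict, max_bytes: int = MAX_RESPONSE_BYTES) -> dict:
    """Return the result inline if it fits, otherwise store it and return a reference."""
    body = json.dumps(result).encode("utf-8")
    if len(body) <= max_bytes:
        return {"inline": True, "body": body.decode("utf-8")}
    key = f"results/{uuid.uuid4()}.json"
    OBJECT_STORE[key] = body  # in a real deployment: an upload to the object store
    return {"inline": False, "key": key}  # the caller fetches the payload separately
```

The cost of this workaround is an extra round trip for the caller and an extra service to manage, which is part of why a higher limit is such a convenience.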

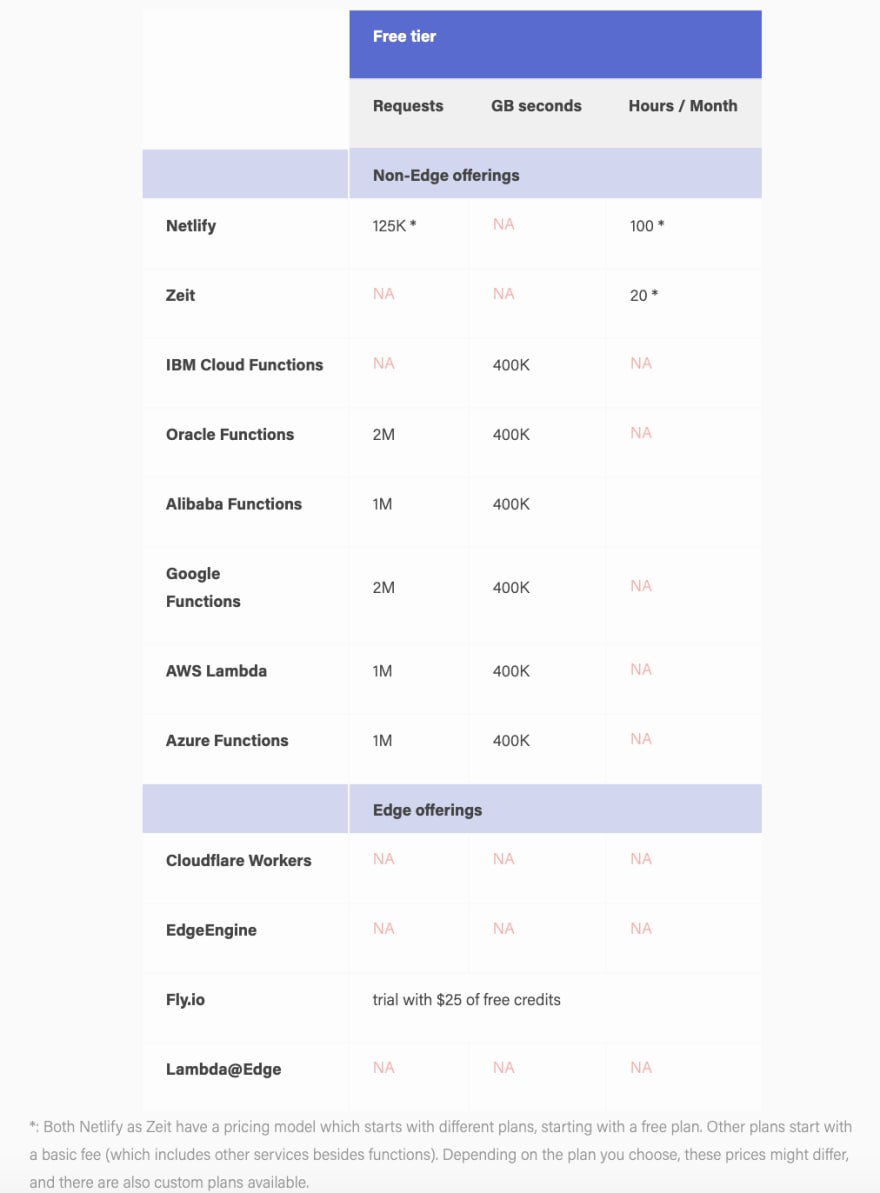

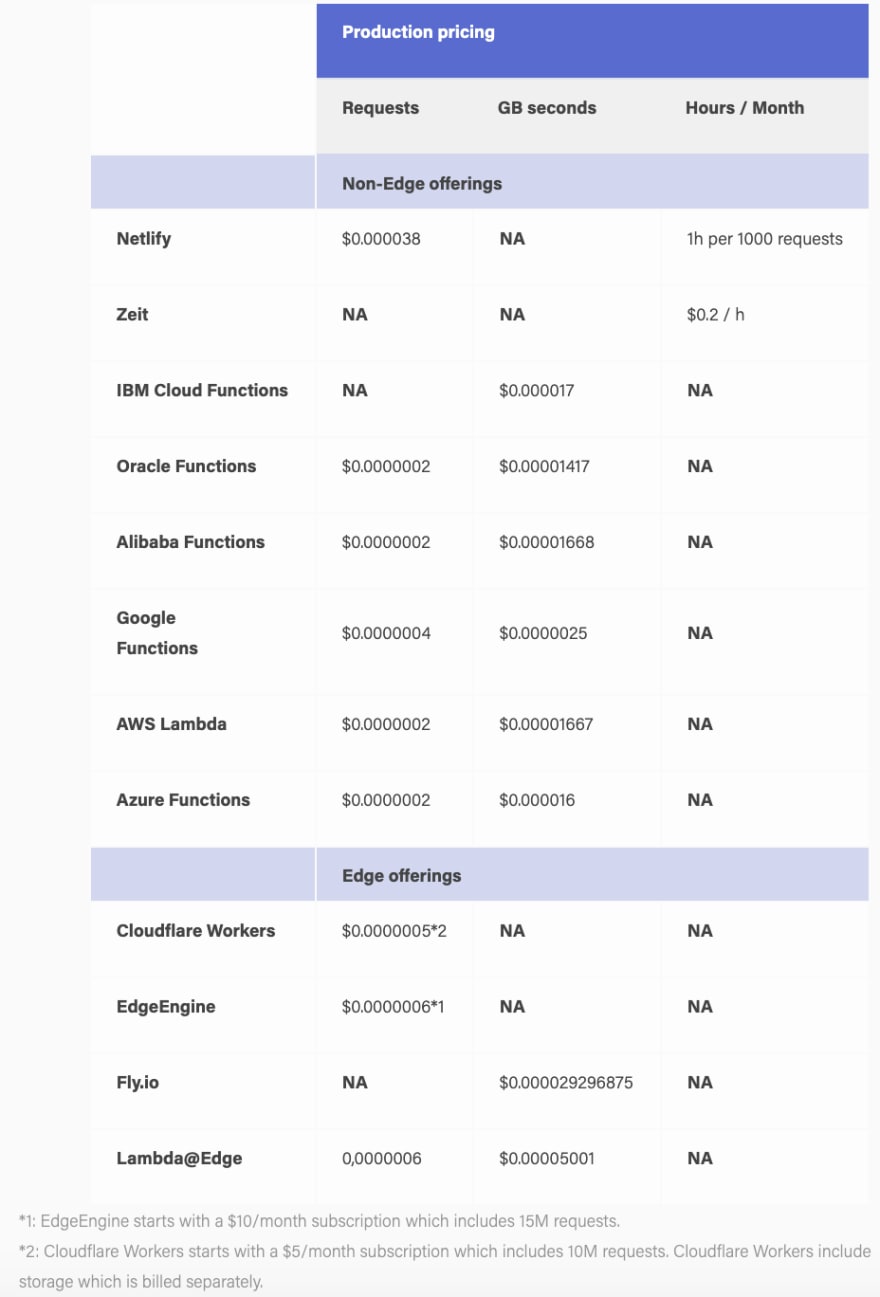

Pricing

The four main FaaS providers (Azure, AWS, Google, IBM) are priced very comparably; only Google comes out more expensive, as can be verified using this online calculator. The new kids on the block are slightly pricier because they do things quite differently. They abstract away a lot of work for you, for example by automatically provisioning load balancers or by making deployment much easier with local development tools, debugging tools, versioning, etc. These providers aim to eliminate DevOps completely, so comparing their prices with those of lower-level FaaS providers is like comparing Heroku’s pricing with AWS’s. Pricing for custom setups is left out, since it depends entirely on your own infrastructure.
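Pay-as-you-go pricing at the big providers is essentially arithmetic on two quantities: the number of requests and the compute consumed, measured in GB-seconds (allocated memory × billed duration). The Python sketch below estimates a bill under AWS Lambda’s model as we understand it from early 2020: about $0.20 per million requests, $0.0000166667 per GB-second, and durations rounded up to 100 ms. Treat these rates as assumptions to replace with current figures; free tiers and data transfer are ignored.

```python
import math

# Assumed rates (AWS Lambda's published on-demand prices around early 2020);
# replace with the provider's current price list before relying on the output.
PRICE_PER_MILLION_REQUESTS = 0.20
PRICE_PER_GB_SECOND = 0.0000166667


def monthly_cost(invocations: int, avg_duration_ms: float, memory_mb: int,
                 billing_increment_ms: int = 100) -> float:
    """Estimate a pay-per-use function bill from requests and GB-seconds."""
    # Duration is rounded up to the billing increment (100 ms on Lambda at the time).
    billed_ms = math.ceil(avg_duration_ms / billing_increment_ms) * billing_increment_ms
    gb_seconds = invocations * (billed_ms / 1000) * (memory_mb / 1024)
    request_cost = invocations / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    compute_cost = gb_seconds * PRICE_PER_GB_SECOND
    return request_cost + compute_cost
```

For example, 3 million invocations of a 512 MB function averaging 150 ms are billed at 200 ms each, i.e. 300,000 GB-seconds, which comes to roughly $5.00 of compute plus $0.60 for requests under these assumed rates.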

Languages

Most engineers prefer to program in a specific language. When moving to FaaS, this is not always possible, since not every language is supported by every provider. Additionally, some languages have significantly longer cold starts than others. In theory, any language is possible when deploying your own FaaS, since these frameworks are built on top of Docker and meant to be extensible. In practice, adding a language yourself can require a lot of work, and even IBM Cloud Functions and Oracle Functions, which build on OpenWhisk and the Fn Project respectively, do not natively support every language. The table linked below gives an overview of the supported languages per service; only the darker green entries can be considered fully supported.

See table here: https://fauna.com/blog/comparison-faas-providers

Performance

Performance is often the difference between an engaged customer and a bored one. FaaS performance is measured in function call latency, which is very hard to compare across providers for three reasons:

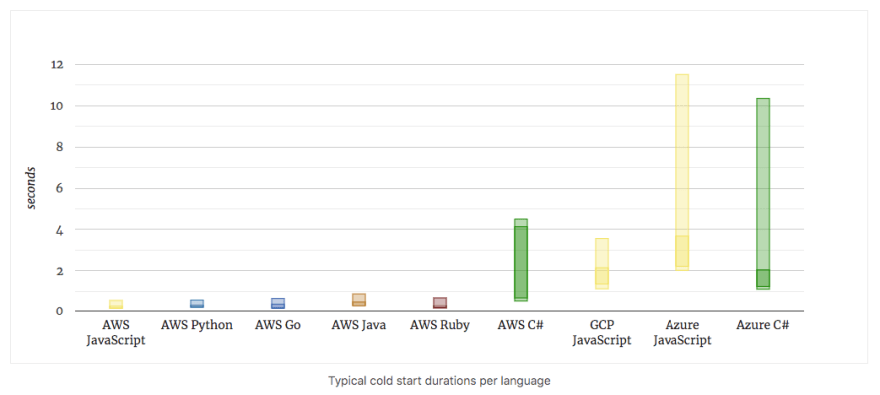

Cold starts: When a function starts for the first time, it will respond slower than usual.

Different idle instance lifetimes: Each provider keeps its functions alive for a different amount of time to mitigate cold starts.

Language and technology-specific: Cold start and execution times depend heavily on the language. The way a language is executed (compiled, interpreted, on a particular runtime, etc.) can have a significant impact on performance. For example, both EdgeEngine and Cloudflare Workers run JavaScript directly on the V8 engine instead of on Node.js, which reportedly decreases cold start latency significantly.
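On Lambda-style platforms, a common way to observe cold starts from inside a function relies on instance reuse: module-level code runs once when a new instance is created, and global variables survive between invocations on a warm instance. The Python sketch below assumes that behavior; the handler name and returned fields are illustrative.

```python
import time

# Module-level code runs once per new instance, i.e. only during a cold start.
_INSTANCE_STARTED_AT = time.time()
_invocation_count = 0


def handler(event, context=None):
    """Report whether this invocation hit a fresh (cold) or reused (warm) instance."""
    global _invocation_count
    _invocation_count += 1
    return {
        "cold_start": _invocation_count == 1,
        "invocations_on_this_instance": _invocation_count,
        "instance_age_s": round(time.time() - _INSTANCE_STARTED_AT, 3),
    }
```

Logging `cold_start` and `instance_age_s` from a function like this is one simple way to see how long a given provider keeps idle instances alive.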

Among the three major providers, AWS is still clearly in the lead (1, 2) in keeping cold starts low across all languages, and it also exhibits the most consistent performance. Azure comes last, with cold starts and overall performance significantly worse than both Google and AWS. Nuweba has created a benchmarking website that compares these providers periodically.

(source: https://mikhail.io/serverless/coldstarts/big3/)

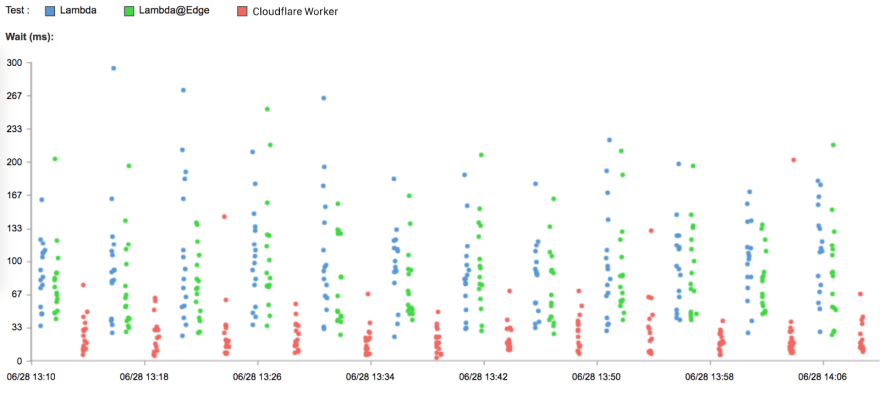

Cloudflare Workers and EdgeEngine both offer lower cold start latency than regular FaaS providers thanks to the way they execute functions. Together with Lambda@Edge, they also aim to reduce the latency perceived by the caller by deploying functions in many locations and executing them as close to the caller as possible. Since Cloudflare Workers currently have the most locations, they can probably provide the lowest average latency of the three edge providers. Cloudflare ran its own comparison between Workers, Lambda, and Lambda@Edge, and its results indicate that Workers are several times faster.

(Source: https://www.cloudflare.com/learning/serverless/serverless-performance/)

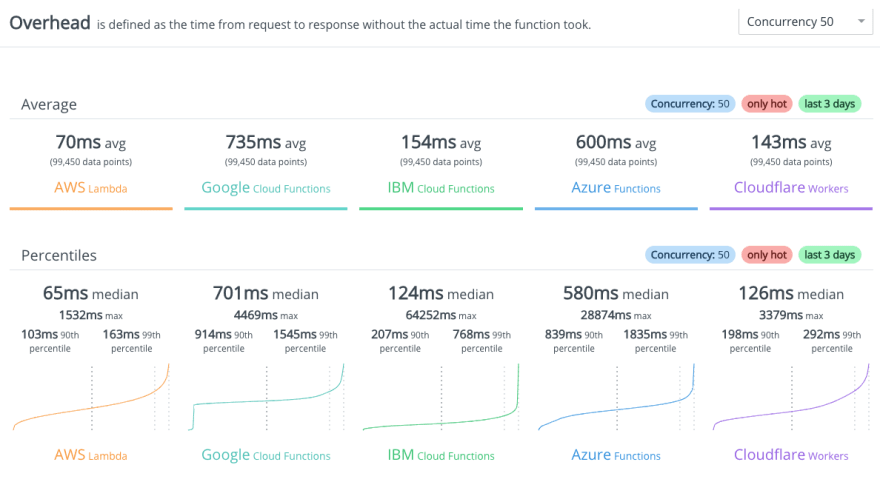

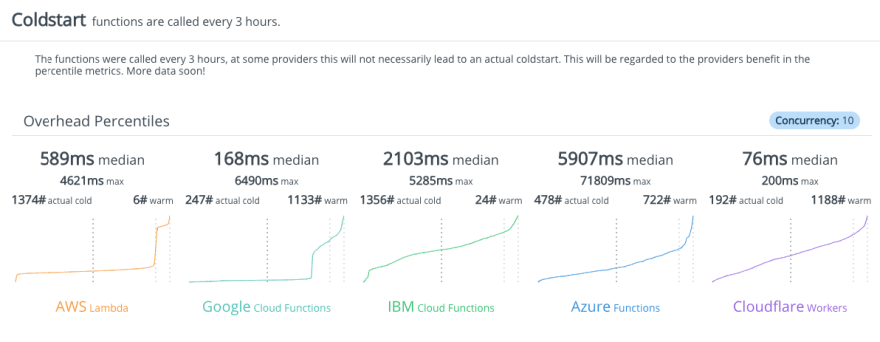

There is also an ongoing independent serverless benchmark project that measures both cold starts and execution time. It aims to extend the benchmarks beyond the main three providers and already covers AWS Lambda, Azure Functions, Google Cloud Functions, IBM Cloud Functions, and Cloudflare Workers. The results are in line with what we expected: AWS Lambda is consistently the fastest for pure processing jobs, yet is beaten on cold starts by Cloudflare. IBM Cloud Functions do very well, but also show very high worst-case latencies. Note that the results are continuously updated and vary strongly from day to day; the images provided here are snapshots.
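Worst-case latencies like these are why benchmarks report percentiles and maximums rather than averages alone. The Python sketch below computes nearest-rank percentiles from a list of measured latencies; the choice of the nearest-rank method (rather than an interpolating one) and of the reported percentiles are our assumptions.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    # Multiply before dividing so integer inputs give an exact rank.
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms: Sequence[float]) -> Dict[str, float]:
    """Summaries that separate typical latency from the tail and the worst case."""
    return {
        "median": percentile(latencies_ms, 50),
        "p95": percentile(latencies_ms, 95),
        "p99": percentile(latencies_ms, 99),
        "worst": max(latencies_ms),
    }
```

A provider can have an excellent median and still a poor p99 or maximum, which is exactly the pattern described for IBM Cloud Functions.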

Independent benchmarks are a great help in determining which functions are best suited for your problem. Providers like Zeit and Netlify that rely on another provider will probably exhibit the same performance as the underlying technology, which in both cases is AWS Lambda. At the time of writing, little work has been done to benchmark the performance of other frameworks.

(Source: https://serverless-benchmark.com/ as of 26 November 2019)


For do-it-yourself FaaS frameworks, keeping cold start latencies low, scaling, and distribution are your responsibility. Different frameworks provide different configuration options for you to work with, but it will probably be extremely difficult to compete with true serverless providers.
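How long idle instances are kept around directly determines how many requests hit a cold start, and in a self-hosted setup that is one of the knobs you own. The Python toy model below assumes a single instance, requests that never overlap (their duration is ignored), and a fixed idle timeout after which the instance is reclaimed; real schedulers are far more complex.

```python
from typing import Sequence


def count_cold_starts(arrivals_s: Sequence[float], idle_timeout_s: float) -> int:
    """Toy model of one instance handling requests one at a time.

    The instance is reclaimed once it has been idle for longer than idle_timeout_s;
    the next request then pays a cold start.
    """
    cold_starts = 0
    last_request = None
    for t in sorted(arrivals_s):
        if last_request is None or t - last_request > idle_timeout_s:
            cold_starts += 1  # no warm instance available: start a new one
        last_request = t
    return cold_starts
```

For requests at 0, 10, 100, and 105 seconds, a 60-second idle timeout yields two cold starts, while a 120-second timeout yields only one, at the cost of keeping an idle instance around longer. Hosted providers make this trade-off for you.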

Overview

The table linked below shows how they all compare at a glance.

See table here: https://fauna.com/blog/comparison-faas-providers

Conclusion

Not long ago, choosing a FaaS provider was a relatively easy task. Today, we are spoiled with diversity, as FaaS providers are springing up like mushrooms. With such a wide range of providers, it becomes harder and harder to keep track of what exists and how the offerings differ. Realizing that some new offerings have an entirely different focus (ease of use, edge, do-it-yourself) already brings you one step closer to choosing the right provider. To make your choice easier, we have researched and compared various providers on focus, limitations, pricing, languages, and performance. We hope this becomes a basis for further discussion, as such a comparison is ideally a collaborative effort.
