I recently found out that A Cloud Guru had launched a cloud challenge about improving the performance of an application. It caught my interest, so I spent half a day trying to solve it. Since I had some prior GCP knowledge, I thought this would be pretty easy. However, I was too naive, and the challenge turned out to be tougher than I expected.

An overview of the challenge

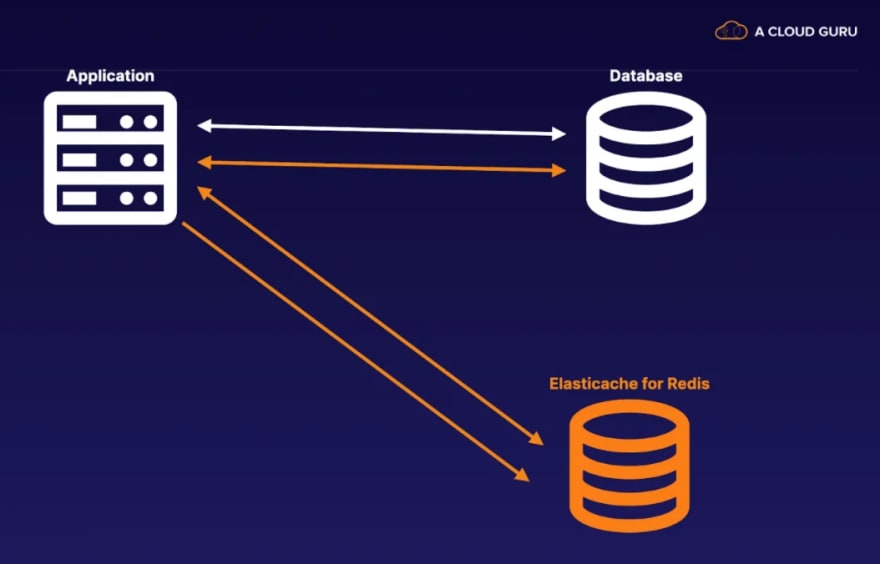

This challenge is about configuring (with a CloudFormation or Terraform template) and deploying an application that fetches data from an RDS PostgreSQL database. To improve the user experience, we can cache certain results in an ElastiCache Redis cluster.

My learnings

Compared with GCP, the virtual instance follows the same concept in both AWS and GCP: it is where we configure and customise our application. RDS Postgres is similar to Cloud SQL, and an ElastiCache Redis cluster is similar to Cloud Memorystore, a GCP product that supports Redis for caching results. However, the infrastructure in AWS is set up a bit differently. For instance, you need to configure a "security group" to allow inbound/outbound traffic, whereas in GCP this is done with firewall rules.

Here are a couple of problems I ran into and how I solved them.

1. Nginx server setup

First, when I ran the Flask application in debug mode, I could only reach it with `curl localhost:5000` to check the data response; I could not open the index page through the public IP of the instance hosting the application. After browsing some threads, I realised I needed a web server such as Nginx or Apache in front of the app to forward traffic to it, so I learnt how to add a server block to Nginx and map it to the actual "root" location.
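Part of the reason the public IP fails is that Flask's built-in development server binds to 127.0.0.1 by default, so it only answers on the loopback interface, which is exactly what `curl localhost:5000` tests. The sketch below reproduces that local check with only the Python standard library: a stand-in WSGI app (hypothetical, not the challenge's Flask app) is served on loopback and fetched locally. A reverse proxy such as Nginx, listening on the public interface, is what relays outside requests to an app bound like this.

```python
import threading
import urllib.request
from wsgiref.simple_server import WSGIRequestHandler, make_server


def app(environ, start_response):
    # Hypothetical stand-in for the Flask index page.
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"index page"]


class QuietHandler(WSGIRequestHandler):
    def log_message(self, format, *args):
        pass  # suppress per-request logging to stderr


def fetch_from_loopback(port=0):
    """Serve `app` on 127.0.0.1 only and fetch it locally, like `curl localhost`."""
    # Binding to 127.0.0.1 means only this machine can connect.
    # port=0 lets the OS pick a free port.
    server = make_server("127.0.0.1", port, app, handler_class=QuietHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        # Ignore any HTTP proxy environment variables for this local request.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        url = f"http://127.0.0.1:{server.server_port}/"
        with opener.open(url, timeout=5) as resp:
            return resp.status, resp.read().decode()
    finally:
        server.shutdown()
        server.server_close()
```

Calling `fetch_from_loopback()` returns the status and body of the local request; the same request sent to the instance's public IP would not reach a server bound this way.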

After setting up the Nginx server, I still couldn't access the app via the public IP: I got a 403 Access Forbidden error, and this was quite tricky! I needed to create/modify the security group to allow inbound access. This is quite different from GCP: normally when I deployed a demo there, I could browse to it immediately, without the additional effort of network/firewall management.
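Opening the port can also be scripted. Below is a minimal sketch using boto3's EC2 client method `authorize_security_group_ingress`; the security group ID, port, and CIDR are assumptions you would replace with your own values (restricting the CIDR to your own IP is safer than `0.0.0.0/0`). The rule-building part is kept separate so it can be checked without AWS credentials.

```python
def http_ingress_permission(port=80, cidr="0.0.0.0/0"):
    """Build one IpPermissions entry allowing inbound TCP on `port` from `cidr`."""
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "IpRanges": [{"CidrIp": cidr, "Description": f"HTTP on port {port}"}],
    }


def allow_http(group_id, port=80, cidr="0.0.0.0/0"):
    """Add the inbound rule to an existing security group (e.g. a hypothetical 'sg-...')."""
    import boto3  # imported lazily so the rule builder above works without boto3

    ec2 = boto3.client("ec2")
    return ec2.authorize_security_group_ingress(
        GroupId=group_id,
        IpPermissions=[http_ingress_permission(port, cidr)],
    )
```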

2. Redis Caching

This is where I learnt how to code the caching logic in Python. The logic is already explained in the requirements: the first time a user hits the website, the application needs to query the database to return results, because the Redis cluster is still empty at that moment. But if the same user accesses the website again, the data is already cached in Redis, so it's a hit! We don't need to query the database again, which improves the performance of the application.
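The flow described above is the cache-aside pattern. Here is a minimal sketch of it; to keep it self-contained, `TTLCache` is a hypothetical in-memory stand-in that mimics only the `get`/`setex` calls of a Redis client (a real redis-py client returns bytes unless configured otherwise), and `load_from_db` stands for whatever function runs the PostgreSQL query.

```python
import time


class TTLCache:
    """Hypothetical in-memory stand-in for a Redis client (get/setex only)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._data = {}

    def get(self, key):
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[key]  # expired entries behave like a miss
            return None
        return value

    def setex(self, key, ttl_seconds, value):
        # Same argument order as redis-py's setex(name, time, value).
        self._data[key] = (value, self._clock() + ttl_seconds)


def get_with_cache(cache, key, load_from_db, ttl_seconds=300):
    """Cache-aside: return cached data on a hit, else query the DB and cache it."""
    cached = cache.get(key)
    if cached is not None:
        return cached, "hit"
    value = load_from_db()  # cache miss: go to the database
    cache.setex(key, ttl_seconds, value)
    return value, "miss"
```

The first call for a key returns `"miss"` and runs the query; repeated calls within the TTL return `"hit"` without touching the database.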

I think we also need to make sure we cache our data properly:

- cache frequently accessed data (also called hot data)

- set a proper expiration time, since some data can change dynamically

- use a proper naming convention for keys
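The three points above can be sketched as small helpers. The colon-delimited key style (`object-type:id`) follows a convention suggested in the Redis documentation; the TTL values and the access-log format are assumptions chosen only for illustration.

```python
from collections import Counter

# Hypothetical TTLs: data that rarely changes can stay cached longer.
TTL_SECONDS = {"static": 3600, "dynamic": 60}


def make_key(*parts):
    """Join parts into a colon-delimited key such as 'challenge:query:42'."""
    text_parts = [str(p) for p in parts]
    if not text_parts or any(not p or ":" in p for p in text_parts):
        raise ValueError("key parts must be non-empty and contain no ':'")
    return ":".join(text_parts)


def ttl_for(kind):
    """Pick an expiration time for a kind of data; unknown kinds get the shortest."""
    return TTL_SECONDS.get(kind, min(TTL_SECONDS.values()))


def hot_keys(access_log, n):
    """Return the n most frequently requested keys: good candidates for caching."""
    return [key for key, _ in Counter(access_log).most_common(n)]
```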

3. Terraform

This has become very popular in GCP as well. Basically, Terraform lets you create and change infrastructure in a templated, collaborative, versioned way. Depending on the provider, the resources and their arguments can be slightly different.

I didn't use it for this challenge, but I think it's a good starting point for managing multi-cloud and hybrid cloud environments with the help of Terraform.

Some other things I noticed:

- when provisioning a free-tier virtual instance, the image is CentOS-based by default, so I had to move from apt-get to yum!

- I had to reinstall Python and pip, as the default environment was not very handy to use. In particular, compiling psycopg2 kept failing until I installed its dependent libraries.

Conclusion

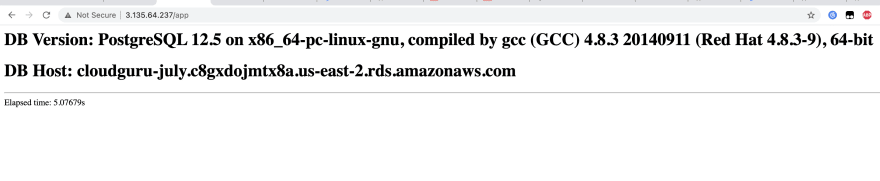

Slow response time (without caching)

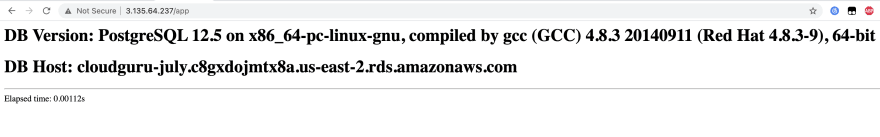

Fast response time (with caching)

As the images above show, it takes about 5 seconds to get results back to the client without caching, but once the result is cached, the response is almost instant. Caching is a huge benefit for this optimization.
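To reproduce the slow-versus-fast comparison locally, here is a small timing sketch. It swaps in `functools.lru_cache` as an in-process stand-in for Redis (unlike Redis, it is not shared between processes or instances), and `slow_query` is a hypothetical function whose `time.sleep` simulates database latency.

```python
import functools
import time


@functools.lru_cache(maxsize=None)
def slow_query(customer_id):
    """Hypothetical slow query; the sleep simulates database latency."""
    time.sleep(0.2)
    return f"results for {customer_id}"


def timed_call(fn, *args):
    """Return (result, elapsed seconds) for a single call."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start
```

Calling `timed_call(slow_query, 7)` twice shows the first call paying the simulated latency and the second returning from the cache almost immediately.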

Last but not least

A big thank you to #acloudguru; this challenge was very helpful, and I look forward to learning more in the coming months.

Thanks for reading!
