This post is about Rivendell, my general-purpose library for the elvish shell.

Elvish is changing rapidly, and I haven't kept up with maintaining Rivendell. Last week, I took the first steps toward fixing that.

Testing seemed an excellent place to start because it would tell me which parts are currently broken and would make maintenance more manageable in the future. I also want to document the library: a single image in the README isn't helpful to new users.

What if I could tackle both of these objectives at once by generating the docs from the tests?

That appears to be an uncommon practice. A Google search reveals a smattering of tools offering this feature [1], but I've never done professional documentation using this method. So sound off if you are quietly generating docs from tests.

It's a practical approach for Rivendell, however. Tests and documentation complement each other, and the test runner I had previously written is suited to this.

- Documentation shows users how to use the software, and tests incidentally cover use-cases by testing invariants.

- Tests should make the documentation more comprehensive with edge-case coverage, something I tend to overlook when writing docs.

- The documentation should help explain the tests. Detailed explanations are another thing I tend to overlook when writing tests.

The result

I think it looks pretty good.

Elvish continues to impress. Armed with documentation-generating tests, I now feel prepared to forge ahead by updating the rest of the project.

How does it work?

Reading the documentation for test.elv is like reading the script for a particular Christopher Nolan movie. But doing that won't reveal the stage crew behind the camera.

At the highest level, I have split testing into three parts.

- Assertions

- Test runner

- Reporters

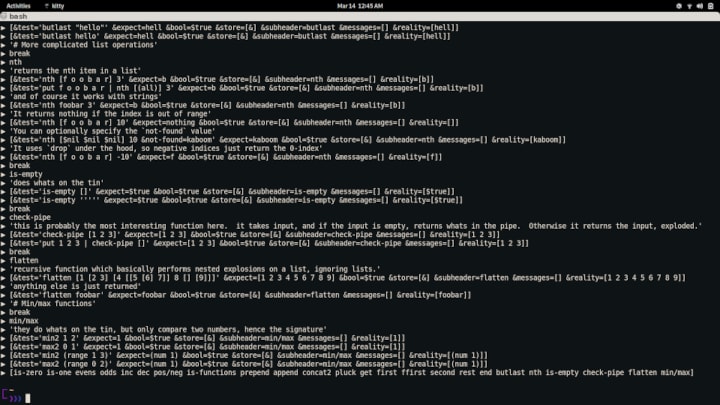

Assertions take a predicate and return a function that takes a test [2]. That function runs the test, checks the result against the predicate, and produces a map.

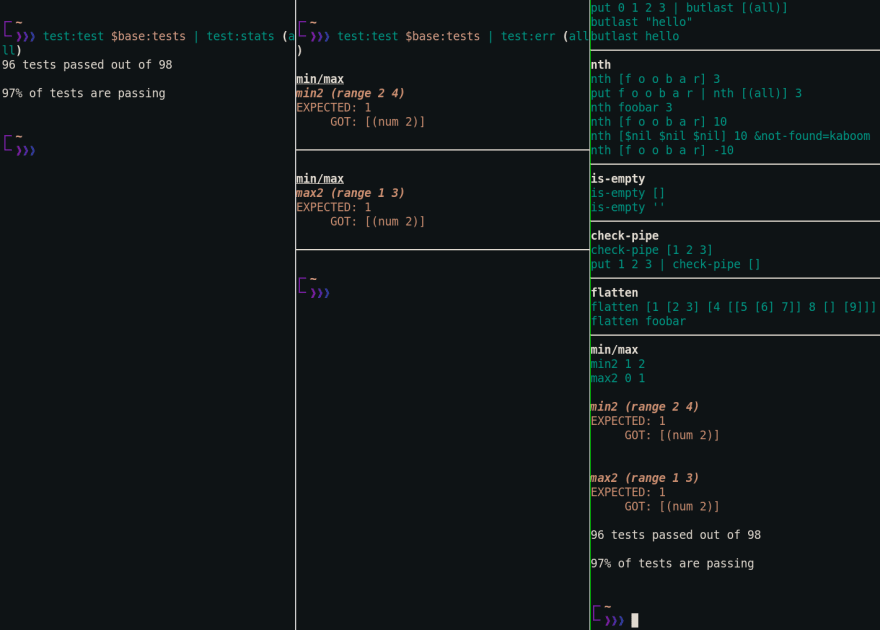
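As a rough sketch of that shape, here it is in Python rather than elvish. The names `assert_that`, `passed`, `value`, and `error` are hypothetical and are not taken from test.elv; only the flow (predicate in, test-taking function out, map as the result) follows the description above.

```python
from typing import Any, Callable, Dict

Result = Dict[str, Any]


def assert_that(predicate: Callable[[Any], bool]) -> Callable[[Callable[[], Any]], Result]:
    """Take a predicate and return a function that takes a test."""

    def run(test: Callable[[], Any]) -> Result:
        # Run the test; a raised exception is recorded as a failure.
        try:
            value = test()
        except Exception as exc:
            return {"passed": False, "value": None, "error": repr(exc)}
        # Check the test's result against the predicate and report a map.
        return {"passed": bool(predicate(value)), "value": value, "error": None}

    return run


is_four = assert_that(lambda v: v == 4)
print(is_four(lambda: 2 + 2))  # {'passed': True, 'value': 4, 'error': None}
print(is_four(lambda: 2 + 3))  # {'passed': False, 'value': 5, 'error': None}
```

Returning a plain map keeps the assertion ignorant of how results get displayed, which is what lets the reporters vary independently.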

The test runner chains these together, one after the other, and spits out the result. At the terminal, this looks like unreadable garbage.
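A minimal Python sketch of such a runner, under the assumption that each test case is a (name, assertion, test) triple. That triple layout, and the names `equals` and `run_tests`, are made up for illustration and do not describe Rivendell's actual input format.

```python
from typing import Any, Callable, Dict, Iterable, List, Tuple

Result = Dict[str, Any]
Assertion = Callable[[Callable[[], Any]], Result]


def equals(expected: Any) -> Assertion:
    """Hypothetical assertion: passes when the test returns `expected`."""

    def run(test: Callable[[], Any]) -> Result:
        value = test()
        return {"passed": value == expected, "value": value}

    return run


def run_tests(cases: Iterable[Tuple[str, Assertion, Callable[[], Any]]]) -> List[Result]:
    """Run each assertion in order and collect the raw result maps."""
    results = []
    for name, assertion, test in cases:
        result = assertion(test)
        result["name"] = name
        results.append(result)
    return results


results = run_tests([
    ("addition", equals(4), lambda: 2 + 2),
    ("upper-case", equals("ABC"), lambda: "abd".upper()),
])
print(results)  # a raw list of maps, not meant for human eyes
```

The runner does no formatting at all, so its printed output is exactly the kind of thing a reporter is needed for.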

Last, a few different reporters turn that garbage into readable text. One shows statistics, another describes failing tests, and another gives general pass/fail information about every test. Choose the one most suitable.

Although not shown, the error reporter can show more info.

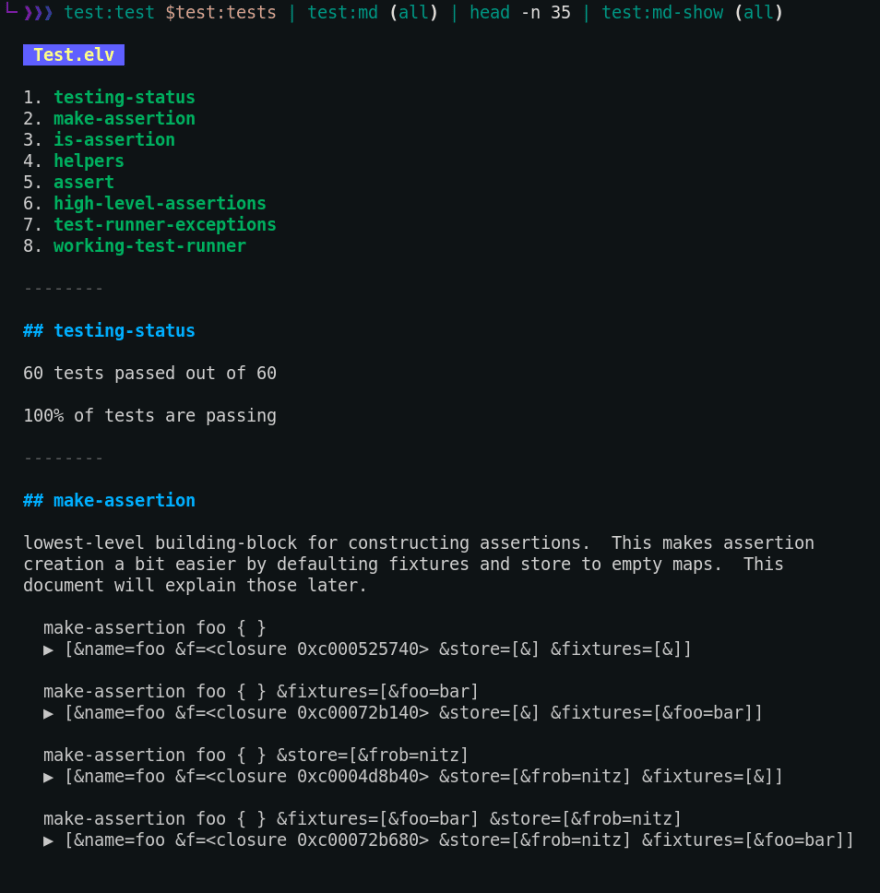
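The three reporters described above could be sketched in Python as plain functions over the runner's result maps. The map keys and the function names here are assumptions for illustration, and the sample results are written by hand rather than produced by a real run.

```python
from typing import Any, Dict, List

Result = Dict[str, Any]

# Hand-written result maps standing in for the test runner's output.
results: List[Result] = [
    {"name": "addition", "passed": True, "value": 4},
    {"name": "upper-case", "passed": False, "value": "ABD"},
]


def stats_reporter(results: List[Result]) -> str:
    """Summarize how many tests passed."""
    passed = sum(1 for r in results if r["passed"])
    return f"{passed}/{len(results)} passed"


def failure_reporter(results: List[Result]) -> List[str]:
    """Describe only the failing tests."""
    return [f"FAIL {r['name']}: got {r['value']!r}" for r in results if not r["passed"]]


def status_reporter(results: List[Result]) -> List[str]:
    """Give pass/fail information about every test."""
    return [f"{'PASS' if r['passed'] else 'FAIL'} {r['name']}" for r in results]


print(stats_reporter(results))    # 1/2 passed
print(failure_reporter(results))  # ["FAIL upper-case: got 'ABD'"]
print(status_reporter(results))   # ['PASS addition', 'FAIL upper-case']
```

Because every reporter reads the same list of maps, adding a new output format means writing one more function and leaving the runner alone.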

There is another reporter which generates markdown. This works because I designed the test runner to take a structured list [3]. Most strings are emitted verbatim, so you can include whatever markdown syntax you want. The reporter turns text at specific positions in that list into headers and subheaders.
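To make the idea concrete, here is a Python sketch of a markdown reporter over a nested list. The structure is invented for this example and is not the one test.elv requires: it assumes the first string of each list becomes a header whose level matches the nesting depth, other strings pass through verbatim, and maps stand for test results.

```python
from typing import Any, List


def to_markdown(node: List[Any], depth: int = 1) -> List[str]:
    """Render a nested list of strings and result maps as markdown lines."""
    lines: List[str] = []
    for index, item in enumerate(node):
        if isinstance(item, list):
            # A nested list is a subsection, one header level deeper.
            lines.extend(to_markdown(item, depth + 1))
        elif isinstance(item, dict):
            # A result map becomes a checked or unchecked list item.
            mark = "x" if item["passed"] else " "
            lines.append(f"- [{mark}] `{item['code']}`")
        elif index == 0:
            # Assumed rule: the first string in a list is its header.
            lines.append(f"{'#' * depth} {item}")
        else:
            # Every other string is emitted verbatim.
            lines.append(item)
    return lines


doc = [
    "Math",
    "Plain strings such as this one are emitted *verbatim*.",
    ["Addition", {"code": "2 + 2 == 4", "passed": True}],
]
print("\n".join(to_markdown(doc)))
```

With this input the sketch prints a `# Math` header, the verbatim sentence, a `## Addition` subheader, and one checked item for the passing test.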

I am re-writing the code using org-mode, so head over to the project if I have glossed over something and suggest improvements. (=

[1] lread/test-doc-blocks is my favorite, and it is used in honeysql. It takes a documentation-first approach, though, whereas I will take a test-first approach.

[2] There are plans for different kinds of assertions.

[3] The doc fills in the details about the structure required.
