#JulyOT is here! We are calling on all makers, students, tinkerers, hardware hackers, and professional IoT developers to bring their creativity to an IoT-focused project during the month of July. We summarized the goals of #JulyOT and how you can get involved in this previous post on dev.to.

Today's #JulyOT post covers some of the updates that you can expect for the week of July 6 - 10. Our theme for this week is "Artificial Intelligence at the Edge", and we have partnered with our friends at NVIDIA to bring you over 8 hours of content that will teach you almost everything you need to know to develop AIoT (Artificial Intelligence of Things) solutions. The week will culminate in a livestream event with developers from NVIDIA on July 10 at 1 PM CST on the Microsoft Developer Twitch Channel.

Throughout the week, we will roll out individual posts across social media, but you can also get ahead of the curve by heading to the official #JulyOT content repository at http://julyot.com.

So, what's in the repository this week and what topics will be covered on the livestream?

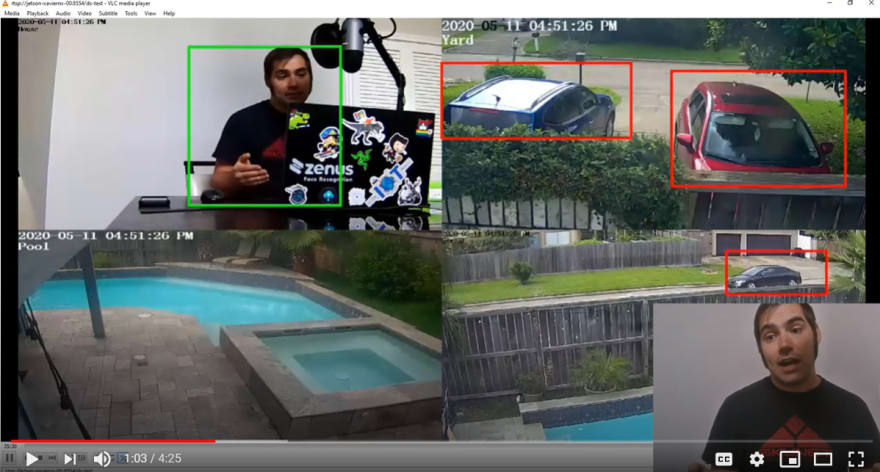

The majority of the content will focus on a five-part video series that was developed to accompany an open source project titled "Intelligent Video Analytics with NVIDIA Jetson and Microsoft Azure". In a nutshell, this project will teach you how to develop a custom end-to-end Intelligent Video Analytics pipeline with multiple video sources, and it includes steps on how to train your own object detection model to detect whatever you fancy! The modules are listed below to give you a quick overview of what you can expect to learn in each section.

- Module 1 - Introduction to NVIDIA DeepStream

- Module 2 - Configure and Deploy "Intelligent Video Analytics" to IoT Edge Runtime on NVIDIA Jetson

- Module 3 - Develop and deploy Custom Object Detection Models with IoT Edge DeepStream SDK Module

- Module 4 - Filtering Telemetry with Azure Stream Analytics at the Edge and Modeling with Azure Time Series Insights

- Module 5 - Visualizing Object Detection Data in Near Real-Time with PowerBI

Each of these modules is accompanied by a livestream that was recorded with @ErikDotDev on Twitch. What makes these recordings interesting is that Erik was brand new to the NVIDIA embedded platform and to applied Artificial Intelligence. We have catalogued his journey in these recordings and hope that it inspires others to follow a similar path! That's right: in ~8 hours of video content, we feel that you too can become an expert in building custom object detection pipelines for use in a wide variety of scenarios.

Now, what better way to cap off this journey than by asking questions live to the NVIDIA developers who have worked to create the platform and tools that make all of this possible! On July 10 at 1 PM CST, we will be joined by a group of developers who will be on hand to answer your questions about anything related to NVIDIA embedded devices, the DeepStream SDK, and the various AI workloads that are supported by their hardware. Be sure to mark your calendars for this event and tune in on the Microsoft Developer Twitch Channel.

If you have any burning questions for the NVIDIA team, please leave them in the comments below. We will review them and just might ask them on the air! Here are a couple of example questions that we plan to cover on the stream:

- During the development of our custom model, the topic of acceleration hardware came up, and we identified GPUs, FPGAs, and ASICs as mechanisms for inference acceleration. These all work on different principles, but we want to hear from NVIDIA: how does a GPU actually accelerate AI workloads?

- We mostly looked at the acceleration of computer vision workloads. What other types of AI inferencing can be done with NVIDIA hardware? We understand that audio workloads can also benefit; do you have any examples of this?

As always, thank you for reading and we hope to see you there on July 10 for the livestream event with NVIDIA developers on the Microsoft Developer Twitch Channel!
