Techniques to scale your Relational Databases - Part 1

Vishnu Chilamakuru ・ May 7 '21 ・ 3 min read

This blog post is a continuation of my previous post, linked above, which covered scaling relational databases using replication. In this post, I will cover three more techniques: federation, sharding, and denormalization.

Federation

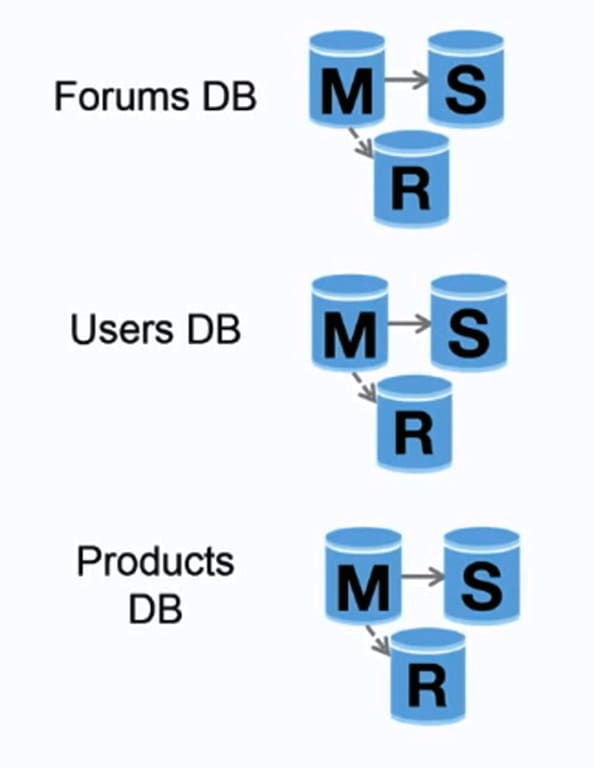

Federation (or functional partitioning) splits up databases by function. For example, instead of a single, monolithic database, you could have three databases: forums, users, and products. Each database then receives less read and write traffic and therefore has less replication lag. With smaller databases, more of each one's data fits in memory, which in turn results in more cache hits due to improved cache locality. With no single central master serializing writes, you can write in parallel, increasing throughput.
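As a rough illustration of what "splitting by function" means for the application, here is a minimal sketch of a lookup that picks a database per table. The connection strings, table names, and the `database_for` helper are all hypothetical placeholders, not part of any real setup.

```python
# Hypothetical connection strings, one per functional database.
DATABASES = {
    "forums": "postgresql://forums-db.internal/forums",
    "users": "postgresql://users-db.internal/users",
    "products": "postgresql://products-db.internal/products",
}

# Hypothetical mapping from table name to the functional area that owns it.
TABLE_OWNERS = {
    "threads": "forums",
    "posts": "forums",
    "accounts": "users",
    "profiles": "users",
    "catalog": "products",
    "prices": "products",
}


def database_for(table: str) -> str:
    """Return the connection string of the database that owns the table."""
    try:
        return DATABASES[TABLE_OWNERS[table]]
    except KeyError:
        raise ValueError(f"no federated database owns table {table!r}") from None
```

Every read and write goes through a lookup like this, which is the application-logic cost listed under the disadvantages.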

Disadvantages:

- Federation is not effective if your schema requires huge functions or tables.

- You'll need to update your application logic to determine which database to read and write.

- Joining data across two databases is more complex and typically requires a server link.

- Federation adds more hardware and additional complexity.
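To make the join disadvantage concrete, here is a small sketch of an application-side join, assuming no server link is available. Two in-memory SQLite databases stand in for separate federated databases; the schema and the `posts_with_authors` helper are made up for the example.

```python
import sqlite3

# Two in-memory SQLite databases stand in for separate federated databases.
users_db = sqlite3.connect(":memory:")
forums_db = sqlite3.connect(":memory:")
users_db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
forums_db.execute(
    "CREATE TABLE posts (id INTEGER PRIMARY KEY, user_id INTEGER, title TEXT)"
)


def posts_with_authors(forums_db, users_db):
    """Join posts to user names in application code instead of in SQL."""
    posts = forums_db.execute(
        "SELECT id, user_id, title FROM posts ORDER BY id"
    ).fetchall()
    user_ids = sorted({user_id for _, user_id, _ in posts})
    if not user_ids:
        return []
    # Second round trip: fetch only the users referenced by the posts.
    placeholders = ",".join("?" * len(user_ids))
    rows = users_db.execute(
        f"SELECT id, name FROM users WHERE id IN ({placeholders})", user_ids
    ).fetchall()
    names = dict(rows)
    # Posts whose author is missing from the users database get None.
    return [(title, names.get(user_id)) for _, user_id, title in posts]
```

What a single database would do with one `JOIN` now takes two queries plus merging code, and nothing guarantees that both databases are read at the same point in time.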

Sharding

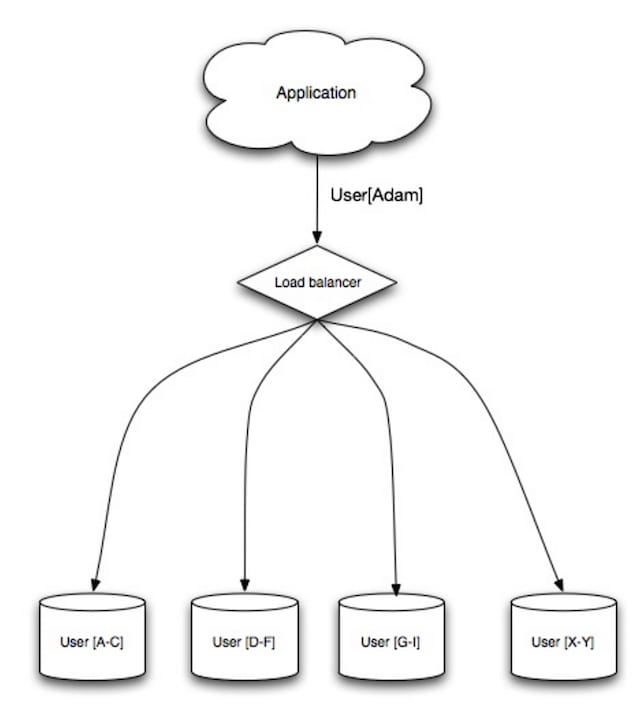

Sharding distributes data across different databases so that each database manages only a subset of the data. Taking a users database as an example, as the number of users increases, more shards are added to the cluster.

Similar to the advantages of federation, sharding results in less read and write traffic, less replication, and more cache hits. Index size is also reduced, which generally improves performance with faster queries. If one shard goes down, the other shards are still operational, although you'll want to add some form of replication to avoid data loss. Like federation, there is no single central master serializing writes, allowing you to write in parallel with increased throughput.

Common ways to shard a table of users are by the user's last name initial or by the user's geographic location.
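Sharding by last name initial could look roughly like the sketch below. The shard names, the letter ranges, and the catch-all shard for initials outside A-Z are assumptions made for the example.

```python
# Hypothetical ranges of last-name initials, one per shard.
SHARD_RANGES = [
    ("A", "H", "users_shard_0"),
    ("I", "P", "users_shard_1"),
    ("Q", "Z", "users_shard_2"),
]
# Assumption: names that are empty or do not start with A-Z go to one shard.
DEFAULT_SHARD = "users_shard_2"


def shard_for_last_name(last_name: str) -> str:
    """Pick a shard from the first letter of the user's last name."""
    initial = last_name.strip()[:1].upper()
    for low, high, shard in SHARD_RANGES:
        if low <= initial <= high:
            return shard
    return DEFAULT_SHARD
```

A scheme like this is easy to reason about, but last names are not evenly spread over the alphabet, so some shards will likely hold more users than others.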

Disadvantages:

- You'll need to update your application logic to work with shards, which could result in complex SQL queries.

- Data distribution can become lopsided in a shard. For example, a set of power users on a shard could result in increased load to that shard compared to others.

- Rebalancing adds additional complexity. A sharding function based on consistent hashing can reduce the amount of transferred data.

- Joining data from multiple shards is more complex.

- Sharding adds more hardware and additional complexity.
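The consistent hashing mentioned in the rebalancing point can be sketched as a hash ring. This is a simplified illustration, not a production implementation: the `ConsistentHashRing` class, the shard names, the choice of SHA-256, and the 100 virtual nodes per shard are all assumptions.

```python
import bisect
import hashlib


class ConsistentHashRing:
    """Maps keys to shards via points on a hash ring (illustrative sketch)."""

    def __init__(self, shards, vnodes=100):
        self._vnodes = vnodes  # virtual nodes per shard, to even out the spread
        self._ring = []        # sorted list of (point, shard) tuples
        for shard in shards:
            self.add_shard(shard)

    @staticmethod
    def _point(value: str) -> int:
        # SHA-256 is used only for its stable, well-spread output.
        return int(hashlib.sha256(value.encode("utf-8")).hexdigest(), 16)

    def add_shard(self, shard: str) -> None:
        for i in range(self._vnodes):
            bisect.insort(self._ring, (self._point(f"{shard}#{i}"), shard))

    def shard_for(self, key: str) -> str:
        if not self._ring:
            raise ValueError("ring has no shards")
        # First ring entry at or after the key's point, wrapping at the end.
        index = bisect.bisect_left(self._ring, (self._point(key),))
        return self._ring[index % len(self._ring)][1]


ring = ConsistentHashRing(["users_shard_0", "users_shard_1", "users_shard_2"])
keys = [f"user-{n}" for n in range(1000)]
before = {key: ring.shard_for(key) for key in keys}

# Add a fourth shard and see which keys now map somewhere else.
ring.add_shard("users_shard_3")
after = {key: ring.shard_for(key) for key in keys}
moved = [key for key in keys if before[key] != after[key]]
```

Every key in `moved` lands on the new shard, and the rest stay where they were. With a plain `hash(key) % shard_count` scheme, by contrast, changing the shard count remaps most keys.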

Denormalization

Denormalization attempts to improve read performance at the expense of some write performance. Redundant copies of the data are written in multiple tables to avoid expensive joins. Some RDBMS such as PostgreSQL and Oracle support materialized views which handle the work of storing redundant information and keeping redundant copies consistent.
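Here is a small sketch of that trade-off, using an in-memory SQLite database. The schema and helper functions are hypothetical: `posts.author_name` is a redundant copy of `users.name`, so listing posts needs no join, but renaming a user has to update both tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    -- author_name duplicates users.name: this is the denormalized column.
    CREATE TABLE posts (
        id INTEGER PRIMARY KEY,
        user_id INTEGER NOT NULL REFERENCES users(id),
        title TEXT NOT NULL,
        author_name TEXT NOT NULL
    );
""")


def create_post(conn, user_id, title):
    """Write path: copy the author's current name into the post row."""
    (name,) = conn.execute(
        "SELECT name FROM users WHERE id = ?", (user_id,)
    ).fetchone()
    conn.execute(
        "INSERT INTO posts (user_id, title, author_name) VALUES (?, ?, ?)",
        (user_id, title, name),
    )


def rename_user(conn, user_id, new_name):
    """Write path: both copies of the name change in one transaction."""
    with conn:
        conn.execute("UPDATE users SET name = ? WHERE id = ?", (new_name, user_id))
        conn.execute(
            "UPDATE posts SET author_name = ? WHERE user_id = ?",
            (new_name, user_id),
        )


def list_posts(conn):
    """Read path: a single-table query, no join against users."""
    return conn.execute(
        "SELECT title, author_name FROM posts ORDER BY id"
    ).fetchall()
```

The read gets cheaper, while every write that touches a user's name now does extra work, which is the cost described in the disadvantages below.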

Once data becomes distributed with techniques such as federation and sharding, managing joins across data centers further increases complexity. Denormalization might circumvent the need for such complex joins.

In most systems, reads can heavily outnumber writes 100:1 or even 1000:1. A read resulting in a complex database join can be very expensive, spending a significant amount of time on disk operations.

Disadvantages:

- Data is duplicated.

- Constraints can help redundant copies of information stay in sync, which increases complexity of the database design.

- A denormalized database under heavy write load might perform worse than its normalized counterpart.


In the upcoming articles, I will discuss more about scaling techniques for RDBMS using SQL tuning.

Thank you for reading

Hope you find these resources useful. If you like what you read and want to see more about system design, microservices, and other technology-related stuff... You can follow me on

- Twitter here.

- Subscribe to my Newsletter here.
