Remark: Ray Tracing in One Weekend is an open-source book written by Peter Shirley, one of the most renowned figures in computer graphics. In this article, I refer to the book as “rtweekend”.

Why did I choose rtweekend?

As a C++ newbie who had only skimmed the basics of the language (I used it in a few university courses, but I think any tutorial out there would cover the same ground), I found it really hard to move on to the next step. Many people recommend working on actual projects, such as Build-Your-Own-X or a simple game, but none of these suited me.

- I tried Build Your Own Redis, but almost all of the code felt like C rather than C++.

- Making a game could be a good start, but I was short on time, so I couldn’t choose this option. Besides, making games nowadays often requires using either Unity or UE.

But I have always admired the Computer Graphics field, and most of the CG-related programs are written in C++. Thus, it was natural for me to have a look at making a simple rendering engine. So I decided to read rtweekend.

Beginning: Reading the book

So far I had only read C++ code written for students, which means I didn’t have much experience with real-life C++ code that runs end to end. Of course, the code in rtweekend may not be production code, but I thought it was good enough for practicing C++, because:

- It starts with no boilerplate code, so you can write a ray tracing engine from scratch that actually runs (and also generates cool images).

- It uses OOP C++ features such as inheritance and method overriding. There are also a few C++11 features used in the book, such as shared_ptr.

- It builds the engine step by step with easy and self-contained explanations. Therefore, you don’t have to spend much of your energy on understanding the computer graphics concepts.

So I just followed the book and typed the code. As someone without much experience in C++, I found it quite pleasant to meet these important concepts in real code: OOP, pointers, references, methods, and overloading, to name a few.
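
To make these concepts concrete, here is a minimal sketch of how inheritance, method overriding, and shared_ptr fit together. It is a simplified illustration, not the book’s code: the book’s hittable interface also takes a parameter range and fills in a hit record, while this version only reports whether the line through a ray touches a sphere. The helper count_hits is a hypothetical name.

```cpp
#include <cassert>
#include <memory>
#include <vector>

// Simplified stand-ins for the book's vec3 and ray classes.
struct vec3 { double x, y, z; };
inline vec3 operator-(const vec3& a, const vec3& b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline double dot(const vec3& a, const vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

struct ray { vec3 origin; vec3 direction; };

// Abstract base class: every object in the scene can be asked about a hit.
class hittable {
public:
    virtual ~hittable() = default;
    virtual bool hit(const ray& r) const = 0;
};

class sphere : public hittable {
public:
    sphere(vec3 center, double radius) : center_(center), radius_(radius) {
        assert(radius > 0.0);
    }

    // Overrides the pure virtual method. Solves |o + t*d - c|^2 = r^2 for t;
    // a real root exists when the discriminant is non-negative. Note that this
    // tests the whole line through the ray, with no restriction on t.
    bool hit(const ray& r) const override {
        const vec3 oc = r.origin - center_;
        const double a = dot(r.direction, r.direction);
        const double half_b = dot(oc, r.direction);
        const double c = dot(oc, oc) - radius_ * radius_;
        return half_b * half_b - a * c >= 0.0;
    }

private:
    vec3 center_;
    double radius_;
};

// Hypothetical helper: the world owns its objects through shared_ptr and
// calls hit() through the base-class interface.
int count_hits(const std::vector<std::shared_ptr<hittable>>& world, const ray& r) {
    int hits = 0;
    for (const auto& object : world) {
        if (object->hit(r)) ++hits;
    }
    return hits;
}
```

The caller never needs to know the concrete type: adding a new shape only means deriving another class from hittable and pushing a shared_ptr to it into the vector.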

However, there are some parts that I thought I could improve:

- The book doesn’t explicitly mention the directory structure of the project, so it would be great to separate the files to get a more readable and organized project structure.

- Many of the parameters are hard-coded, so we want to provide a settings file (JSON or YAML) to the program instead.

So after finishing rtweekend and the second book in the series, Ray Tracing: The Next Week, I started to reorganize the project, as the remaining parts of the article explain.

Further: Reorganizing the project

As explained above, I tried reorganizing the project in the following order:

- Restructuring the files so that we have a more organized code base

- Creating a JSON file for parameter settings, and passing it to the program

Improving the file structure

If you have a look at the book (even a brief glance), you’ll notice that all the features are implemented in header files. I think the author intended this to keep the build process simple, since we only need to give a single main.cpp file to the compiler.

However, as the number of features grows, the project gets bigger and it becomes hard to figure out what is doing what. We want to separate the files according to their purposes so that the project becomes more organized and manageable.

There are two ways to split the codebase. We can add more headers that work like routers, each leading the main function in main.cpp to a collection of header files with a specific purpose, or we can add .cpp files to each subdirectory. The latter approach is preferable in principle, because each subdirectory can then be compiled as an individual library, and the compiler doesn’t have to recompile unchanged source code every time another file changes. However, given the amount of work needed to change the code, I simply followed the first approach.

Currently our project is like this:

.

├── README.md

├── aabb.h

├── aarect.h

├── box.h

├── build.sh

├── bvh.h

├── camera.h

├── color.h

├── constant_medium.h

├── earthmap.jpeg

├── external

│ └── stb_image.h

├── hittable.h

├── hittable_list.h

├── image.ppm

├── main

├── main.cpp

├── material.h

├── moving_sphere.h

├── perlin.h

├── ray.h

├── rtw_stb_image.h

├── rtweekend.h

├── sphere.h

├── texture.h

└── vec3.h

I want to group the header files into subdirectories according to their purpose, like this:

.

├── CMakeLists.txt

├── README.md

├── assets

│ └── earthmap.jpeg

├── build.sh

├── build_test.sh

├── config.json

├── external

│ └── stb_image.h

├── image.ppm

├── main

├── main.cpp

├── ray_tracer

│ ├── camera

│ │ └── camera.h

│ ├── common

│ │ ├── color.h

│ │ ├── common.h

│ │ ├── ray.h

│ │ └── vec3.h

│ ├── object

│ │ ├── core

│ │ │ ├── aabb.h

│ │ │ ├── aarect.h

│ │ │ ├── bvh.h

│ │ │ ├── core.h

│ │ │ ├── hittable.h

│ │ │ └── hittable_list.h

│ │ ├── material

│ │ │ ├── material.h

│ │ │ ├── perlin.h

│ │ │ └── texture.h

│ │ ├── object.h

│ │ ├── primitives

│ │ │ ├── box.h

│ │ │ ├── moving_sphere.h

│ │ │ ├── primitives.h

│ │ │ └── sphere.h

│ │ └── volumes

│ │   ├── constant_medium.h

│ │   └── volumes.h

│ ├── scene.h

│ └── utils

│   ├── json_parser.h

│   ├── rtw_stb_image.h

│   └── utils.h

├── test.cpp

└── tests

  └── utils

    └── json_parser_test.cpp

During this refactoring, I renamed rtweekend.h to utils.h and removed all the includes that it doesn’t use. This header acted as the hub of all dependencies, but its name doesn’t reveal its purpose, and we also don’t want any indirect dependencies.

I won’t explain what each of these directories means, since it should be clear from their names. The only thing that I want to mention is the newly created scene.h. This file separates the scene creation logic (building the hittable_list objects) from main.cpp, which grew much larger than expected as the book kept adding new scenes.

Making a parameter setting file and handing it over to the code

As the book progresses, more example scenes are added to the main function, each with different configuration parameters. Since those parameters are hard-coded in the book, we want to store them separately in fixture files for now (in the future we may add a dynamic GUI configuration tool built with something like PyQt).

Therefore, I made a fixture file in JSON and passed it as an input to my own JSON parser. The file main.cpp is now responsible for parsing the JSON fixture and passing the result to the scene object.
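
As a rough illustration of this hand-off, the sketch below shows one way parsed values could be turned into a typed configuration. Everything here is an assumption for the sake of the example: render_config, config_from_values, and image_height are hypothetical names, the keys are hypothetical JSON keys named after parameters that the book hard-codes, and the std::map stands in for whatever the JSON parser actually returns.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical settings struct; the defaults play the role of the values
// that would otherwise be hard-coded in main.cpp.
struct render_config {
    double aspect_ratio = 16.0 / 9.0;
    int image_width = 400;
    int samples_per_pixel = 100;
    int max_depth = 50;
};

// Builds a config from parsed key/value pairs (assumed to be a flat map of
// numbers). Keys that are missing keep their default value.
render_config config_from_values(const std::map<std::string, double>& values) {
    render_config config;
    auto read = [&values](const std::string& key, double fallback) {
        const auto it = values.find(key);
        return it == values.end() ? fallback : it->second;
    };
    config.aspect_ratio = read("aspect_ratio", config.aspect_ratio);
    config.image_width = static_cast<int>(read("image_width", config.image_width));
    config.samples_per_pixel = static_cast<int>(read("samples_per_pixel", config.samples_per_pixel));
    config.max_depth = static_cast<int>(read("max_depth", config.max_depth));

    // Reject values that would make rendering meaningless.
    assert(config.aspect_ratio > 0.0);
    assert(config.image_width > 0 && config.samples_per_pixel > 0 && config.max_depth > 0);
    return config;
}

// The image height is derived from the width and the aspect ratio.
int image_height(const render_config& config) {
    return static_cast<int>(config.image_width / config.aspect_ratio);
}
```

Keeping the defaults in the struct means a fixture file only has to list the parameters that differ from scene to scene.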

Advanced: Equipping the project with productivity tools

Beyond the reorganization, I wanted to apply some tools so that the project looks more like a real production project. Hence, I used the following tools:

- A formatter and a linter

- A testing library

- A build tool

Although I didn’t try hard to utilize them fully, I wanted to try them out so that I could become more familiar with the tools and the C++ ecosystem.

Formatter and Linter: clang-format & clang-tidy

The most commonly recommended formatter and linter for C++ are clang-format and clang-tidy. Although there seem to be ways to use these two tools with simple settings through the VS Code C++ extensions, for some reason they didn’t work on my Intel x86-64 macOS machine, so I had to install them manually through LLVM. But I like this approach anyway, since it keeps us independent of the choice of IDE.

For the config files, I just copied and pasted the ones maintained by the PyTorch team. For now, I thought it would be better to follow an established practice in order to minimize my workload.

Test: GoogleTest

For unit tests, I used the GoogleTest framework. Its actual use is briefly explained in another article of mine about writing my own JSON parser, so here I only mention what I used for testing my code. Currently there is only a single test file, which covers the JSON parser I built myself.

Build: CMake

Since we only have one .cpp file (main.cpp), there is not much to do in the build process for this project. However, since this is a learning exercise, I thought it would still be good practice to include CMake, even in its simplest form.

cmake_minimum_required(VERSION 3.24)

project(rtweekend)

# Standard: C++17

set(CMAKE_CXX_STANDARD 17)

set(CMAKE_CXX_STANDARD_REQUIRED ON)

add_executable(rtweekend main.cpp)

target_include_directories(rtweekend PUBLIC "${PROJECT_SOURCE_DIR}/ray_tracer/utils")

target_include_directories(rtweekend PUBLIC "${PROJECT_SOURCE_DIR}/ray_tracer")

TODOs in the Future

Of course, we still have a long way to go. There are many things I want to do to improve this project, and here are a few of them:

- Finish the last book: If I have more free time, I want to finish “The Rest of Your Life”.

- Make my own supplementary notes: There are several parts that the author doesn’t really explain much, especially some difficult mathematical equations. I want to make my own notes for them so that I can understand the contents of the book more completely.

- Add more sophisticated algorithms: There are a few algorithms I want to try, such as the Metropolis-Hastings algorithm for efficient path tracing.

- Add more unit tests: Currently the ray tracer itself has no unit tests (only the JSON parser I made myself has them), so we need to add unit tests for the basic functions introduced in the book.

- Construct a CI/CD pipeline: If I develop this into a more shareable, open-source style project, I would like to seriously consider setting up a CI/CD pipeline for it.

- Apply parallel processing for performance: This is the one I really tried hard to implement, without much success. I would like to get it working so that the rendering time can be significantly reduced.

Remark: a word on the parallel processing in C++ on macOS

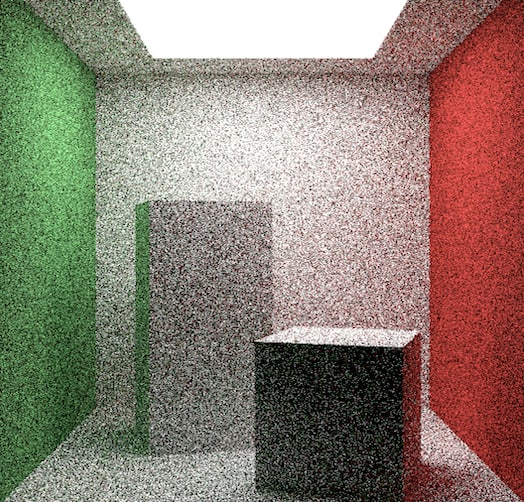

Even a very simple scene currently takes a considerable amount of time to render. For example, let’s render a Cornell box and measure the rendering time:

// main.cpp

// render

const auto start = std::chrono::steady_clock::now();

current_scene.render(cornell_box);

const auto end = std::chrono::steady_clock::now();

const std::chrono::duration<double> elapsed_seconds = end - start;

std::cerr << "Rendering the scene takes " << elapsed_seconds.count() << " seconds" << std::endl;

On my old MacBook Pro 2017 (Dual-Core Intel Core i5 with 8GB RAM), the result was:

Rendering the scene takes 57.6797 seconds

To improve the performance of our renderer, we would like to render the scene in parallel, using all of the CPU cores.

Frankly speaking, I tried various ways to use OpenMP and other parallel processing tools with C++ on macOS. However, I couldn’t find an easy way to use such APIs, apparently because of some issues with Apple’s Clang compiler. I will keep looking for ways to address this in the future, but for now, I would like to leave this problem as an open question.
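
One route that may sidestep the OpenMP setup is std::thread, which is part of the C++11 standard library. The following is a minimal sketch under stated assumptions, not this project’s code: render_rows_parallel and shade_row are hypothetical names, and the sketch assumes that every scanline can be computed independently and written into its own part of a preallocated buffer.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>
#include <thread>
#include <vector>

// Calls shade_row(y) exactly once for every row y in [0, image_height),
// spreading the rows across thread_count worker threads.
// shade_row must only write to data that belongs to row y.
void render_rows_parallel(int image_height, unsigned thread_count,
                          const std::function<void(int)>& shade_row) {
    assert(image_height >= 0);
    // hardware_concurrency() may report 0, so always keep at least one worker.
    thread_count = std::max(1u, thread_count);

    std::vector<std::thread> workers;
    workers.reserve(thread_count);
    for (unsigned t = 0; t < thread_count; ++t) {
        workers.emplace_back([=, &shade_row] {
            // Interleaved assignment: worker t handles rows t, t + n, t + 2n, ...
            for (int y = static_cast<int>(t); y < image_height;
                 y += static_cast<int>(thread_count)) {
                shade_row(y);
            }
        });
    }
    // Wait for every worker before the caller reads the results.
    for (auto& worker : workers) {
        worker.join();
    }
}
```

A caller could pass std::thread::hardware_concurrency() as thread_count. To use this with the book’s render loop, shade_row would have to store the pixels of scanline y in a buffer, and the PPM output would be written only after all threads have joined, because the book prints each pixel directly to the output stream. Any random number generator shared between threads would also need a per-thread instance.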

Conclusion

It was quite a long journey for me to try rtweekend. Reading the book itself was not simple at all, but reorganizing it and building the binary on my own has taught me a lot more than simply reading books, since I constantly faced tons of errors, including hardware-specific compile errors and linking errors whose causes were really hard to figure out.

Personally, I think C++ is a far more sophisticated language than other modern languages such as Python, JavaScript, or even Go, so we really need more time to prepare the “prerequisites” before starting even a simple project. Nevertheless, it is also true that we should start writing code as soon as we can, regardless of how much C++ we know at the moment. This is the biggest lesson I have learned the hard way.

![Cover image for [C++] Hands-on Learning C++ from Code: Reading and Reorganizing “Raytracing in One Weekend”](https://media2.dev.to/dynamic/image/width=1000,height=420,fit=cover,gravity=auto,format=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%2Fubxejvk40vi561oz46b7.png)
