For a class project, I was attempting to scrape data from IMDb. I started by using Beautiful Soup. This was my first attempt at web scraping, and I am a beginner in data science and coding in general, having started this data science program only 2 weeks earlier. Despite struggling to get a handle on the syntax, after a lot of trial and error I was eventually able to start getting the data that I was looking for.

I was attempting to scrape the data from a page that listed the top-grossing movies from 1990 through March of 2020. This particular list contained 50 movies per page. To keep my trial and error quick, I practiced by scraping only the 50 movies on the first page.

My first task was to scrape the movie titles. To do this, I simply went through each movie entry, pulled out the name, and appended it to a list of movie titles. (When I was already too far along to change course, I found out that using a dictionary for each movie would probably have been a better strategy. Live and learn.) After I got it to work, I went through most of the data that was included for each movie: the release year, IMDb rating, Metascore, MPAA rating, runtime, genres, votes cast for the movie, and gross earnings.
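To show the difference between the two strategies, here is a minimal sketch. The HTML is made up for illustration (the `movie` and `year` class names are assumptions, not IMDb's real markup), so treat it as a picture of the idea rather than the code I actually ran.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for two entries on the list page.
SAMPLE_HTML = """
<div class="movie"><h3><a href="/m/1">First Movie</a></h3><span class="year">(1997)</span></div>
<div class="movie"><h3><a href="/m/2">Second Movie</a></h3><span class="year">(2009)</span></div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# Strategy 1: one list per category, filled by appending inside a loop.
titles = []
for entry in soup.find_all("div", class_="movie"):
    titles.append(entry.h3.a.text)

# Strategy 2: one dictionary per movie, so each movie's fields stay together.
movies = []
for entry in soup.find_all("div", class_="movie"):
    movies.append({
        "title": entry.h3.a.text,
        "year": entry.find("span", class_="year").text.strip("()"),
    })
```

With separate lists, every category has to stay the same length and in the same order. With one dictionary per movie, a missing field only affects that movie's record.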

With each successive category I got more comfortable with the process and much quicker. By the time I had finished all of these categories, I was starting to feel like I was getting it...

Then the problems started. As I said before, in order to have the process go quickly, I was only scraping one page at a time, for a total of 50 data points in each category. I did make sure that my loop for running through each page worked, but then I cut back to only 1 page at a time. When I finally tried to go through 2000 movies I started getting errors.
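Switching between one page and the full run can be sketched roughly like this. The URL pattern below is a placeholder I made up for the example, not the real IMDb address, and `page_starts` is just an illustrative helper name.

```python
MOVIES_PER_PAGE = 50
TOTAL_MOVIES = 2000


def page_starts(total, per_page):
    """Return the 1-based position of the first movie on each page."""
    return list(range(1, total + 1, per_page))


starts = page_starts(TOTAL_MOVIES, MOVIES_PER_PAGE)

# Hypothetical URL pattern; the real list page's query string may differ.
BASE_URL = "https://example.com/top-grossing?start={start}"
urls = [BASE_URL.format(start=s) for s in starts]

# While experimenting, work on the first page only.
debug_urls = urls[:1]
```

The catch, as I found out, is that the first page is not representative. Code that works on 50 movies can still break somewhere in the other 1,950.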

Missing Values

What I eventually realized was that missing data was causing my errors. For example, some of the movies had no IMDb rating. When trying to navigate to a particular line of text, sometimes there was no text to find.

What I eventually figured out was that an if statement checking whether the data point exists could fix it. Since the rating was wrapped in a "strong" tag in the page's HTML, I just needed to write an if statement checking whether the strong tag existed. If it did not, the else statement would append "None" to the list.
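Here is a minimal sketch of that check. The sample HTML and the `movie` class name are invented for the example, and I use Python's `None` as the placeholder here; a string like "None" would work the same way.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: the second entry has no rating, so it has no <strong> tag.
SAMPLE_HTML = """
<div class="movie"><h3>First Movie</h3><strong>8.1</strong></div>
<div class="movie"><h3>Second Movie</h3></div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

ratings = []
for entry in soup.find_all("div", class_="movie"):
    strong = entry.find("strong")  # find() returns None when nothing matches
    if strong is not None:
        ratings.append(float(strong.text))
    else:
        # Append a placeholder so this list stays aligned with the others.
        ratings.append(None)
```

Without the check, calling `.text` on the missing tag raises an `AttributeError`, which is the kind of error that stopped my full run.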

What this taught me was that it is probably a good idea to make your code stronger by planning for the possibility of missing values as a general rule.

Missing Values Part 2

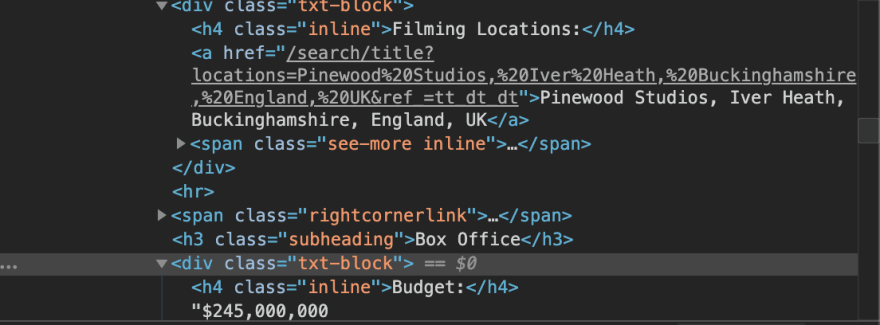

The second big problem that I faced was similar to the first problem. However, this time the problem involved missing values other than the one that I was targeting. This problem arose when I decided to try and get some data beyond what was listed on the previous pages that I was scraping. The information that I was previously getting was all on the list page, which contained the top grossing movies since 1990. However, I was interested in obtaining information about each movie's budget. For this, I had to scrape each individual movie's IMDb page.

Being able to go to each movie's page was easy enough. However, the problem arose when trying to obtain the budget value. On the page for the first movie, I simply entered in the code that pulled up the budget data:

soup2.findAll('h4', class_='inline')[13].nextSibling.rstrip()

The problem came when I tried to go through 2000 movies. My code searched for every h4 inline entry and selected the budget one from the resulting list by its position. There were usually 21 h4 inline data points for each movie. However, sometimes there were missing values. For example, some pages didn't have any information on filming locations. When this value was missing, instead of budget being the 13th h4 inline value, it was the 12th. Other times, a number of values would be missing and it would only be the 8th.

Ultimately, I used if/elif statements to check each h4 inline value to see if it was the budget, and when it was, I would append that value to the budget list. If the budget value itself was missing, then I used the process that I described previously to address that problem.
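The same idea can be sketched as a loop that looks for the label instead of a fixed position. This is a variation on my if/elif chain rather than the exact code I wrote; the sample HTML is made up, and the "Budget:" label text and the `find_budget` helper name are assumptions for illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical markup: this page has no filming locations entry,
# so the budget sits at a different position than on other pages.
SAMPLE_HTML = """
<div><h4 class="inline">Country:</h4> USA </div>
<div><h4 class="inline">Budget:</h4>$200,000,000
</div>
<div><h4 class="inline">Runtime:</h4> 120 min </div>
"""


def find_budget(soup):
    """Return the text that follows the 'Budget:' label, or None if absent."""
    for h4 in soup.find_all("h4", class_="inline"):
        if h4.get_text(strip=True) == "Budget:":
            sibling = h4.next_sibling
            # next_sibling can be None or another tag instead of plain text.
            if isinstance(sibling, str):
                return sibling.strip()
            return None
    return None


budget = find_budget(BeautifulSoup(SAMPLE_HTML, "html.parser"))
no_budget = find_budget(
    BeautifulSoup("<h4 class='inline'>Country:</h4> USA", "html.parser")
)
```

Because the lookup goes by label, it does not matter whether the budget is the 13th, 12th, or 8th entry on the page, and a page with no budget at all simply gives back `None`.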

Being pretty new to coding, these problems took me quite a bit of time to figure out (these and the multitude of other smaller problems that kept arising). The truly frustrating part is usually how simple the answer turns out to be, and the intense aggravation that I couldn't figure it out sooner.

On the other hand, it is incredibly rewarding to struggle for so long to get something right, and then finally run the code and have it all come together.
