The Christmas of 1995 was a memorable one for me. I had just turned 5 years old and was reveling in my transition from a small child to a slightly larger child. However, my birthday wasn’t what made it special. No sir. On that day, I was in for a big surprise.

I remember sitting in the living room, right beside our tree. I was staring down one of the largest presents I’d ever received, still in its pristine wrapping. With bated breath, I waited for my parents to give me the signal. And when they did, I tore into it like only a kid hopped up on Christmas cookies could.

I instantly recognized the man with the red hat and cape riding atop a cartoonish green dinosaur.

“Mario and Yoshi!” I exclaimed. I recognized them from TV. They were always in my favorite Nintendo ads. I loved watching game footage and would always shout the tagline:

Now You’re Playing With Power, Super Power!

What I was holding was the Super NES. I could barely contain my excitement. As soon as all the presents had been opened, I remember dashing to my room to set it up. Stomping on Goombas had never been so satisfying.

However, unbeknownst to me, as I battled my way through Super Mario World’s first castle, other wars were brewing in the real world.

The Bit Wars.

What were the Bit Wars?

If you’re a fan of the Angry Video Game Nerd like I am, you might’ve heard about the Bit Wars before.

The short answer is that the Bit Wars were a period from the mid-1980s to the mid-to-late 1990s when video game titans battled it out for market share and dominance. Since this is more of a historical observation than an actual war, the timeline is subjective.

For a better answer, however, we must go back in time to the Video Game Crash of 1983. Let me set the scene.

The video game industry was in shambles. Most game companies were beaten down with their tails between their legs, trying their best to prevent financial ruin. Atari, one of the largest names in video games at the time, slashed its workforce from 10,000 employees to just 400.

During this turmoil, one company would do the unthinkable. Only two short years after the collapse, Nintendo would release a home video game console in America. The Nintendo Entertainment System.

While it certainly wasn’t the first home console, it was the first to perfect the formula: simple construction, ease of use, and high-quality games. It was everything its predecessors hadn’t been, and that was by design. Nintendo dedicated itself to avoiding the mistakes that had led to the video game crash.

Further aiding the NES’s success was an aggressive marketing campaign and licensing agreements for third-party developers. All of this would culminate in Nintendo taking a 75% share of the video game market and revitalizing the entire industry.

But as it often does, success begets competition.

Witnessing the dominance of Nintendo, arcade industry veteran Sega threw its hat in the ring. As did other smaller players, such as Atari. But when a market is already dominated by a single entity, how does one make a name for itself? Game library and brand image help, certainly. But in the late 1980s and early 1990s, there was another characteristic that caught on like wildfire: bits.

And thus, the Bit Wars began.

Looking back now, it’s bewildering how pervasive the “bit” campaigns were. Few people really knew what bits were and how they worked. Not the kids playing the games, nor the parents buying them, nor even the marketers creating the ads. But that didn’t stop us from parroting the phrases “16 bit”, “32 bit”, and “64 bit” anytime we debated which consoles were better.

It also didn’t stop Nintendo from naming an entire console, and a portion of its game library, after its bit architecture.

For those of you, like me, who grew up during the 90s and never understood what bits were, now’s the time to learn.

What are bits?

Bit stands for binary digit, and it is a single piece of data (or datum, to be precise). A bit can be either 0 or 1, and bits can be strung together to make a long series of 1s and 0s. But why is this important?

Because machine code — the thing that tells computers what to do — is made up of bits. All the games we know and love, with their complex graphics and storylines, can be boiled down to a large string of 1s and 0s.

But how can this be? You might ask. How can a 1 or a 0 tell a computer what to do?

It all depends on the context. For example, take the bit pattern 0111 0011. In a certain context, those bits can represent the number 115. But in another context, they can represent the lowercase letter s.
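If you want to see this for yourself, here is a minimal Python sketch that reads those eight bits both ways. It assumes the "letter" context is ASCII, which is the usual encoding where 115 maps to a lowercase s; the variable names are just for illustration.

```python
# The same eight bits, read in two different contexts.
bits = "0111 0011"

# Context 1: treat the bits as an unsigned binary number.
as_number = int(bits.replace(" ", ""), 2)

# Context 2: treat that same value as a character code (assumption: ASCII).
as_letter = chr(as_number)

print(as_number)  # 115
print(as_letter)  # s
```

Nothing about the bits themselves changed between the two lines; only the interpretation did.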

The same goes for this code here:

0110 0110 1011 1000 0000 0100 0000 0000 0000 0000 0000 0000

In one context, this gibberish represents the number 112940527124480. But in another, it stands for the assembly language command:

MOV eax, 4

As you can see, bits can mean many different things. It’s up to the Central Processing Unit (CPU) and Graphics Processing Unit (GPU) on each gaming console to process all those bits and turn them into the games we enjoy playing.
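The two readings of the 48-bit pattern above can be checked with a short Python sketch. The decoding half is done by hand and rests on an assumption the text doesn't state: that these bytes are x86 machine code running in 16-bit mode, where the leading 0x66 byte is an operand-size prefix and 0xB8 is the opcode for loading a 32-bit value into eax. It is an illustration, not a real disassembler.

```python
# The 48 bits from the text, grouped four at a time.
bits = "0110 0110 1011 1000 0000 0100 0000 0000 0000 0000 0000 0000"
raw = int(bits.replace(" ", ""), 2).to_bytes(6, "big")  # six bytes: 66 B8 04 00 00 00

# Context 1: one big unsigned integer, most significant byte first.
as_number = int.from_bytes(raw, "big")

# Context 2: an x86 instruction, decoded by hand.
# Assumption: 16-bit mode, where the 0x66 prefix selects a 32-bit operand.
prefix, opcode, immediate = raw[0], raw[1], raw[2:]
assert prefix == 0x66  # operand-size override prefix
assert opcode == 0xB8  # MOV into eax with an immediate value

# x86 stores immediate values little-endian (least significant byte first).
value = int.from_bytes(immediate, "little")

print(as_number)            # 112940527124480
print(f"MOV eax, {value}")  # MOV eax, 4
```

Note that the same four trailing bytes are read in opposite orders in the two contexts, which is one more way that context decides what bits mean.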

The Left and Right Brains

While the “left brain right brain” concept is inaccurate and outdated, it makes for a somewhat fitting analogy for the CPU and GPU.

You can think of the CPU as the left brain of the computer. It’s the logical piece in charge of processing the controller inputs and updating the game’s state based on those inputs. The GPU, on the other hand, is in charge of rendering the graphics on the screen. In other words, the artsy stuff. Thus, the right brain.

When you hear phrases like 8-bit or 16-bit, they refer to the number of bits that a CPU or GPU can process in a single operation (its word size). The number of bits that can be processed at once can play a crucial role in the graphics and gameplay of a video game.

For example, the original NES had an 8-bit CPU. To put this in perspective, the largest number an 8-bit processor can handle in a single operation is 255 (eight bits give 256 possible values, counting zero). This is why the original Nintendo games had simplistic graphics and controls. When your CPU can only handle 8-bit operations, simpler is better.
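To get a feel for that limit, here is a rough Python sketch of 8-bit arithmetic. Eight bits give 256 distinct values, which for unsigned numbers run from 0 to 255. The `add_8bit` helper is hypothetical and only mimics how an 8-bit register discards overflow; it is not a model of any real console's CPU.

```python
BITS = 8
MASK = (1 << BITS) - 1  # 0b11111111, the largest unsigned 8-bit value


def add_8bit(a: int, b: int) -> int:
    """Add two values and keep only the low 8 bits, as an 8-bit register would."""
    return (a + b) & MASK


print(MASK)                # 255
print(1 << BITS)           # 256 distinct values (0 through 255)
print(add_8bit(255, 1))    # 0, the result wraps around
print(add_8bit(200, 100))  # 44, because 300 does not fit in 8 bits
```

Anything bigger than 255 has to be split across several 8-bit operations, which is part of why wider processors could do more work per step.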

However, as chipsets and processors became more powerful, they were able to handle more and more bits. When Sega launched its Genesis console with 16-bit capabilities, it was immediately evident it was a technically superior system to the NES. Sonic the Hedgehog’s colorful scenery and blazing fast speed left Mario in the dust.

This may lead some people to believe that more bits always result in faster and more complex games, but that isn’t the case. There are several factors that play a role in the performance of a gaming console:

- Number of processors (the more processors there are, the more that can be done in parallel).

- Clock speed (how fast the CPU and GPU are).

- Memory size (the more memory, the larger the games can be).

- Type of memory (certain types of memory are faster than others, leading to faster load times).

Even when a system has high marks in all of these areas, it can fall flat if it’s confusing and hard to work with. This was the case for the Atari Jaguar. The Jaguar had very impressive hardware specs compared to its main competitors at the time, the Genesis and Super NES. Atari billed it as the first 64-bit console in a now infamous “Do The Math” marketing campaign.

While many debate whether the Jaguar was a true 64-bit console, its hardware was still the most advanced on the market. The problem was that it used customized hardware chips and did not provide a coherent development kit. This meant that few game studios actually built games for the Jaguar. And of the few studios that did, even fewer created games that took advantage of its specialized chips.

Despite the Jaguar’s superior hardware, its games looked and played little better than their 16-bit counterparts. It also didn’t help that its controller was notoriously clunky and awkward to use.

All in all, the Atari Jaguar was the prime example of more bits not equaling better games.

So if the bit architecture alone doesn’t make better games, why were game companies so obsessed with it? Why was Nintendo obsessed with the number 64? Two main reasons.

The first is marketing. As I mentioned before, the video game industry was dominated by one main player in the early days. New and smaller companies had to make themselves stand out in some way. This was especially true for the Atari Jaguar and 3DO Interactive Multiplayer as they competed against Sega’s Genesis and Nintendo’s SNES.

The second is potential. Sophisticated hardware won’t make a gaming console great by itself, nor will it produce video game marvels. However, it does mean that games have the potential for better graphics and more complex gameplay. When you combine this with games that have engrossing stories and fun mechanics, masterpieces are born.

How did the Bit Wars end? Who won?

Seeing as how the Bit Wars were not a recorded event in history, there’s no official end date. But there are plenty of conjectures.

Many believe the release of the Atari Jaguar was the catalyst. Up until that point, consumers had made the association that more bits meant better games and better graphics. The Jaguar was a letdown for many consumers and shattered their bit-beliefs.

Some others believe that the final nail in the coffin came with the releases of the PlayStation and Nintendo 64. Not only was Sony a new player in the gaming industry, the PlayStation was only a 32-bit system. It was competing against Nintendo, an industry titan, with its impressive 64-bit console. By all measures, Nintendo should’ve wiped the floor with PlayStation.

However, PlayStation would end up on top. To be more precise, it destroyed the Nintendo 64, selling 3 times as many consoles.

The true end of the Bit Wars is probably somewhere in the middle. The success of the PlayStation, when juxtaposed with the failure of the Atari Jaguar, was proof to the rest of the industry that buyers no longer cared about bits. They just wanted entertaining games. The Nintendo 64 would be the last console to put bits at the center of its marketing.

Additionally, there is no official winner. Though it’s probably not too controversial to say that the PlayStation crashed onto the video game scene and made the rest of the industry look like squabbling children. However, I propose that the real winners of the Bit Wars were us, the gamers.

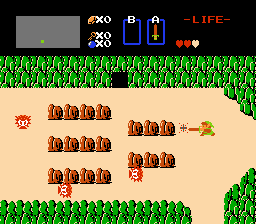

Speaking from personal experience, the Bit Wars were a surreal time to be a gamer. We witnessed the resurrection of an industry. Rapid technical innovation took us from this:

to this

in just over a decade. Not to mention the number of software engineers that must’ve gotten their start during this period. With all the marketing about bits going on, an entire generation was subtly introduced to the world of computer science.

If the Bit Wars have taught me anything, it’s how persuasive marketing and advertising can be. In a time when ads are becoming the go-to monetization strategy for many companies, the old adage rings true:

Don’t believe everything you hear, especially if you hear it from a marketer.
