Note: This post is for educational purposes only, to demonstrate the use of web scraping.

Have you ever been in a situation where your final exams are a week or so away and you haven't attended a single lecture (I mean mindfully, of course)? Then you ask YouTube for help (happens to me every time), and what you get is a huge playlist (even larger than node_modules) to watch, with limited data or a slow connection at your place.

Or maybe you want to learn a new skill/language/framework from YouTube, you find a good "playlist", but you have limited storage space on your phone.

Now, there are websites/applications to download YouTube videos.

Oops: either they download only one video at a time, or, if they can download a complete playlist at once, they are paid. You can download the playlist videos one by one (unless it's a huge one). Exams are important, so maybe you pay for them, and if it's about learning, let it take some more time; we have our entire life, right?

Wait a minute, you are a Python developer. Why pay for a service you can build with a few lines of code?

This post is about a simple project, "YoPlaDo-YouTube Playlist Downloader", built with Python. We will write a program that takes a YouTube playlist link, scrapes all the video links with Selenium, and downloads the videos with youtube-dl.

Web Scraping

Have you ever searched for and downloaded images? Or pressed Ctrl+C and Ctrl+V (if you know, you know)? Or submitted an assignment with solutions you found online? Basically, that is what scraping is.

Collecting data, or more specifically extracting data, from a website is web scraping. Instead of doing things manually, you automate them, and that is what a web scraper does: you give it a list of things to extract, and it goes shopping (scraping) on a website.

For example, if you need images, it searches for `img` tags.
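To make that idea concrete, here is a minimal sketch of an image scraper's core step using only Python's built-in `html.parser` module (not Selenium, which this post uses later). The class name `ImgCollector` and the sample HTML are made up for illustration; the parser simply records the `src` attribute of every `img` tag it encounters.

```python
from html.parser import HTMLParser


class ImgCollector(HTMLParser):
    """Collects the src attribute of every <img> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the tag being opened
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


# Hypothetical sample page, just for demonstration
html = """
<html><body>
  <h1>Gallery</h1>
  <img src="cat.png" alt="A cat">
  <p>Some text <img src="dog.jpg"></p>
  <a href="/about">About</a>
</body></html>
"""

parser = ImgCollector()
parser.feed(html)
print(parser.sources)  # ['cat.png', 'dog.jpg']
```

A real scraper would first fetch the page over the network; this sketch starts from HTML that is already in memory so the extraction step stands out.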

These days web scraping is used in almost every field, from digital marketing to data science and AI.

Various languages provide libraries, frameworks and tools for building a web scraper or web crawler. In Python, popular choices are Selenium, Beautiful Soup, Scrapy and a few more.

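In this post we will build a basic project with Selenium, because YouTube is a dynamic website: its playlist pages are built by JavaScript in the browser, and Selenium drives a real browser that runs that JavaScript.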

YoPlaDo

Selenium

"Selenium is a portable framework for testing web applications." - Wikipedia

Primarily it is for automating web applications for testing purposes, but is certainly not limited to just that.

Boring web-based administration tasks can (and should) also be automated as well.

I will be explaining things in a simpler way. For detailed information, you can go through the Docs.

youtube-dl

"Program to download videos from YouTube.com and other video sites" - PyPI

For detailed information, you can go through the Docs.

Let's get started.

We will extract the links from a playlist (a URL like https://www.youtube.com/playlist?list=<playlist ID>) and have the program download each video automatically. This is just a basic project for beginners; you can go through the documentation and make improvements.

- Import the libraries

If you want to follow along, you can use "https://www.youtube.com/playlist?list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-" as the input link, since it is the one used in the examples below, which makes them easier to follow. Also make sure the playlist you use contains only downloadable videos and no deleted ones. This problem can be handled with a try/except block, but to keep this post simple for beginners, the block hasn't been added.

Before importing, make sure you have installed the required libraries. You can use:

```shell
pip install selenium
pip install youtube-dl
```

```python
from selenium import webdriver
import time
import youtube_dl
import os
```

- Initiate Chrome web-driver with the playlist URL

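```python
url = input("Enter the Youtube Playlist URL : ")

driver = webdriver.Chrome()  # opens a Chrome window controlled by Selenium
driver.get(url)              # loads the playlist page
time.sleep(5)                # waits for the page to finish loading
```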

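These lines start a Chrome browser controlled by Selenium and open the URL you entered. This needs Google Chrome installed; Selenium 4.6 and later fetch a matching ChromeDriver automatically, while older versions need ChromeDriver on your PATH.

`time.sleep(5)` pauses for five seconds so the browser has time to load the page, whose content YouTube builds with JavaScript, before we start scraping.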
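Since the script trusts whatever is typed at the prompt, a small check before launching the browser can save a confusing failure later. The sketch below is an optional, hypothetical helper (`playlist_id` is not part of the original project) that uses the standard `urllib.parse` module to confirm the input looks like a YouTube playlist link and to pull out its `list` parameter. The set of accepted host names is an assumption; adjust it as needed.

```python
from urllib.parse import urlparse, parse_qs


def playlist_id(url):
    """Return the playlist ID from a YouTube playlist URL, or None if it isn't one."""
    parts = urlparse(url.strip())
    # Accepted hosts are an assumption for this sketch
    if parts.netloc not in ("youtube.com", "www.youtube.com", "m.youtube.com"):
        return None
    # parse_qs maps each query parameter name to a list of its values
    ids = parse_qs(parts.query).get("list")
    return ids[0] if ids else None


print(playlist_id("https://www.youtube.com/playlist?list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-"))
# PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-
print(playlist_id("https://example.com/?list=abc"))  # None
```

In the script, you could call it right after `input(...)` and stop with a message when it returns `None`.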

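- Scraping all the video links from the playlist

```python
# Empty list that will hold the extracted video links
playlist = []

# One element per video entry in the playlist
videos = driver.find_elements_by_class_name('style-scope ytd-playlist-video-renderer')
```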

First we create an empty list, `playlist`, to store all the links we extract.

Then web scraping comes into play.

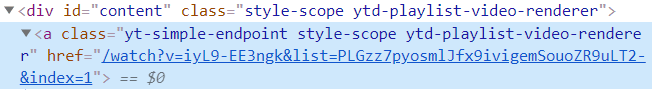

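Put simply, the line

`driver.find_elements_by_class_name('style-scope ytd-playlist-video-renderer')`

collects the elements on the rendered page that correspond to the individual videos of the playlist (YouTube renders each entry as a `ytd-playlist-video-renderer` element), so each of them can then be searched for its link.

Note that the `find_element_by_*` and `find_elements_by_*` helpers used in this post were removed in Selenium 4.3. On newer versions, import `By` with `from selenium.webdriver.common.by import By` and write `driver.find_elements(By.CLASS_NAME, ...)` and `video.find_element(By.XPATH, ...)` instead.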

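- Scraping (part 2)

```python
for video in videos:
    # The video link is the <a> tag inside the element with id="content"
    link = video.find_element_by_xpath('.//*[@id="content"]/a').get_attribute("href")
    # Keep only the part before the first "&" (drops &list=... and &index=...)
    link = link.split("&")[0]
    playlist.append(link)
```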

"""

For example, a playlist with 6 videos

Enter youtube playlist link : https://www.youtube.com/playlist?list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-

['https://www.youtube.com/watch?v=iyL9-EE3ngk&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=1', 'https://www.youtube.com/watch?v=G7E8YrOiYrQ&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=2', 'https://www.youtube.com/watch?v=79D4Y1cUK7I&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=3', 'https://www.youtube.com/watch?v=MUe0FPx8kSE&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=4', 'https://www.youtube.com/watch?v=UkpmjbHYV0Y&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=5', 'https://www.youtube.com/watch?v=WTOFLmB9ge0&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=6']

"""

A new term? XPath? (Superhero path?)

"XPath is a technique in Selenium to navigate through the HTML structure of a page."

Put simply, it is a path that leads to specific tags in the page.
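If you want to experiment with the XPath expression from the loop, `.//*[@id="content"]/a`, without launching a browser, Python's built-in `xml.etree.ElementTree` understands a limited subset of XPath that happens to cover it. The sketch below runs it against a tiny, made-up, well-formed snippet that only imitates the structure of a playlist page; the real page is far larger and is built by JavaScript, which is why the project itself uses Selenium.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup imitating two playlist entries ("PL1" is a made-up list ID)
page = """
<div>
  <ytd-playlist-video-renderer>
    <div id="content"><a href="/watch?v=iyL9-EE3ngk&amp;list=PL1&amp;index=1">Video 1</a></div>
  </ytd-playlist-video-renderer>
  <ytd-playlist-video-renderer>
    <div id="content"><a href="/watch?v=G7E8YrOiYrQ&amp;list=PL1&amp;index=2">Video 2</a></div>
  </ytd-playlist-video-renderer>
</div>
"""

root = ET.fromstring(page)
hrefs = []
for video in root.iter("ytd-playlist-video-renderer"):
    # Relative path: any descendant whose id is "content", then its <a> child
    anchor = video.find('.//*[@id="content"]/a')
    hrefs.append(anchor.get("href"))

print(hrefs)
# ['/watch?v=iyL9-EE3ngk&list=PL1&index=1', '/watch?v=G7E8YrOiYrQ&list=PL1&index=2']
```

The leading `.` makes the path relative to each `video` element, just as in the Selenium call, so every entry yields its own link.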

As the loop goes through each element in the `videos` list, the line

`video.find_element_by_xpath('.//*[@id="content"]/a').get_attribute("href")`

finds the anchor tag (link) inside the element whose id is "content" and extracts, or scrapes, its href attribute.

The remaining lines clean up each link: they strip the playlist ID and index number (everything from the first `&` onward), leaving only the link to the video itself.
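Cutting at the first `&` works for the links this playlist page produces, but it relies on `v` being the first query parameter. A slightly sturdier alternative, sketched below with the standard `urllib.parse` module (the helper name `clean_video_link` is hypothetical), reads the `v` parameter explicitly and rebuilds a plain watch URL.

```python
from urllib.parse import urlparse, parse_qs


def clean_video_link(link):
    """Reduce a playlist-style watch URL to https://www.youtube.com/watch?v=<id>."""
    query = parse_qs(urlparse(link).query)
    video_ids = query.get("v")
    if not video_ids:
        return None  # not a watch link
    return "https://www.youtube.com/watch?v=" + video_ids[0]


raw = "https://www.youtube.com/watch?v=iyL9-EE3ngk&list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-&index=1"
print(clean_video_link(raw))  # https://www.youtube.com/watch?v=iyL9-EE3ngk
```

Because it looks the parameter up by name, the result stays the same even if YouTube reorders the query string.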

"""

After processing it looks like:

Enter youtube playlist link : https://www.youtube.com/playlist?list=PLGzz7pyosmlJfx9ivigemSouoZR9uLT2-

['https://www.youtube.com/watch?v=iyL9-EE3ngk', 'https://www.youtube.com/watch?v=G7E8YrOiYrQ', 'https://www.youtube.com/watch?v=79D4Y1cUK7I', 'https://www.youtube.com/watch?v=MUe0FPx8kSE', 'https://www.youtube.com/watch?v=UkpmjbHYV0Y', 'https://www.youtube.com/watch?v=WTOFLmB9ge0']

"""

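Wondering why those specific classes?

The video links sit inside these elements in the page's HTML. You can check this yourself by inspecting a playlist page with your browser's developer tools.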

- Downloading Videos

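```python
# Save videos in this folder (replace with your own Downloads path)
os.chdir('C:/Users/Trideep/Downloads')

# youtube-dl options; an empty dict means "use the defaults"
ydl_opts = {}

for link in playlist:
    with youtube_dl.YoutubeDL(ydl_opts) as ydl:
        ydl.download([link])

driver.close()
```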

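```python
os.chdir('C:/Users/Trideep/Downloads')
```

This changes the current working directory to the "Downloads" folder, which is where youtube-dl will save the files. Replace the path with your own Downloads folder.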

```python
with youtube_dl.YoutubeDL(ydl_opts) as ydl:
    ydl.download([link])
```

Here comes youtube-dl. Looping through the `playlist` list, each link is processed and downloaded with `YoutubeDL`.

`ydl.download([link])` takes a list of URLs, fetches each video, and saves it in the current working directory (hence the `os.chdir` call above) unless the options specify a different output location.

You can also choose the video format, quality or output file name by adding options to the `ydl_opts` dictionary; the youtube-dl documentation lists them all.
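As a hedged example of such options: `YoutubeDL` accepts a dictionary whose documented keys include `format` (which stream to pick), `outtmpl` (the output file name template, which may include a folder, so it can replace `os.chdir`) and `ignoreerrors` (do not stop when a download fails). The helper below is hypothetical and only builds the dictionary, so you can inspect it before passing it as `ydl_opts`; the 480p default is an arbitrary choice for limited data plans.

```python
import os


def build_ydl_opts(download_dir, max_height=480, audio_only=False):
    """Build an options dict for youtube_dl.YoutubeDL (hypothetical helper)."""
    if audio_only:
        fmt = "bestaudio/best"
    else:
        # Best single file no taller than max_height, falling back to plain "best"
        fmt = "best[height<={}]/best".format(max_height)
    return {
        "format": fmt,
        # youtube-dl fills in %(title)s and %(ext)s for each video
        "outtmpl": os.path.join(download_dir, "%(title)s.%(ext)s"),
        "ignoreerrors": True,  # skip unavailable videos instead of stopping
    }


ydl_opts = build_ydl_opts(os.path.join(os.path.expanduser("~"), "Downloads"))
print(ydl_opts["format"])  # best[height<=480]/best
```

With youtube-dl installed, you would pass the result exactly as before: `with youtube_dl.YoutubeDL(ydl_opts) as ydl: ydl.download([link])`.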

`driver.close()` closes the browser window that Selenium opened.

And with merely 30 lines of code, you saved a few dollars and gifted yourself a good project.

Well, for proper execution you can add your own exception blocks and logic. I would suggest going through the documentation.
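One simple form of that exception handling, sketched under the assumption that a single failed video (deleted, private or blocked) should not stop the whole playlist: wrap each download in `try`/`except` and collect the failures. To keep the sketch testable without network access, the download step is passed in as a function (the names `download_all` and `fake_download` are hypothetical). In the real script it would be something like `lambda link: ydl.download([link])`, and youtube-dl reports failures by raising `youtube_dl.utils.DownloadError`.

```python
def download_all(links, download_one):
    """Try every link; return the links that failed instead of crashing on the first error.

    download_one is any callable that downloads a single URL (hypothetical hook).
    """
    failed = []
    for link in links:
        try:
            download_one(link)
        except Exception as err:  # e.g. youtube_dl.utils.DownloadError in the real script
            print("Skipping {}: {}".format(link, err))
            failed.append(link)
    return failed


# Stand-in downloader for demonstration: pretends the second video was deleted
def fake_download(link):
    if link.endswith("G7E8YrOiYrQ"):
        raise RuntimeError("Video unavailable")


links = [
    "https://www.youtube.com/watch?v=iyL9-EE3ngk",
    "https://www.youtube.com/watch?v=G7E8YrOiYrQ",
    "https://www.youtube.com/watch?v=79D4Y1cUK7I",
]
print(download_all(links, fake_download))
```

Returning the failed links lets you report them at the end or retry them later.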

For complete code, you can visit:

https://github.com/Dstri26/YoPlaDo-Youtube-Playlist-Downloader/

Happy Scraping! Happy Coding.
