In much of the work we carry out for communication service providers (CSPs), either as part of VisiMetrix integrations or when developing custom software products, we need to integrate with IP networking devices that power the packet core, circuit-switched core, backhaul, transmission, radio access, and IMS networks. While many of these integrations use proprietary management protocols, others conveniently use SNMP.

SNMP defines a standard protocol to collect status data, configuration information, and asynchronous notifications from such devices. Slightly less convenient is the fact that during development, we often don’t have access to the actual devices we need to integrate.

Developing and testing against production devices is not an option. While clients sometimes have test devices, these are often in high demand for exclusive access by other departments within the CSP. They can also suffer from sporadic downtime, or be hidden behind remote desktop solutions that render them unreachable from development and continuous integration environments.

For these reasons, a very long time ago, we developed an SNMP agent simulator. We used Java because it was our go-to language at the time. Cut to today, and while we still do most of our server-side work in Java and Kotlin, we also have a growing number of teams developing products for our clients in Rust.

Recently, when looking for a way to give more of our engineers an opportunity to develop and hone their Rust skills, we hit on the idea of rewriting the agent simulator in Rust. Not only that, we decided to develop it in the open.

We’ll be sharing more about our progress in future posts, but in this one I’m going to talk about a particular challenge we hit early on.

The Problem

Our goal is to implement a service that can simulate one or more SNMP agents concurrently and allow such agents to be created, deleted, started, or stopped at runtime.

Our functional requirements can be summarised as:

- Expose REST endpoints that allow clients to control the simulator

- Support multiple UDP listeners, each on their own port

- Support dynamically creating and destroying UDP listeners

We also want to avoid excessive thread creation.
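To make the lifecycle requirement concrete, here is a minimal sketch of the bookkeeping it implies, using only the standard library. The names `AgentRegistry`, `AgentState` and `RegistryError` are hypothetical and are not taken from the simulator's code; the sketch only tracks state per port and does not open any sockets.

```rust
use std::collections::HashMap;

/// Lifecycle state of one simulated agent (hypothetical type).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum AgentState {
    Stopped,
    Running,
}

/// Errors the registry can report (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
pub enum RegistryError {
    PortInUse(u16),
    UnknownAgent(u16),
}

/// Tracks agents by UDP port, assuming one listener per port.
#[derive(Default)]
pub struct AgentRegistry {
    agents: HashMap<u16, AgentState>,
}

impl AgentRegistry {
    /// Registers a new agent in the stopped state.
    pub fn create(&mut self, port: u16) -> Result<(), RegistryError> {
        if self.agents.contains_key(&port) {
            return Err(RegistryError::PortInUse(port));
        }
        self.agents.insert(port, AgentState::Stopped);
        Ok(())
    }

    /// Removes an agent, whatever state it is in.
    pub fn delete(&mut self, port: u16) -> Result<(), RegistryError> {
        self.agents
            .remove(&port)
            .map(|_| ())
            .ok_or(RegistryError::UnknownAgent(port))
    }

    pub fn start(&mut self, port: u16) -> Result<(), RegistryError> {
        self.set_state(port, AgentState::Running)
    }

    pub fn stop(&mut self, port: u16) -> Result<(), RegistryError> {
        self.set_state(port, AgentState::Stopped)
    }

    pub fn state(&self, port: u16) -> Option<AgentState> {
        self.agents.get(&port).copied()
    }

    fn set_state(&mut self, port: u16, state: AgentState) -> Result<(), RegistryError> {
        match self.agents.get_mut(&port) {
            Some(current) => {
                *current = state;
                Ok(())
            }
            None => Err(RegistryError::UnknownAgent(port)),
        }
    }
}
```

In a design like this, each REST endpoint would map onto one of these methods, and `start` and `stop` would be the points where a UDP listener is created or torn down.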

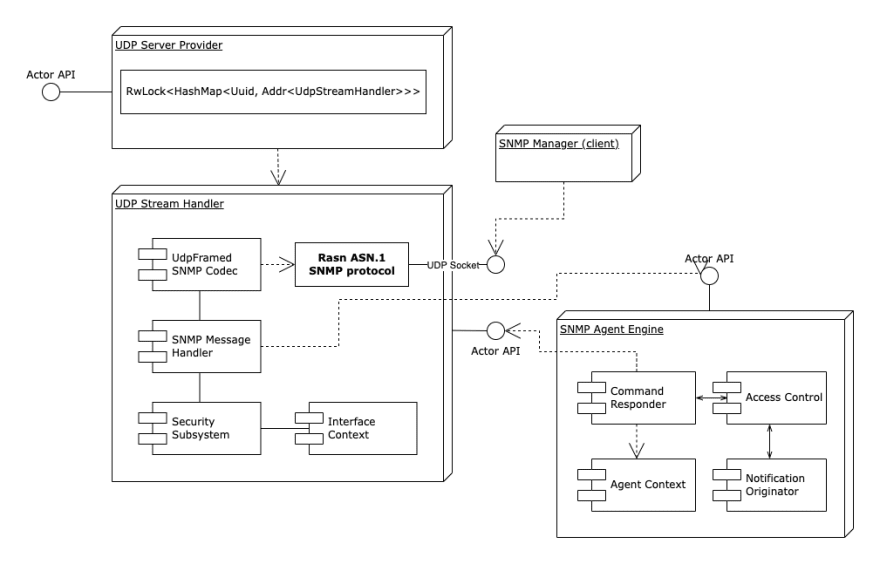

One of the first problems we hit was how to approach handling a dynamic set of UDP listeners in addition to a HTTP listener for REST API requests. Here’s what the (very) high level architecture looks like.

While there are plenty of Rust HTTP server frameworks [1], none support our UDP requirement. What to do?

Standing on the Shoulders of Giants

Before we did anything, we needed to make some key technical decisions. Based on previous experience and in some cases research, we settled on using the following crates [2].

Actix

Actix is an actor framework for developing concurrent applications built on top of the Tokio asynchronous runtime. It allows multiple actors to run on a single thread, but also allows actors to run on multiple threads via Arbiters. Actors can communicate with each other by sequentially exchanging typed messages.

The Actix framework is stable and mature and will ultimately help us create a scalable solution.

Actix Web

To handle REST API requests, we chose the Actix Web framework. By default, its HttpServer automatically starts a number of HTTP workers equal to the number of logical CPUs in the system. Once created, each receives a separate application instance to handle requests. Application state is not shared between threads, and handlers are free to manipulate their copy of the state with no concurrency concerns.

rasn ASN.1

We also need to read and write SNMP messages. The rasn ASN.1 codec framework supports handling ASN.1 data types according to different encoding rules.

Conveniently, it also provides implementations of a number of relevant SNMP IETF RFCs.

Application Architecture

On startup, the SNMP Agent Simulator creates a number of UdpServerProvider actors to handle incoming requests. The number of actors is determined by the host environment CPU resources. The UdpStreamHandler actor is responsible for serializing and deserializing UDP messages and for forwarding the decoded SNMP messages to the SNMPAgentEngine for further processing.

UDP Socket Creation

So how do we go about creating a UDP socket for each agent? There are three potential options: UdpSocket from the Rust standard library, UdpSocket from the Rust async standard library (async_std), or UdpSocket from Tokio. Which to choose?

Rust Standard Library

The standard net UdpSocket represents a raw UDP socket bound to a socket address.

    let std_socket = std::net::UdpSocket::bind(binding_address)
        .map_err(|error| UdpServerError::StartFailed(error.to_string()))?;

Standard net UdpSockets are created in blocking mode by default. Adding a stream backed by a blocking socket to an Actix stream handler actor causes the actor to deadlock.

To work around this, we need to ensure we create the socket in non-blocking mode before adding it to the Actix stream handler actor.

    std_socket
        .set_nonblocking(true)
        .map_err(|error| UdpServerError::StartFailed(error.to_string()))?;
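The effect of non-blocking mode can be seen with the standard library alone. The following is a small sketch, not code from the simulator: the helper name `recv_kind_when_idle` and the loopback address are assumptions made for illustration. It binds a socket, switches it to non-blocking mode, and reports how `recv_from` fails when no datagram is waiting.

```rust
use std::io::ErrorKind;
use std::net::UdpSocket;

/// Binds a UDP socket on an OS-assigned loopback port, switches it to
/// non-blocking mode, and returns the error kind produced by `recv_from`
/// when nothing has been sent to the socket (hypothetical helper).
pub fn recv_kind_when_idle() -> std::io::Result<Option<ErrorKind>> {
    let socket = UdpSocket::bind("127.0.0.1:0")?;
    socket.set_nonblocking(true)?;

    let mut buffer = [0u8; 1500];
    match socket.recv_from(&mut buffer) {
        // A datagram arrived; not expected here because nobody sends one.
        Ok(_) => Ok(None),
        // In non-blocking mode the call returns immediately with an error
        // instead of parking the calling thread.
        Err(error) => Ok(Some(error.kind())),
    }
}
```

Without the `set_nonblocking(true)` call, the same `recv_from` would park the calling thread until a datagram arrives, which is what stalls an event loop that expects sockets to return immediately.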

From here we can convert that socket into a Tokio UdpSocket, which can in turn be wrapped in a Tokio UdpFramed object.

    let socket = TokioUdpSocket::from_std(std_socket)
        .map_err(|error| UdpServerError::StartFailed(error.to_string()))?;

This works, but we have to explicitly set the socket to non-blocking mode. Perhaps the Rust async standard library might offer a solution.

Rust Async Standard Library

Unfortunately, this isn’t an option. Tokio does not support async_std sockets, so we can’t wrap the socket in a UdpFramed object.

The last option to explore is the Tokio UdpSocket.

Tokio UdpSocket

The Tokio UdpSocket is created in non-blocking mode directly, so we don’t need to change that property manually.

    let socket = TokioUdpSocket::bind(binding_address)
        .await
        .map_err(|error| UdpServerError::StartFailed(error.to_string()))?;

This does the job and is succinct. Now that we’ve settled on how to create sockets, the next challenge is to figure out how to convert data to and from SNMP messages.

UDP data to SNMP Messages

Raw UDP sockets work with datagrams (byte buffers), but it is more convenient for us to work with domain specific types (messages). Tokio UdpFramed is an interface to an underlying UDP socket that returns a single object that is both a Stream for reading messages and a Sink for writing messages. However, the StreamHandler actor expects the Stream part of the UdpFramed object only. Conveniently, the split method allows us to split the UdpFramed object into separate Stream and Sink objects as in the following code snippet.

    // Create a UdpFramed object using the SnmpCodec to work with the
    // encoded/decoded frames directly, instead of raw UDP data.
    let (sink, stream) = UdpFramed::new(socket, SnmpCodec::default()).split();

The UdpFramed constructor must be supplied with a codec instance that implements the Encoder and Decoder traits from the Tokio ecosystem, which it uses on top of the socket to handle frames. Our SnmpCodec is a wrapper around the rasn ASN.1 codec framework: it decodes the received UDP socket data into a stream of SNMP messages, and encodes SNMP messages into ASN.1-encoded UDP socket data.
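To give a feel for what a decoder sees at the byte level, here is a standard-library sketch that inspects the outer BER header of a datagram. It is not how SnmpCodec or rasn work internally, and the names `BerHeader`, `parse_ber_header` and `looks_like_snmp_message` are hypothetical. It relies only on two facts: an SNMP message is a BER-encoded SEQUENCE (tag 0x30), and BER lengths use either a short form (one byte below 0x80) or a long form (0x80 plus a count of following length bytes).

```rust
/// Outer header of a BER-encoded value: tag byte plus content length.
#[derive(Debug, PartialEq, Eq)]
pub struct BerHeader {
    pub tag: u8,
    pub header_len: usize,
    pub content_len: usize,
}

/// Parses the tag and definite length at the start of `datagram`.
/// Returns `None` for truncated input, indefinite lengths, or lengths
/// that do not fit in `usize`. Multi-byte tags are not handled.
pub fn parse_ber_header(datagram: &[u8]) -> Option<BerHeader> {
    let tag = *datagram.first()?;
    let first_len = *datagram.get(1)?;

    if first_len & 0x80 == 0 {
        // Short form: the byte itself is the content length (0..=127).
        return Some(BerHeader { tag, header_len: 2, content_len: first_len as usize });
    }

    // Long form: the low 7 bits give the number of length bytes that follow.
    let count = (first_len & 0x7f) as usize;
    if count == 0 || count > std::mem::size_of::<usize>() {
        return None;
    }
    let length_bytes = datagram.get(2..2 + count)?;
    let content_len = length_bytes
        .iter()
        .fold(0usize, |acc, byte| (acc << 8) | *byte as usize);

    Some(BerHeader { tag, header_len: 2 + count, content_len })
}

/// True when the datagram is exactly one SEQUENCE, the outer type of an
/// SNMP message. The contents are not validated.
pub fn looks_like_snmp_message(datagram: &[u8]) -> bool {
    match parse_ber_header(datagram) {
        Some(header) => {
            header.tag == 0x30
                && header.header_len.checked_add(header.content_len) == Some(datagram.len())
        }
        None => false,
    }
}
```

Because UDP preserves datagram boundaries, a check like this can be made per datagram; a real decoder then goes on to parse the version, community and PDU fields inside the SEQUENCE.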

Actix’s StreamHandler helper trait lets an actor handle items from a stream in much the same way as it handles standard actor messages. We use it to implement the UDP receiver: the UdpStreamHandler actor represents a UDP socket receiver, and its UdpMessage handler is called when an SNMP message is received from the socket and successfully decoded.

    // Add the stream to the actor's context.
    // Stream item will be treated as a concurrent message and the actor's handle will be called.
    ctx.add_stream(stream.map(|a| UdpMessage(a.map_err(|e| e.to_string()))));

The StreamHandler actor has no method to deregister the stream from the actor’s context. The solution here is to simply close the socket representing the stream.
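What closing the socket means for the port can be illustrated with the standard library alone. This is a sketch under assumptions rather than the simulator's code: the helper name `rebind_after_close` is hypothetical, and it uses a blocking std socket instead of the Tokio socket wrapped by UdpFramed.

```rust
use std::net::{SocketAddr, UdpSocket};

/// Binds a listener, checks that its address is taken while the socket is
/// open, then drops the socket and binds the same address again.
/// Returns (busy_while_open, free_after_close). Hypothetical helper.
pub fn rebind_after_close() -> std::io::Result<(bool, bool)> {
    let first = UdpSocket::bind("127.0.0.1:0")?;
    let address: SocketAddr = first.local_addr()?;

    // While `first` is open, a second bind to the same address is
    // expected to fail because the port is already in use.
    let busy_while_open = UdpSocket::bind(address).is_err();

    // Dropping the socket closes the underlying descriptor and releases the port.
    drop(first);

    let free_after_close = UdpSocket::bind(address).is_ok();
    Ok((busy_while_open, free_after_close))
}
```

For the simulator, this suggests that once every handle to an agent's socket has been dropped, the same port becomes available for a new agent.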

What next?

We love Rust! It’s a great language. However, it’s still possible for developers to write code that results in deadlocks. This often happens when the synchronous and asynchronous worlds accidentally collide. Perhaps we’ll write more about that in a future post.

In the meantime, there’s lots more work to do on the SNMP simulator. If you’re interested in contributing, please let us know on GitHub!

[1]: For example Actix Web, Rocket, Axum, Warp, Tide.

[2]: 'Library' or 'package' for readers new to Rust