Many websites require users to register or log in before they show any information. Browsers store cookies for each session as the user navigates the website. Other websites may show pop-ups if location cookies are missing or until the user explicitly consents to the collection of their data.

You can simulate user input to enter credentials and click a button to submit a form. Sometimes you also need to tick a checkbox to accept the website's terms before scraping the data.

Another way is to pass session cookies when you send a request to a website. This article will show how to transfer cookies from a web browser to a Dataflow Kit web scraper.
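To make the idea of passing session cookies with a request concrete, here is a minimal Python sketch using only the standard library. It is not Dataflow Kit code: the cookie names, values, and the `https://example.com/account` URL are made-up placeholders, and `cookie_header` is a helper introduced only for this illustration. The request is built but not sent.

```python
from urllib.request import Request


def cookie_header(cookies):
    """Join cookie name/value pairs into a single Cookie header value."""
    return "; ".join(f"{c['name']}={c['value']}" for c in cookies)


# Hypothetical session cookies copied from a logged-in browser.
session_cookies = [
    {"name": "sessionid", "value": "abc123"},
    {"name": "csrftoken", "value": "xyz789"},
]

# Build (but do not send) a request that carries the browser session.
request = Request(
    "https://example.com/account",
    headers={"Cookie": cookie_header(session_cookies)},
)
print(request.get_header("Cookie"))
```

The server sees the same cookies the browser would send, so it treats the request as part of the existing logged-in session.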

Follow the steps below to crawl websites that require a login:

- Install the EditThisCookie extension in your web browser.

- Go to the website that you want to crawl and sign in with your credentials.

- Open the "EditThisCookie" extension by clicking its button next to the address bar. Copy the cookies to the clipboard using the "Export" button.

- Now paste the cookies (Ctrl + V) from the clipboard into the "Initial cookies" field of a Dataflow Kit scraper. The exported cookies are in JSON array format, which is compatible with the cookie format used by Dataflow Kit.
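Before pasting, it can help to confirm that the clipboard really holds a JSON array of cookie objects. The sketch below is one way to check that in Python. The sample cookie is invented, and the set of keys treated as required (`name`, `value`, `domain`) is an assumption made for this example, not a documented Dataflow Kit requirement.

```python
import json

# Keys assumed to be the minimum needed for a usable cookie (an assumption).
REQUIRED_KEYS = {"name", "value", "domain"}


def load_exported_cookies(text):
    """Parse exported cookie text and check it is a JSON array of cookie objects."""
    cookies = json.loads(text)
    if not isinstance(cookies, list):
        raise ValueError("expected a JSON array of cookie objects")
    for index, cookie in enumerate(cookies):
        if not isinstance(cookie, dict):
            raise ValueError(f"cookie #{index} is not a JSON object")
        missing = REQUIRED_KEYS - cookie.keys()
        if missing:
            raise ValueError(f"cookie #{index} is missing {sorted(missing)}")
    return cookies


# Invented sample in the shape of a browser cookie export.
exported = """[
  {"domain": ".example.com", "name": "sessionid", "value": "abc123",
   "path": "/", "secure": true, "httpOnly": true,
   "expirationDate": 1767225600}
]"""

cookies = load_exported_cookies(exported)
print(len(cookies), cookies[0]["name"])
```

A `ValueError` here usually means the clipboard content was truncated or exported in a non-JSON format.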

As an example, we'll use the Dataflow Kit Screen Capture Service to illustrate the cookie transfer function.

That's all! Now run the scraper, and it starts already logged in.

Result

The screenshot shows that the page was captured after login, not at the login page.

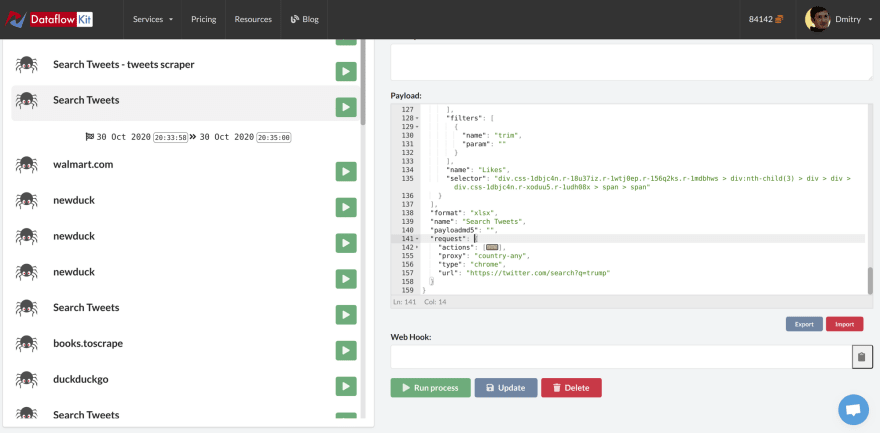

Initial cookies are not limited to the Dataflow Kit services available on our website. You can add them to any custom web scraper powered by the Dataflow Kit framework. Task payloads can be edited at https://account.dataflowkit.com/tasks

In any Task payload, you can add InitialCookies to the request manually.
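As a rough illustration of editing a payload by hand, the Python sketch below merges a cookie list into a task payload. The payload layout (a `request` object holding the URL) and the exact field name `initialCookies` are assumptions made for this example, so check them against the payload shown in your account before relying on them. `with_initial_cookies` is a hypothetical helper, not part of Dataflow Kit.

```python
import copy
import json


def with_initial_cookies(payload, cookies, field="initialCookies"):
    """Return a copy of the payload with cookies stored under request[field].

    The "request" key and the default field name are assumptions.
    """
    updated = copy.deepcopy(payload)
    updated.setdefault("request", {})[field] = list(cookies)
    return updated


# Hypothetical task payload and exported cookies.
task_payload = {"request": {"url": "https://example.com/account"}}
exported_cookies = [
    {"domain": ".example.com", "name": "sessionid", "value": "abc123", "path": "/"},
]

new_payload = with_initial_cookies(task_payload, exported_cookies)
print(json.dumps(new_payload, indent=2))
```

Working on a copy keeps the original payload reusable for runs that should start without a session.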

Depending on the website, cookies may be short-lived, in which case passing initial cookies is not the way to go. The right solution then is to use actions that simulate filling out forms and pressing the submit button.
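One way to judge whether exported cookies will last long enough is to inspect their expiry before running a scraper. The Python sketch below assumes each cookie object may carry an `expirationDate` field (a Unix timestamp in seconds) and a `session` flag, as browser cookie exports commonly do. The one-hour threshold and the sample cookies are arbitrary illustrations.

```python
import time


def seconds_until_expiry(cookie, now=None):
    """Return the remaining lifetime in seconds, or None for session cookies."""
    if cookie.get("session") or "expirationDate" not in cookie:
        return None  # no fixed expiry recorded in the export
    now = time.time() if now is None else now
    return cookie["expirationDate"] - now


def expiring_soon(cookies, min_lifetime=3600, now=None):
    """List names of cookies that expire in less than min_lifetime seconds."""
    names = []
    for cookie in cookies:
        remaining = seconds_until_expiry(cookie, now)
        if remaining is not None and remaining < min_lifetime:
            names.append(cookie["name"])
    return names


# Invented cookies; `now` is fixed so the result is reproducible.
sample = [
    {"name": "short", "expirationDate": 1500},
    {"name": "long", "expirationDate": 100000},
    {"name": "browser_session", "session": True},
]
print(expiring_soon(sample, min_lifetime=3600, now=1000))
```

If the cookies that carry the login show up in this list, simulating the login with actions is likely the more reliable route.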
