By default, when your Lambda function is not connected to one of your own VPCs, it can access anything available on the public internet, such as other AWS services, HTTPS API endpoints, or services and endpoints outside AWS. However, it has no way to reach private resources inside your VPC, such as a database in a private subnet.

Connecting to a VPC

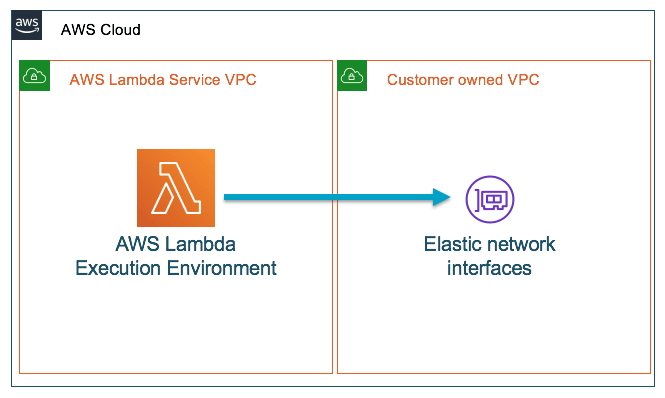

To let your Lambda functions reach private resources, you connect them to the VPC those resources reside in. Lambda implements this connection with an elastic network interface in your VPC subnets, which lets traffic flow between the function and the VPC.
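To make the association concrete: when you connect a function to a VPC, you give Lambda a list of subnet IDs and security group IDs, which the Lambda API accepts as a `VpcConfig` object with `SubnetIds` and `SecurityGroupIds` keys. The sketch below only builds that payload in Python; the helper name and the subnet and security group IDs are hypothetical placeholders.

```python
from typing import Sequence, TypedDict


class VpcConfig(TypedDict):
    # Same key names as the VpcConfig object in the Lambda
    # CreateFunction / UpdateFunctionConfiguration APIs.
    SubnetIds: list[str]
    SecurityGroupIds: list[str]


def build_vpc_config(
    subnet_ids: Sequence[str], security_group_ids: Sequence[str]
) -> VpcConfig:
    """Hypothetical helper that builds the VpcConfig payload.

    It rejects empty lists on purpose: in the Lambda API, sending empty
    lists disconnects the function from its VPC instead of connecting it.
    """
    if not subnet_ids or not security_group_ids:
        raise ValueError("need at least one subnet and one security group")
    return {
        "SubnetIds": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
    }


# Placeholder IDs, not real resources.
config = build_vpc_config(["subnet-aaaa1111", "subnet-bbbb2222"], ["sg-cccc3333"])
print(config)
```

Assuming boto3 is installed and credentials are configured, the payload could then be passed as `boto3.client("lambda").update_function_configuration(FunctionName="my-function", VpcConfig=config)`, where `my-function` is a placeholder name.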

Problem?

This model comes with several challenges. Creating and attaching a new network interface takes time, which lengthens cold starts: it increases the time needed to spin up a new execution environment before your code can be invoked.

As your function scales out to handle more requests, more network interfaces are created and attached to the Lambda infrastructure. The exact number depends on your function's configuration (such as its memory size) and its concurrency.
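For the pre-2019 model, AWS's documentation gave a rough rule of thumb for ENI capacity: projected peak concurrent executions × (memory in GB / 3 GB). A minimal sketch of that estimate follows; the function name is hypothetical, and rounding up and treating 1 GB as 1024 MB are my own assumptions. The formula does not describe how VPC-connected functions behave today.

```python
import math


def legacy_eni_estimate(peak_concurrency: int, memory_mb: int) -> int:
    """Rough ENI demand of a VPC-connected function under the OLD (pre-2019) model.

    Rule of thumb: peak concurrent executions * (memory in GB / 3 GB).
    Assumptions: 1 GB = 1024 MB, and the result is rounded up to whole ENIs.
    """
    memory_gb = memory_mb / 1024
    return math.ceil(peak_concurrency * memory_gb / 3)


# 1000 concurrent executions at 1536 MB -> 1000 * 1.5 / 3 = 500 ENIs
print(legacy_eni_estimate(1000, 1536))
# The same concurrency at 128 MB -> about 41.7, rounded up to 42 ENIs
print(legacy_eni_estimate(1000, 128))
```

The point of the sketch is the shape of the formula: under the old model, ENI demand grew linearly with both concurrency and memory.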

Also, each elastic network interface (ENI) uses an IP address from your VPC subnets, so you could run out of available IP addresses in a subnet, which prevents you from creating additional resources there.
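The subnet limit comes down to simple arithmetic: AWS reserves five IP addresses in every subnet (the first four and the last one), so a /24 subnet has 251 usable addresses. The sketch below uses Python's standard `ipaddress` module to check whether a given number of ENIs fits; the helper names and the 500-ENI figure are illustrative assumptions, not measurements.

```python
import ipaddress

# AWS reserves the first four and the last IP address of every subnet.
AWS_RESERVED_PER_SUBNET = 5


def usable_ips(cidr: str) -> int:
    """Number of addresses left for ENIs and other resources in a subnet."""
    return ipaddress.ip_network(cidr).num_addresses - AWS_RESERVED_PER_SUBNET


def fits(cidr: str, enis_needed: int, ips_in_use: int = 0) -> bool:
    """True if enis_needed more ENIs (one IP each) fit next to ips_in_use."""
    return enis_needed + ips_in_use <= usable_ips(cidr)


print(usable_ips("10.0.1.0/24"))    # 256 - 5 = 251
print(fits("10.0.1.0/24", 500))     # 500 ENIs do not fit in a /24
print(fits("10.0.0.0/23", 500))     # 512 - 5 = 507, so they fit in a /23
print(fits("10.0.0.0/23", 500, 10)) # ...unless 10 IPs are already taken
```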

Solution?

So what should we do? Should we accept these trade-offs? Should we switch to a different architecture?

I've been there: I did my research, tested it, and none of these problems happen anymore. Many outdated posts still describe the old behavior and create a lot of misconceptions. That's the motivation for this post.

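In 2019, AWS changed how Lambda connects to VPCs, building on Hyperplane, its internal network function virtualization platform (the same technology behind Network Load Balancer and NAT Gateway). This change addresses the drawbacks of putting Lambda functions inside private VPCs!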

But... how?

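At a high level it's pretty simple and interesting, at least if you have some idea of how NAT works in a network. The idea is basically the same: your Lambda functions run in AWS-owned infrastructure, and when they are connected to your VPC, their traffic goes through a NAT-like layer that maps connections from many execution environments onto a shared ENI. Lambda creates one of these Hyperplane ENIs for each unique combination of subnet and security groups, and functions that use the same combination share it. The ENI is created when you create the function or change its VPC settings, not when a new execution environment starts, so the network setup no longer adds to cold starts.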
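The NAT analogy can be illustrated with a toy model. It is NOT how Hyperplane is implemented internally; it only mirrors the behavior described above: one shared ENI per unique subnet and security group combination, plus a translation table that maps each execution environment's connection onto a port of that ENI's single private IP. All class names, IP addresses, and port numbers are hypothetical.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Iterator


@dataclass
class SharedEni:
    """Toy stand-in for one shared ENI: a single private IP in your subnet."""
    private_ip: str
    # (execution environment id, source port) -> translated port on this ENI
    table: dict[tuple[str, int], int] = field(default_factory=dict)
    # Hypothetical port allocator starting at 1024.
    _ports: Iterator[int] = field(init=False, default_factory=lambda: count(1024))

    def translate(self, env_id: str, src_port: int) -> tuple[str, int]:
        """NAT-style mapping: many environments, one IP, distinct ports."""
        key = (env_id, src_port)
        if key not in self.table:
            self.table[key] = next(self._ports)
        return self.private_ip, self.table[key]


class ToyHyperplane:
    """Toy registry: one ENI per unique (subnet, security groups) combination."""

    def __init__(self) -> None:
        self._enis: dict[tuple[str, frozenset[str]], SharedEni] = {}
        self._host_ids = count(10)  # hypothetical IP allocation 10.0.1.10, .11, ...

    def eni_for(self, subnet_id: str, security_group_ids: list[str]) -> SharedEni:
        key = (subnet_id, frozenset(security_group_ids))
        if key not in self._enis:
            self._enis[key] = SharedEni(private_ip=f"10.0.1.{next(self._host_ids)}")
        return self._enis[key]

    def eni_count(self) -> int:
        return len(self._enis)


hp = ToyHyperplane()
eni = hp.eni_for("subnet-a", ["sg-1"])
# Three execution environments share the same ENI, each on its own port.
flows = [eni.translate(f"env-{i}", 40000) for i in range(3)]
same = hp.eni_for("subnet-a", ["sg-1"]) is eni  # same combination -> same ENI
other = hp.eni_for("subnet-a", ["sg-2"])        # new combination -> new ENI
print(flows, same, other.private_ip, hp.eni_count())
```

Notice that adding execution environments only adds rows to the translation table; a new ENI (and a new subnet IP) is needed only for a new subnet and security group combination.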

It feels almost like magic, and it's a very convenient service: the difference in response times between VPC-connected and non-VPC Lambda functions is now almost negligible. I've tested this personally with my own infrastructure, and AWS has also published a comparison with a more detailed explanation.
