Debugging errors in remote Kubernetes environments often takes longer than we'd like, because issues have to be manually reproduced locally with mocks before we can debug them.

Mocking sucks

At ZeroK, every time an issue occurred in our staging environment, we would manually reproduce it in the local development environment to debug. For this, we'd manually set up mocks to emulate the behavior of dependencies or update the local DB. Keeping these mocks up to date was a pain, especially as the dependent services were continuously updated in rapid development cycles.

Sometimes the error would be caused specifically by the behavior of a dependent service and the reproduction would be harder. In these instances, we'd dig through logs to find the specific response or coordinate with the owner of the dependent service to understand the reason, which would further delay debugging.

In the era of Copilot and AutoGPT, this seemed like a colossal waste of developer time. So we asked ourselves: how can we eliminate all this manual developer effort around setting up (and maintaining) mocks? There had to be a simpler solution than all the complex service virtualization tools out there.

Our constraints

We started with a few constraints on what we wanted:

- It had to completely eliminate manual mocking effort in our team

- It had to be free

- It had to scale painlessly as we grew as a team

- It had to be transient and lightweight - e.g., auto-generate the latest mocks when needed, and then delete after. No semi-static or almost-right mocks that need additional overhead to maintain.

Solutions we considered

So we started by asking: what would a perfect solution look like if we could have whatever we wanted?

Ideally, we wanted to tunnel directly into the remote service in staging and debug it with a remote debugger. However, this was not possible, as it would make the service unavailable to other teams using it in that environment.

Next, we considered creating a replica of the entire staging environment just for debugging (call it the staging replica). Then we could connect our remote debugger to the service in the staging replica and debug remotely without affecting the original environment. This would ensure one was always debugging against the latest versions of all services in the original staging environment. But keeping this replica synchronized with actual staging was going to be a challenge. It was also not going to be entirely free, it would surely not scale painlessly, and it was nowhere near lightweight and transient, so we discarded it.

Then we pared down the replica idea further and asked: what if we created a replica of just the single service/pod we want, in the original staging environment, had it retain all the egress connections (so we don't have to mock), and opened it up to developers for remote debugging?

We could build out something like that. It would be free, scale well (since it's only a single pod that's being replicated), and we could make it transient.

So we spent a weekend building out Klone.

Meet Klone – for remote debugging in K8s clusters

Klone is an open-source tool that automatically creates a “remote, isolated clone” of a K8s pod in the same environment, and opens up a port forward tunnel to that clone for the developer to use for remote debugging.

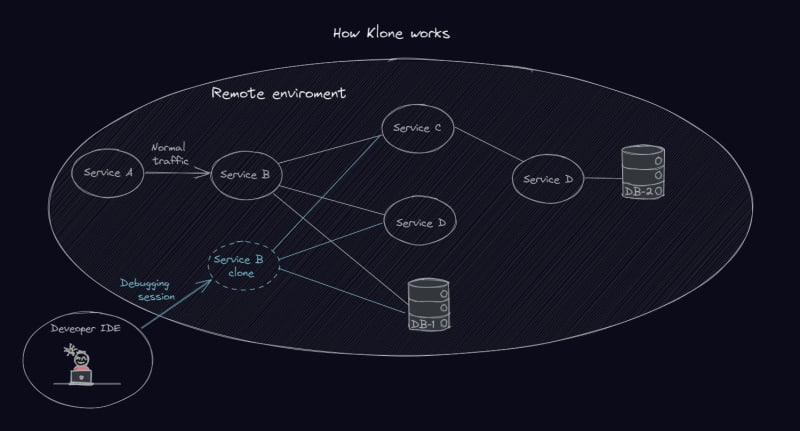

How Klone works

The replica created by Klone is:

- Remote - In the same remote cluster as the original service that needs to be debugged

- Isolated – The replica can only receive requests from the developer's machine (or wherever the port forward is set up). So it is the developer's own version of the service, which they can send traffic to and debug.

- An exact clone - The clone not only replicates the pod and service, but also retains the egress connections to dependent services that the original service had, as well as the exact same configuration and flags.

A developer can establish a direct connection to this clone and debug as though it were on their local machine. Meanwhile, the original service remains untouched and can continue to be used normally in the environment as before.

Through this mechanism, Klone eliminates the need to reproduce an issue locally, along with several manual steps currently involved in debugging, such as setting up mocks/stubs.

How Klone works

Here's specifically what Klone does under the hood.

- Klone creates a replica of a deployment pod in the same environment as the original

- Klone creates a target service for the pod

- The replica retains all the egress connections of the original service/pod

- The replica also retains all the other characteristics of the original service, including the resourcing, configurations and flags

- Klone creates a new port forward tunnel to access that pod. This is the only way this pod is different from the original.

- Using this port forward tunnel, developers can directly send requests to the service, or attach their local IDE to the pod in debug mode and do remote debugging
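To make the steps above concrete, here is a minimal sketch of how a clone manifest could be derived from an existing pod manifest. This is not Klone's actual implementation: the function name `make_clone_manifest` and the label key `klone/clone-of` are hypothetical, and the sketch assumes egress is not gated by label-based network policies. It copies the spec unchanged, so configuration and flags carry over, and swaps the labels so that existing Service selectors do not route shared traffic to the clone.

```python
import copy

# Hypothetical label key used to mark clones; not taken from Klone's source.
CLONE_LABEL = "klone/clone-of"


def make_clone_manifest(pod: dict, clone_name: str = "klone-pod") -> dict:
    """Return a new Pod manifest derived from an existing one."""
    original_name = pod["metadata"]["name"]
    clone = {
        "apiVersion": "v1",
        "kind": "Pod",
        # Fresh metadata: server-populated fields such as uid,
        # resourceVersion and ownerReferences are intentionally not copied.
        "metadata": {
            "name": clone_name,
            "namespace": pod["metadata"].get("namespace", "default"),
            # Replace the original labels so existing Service selectors
            # do not match the clone and send it shared traffic.
            "labels": {CLONE_LABEL: original_name},
        },
        # Deep-copy the spec: containers, env, args, resources and volumes
        # stay identical to the original pod.
        "spec": copy.deepcopy(pod["spec"]),
    }
    # Let the scheduler place the clone instead of pinning it to the
    # node the original pod happens to run on.
    clone["spec"].pop("nodeName", None)
    return clone
```

Because the metadata is rebuilt rather than copied, the clone is not owned by the original ReplicaSet, which is consistent with the clone being independent of the original deployment.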

Setting up and using Klone in 5 minutes

Klone is intended to be set up in a few minutes. Once installed, Klone has three simple commands:

- To duplicate: kubectl klone duplicate pod <pod-name>

- To connect: kubectl klone connect service klone-pod

- To delete/clean up: kubectl klone clean

See below for detailed instructions on how to install and use Klone on your cluster.

Step 1: Install Klone

To install Klone on your cluster, find the repo at https://github.com/zerok-ai/kubectl-klone and follow the installation instructions (5 minutes).

Note that the installation requires that you have kubectl installed already and that you have access to the cluster where you're trying to create the replica.

After installing, to confirm that the installation succeeded, run the following command:

kubectl klone

Once you run the command, you should see something like the screen below.

Screenshot - Verification of successful Klone installation

This indicates that Klone is installed and displays the available commands you can use with Klone.

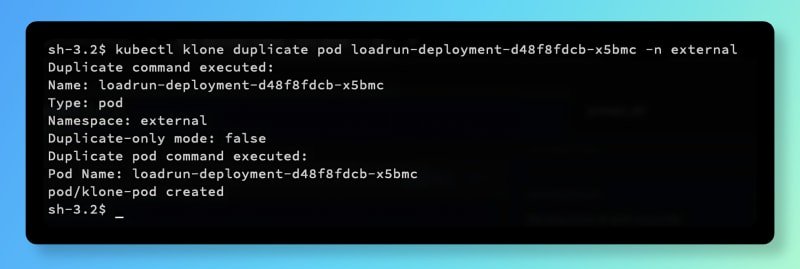
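If you want to script the prerequisite check, a small helper like the one below can report which tools are missing from your PATH. It is a sketch under one assumption: kubectl discovers plugins as executables named `kubectl-<name>` on the PATH, so we assume the plugin binary is called `kubectl-klone` (inferred from the repo name, not confirmed by the installation instructions). The function name `missing_tools` is ours.

```python
import shutil


def missing_tools(tools=("kubectl", "kubectl-klone")):
    """Return the names from `tools` that are not found on PATH."""
    # shutil.which returns None when no matching executable is found.
    return [tool for tool in tools if shutil.which(tool) is None]
```

An empty list means both executables were found; anything else names what still needs installing.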

Step 2: Duplicate pod

Now that Klone is installed, create a replica of your pod by running the following command:

kubectl klone duplicate pod <pod-name> -n external

Here <pod-name> is the name of the pod you wish to replicate, and -n external specifies the namespace the pod runs in (external in this example).

Once you run it, here's what you should see:

Screenshot - Duplicate pod through Klone

This has created a copy of the pod specified, in the same namespace and with the same egress connections and configurations.

This duplicate pod is automatically named klone-pod.

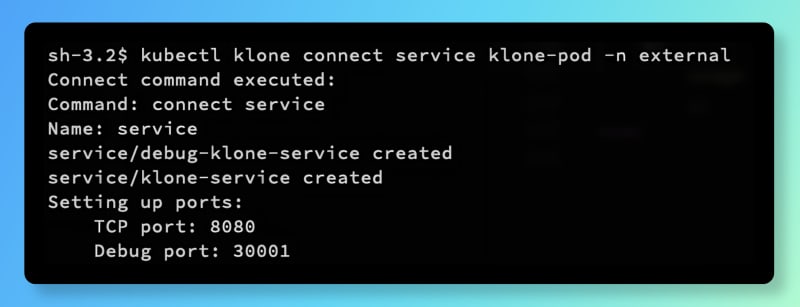

Step 3: Connect to pod

Now that the duplicate is created, establish a connection to it by running the following command:

kubectl klone connect service klone-pod -n external

You should see something like this:

Screenshot - Connect to duplicate pod with Klone

The output lists the ports on the duplicate pod that you can connect to: both the TCP port and the debug port.

To send a request using curl or Postman, use the TCP port. To debug, connect your IDE to the debug port. From there you can debug directly.

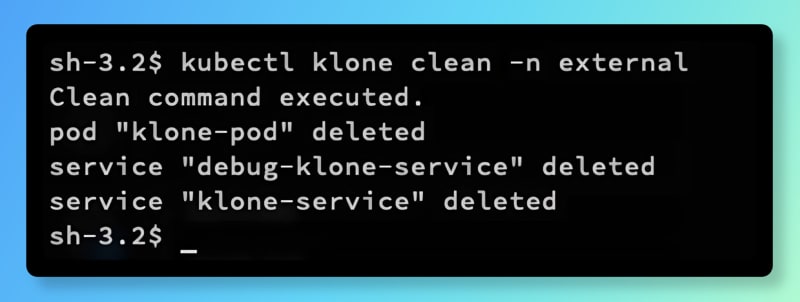
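Before pointing curl or your IDE at the tunnel, it can help to confirm that the forwarded port is actually accepting connections. Here is a minimal sketch using only the Python standard library. The helper name `wait_for_port` is ours, and the port number to pass in is whatever the connect command printed for you.

```python
import socket
import time


def wait_for_port(port: int, host: str = "127.0.0.1", timeout: float = 10.0) -> bool:
    """Poll until a TCP connection to host:port succeeds or `timeout` elapses."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means something is listening on the port.
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            # Not listening yet (or refused); retry shortly.
            time.sleep(0.2)
    return False
```

For example, `wait_for_port(8080)` returns `True` as soon as a local listener on port 8080 accepts a connection, and `False` if nothing is listening after ten seconds. The port 8080 here is only a placeholder.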

Step 4: Delete pod after use

Once you're done debugging, delete the pod by running the following command:

kubectl klone clean -n external

You should see the following screen, which indicates you're all done.

Screenshot - Delete Klone duplicate pod after debugging

In summary, using Klone, you can create a replica of a pod in a remote cluster, connect to it and debug directly, just as you would with a remote debugger, and then delete the duplicate pod, all in a few minutes.
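Since cleanup is manual, one option is to wrap the three commands from Steps 2 to 4 in a small script that always runs the clean step, even if the session fails or is interrupted. The sketch below uses only the command lines shown above. The function names are ours, and it assumes the connect command stays in the foreground while the tunnel is open, which we have not verified for every setup.

```python
import subprocess


def klone_commands(pod_name: str, namespace: str) -> dict:
    """Build the three command lines shown in Steps 2 to 4."""
    base = ["kubectl", "klone"]
    ns = ["-n", namespace]
    return {
        "duplicate": base + ["duplicate", "pod", pod_name] + ns,
        "connect": base + ["connect", "service", "klone-pod"] + ns,
        "clean": base + ["clean"] + ns,
    }


def debug_session(pod_name: str, namespace: str, run=subprocess.run) -> None:
    """Duplicate, connect, and always clean up, even on Ctrl-C or failure."""
    cmds = klone_commands(pod_name, namespace)
    try:
        run(cmds["duplicate"], check=True)
        # Assumption: `connect` blocks while the port forward is open.
        run(cmds["connect"], check=True)
    finally:
        # Runs on normal exit, on errors, and on KeyboardInterrupt.
        run(cmds["clean"], check=False)
```

Calling `debug_session("<pod-name>", "external")` would then run the same three commands as the manual walkthrough. The `run` parameter exists so the flow can be exercised with a stand-in instead of a real cluster.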

Where can Klone be used?

Klone is language-agnostic and can be used with services written in any language.

Klone can be easily used in staging, QA and pre-production environments.

While Klone can also be used in production environments, note that the egress connections to dependent services are retained in the replica. So if you use it in production, ensure that the requests sent to the replica pod are for test accounts and will not affect real production data.

Overhead

Given the pod is spun up for a short period for the purpose of debugging only, the resourcing and performance overhead are both expected to be negligible. Also, since the replica pod is independent of the original deployment, any scaling action will not include/affect it.

At scale, if several developers use the tool at the same time, each spins up a pod for debugging, which adds resource overhead in the environment where Klone is used. However, it is unlikely that one developer is debugging more than one service at a time, so the overhead is capped by the number of developers debugging at any point in time (number of developers debugging in the same environment at that time × 1 pod per developer).
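As a worked example of that cap, the sketch below multiplies the number of developers debugging at once by the resources of one clone. The function name and the per-pod figures are hypothetical, chosen only to illustrate the arithmetic.

```python
def debug_overhead(developers_debugging: int,
                   cpu_millicores_per_pod: int,
                   memory_mib_per_pod: int) -> dict:
    """Upper bound on extra resources, assuming one clone per developer."""
    pods = developers_debugging * 1  # 1 pod per developer
    return {
        "pods": pods,
        "cpu_millicores": pods * cpu_millicores_per_pod,
        "memory_mib": pods * memory_mib_per_pod,
    }


# Hypothetical numbers: 5 developers, each clone sized at 500m CPU / 512 MiB.
print(debug_overhead(5, 500, 512))
# → {'pods': 5, 'cpu_millicores': 2500, 'memory_mib': 2560}
```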

Future improvements

We plan to add several improvements to Klone to build on this basic version. Here's what is coming up next:

Naming of duplicate pods

As you've noticed by now, the duplicate Klone creates is automatically named "klone-pod". This restricts usage of Klone to just one person in a namespace at a time. We're a small team, so we can get by with this for now. In the future, we plan to introduce a feature to name the replica pod based on what the developer wants, which would allow multiple replica pods to be created and used at the same time by different developers within the same namespace.
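One possible shape for this feature, sketched here as an assumption rather than a committed design, is to derive the clone's name from the developer and the original pod. Since Klone also creates a service for the clone, the sketch keeps names within the stricter Kubernetes DNS label rules: lowercase alphanumerics and hyphens, at most 63 characters, starting with a letter and ending with an alphanumeric. The function name `clone_name` and the `klone` prefix are hypothetical.

```python
import re

MAX_NAME_LEN = 63  # length limit for Kubernetes names that must be DNS labels


def clone_name(developer: str, pod_name: str, prefix: str = "klone") -> str:
    """Build a per-developer clone name that is a valid DNS label."""
    raw = f"{prefix}-{developer}-{pod_name}".lower()
    # Replace every run of disallowed characters with a single hyphen.
    cleaned = re.sub(r"[^a-z0-9]+", "-", raw)
    # Truncate, then strip hyphens so the name ends with an alphanumeric.
    return cleaned[:MAX_NAME_LEN].strip("-")


print(clone_name("Alice.Smith", "orders-7d9f"))
# → klone-alice-smith-orders-7d9f
```

Truncation can make two very long names collide, so a real implementation would probably append a short hash or check for an existing pod first.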

Automatic deletion of duplicate pods

Currently, deletion of the replica pod is manual: after use, it is up to the developer to remember to delete the pod. If forgotten, there is a risk of several ghost replica pods lying around unused and using up resources. In the future, we plan to add a feature to automatically delete pods a set period after creation (e.g., a 48-hour TTL), or to show a list of live pods created by Klone for cleanup.
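The TTL check itself is simple to sketch. The function below takes pod names with their creation timestamps, in the RFC 3339 UTC form that Kubernetes reports, and returns the ones older than the TTL. It illustrates the idea only, not the planned implementation; the function name and the input shape are our assumptions.

```python
from datetime import datetime, timedelta, timezone


def expired_pods(pods, ttl=timedelta(hours=48), now=None):
    """Return the names of pods whose age exceeds `ttl`.

    `pods` is an iterable of (name, creation_timestamp) pairs, with
    timestamps formatted like "2023-05-01T12:00:00Z".
    """
    now = now or datetime.now(timezone.utc)
    expired = []
    for name, created in pods:
        # Parse the UTC timestamp and make it timezone-aware.
        created_at = datetime.strptime(created, "%Y-%m-%dT%H:%M:%SZ")
        created_at = created_at.replace(tzinfo=timezone.utc)
        if now - created_at > ttl:
            expired.append(name)
    return expired
```

A cleanup job could feed this the clones it finds in a namespace and delete whatever comes back, or simply print the list for the developer to review.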

Configuration flexibility of duplicate pods

Currently, the duplicate pod has exactly the same configuration as the original (including CPU and memory). This could be made flexible, so the developer can give the duplicate pod a smaller size that is still sufficient for the debugging use case.
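As a rough sketch of what such an override might look like, the helper below takes a pod spec and returns a copy with smaller resource requests and limits on every container. The function name and the default sizes are hypothetical, and this is not an existing Klone option.

```python
import copy


def shrink_resources(pod_spec: dict, cpu: str = "250m", memory: str = "256Mi") -> dict:
    """Return a copy of `pod_spec` with each container's resources overridden."""
    spec = copy.deepcopy(pod_spec)  # leave the original spec untouched
    for container in spec.get("containers", []):
        container["resources"] = {
            "requests": {"cpu": cpu, "memory": memory},
            "limits": {"cpu": cpu, "memory": memory},
        }
    return spec
```

Everything else in the spec (image, environment, arguments) stays as it was, so only the size of the clone changes.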

Replicating several connected pods in one go

Klone currently creates a replica of one pod/service. Over time, this could be extended to replicate a group of interconnected pods/services, à la environment-as-a-service, with the flexibility to specify different egresses, to enable multi-service debugging.

Summary

Overall, Klone is a simple open-source tool that creates an isolated duplicate of a target service's pod, which developers can connect to directly and debug.

Klone removes the need to reproduce errors locally by manually setting up mocks. At ZeroK, using Klone has eliminated all developer mocking effort for us and saved us an estimated 10% of developer bandwidth.

Access the Klone repo here (https://github.com/zerok-ai/kubectl-klone) and leave suggestions for improvements you'd like in the future.
