This post was originally published on monades.roperzh.com

I truly enjoy listening to Carl Hewitt talk about computers, and something he repeats often is “concurrency is not parallelism”. For me, there was no real difference, and honestly, I had never bothered to dig into it.

Last week, I stumbled upon Rob Pike’s talk on the subject, which led me to finally do some research on the matter. Here are my findings.

Beware: as with most things in life, a lot of people think there’s no real difference between the two.

Concurrency

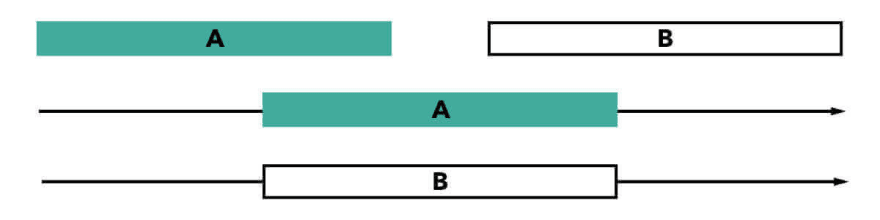

Concurrency is all about doing tasks in the same interval of time. The important detail here is that the tasks don’t necessarily execute at the same time, but they can be divided into smaller tasks that can be interleaved.

A good place to see concurrency is the kitchen: imagine a single chef cutting lettuce while also checking on something in the oven from time to time. He has to stop cutting, check the oven, stop checking the oven, start cutting again, and repeat the process until everything is done.

As you can see, concurrency is mostly about logistics: without it, the chef would have to wait until the meat in the oven is ready before cutting the lettuce.
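To make the single-chef picture a bit more concrete, here is a minimal sketch in Python using `asyncio`. The function names (`cut_lettuce`, `check_oven`, `run_single_chef`) are made up for this illustration, and `asyncio.sleep(0)` stands in for the chef putting one task down so the other can continue. Everything runs on one thread, so nothing here happens at the same time.

```python
import asyncio


async def cut_lettuce(log, leaves):
    # Hypothetical task: cut one leaf, then hand control back to the event loop.
    for i in range(1, leaves + 1):
        log.append(f"cut leaf {i}")
        await asyncio.sleep(0)  # the chef puts the knife down for a moment


async def check_oven(log, checks):
    # Hypothetical task: one oven check, then hand control back.
    for i in range(1, checks + 1):
        log.append(f"check oven {i}")
        await asyncio.sleep(0)  # the chef walks back to the cutting board


async def single_chef(log):
    # One chef (one thread) makes progress on both tasks by interleaving them.
    await asyncio.gather(cut_lettuce(log, 3), check_oven(log, 3))


def run_single_chef():
    log = []
    asyncio.run(single_chef(log))
    return log


print(run_single_chef())
```

The printed list alternates between cutting and checking: both tasks advance within the same interval of time, yet only one of them is ever running at any given instant.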

Parallelism

Parallelism, on the other hand, is about doing tasks literally at the same time: as the name implies, they are executed in parallel.

Back in the kitchen, we now have two chefs: one takes care of the oven while the other cuts the lettuce. We split the work at the expense of having another chef.
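The two-chef kitchen can be sketched in Python with `concurrent.futures.ProcessPoolExecutor`, where each worker process plays the role of a chef. The names `cut_lettuce`, `watch_oven` and `two_chefs` are hypothetical, and this is only an illustration under one assumption: the machine has at least two cores available. Whether the two tasks really overlap in time is up to the operating system's scheduler.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def cut_lettuce(leaves):
    # Hypothetical task; returns a description and the process that did it.
    return (f"cut {leaves} leaves", os.getpid())


def watch_oven(checks):
    # Hypothetical task; returns a description and the process that did it.
    return (f"checked oven {checks} times", os.getpid())


def two_chefs():
    # Two worker processes: each one can pick up a task, so the tasks
    # may run at the same time on different cores.
    with ProcessPoolExecutor(max_workers=2) as kitchen:
        lettuce = kitchen.submit(cut_lettuce, 3)
        oven = kitchen.submit(watch_oven, 3)
        return lettuce.result(), oven.result()


if __name__ == "__main__":
    for description, pid in two_chefs():
        print(f"{description} (process {pid})")
```

The extra worker process is the second chef: the work is split, but we pay for it with the cost of starting and coordinating another process.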

Parallelism is a subclass of concurrency: before you execute multiple tasks simultaneously, you first have to manage multiple tasks.

Sources

- Brave Clojure: The Sacred Art of Concurrent and Parallel Programming

- Haskell Wiki

- Rob Pike’s talk

Bonus

If you have time, take a look at this humorous exchange between Carl Hewitt and a Wikipedia moderator about concurrency vs parallelism.
