Solution:

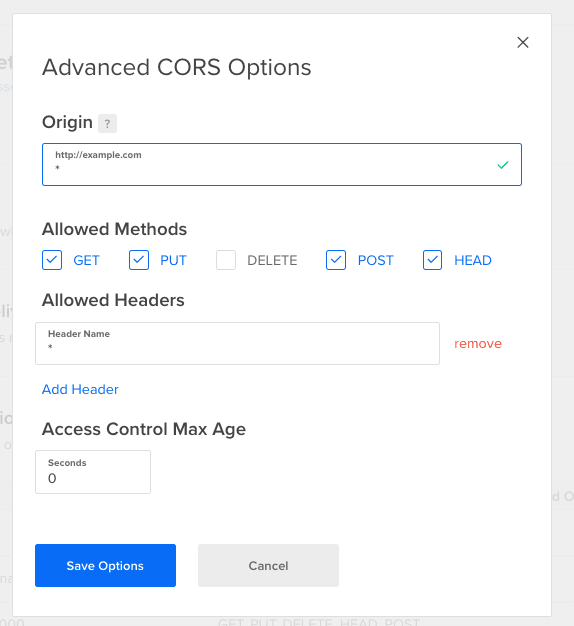

What I actually had to do was allow custom headers (such as `x-amz-acl`) to be sent with the PUT request. This is done in the DigitalOcean control panel, in the CORS settings of your Space.
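If you would rather script the CORS change than click through the control panel, here is a minimal sketch that builds a similar rule for the S3 `PutBucketCors` call in aws-sdk v2. It assumes that Spaces accepts this call through its S3-compatible API, and that the headers to allow are `x-amz-acl` and `Content-Type`, the two the upload further down sends. `buildCorsParams` and `applyCors` are hypothetical helper names, not part of the original code.

```javascript
// Sketch (assumption): configure Spaces CORS through the S3-compatible API
// instead of the control panel. Helper names are hypothetical.

// Build PutBucketCors parameters that let a browser PUT to a presigned URL
// while sending the x-amz-acl and Content-Type headers.
function buildCorsParams(bucket, origins) {
  return {
    Bucket: bucket,
    CORSConfiguration: {
      CORSRules: [
        {
          AllowedOrigins: origins,
          AllowedMethods: ['GET', 'PUT'],
          // PUT with custom headers triggers a CORS preflight; if these headers
          // are not allowed, the browser blocks the upload before it is sent.
          AllowedHeaders: ['x-amz-acl', 'content-type'],
          MaxAgeSeconds: 3000,
        },
      ],
    },
  };
}

// Apply the rule with an existing aws-sdk v2 S3 client, e.g. the `s3`
// object configured with the Spaces endpoint.
async function applyCors(s3, bucket, origins) {
  return s3.putBucketCors(buildCorsParams(bucket, origins)).promise();
}
```

For local development you would add your dev server's origin to the list, for example `applyCors(s3, 'BUCKET', ['https://your-site.example', 'http://localhost:8080'])`, where `http://localhost:8080` is the Vue CLI default and `https://your-site.example` is a placeholder; adjust both to your setup.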

I am trying to upload assets with the `getSignedUrl` method that the aws-sdk provides, using a NodeJS backend and Axios, with the upload happening from a VueJS 2 frontend. Whatever combination I try, either the file uploads but is always set to private with the wrong Content-Type, or I get 403 permission errors.

If I leave out all the ACL and ContentType settings, the file shows up in the Spaces interface as private.

This is how it should look, and what I get when I upload manually through the interface:

What I want is for the upload to have a `public-read` ACL and the correct Content-Type.

This is the code I use.

To get a signed URL:

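```javascript
const AWS = require('aws-sdk'); // aws-sdk v2

// `keys` holds your Spaces credentials and `app` is your Express app (neither shown here).
const s3 = new AWS.S3({
  accessKeyId: keys.spacesAccessKeyId,
  secretAccessKey: keys.spacesSecretAccessKey,
  signatureVersion: 'v4',
  endpoint: 'https://REGION.digitaloceanspaces.com',
  region: 'REGION'
});

app.get('/api/upload/image', (req, res) => {
  const type = req.query.type;
  // Placeholder key; the example URL below uses a key like images/<uuid>.png
  const key = 'random-key';

  s3.getSignedUrl('putObject', {
    Bucket: 'BUCKET',
    ContentType: type,
    ACL: 'public-read', // signed as x-amz-acl, so the upload must send this header too
    Key: key
  }, (error, url) => {
    if (error) {
      console.log(error);
      // Stop here instead of sending an undefined URL to the client
      return res.status(500).send({ error: 'Could not create signed URL' });
    }

    console.log('KEY:', key);
    console.log('URL:', url);
    res.send({ key, url });
  });
});
```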

This returns a signed URL that looks like:

https://BUCKET.REGION.digitaloceanspaces.com/images/e173ca50-fafe-11e9-b18c-d3cfd4814069.png?Content-Type=image%2Fpng&X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Credential=NSX3EZOFXE57BAFSKDIL%2F20191030%2Ffra1%2Fs3%2Faws4_request&X-Amz-Date=20191030T102022Z&X-Amz-Expires=900&X-Amz-Signature=f6bcdcd35da5dc237f881a714ec9ca09cb7aa3f3ffbd2389648a7c30808ab56e&X-Amz-SignedHeaders=host%3Bx-amz-acl&x-amz-acl=public-read
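The `X-Amz-SignedHeaders=host;x-amz-acl` parameter in that URL is the important part: with Signature V4, every header listed there is part of the signature, so the PUT has to send `x-amz-acl` with the same value or the request is rejected. Here is a small sketch for inspecting a presigned URL using only the built-in `URL` API; `inspectSignedUrl` is a hypothetical helper for debugging, not something the aws-sdk provides.

```javascript
// Sketch: list what a presigned PUT URL expects the client to send.
// Uses only the WHATWG URL API built into Node.js and browsers.
function inspectSignedUrl(signedUrl) {
  // searchParams.get() returns percent-decoded values (%3B -> ';', %2F -> '/')
  const params = new URL(signedUrl).searchParams;
  return {
    // Headers covered by the signature; each must be sent with the signed value
    signedHeaders: (params.get('X-Amz-SignedHeaders') || '')
      .split(';')
      .filter(Boolean),
    acl: params.get('x-amz-acl'),
    contentType: params.get('Content-Type'),
    expiresInSeconds: Number(params.get('X-Amz-Expires')),
  };
}
```

For the URL above, this gives `signedHeaders: ['host', 'x-amz-acl']`, `acl: 'public-read'`, `contentType: 'image/png'` and `expiresInSeconds: 900`. The browser sets `host` itself, which leaves `x-amz-acl` as the header the upload code must add.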

Then, to actually upload the file, I use the following:

```javascript
await UploadService.uploadImage(uploadConfig.data.url, file, {
  'x-amz-acl': 'public-read',
  'Content-Type': file.type
});
```

The service looks like this:

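```javascript
async uploadImage (url, file, headers) {
  // `headers` is already the plain header object, so pass it under axios's
  // `headers` config key (headers.headers would be undefined and send nothing)
  await axios.put(url, file, { headers });
}
```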
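To keep the client from drifting out of sync with what the server signed, one option is to derive the axios config from the presigned URL itself. This is a minimal sketch under the assumption that the ACL is present as the `x-amz-acl` query parameter, as in the example URL above; `buildUploadConfig` is a hypothetical helper, not part of the original code or of axios.

```javascript
// Sketch (hypothetical helper): build the axios request config for the PUT
// from the presigned URL, so x-amz-acl always matches the signed value.
function buildUploadConfig(signedUrl, fileType) {
  const params = new URL(signedUrl).searchParams;
  const headers = { 'Content-Type': fileType };

  const acl = params.get('x-amz-acl');
  if (acl) {
    // Signed header: must be sent with exactly this value
    headers['x-amz-acl'] = acl;
  }

  // axios expects request headers under the `headers` key of its config
  return { headers };
}
```

It would be used as `await axios.put(url, file, buildUploadConfig(url, file.type));`. If the server ever signs a different ACL, the client picks it up automatically instead of relying on a hard-coded `'public-read'`.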

Disclaimer: this works with S3 itself, since there you can set permissions at the bucket level and don't have to worry about per-object ACLs. But Spaces provides a CDN as well and is generally cheaper. :)

Top comments (4)

LIFESAVER! Thank you, thank you for posting this! I was going round and round with different ways to upload and losing my mind. I didn't realize I had to set the public ACL header both when generating the URL and when uploading.

Working well for me now when deployed.

Now if I could just figure out how to get around the DigitalOcean CORS config when on localhost...

Were you ever able to get this working on localhost?

On my side I had already tried everything; what fixed it was just this setting on the Space.

I couldn't get it working with the following code. :(

gist.github.com/Yasir5247/dfe584c3...