Introduction: Embracing Red Hat’s Journey

Being a part of Red Hat has also meant becoming a member of the expansive community that contributes to open source — a grand giveaway program that defines not only the ethos of Red Hat but also a significant part of my professional journey. The past year has been thrilling and filled with excitement, challenges, and learning. Red Hat has not only offered me a flexible and enriching work environment but also enabled me to live life to the fullest.

We’ve had our ups and downs, like the brief wave of layoffs that shook us, but the resilience and determination of my colleagues have been awe-inspiring. The highs and lows, the phases of acceptance and resistance, have all shaped the journey. Red Hat associates bounced back with renewed motivation and vigour, and contributions are back on the upswing, a reminder that every organization goes through good and bad phases.

And speaking of contributions, let me tell you about the work itself. The problems I’ve been assigned are always new and challenging. But what truly sets Red Hat apart is the support system. It feels like everyone, whether inside or outside my team, is always ready to lend a hand.

So, now that you know a bit about my journey, let’s dive into one of those intriguing problems I faced, and the creative solution I stumbled upon. Trust me, it’s a good one!

The Challenge: A Need for Secure Communication

My work on Red Hat Appstudio (enterprise version: [Red Hat Trusted Application Pipeline, or RHTAP](https://developers.redhat.com/articles/2023/07/18/introduction-red-hat-trusted-application-pipeline)), specifically on Tekton Results, one of its key components, presented me with a challenge. The task was to expose Tekton Results metrics over HTTPS, even though the code only supported serving them over HTTP.

I needed the metrics to be encrypted in transit within the cluster using HTTPS. This was crucial because Prometheus ServiceMonitors generally expect HTTPS endpoints, but Tekton Results only supported HTTP.

The urgency of the requirement for Appstudio meant we couldn’t wait for the upstream Tekton Results to make the change. That’s when my seniors suggested an alternative: kube-rbac-proxy.

Why kube-rbac-proxy?

While it was possible to secure the /metrics endpoint with HTTPS using an OpenShift Route, that alone wouldn’t have addressed our needs. We were defining a ServiceMonitor to scrape this endpoint, so we needed a solution that not only encrypted the data but also enforced authorization. HTTPS by itself would only have secured the transmission; kube-rbac-proxy added a layer of RBAC authorization on top. This ensured that Prometheus could securely access the metrics while anyone without the right permissions was turned away. That dual need, encryption plus strict authorization, is what drove the decision to use kube-rbac-proxy.

The Solution: Kube-RBAC-Proxy Explained

Prerequisites:

Familiarity with Kubernetes concepts such as RBAC, the sidecar container pattern, and pod-to-pod communication.

When it comes to security in Kubernetes, role-based access control (RBAC) often takes centre stage. RBAC empowers us to declaratively define roles, specifying the actions an entity can perform. This structure provides a robust mechanism to safeguard data and resources within Kubernetes.
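To make "declaratively define roles" concrete, here is a minimal sketch of a role that grants read access to a /metrics path, written as a Python dict that mirrors the usual YAML manifest. The role name `metrics-reader` and the helper `role_allows` are made up for illustration, and the check is a deliberately simplified stand-in for what the API server does (it ignores bindings, wildcards, and resource rules).

```python
# Hypothetical ClusterRole, expressed as a Python dict mirroring the YAML manifest.
metrics_reader_role = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "ClusterRole",
    "metadata": {"name": "metrics-reader"},  # name chosen for illustration only
    "rules": [
        # Grants the "get" verb on the non-resource URL /metrics.
        {"nonResourceURLs": ["/metrics"], "verbs": ["get"]},
    ],
}


def role_allows(role: dict, verb: str, path: str) -> bool:
    """Simplified check: does any rule grant `verb` on the non-resource `path`?

    Real RBAC evaluation happens inside the Kubernetes API server and also
    handles role bindings, wildcards, and resource rules; none of that is
    modelled here.
    """
    for rule in role.get("rules", []):
        if verb in rule.get("verbs", []) and path in rule.get("nonResourceURLs", []):
            return True
    return False


if __name__ == "__main__":
    print(role_allows(metrics_reader_role, "get", "/metrics"))
    print(role_allows(metrics_reader_role, "post", "/metrics"))
```

A role like this does nothing until it is bound to a subject, for example the service account that Prometheus runs as.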

The Kubernetes API is instrumental in this process, verifying user information and determining whether an entity is authorized to access specific paths. This ensures secure communication between components, and behind the scenes, the system itself relies on RBAC.

Enter kube-rbac-proxy: a small but potent HTTP proxy designed to perform RBAC authorization against the Kubernetes API using SubjectAccessReview. It can act as a bridge between your service and the outside world, ensuring that only authorized entities can access specific metrics.

This proxy is intended to be a sidecar that accepts incoming HTTP requests. This way, one can ensure that a request is truly authorized, instead of being able to access an application simply because an entity has network access to it.
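As a rough sketch of the sidecar idea, the helper below appends a kube-rbac-proxy container to a pod spec represented as a Python dict. The function name, the ports, and the image string are assumptions made for illustration; `--secure-listen-address` and `--upstream` are the proxy's flags for the HTTPS listener and the plain-HTTP application behind it. TLS certificate settings are left out to keep the sketch short.

```python
import copy


def add_rbac_proxy_sidecar(pod_spec: dict, app_port: int = 8080, proxy_port: int = 8443) -> dict:
    """Return a copy of `pod_spec` with a kube-rbac-proxy sidecar appended.

    Hypothetical helper: ports and image are placeholders, not values taken
    from any real deployment.
    """
    patched = copy.deepcopy(pod_spec)  # leave the caller's spec untouched
    sidecar = {
        "name": "kube-rbac-proxy",
        "image": "example.registry/kube-rbac-proxy:TAG",  # placeholder image reference
        "args": [
            # The proxy terminates HTTPS on this address...
            f"--secure-listen-address=0.0.0.0:{proxy_port}",
            # ...and forwards authorized requests to the app over loopback HTTP.
            f"--upstream=http://127.0.0.1:{app_port}/",
        ],
        "ports": [{"name": "https", "containerPort": proxy_port}],
    }
    patched.setdefault("containers", []).append(sidecar)
    return patched


if __name__ == "__main__":
    pod = {"containers": [{"name": "app", "image": "example.registry/app:TAG"}]}
    for container in add_rbac_proxy_sidecar(pod)["containers"]:
        print(container["name"])
```

For the sidecar to be the only way in, the application itself should listen on 127.0.0.1 rather than on all interfaces, so that the plain-HTTP port is not reachable from other pods.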

How Kube-RBAC-Proxy Operates

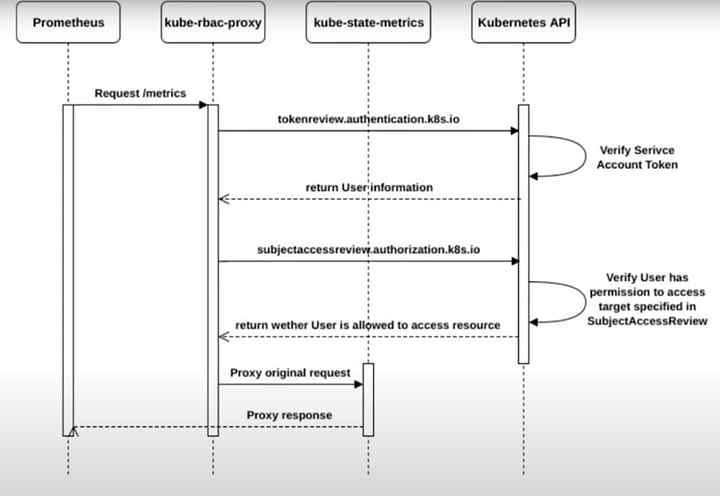

The functioning of kube-rbac-proxy is akin to the authentication and authorization mechanisms in the Kubernetes API server and kubelet. It employs RBAC to manage authorization, specifically through subject access review API calls.

Here’s a summary of how kube-rbac-proxy operates:

Authentication: It figures out the user performing the request, validating client TLS certificates or performing a TokenReview in the case of bearer tokens.

Authorization: Using the authorization.k8s.io API, it performs a SubjectAccessReview to verify that the authenticated user has the necessary RBAC permissions.

Note: The diagram is taken from Frederic Branczyk’s lightning talk, linked under References.
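The two steps above can be sketched in a few lines of Python. This is not kube-rbac-proxy's actual code, only an illustration of the flow for bearer tokens: the request bodies follow the TokenReview and SubjectAccessReview object shapes, while `handle_request`, `fake_api`, the token value, and the user name are all hypothetical. The `api` argument stands in for a call to the Kubernetes API server.

```python
from typing import Callable, Optional


def token_review(token: str) -> dict:
    """Body of a TokenReview: asks the API server who owns this bearer token."""
    return {
        "apiVersion": "authentication.k8s.io/v1",
        "kind": "TokenReview",
        "spec": {"token": token},
    }


def subject_access_review(user: str, groups: list, verb: str, path: str) -> dict:
    """Body of a SubjectAccessReview for a non-resource URL such as /metrics."""
    return {
        "apiVersion": "authorization.k8s.io/v1",
        "kind": "SubjectAccessReview",
        "spec": {
            "user": user,
            "groups": groups,
            "nonResourceAttributes": {"path": path, "verb": verb},
        },
    }


def handle_request(token: Optional[str], verb: str, path: str,
                   api: Callable[[dict], dict]) -> int:
    """Return the HTTP status a proxy following this flow would answer with."""
    if not token:
        return 401  # no credentials presented
    status = api(token_review(token)).get("status", {})
    if not status.get("authenticated"):
        return 401  # authentication failed
    user = status.get("user", {})
    review = subject_access_review(user.get("username", ""), user.get("groups", []), verb, path)
    if not api(review).get("status", {}).get("allowed"):
        return 403  # authenticated, but RBAC does not permit this request
    return 200  # a real proxy would now forward the request to the upstream app


def fake_api(obj: dict) -> dict:
    """Stand-in for the Kubernetes API: knows one token and one permission."""
    if obj["kind"] == "TokenReview":
        ok = obj["spec"]["token"] == "prometheus-token"
        return {"status": {"authenticated": ok,
                           "user": {"username": "prometheus", "groups": []}}}
    attrs = obj["spec"]["nonResourceAttributes"]
    allowed = obj["spec"]["user"] == "prometheus" and attrs == {"path": "/metrics", "verb": "get"}
    return {"status": {"allowed": allowed}}


if __name__ == "__main__":
    print(handle_request("prometheus-token", "get", "/metrics", fake_api))
    print(handle_request("wrong-token", "get", "/metrics", fake_api))
```

The point of the split is that a valid identity alone is not enough: a caller that authenticates successfully but lacks the RBAC permission still gets rejected, just with a different status.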

Implementing Kube-RBAC-Proxy

For a hands-on walkthrough, refer to this example, which shows a Prometheus example app protecting its /metrics endpoint.

At Red Hat, kube-rbac-proxy is widely used across projects, including Spray Proxy and, of course, Tekton Results.

Reflection on Security and Innovation

Kube-rbac-proxy, with its clever utilization of RBAC, provides a robust solution for securing services, reinforcing the importance of security and authorization in the modern cloud-native environment.

The example shared and the adoption of kube-rbac-proxy within Red Hat projects underline its effectiveness and flexibility.

References

Lightning Talk: I Got your RBAC — kube-rbac-proxy — Frederic Branczyk, CoreOS (Any Skill Level)
