The fallacies of distributed computing (or distributed systems) are a collection of eight statements made by L. Peter Deutsch and others at Sun Microsystems about the false assumptions that people new to developing distributed applications make. Here's the list of the eight fallacies:

- Network is reliable

- Latency is zero

- Infinite bandwidth

- Network is secure

- Topology does not change

- There is one administrator

- Transport cost is zero

- Network is homogeneous

I'll go through each one of them and explain them in more detail.

1. Network is reliable

If you compare how networks used to be ten or twenty years ago, you could say that networks have become more reliable. However, you can't say that they are and will always be fully reliable.

Power failures take out network equipment, hardware fails, attacks happen, signals are weak or drop entirely, and so on. Developers often assume that the network is reliable and write their applications accordingly. Instead, you should write your applications so that they expect the network to fail - one way to do that is to retry failing calls (assuming they can and should be retried).
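To make the retry idea concrete, here is a minimal sketch in Python. The `TransientError` type and the `call_with_retry` helper are names I made up for illustration, and the delays are arbitrary. It assumes the operation is safe to repeat.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type for failures that are safe to retry."""


def call_with_retry(operation, max_attempts=4, base_delay=0.1, sleep=time.sleep):
    """Call operation(), retrying on TransientError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Double the delay on each attempt and add random jitter so that
            # many clients do not all retry at the same moment.
            delay = base_delay * (2 ** (attempt - 1))
            sleep(delay + random.uniform(0, delay))
```

Only errors you know to be temporary should be retried. Retrying a call that is not safe to repeat (for example, one that charges a credit card) can do more harm than the original failure.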

2. Latency is zero

Imagine running two applications on your laptop that talk to each other. Can you guess what the latency for that call will be?

Latency is the time between making a request and receiving a response.

It is fair to say that the latency will probably be minuscule, but it will not be zero. As soon as you start making calls across the network, the latency only gets bigger. Latency is one of the metrics you should be aware of and monitor in your distributed system, as it can have a significant impact on user experience and performance.

If you're not convinced, open your browser's developer tools, go to the Network tab, and switch to the "Slow 3G" simulation to feel the latency.
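If you'd rather see numbers, you can time the calls yourself. The sketch below is one simple way to do it in Python. The `measure_latency` helper is a made-up name, and `operation` stands for whatever call you want to measure.

```python
import statistics
import time


def measure_latency(operation, samples=20):
    """Run operation() several times and return (median, worst) in seconds."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        operation()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings), max(timings)
```

Looking at the worst case as well as the median matters, because a handful of slow calls is often what users actually notice.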

3. Infinite bandwidth

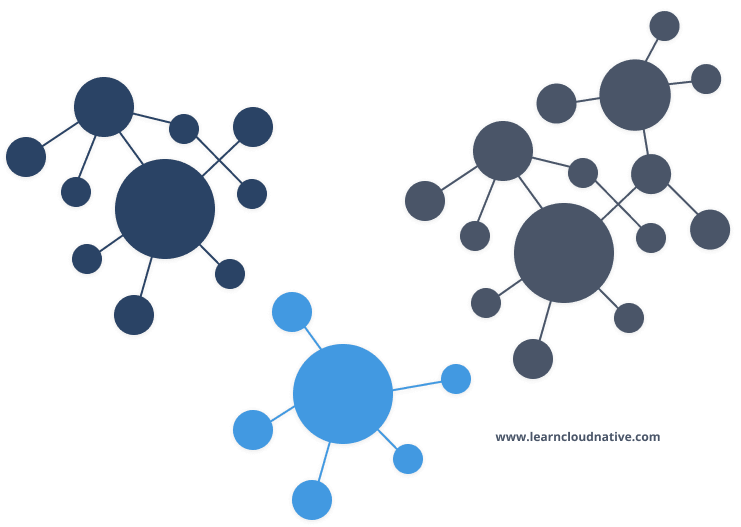

At first, it might seem that there's a lot of bandwidth available. But once you end up with a system that has tens, hundreds, or more services, you will notice that there's a lot of communication happening between the services, with data being sent back and forth.

Bandwidth is the capacity of a network to transfer data.

Bandwidth capacity has been improving. Ten or twenty years ago, you couldn't even think about streaming high-quality videos to your computer, let alone your phone. Today, we take that for granted, and we don't think about it too much until we get on a low-bandwidth connection.
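A quick back-of-the-envelope calculation shows how fast service-to-service traffic adds up. The helper below is a rough sketch, and the payload size and request rate are made-up numbers, not measurements from a real system.

```python
def required_mbps(payload_bytes, requests_per_second):
    """Rough bandwidth needed, in megabits per second, ignoring protocol overhead."""
    bits_per_second = payload_bytes * 8 * requests_per_second
    return bits_per_second / 1_000_000


# Hypothetical workload: 50 KB responses at 2,000 requests per second.
print(required_mbps(50_000, 2_000))  # 800.0
```

That is 800 Mbps for a single pair of services, before headers, retries, or any other traffic sharing the same link.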

4. Network is secure

This one can turn out very badly. As soon as you connect your computer to the network, you're already vulnerable. Security needs to be a priority in your design. Make sure you embrace a defense-in-depth approach, where you place multiple layers of defense throughout your system.

You can almost say that it's not a question of if you're going to get attacked, but when.
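One of those layers is encrypting and authenticating the traffic between your services. As a small sketch of what that can look like on the client side, Python's standard `ssl` module can build a context that verifies the server's certificate and hostname. The `make_client_tls_context` name and the choice of TLS 1.2 as the minimum are my assumptions.

```python
import ssl


def make_client_tls_context():
    """Build a client-side TLS context that verifies the server it talks to."""
    # The default context already requires a valid certificate and checks
    # that the certificate matches the hostname.
    context = ssl.create_default_context()
    # Assumption: refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

This covers only one layer. Authentication, authorization, and input validation still have to be handled separately.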

5. Topology does not change

Network topology does not change only as long as you are running your services and applications on your own laptop.

Network topology is the arrangement of network equipment.

As soon as you deploy your application to the cloud, the network topology is (usually) out of your control. Machines can get added and removed, network equipment upgraded and changed, and so on. Topology is always changing, and you can't rely on it being constant.
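In practice, this means you shouldn't hard-code addresses. Look services up by name and be ready to look them up again. Here's a minimal sketch of that idea in Python. The `EndpointResolver` class is hypothetical, and `lookup` stands in for whatever you use to find services (DNS, a service registry, and so on).

```python
import time


class EndpointResolver:
    """Caches a service's address briefly and re-resolves it after a TTL."""

    def __init__(self, lookup, ttl_seconds=30.0, clock=time.monotonic):
        self._lookup = lookup      # hypothetical function: name -> "host:port"
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache = {}           # name -> (address, expires_at)

    def resolve(self, name):
        now = self._clock()
        cached = self._cache.get(name)
        if cached is not None and now < cached[1]:
            return cached[0]
        # The cache entry is missing or expired: ask the lookup again,
        # because the service may have moved in the meantime.
        address = self._lookup(name)
        self._cache[name] = (address, now + self._ttl)
        return address

    def invalidate(self, name):
        """Drop a cached address, for example after a connection failure."""
        self._cache.pop(name, None)
```

The short TTL is a trade-off. A longer one means fewer lookups, but also a longer time spent talking to an address that might no longer exist.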

6. There is one administrator

Traditionally, it might have been common to have a single person responsible for environments, doing tasks like installing and upgrading the applications. That is not the case anymore with the shift towards modern cloud architectures.

Modern cloud-native applications are a composite of many services working together, but developed and maintained by different teams. It is practically impossible for a single person to know and understand the whole system, let alone try and fix any issues that arise in the system.

7. Transport cost is zero

There are two ways to interpret this statement. The first one is to read it as "network cost" and to assume that the network is free. That's false. For example, data ingress (incoming or inbound data transfers) is free with most cloud providers, but data egress (outgoing or outbound transfers) will cost you money. Similarly, there are costs associated with running networks - someone, somewhere needs to pay for the infrastructure, buy new equipment, etc.

The second interpretation is about the overhead you incur when serializing data to get it onto the network. Serialization can be an expensive operation in terms of resources and latency, so you should think about which format you pick as well as which serializer you use.
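To get a feel for how much the format matters, you can serialize the same data in two ways and compare the sizes. The example below is a small sketch using only Python's standard library. The `reading` record and its fields are made up for illustration.

```python
import json
import struct

# Hypothetical sensor reading used only for this comparison.
reading = {"sensor_id": 42, "temperature": 21.5, "humidity": 0.63}

# Text format: self-describing, but the field names travel with every message.
as_json = json.dumps(reading).encode("utf-8")

# Binary format "<Idd": a little-endian unsigned 32-bit integer followed by
# two 64-bit floats, 20 bytes in total, with no field names.
as_binary = struct.pack(
    "<Idd", reading["sensor_id"], reading["temperature"], reading["humidity"]
)

print(len(as_json), len(as_binary))
```

The binary version is much smaller, but both sides now have to agree on the exact layout up front. That is the kind of trade-off to weigh when you pick a format.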

8. Network is homogeneous

Networks are heterogeneous, not homogeneous. For example, you can't predict what type of devices or applications will try to connect to your system, which protocols or operating systems they will use, etc.

A network is homogeneous if each computer in the network uses a similar configuration and the same communication protocol.

The guideline here is to focus on using standard protocols that are widely accepted and to avoid relying on proprietary protocols.
