Origin Story

I work as a software engineer, and my wife is a clinical psychotherapist / licensed clinical social worker. So when she decided to start her own private practice, naturally I told her I could write some software to help her run her business. This was a few years ago, and it helped me practice what I preach: that we as developers should constantly learn, and that side projects are a great way to do it. My version 1 involved:

- An ASP.NET Web Forms application to host her public-facing website. I used jQuery on the front end of the Web Forms app to render the data fetched from the backend on the different pages.

- WCF service layer

- The Backbone library on the internal web app to implement a SPA on the front end.

- MySQL as the persistence / database layer.

Version 2

This was my setup for a year or so, and it worked great for our use case. Then at work, we were working on a greenfield app that used Angular (2, in beta), and I decided to rewrite the public-facing website to practice the new technology I was using at work. This also gave me a chance to implement a responsive-first design. This is where I fell in love with Angular. To me, the mix of a component-based pattern and MVVM made sense given my experience with Web Forms, where each component was like a form, and with Backbone, where the service injected into the component would provide the view model. I liked the pattern so much that I decided to rewrite the internal application as well. This time I used Electron to wrap an Angular and NgRx application. Again, this gave me a chance to work on something I was interested in (Electron) and on what I was using at work (Angular and NgRx). On a side note, I actually gave a talk at NgCharlotte about Electron, Angular, and NgRx.

Next, I decided to switch the backend stack from WCF to WebAPI. The switch was pretty straightforward, and I found I did not have to jump through all the web.config hoops I did with WCF.

The Switch

I decided to move to the cloud since my Windows Server 2012 machine was quickly becoming outdated and I did not want to invest in another server. I also wanted to learn more about developing apps in the cloud.

First up was my public-facing website. This was an easy switch, as I just used GitHub Pages' free hosting to run my Angular app.

Next were the database and backend. Since I was very familiar with the Microsoft stack, Microsoft Azure was the obvious choice for me. Moving my existing WebAPI backend to Azure was as simple as linking my Azure credentials, right-clicking, and choosing deploy to Azure. Easy peasy.

For the database, I went with Azure Cosmos DB. I wanted to learn about MongoDB, and Cosmos DB offers an API for MongoDB, so I could use the Mongo client and Mongo's query language against my Cosmos DB database. So I wrote some Node scripts to migrate my local database to Azure. Cool, all done, right?
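The migration scripts themselves aren't shown here. As a rough idea of what such a script does, here is a minimal sketch that assumes a hypothetical `clients` table whose rows have snake_case columns (`client_id`, `first_name`, `last_name`, `email`). It converts each row into a camelCase document and splits the documents into small batches, so each batch could be handed to the MongoDB driver's `insertMany` instead of writing everything in one request. The table, columns, and batch size are all assumptions for illustration.

```typescript
// Hypothetical shape of a row read from the old MySQL "clients" table.
interface ClientRow {
  client_id: number;
  first_name: string;
  last_name: string;
  email: string | null;
}

// Hypothetical shape of the document written to the MongoDB-compatible database.
interface ClientDocument {
  legacyId: number; // keep the old primary key for traceability
  firstName: string;
  lastName: string;
  email?: string; // omitted when the column was NULL
}

// Convert one relational row into a document.
function rowToDocument(row: ClientRow): ClientDocument {
  const doc: ClientDocument = {
    legacyId: row.client_id,
    firstName: row.first_name,
    lastName: row.last_name,
  };
  if (row.email !== null) {
    doc.email = row.email;
  }
  return doc;
}

// Split an array into batches of at most `size` items.
function chunk<T>(items: T[], size: number): T[][] {
  if (size <= 0) {
    throw new Error("size must be positive");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Usage: each batch could then be passed to collection.insertMany(batch).
const rows: ClientRow[] = [
  { client_id: 1, first_name: "Ada", last_name: "Lovelace", email: "ada@example.com" },
  { client_id: 2, first_name: "Alan", last_name: "Turing", email: null },
  { client_id: 3, first_name: "Grace", last_name: "Hopper", email: "grace@example.com" },
];
const batches = chunk(rows.map(rowToDocument), 2);
console.log(batches.length); // 2
```

This is essentially the "straight port" approach: one document per row. Denormalizing (for example, embedding a client's appointments in the client document) would be a separate design decision.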

The First Bill

Then I got hit with the first bill. It amounted to more than $400...for 1 month!!! I immediately thought, well, this is unsustainable. Luckily, Microsoft support was really helpful and let me know that the majority of the bill was from Cosmos DB (I think it had to do with my migration of data to Azure). So I looked for a better solution and found MongoDB Atlas, which allows me to host MongoDB clusters in the cloud for free. What's more, because I was already talking to Cosmos DB through the MongoDB client, I didn't have to change anything but my connection string to use Atlas after moving my data.

Coming Full Circle

This worked great, but my monthly Azure bill was still around $70 since my backend was running on Azure Container Services. So after more research, I decided to move to Azure Functions. Since I was moving to a company where the backend used Node with TypeScript, I went with the TypeScript flavor of Azure Functions instead of C#. I could not believe how easy the Microsoft tooling made this. All I had to do was install some extensions in VSCode, map my Azure credentials, and deploy the app. The extensions I installed were Azure CLI Tools and Azure Functions. These let me create an Azure Functions application and generate functions just by clicking a button. Alternatively, you can run these commands:

- `func init` to create an application

- `func new` to create a function

After that, it was just a matter of moving each WebAPI endpoint into its own Azure Function.

My Project Structure

- Function folder (these are my endpoints)

  - function.json

  - index.ts

- Shared folder

  - Here I put my services that can be shared across the different functions.

- package.json

- local.settings.json

  - This is the JSON file that holds my environment variables. When I deploy to Azure, I just add these entries to the Application Settings of the Azure Function app.

- tsconfig.json

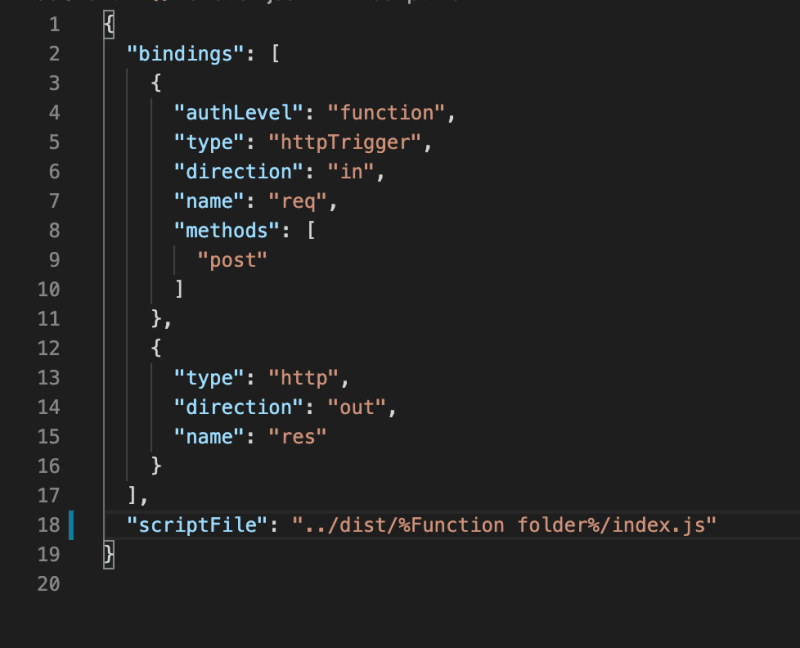

function.json

The only thing I change here is the methods array, which lists the HTTP verbs I want to allow for the endpoint. The authLevel and the type come from the prompts when you create your function. I mostly use the httpTrigger type, which allows HTTP requests to be made to the endpoint. I also use the Timer type (timerTrigger) for one of my functions to run a nightly job that sends emails to my wife's clients if they have an upcoming appointment. The complete list of function types can be found here.

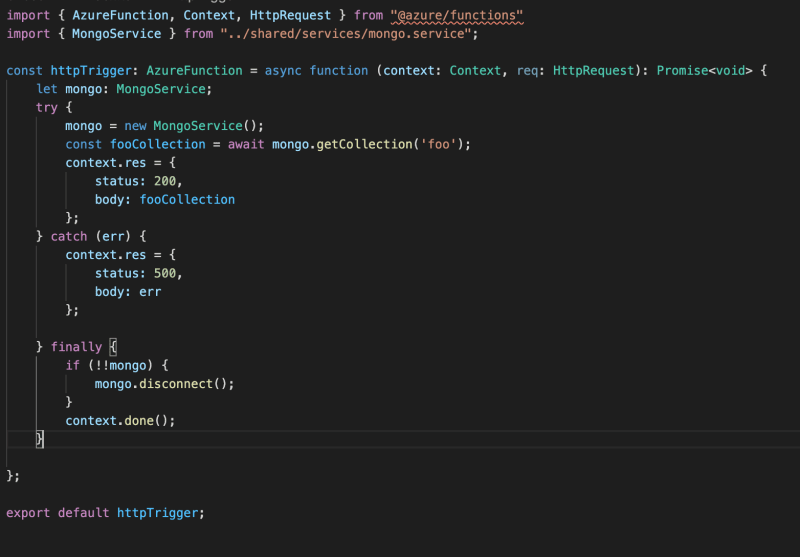
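The nightly reminder job boils down to picking out the appointments that fall inside a time window. Here is a minimal sketch of that selection step, assuming a hypothetical `Appointment` shape (a client email and a `start` date) and a 24-hour look-ahead window; the real job's rules aren't shown in this post. The Timer-triggered function would run something like this over the appointments it loads and pass each match to the email service. The schedule itself goes in the Timer binding's `schedule` property as an NCRONTAB expression; for example, `0 0 2 * * *` would fire every day at 2:00 AM (UTC by default).

```typescript
// Hypothetical appointment record as loaded from the database.
interface Appointment {
  clientEmail: string;
  start: Date;
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Return appointments that start after `now` and no later than `windowMs`
// milliseconds from `now` (24 hours by default), sorted by start time.
function upcomingAppointments(
  appointments: Appointment[],
  now: Date,
  windowMs: number = DAY_MS,
): Appointment[] {
  const from = now.getTime();
  const to = from + windowMs;
  return appointments
    .filter((a) => {
      const t = a.start.getTime();
      return t > from && t <= to;
    })
    .sort((a, b) => a.start.getTime() - b.start.getTime());
}

// Usage: simulate a nightly run at 2:00 AM UTC.
const now = new Date("2020-03-01T02:00:00Z");
const appointments: Appointment[] = [
  { clientEmail: "b@example.com", start: new Date("2020-03-01T15:00:00Z") },
  { clientEmail: "a@example.com", start: new Date("2020-03-01T10:00:00Z") },
  { clientEmail: "c@example.com", start: new Date("2020-03-03T10:00:00Z") },
  { clientEmail: "d@example.com", start: new Date("2020-02-28T10:00:00Z") },
];
const toRemind = upcomingAppointments(appointments, now);
console.log(toRemind.map((a) => a.clientEmail)); // [ 'a@example.com', 'b@example.com' ]
```

Keeping the selection logic as a plain function, separate from the timer handler, makes it easy to test without waiting for the schedule to fire.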

index.ts

This is the code that runs the function. Here I create instances of any shared code that lives in my Shared directory. Some of the classes I have created are a Mongo service that handles MongoDB reads and writes and an email service that handles sending emails. Below is an example:

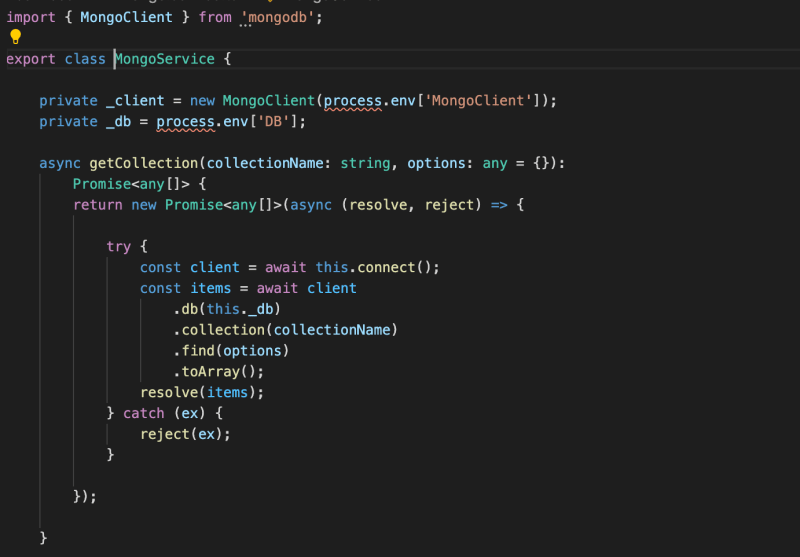
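As a text companion to the screenshot, here is a minimal sketch of an HTTP function that uses a shared service. It follows the shape of the v3 Node.js programming model from `@azure/functions` (an async function that receives a `context` and the request, and sets `context.res`), but declares small stand-in interfaces locally so it runs without the package. `ClientService`, the `id` query parameter, and the in-memory fake are hypothetical placeholders for something like the Mongo service. Passing the service into a factory is a design choice for testability; creating it directly inside index.ts, as described above, works just as well.

```typescript
// Minimal stand-ins for the Context and HttpRequest types from
// @azure/functions (v3 model), declared here so the sketch is self-contained.
interface HttpRequestLike {
  method: string | null;
  query: Record<string, string>;
  body?: unknown;
}

interface ContextLike {
  log: (...args: unknown[]) => void;
  res?: { status: number; body: unknown };
}

// Hypothetical shared service, standing in for something like the Mongo service.
interface Client {
  id: string;
  name: string;
}

interface ClientService {
  findById(id: string): Promise<Client | null>;
}

// Build the HTTP handler around an injected service so it can be tested
// with a fake; in index.ts the resulting handler would be the default export.
function createGetClientHandler(service: ClientService) {
  return async function (context: ContextLike, req: HttpRequestLike): Promise<void> {
    const id = req.query["id"];
    if (!id) {
      context.res = { status: 400, body: "Missing 'id' query parameter" };
      return;
    }
    const client = await service.findById(id);
    context.log(`Lookup for client ${id}: ${client ? "found" : "not found"}`);
    context.res = client
      ? { status: 200, body: client }
      : { status: 404, body: "Client not found" };
  };
}

// Usage with an in-memory fake instead of MongoDB.
const fakeService: ClientService = {
  async findById(id) {
    return id === "42" ? { id: "42", name: "Ada" } : null;
  },
};
const handler = createGetClientHandler(fakeService);
```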

In the Mongo service, process.env refers to the entries in the local.settings.json file when running locally. When deployed to Azure, it refers to the entries in the Application Settings.
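local.settings.json keeps these entries under its `Values` object, and the Functions host exposes them as environment variables, which is why the same `process.env` lookups work locally and in Azure. A missing setting otherwise only shows up as `undefined` at runtime, so a small helper that fails fast can save some debugging. Here is a minimal sketch with hypothetical setting names (`MONGO_CONNECTION_STRING`, `MONGO_DB_NAME`); it takes the environment as a parameter, so in the real function you would call it with `process.env`.

```typescript
// Hypothetical local.settings.json for this sketch:
// {
//   "IsEncrypted": false,
//   "Values": {
//     "FUNCTIONS_WORKER_RUNTIME": "node",
//     "MONGO_CONNECTION_STRING": "<your-connection-string>",
//     "MONGO_DB_NAME": "practice"
//   }
// }

type Env = Record<string, string | undefined>;

// Read a required setting, failing fast if it is missing or empty.
function getRequiredSetting(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing required app setting: ${name}`);
  }
  return value;
}

interface MongoSettings {
  connectionString: string;
  databaseName: string;
}

// Gather the settings a Mongo service would need.
function loadMongoSettings(env: Env): MongoSettings {
  return {
    connectionString: getRequiredSetting(env, "MONGO_CONNECTION_STRING"),
    databaseName: getRequiredSetting(env, "MONGO_DB_NAME"),
  };
}

// Usage: in the function this would be loadMongoSettings(process.env).
const settings = loadMongoSettings({
  MONGO_CONNECTION_STRING: "mongodb://localhost:27017",
  MONGO_DB_NAME: "practice",
});
console.log(settings.databaseName); // practice
```

Keeping the connection string in settings rather than in code is also what turns a database move, such as going from Cosmos DB to Atlas, into a configuration change.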

Local Debugging

One of the most powerful features of Azure Functions, in my opinion, is the ability to debug the code locally from VSCode. It is as easy as running the application in VSCode, which hosts the functions on port 7071. From there, you can open Postman and make requests.
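By default, HTTP-triggered functions are served under the `api` route prefix, and each function's route is its name unless function.json sets a `route`, so a function folder named `GetClient` would be reachable locally at `http://localhost:7071/api/GetClient`. If you would rather script requests than click through Postman, here is a minimal sketch for building those local URLs; the function name and query parameters are hypothetical.

```typescript
const LOCAL_HOST = "http://localhost:7071";
const ROUTE_PREFIX = "api"; // the default prefix; it can be changed in host.json

// Build the local URL for an HTTP-triggered function, with optional query params.
function localFunctionUrl(
  functionName: string,
  query: Record<string, string> = {},
): string {
  const base = `${LOCAL_HOST}/${ROUTE_PREFIX}/${encodeURIComponent(functionName)}`;
  const params = Object.entries(query)
    .map(([key, value]) => `${encodeURIComponent(key)}=${encodeURIComponent(value)}`)
    .join("&");
  return params ? `${base}?${params}` : base;
}

console.log(localFunctionUrl("GetClient", { id: "42" }));
// http://localhost:7071/api/GetClient?id=42
```

While the function app is running in VSCode, the resulting URL can be pasted into Postman or requested with `fetch` on Node 18+.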

Drawbacks

The only drawback here is the cold start problem. Because the application runs in a container that gets spun up on demand, if the code hasn't run for some time (I think the limit is around 15 minutes), the container gets spun down and has to be restarted on the next call. I found that the first call takes somewhere around 1-2 seconds, but every call after that returns in less than 500ms. To me, the cost benefits far outweigh this small drawback. My cost since moving over has been between $0.01 and $0.02.
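A cold start pays for starting the host and loading your code, but module-level state then survives between invocations for as long as the instance stays warm. A common way to avoid repeating expensive setup, such as opening a database connection, on every call is to create it lazily at module scope and reuse it. Here is a minimal sketch of that general pattern, where `createConnection` is a hypothetical stand-in for something like connecting the Mongo client. It does not remove the cold start itself; it only keeps warm calls from redoing the setup.

```typescript
// Hypothetical expensive resource, e.g. a connected database client.
interface Connection {
  id: number;
}

let createdCount = 0;

// Stand-in for real setup work such as connecting a database client.
async function createConnection(): Promise<Connection> {
  createdCount += 1;
  return { id: createdCount };
}

// Module-scope cache: survives across invocations while the instance is warm.
let connectionPromise: Promise<Connection> | null = null;

function getConnection(): Promise<Connection> {
  if (connectionPromise === null) {
    // Cache the promise (not the result) so concurrent calls share one setup.
    connectionPromise = createConnection().catch((err) => {
      connectionPromise = null; // allow a retry on the next call if setup failed
      throw err;
    });
  }
  return connectionPromise;
}

// Simulate three invocations on the same warm instance.
async function simulate(): Promise<number[]> {
  const ids: number[] = [];
  for (let i = 0; i < 3; i++) {
    const conn = await getConnection();
    ids.push(conn.id);
  }
  return ids;
}

simulate().then((ids) => console.log(ids, createdCount)); // [ 1, 1, 1 ] 1
```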

Top comments (6)

I don't know much about Azure or its ecosystem, so I'd like to hear what led to a $70 bill. AFAIK GCP Compute Engine and AWS EC2 have hourly and per-second pricing respectively, and using ECS or GKE isn't all that expensive unless you have some serious traffic.

You should really try out an IaC tool like Serverless. Since you are using cloud functions, you could also make use of Serverless Components :)

I would say cloud functions are a powerful tool as long as they are used for stateless operations. Glad to see that you are exploring cloud tech! Hope you'll keep sharing more about your journey.

Honestly, my code probably could have been more efficient; inefficiencies could have caused extra compute that didn't need to happen. When I moved my relational database to Mongo Atlas, it was pretty much a straight port, which I don't think gave me the benefits of a NoSQL, non-normalized database. I have heard about the Serverless Framework, if that is what you mean, but have not gotten the chance to try it out yet. I was just really impressed with Microsoft's tool chain to deploy to Azure, which of course is the reason for the free tools (VSCode and the Azure extensions).

Actually, $70 is nothing with GCP too.

The problem with these tools is that they are quite powerful but damn expensive... they are useful for enterprises.

I'm not saying that GCP is cheaper than Azure (it can be in certain situations), I'm more or less asking how the $70 breaks down.

If I understand properly, you can bypass the drawback easily by pinging your server/domain every 5 minutes.

Just use UptimeRobot (or any other ping/status service), which pings your server every 5 minutes.

You get to know if your server is down, and the drawback will also be gone.

I thought about creating a function using the Timer type to call a heartbeat endpoint that would keep the container warm but have not done so yet. I will have to give that a try. Thanks for the suggestion.