When writing code, I sometimes hit errors that seem to occur inside a library I have imported. In most cases the library is not at fault; the code I'm writing is. Even so, when hunting for the root cause it is useful to edit the library's source, for example by commenting out lines. A Kaggle notebook is not a convenient tool for that.

For debugging that involves editing library code, a local machine is the easiest environment, but Google Colab is also useful because its environment is similar to Kaggle's, including access to GPU resources.

To work this way, we need to move data from one platform to the other. This post is a note on how to do that.

Kaggle -> Google Colab

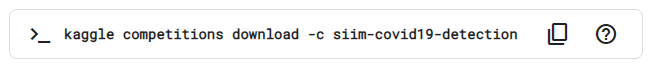

To access Kaggle data, we first need an API token, which we load on Colab. We can then download the data from Kaggle to Colab from the command line.

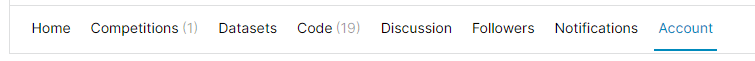

First, go to your Kaggle account page, click 'Create New API Token', and download the token file 'kaggle.json'.

Next, upload the token to Google Drive. In the example below, the token is saved directly under 'My Drive'.

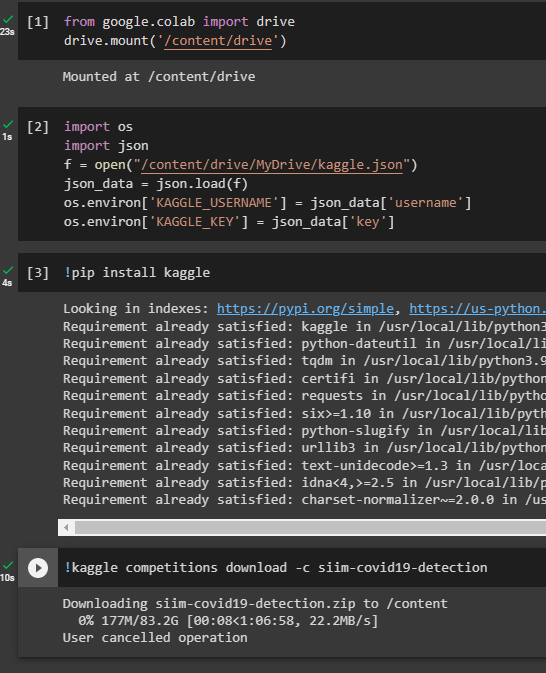

Then open a Google Colab notebook and mount Google Drive. In the example below, Drive is mounted at '/content/drive', so My Drive appears under '/content/drive/MyDrive'.

```python
from google.colab import drive

drive.mount('/content/drive')
```

Then run the following code, which reads the token and stores the credentials in environment variables.

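```python
import json
import os

# Read the API token saved under My Drive; the file is closed automatically
with open("/content/drive/MyDrive/kaggle.json") as f:
    json_data = json.load(f)

# The Kaggle package reads its credentials from these environment variables
os.environ['KAGGLE_USERNAME'] = json_data['username']
os.environ['KAGGLE_KEY'] = json_data['key']
```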

Next, install the Kaggle package, which gives us access to Kaggle from Colab.

```
!pip install kaggle
```

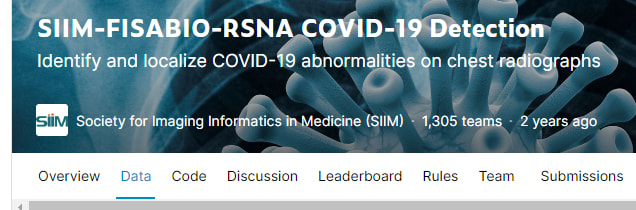

To download data, first go to the competition's data page; the API command is shown near the bottom of the page.

Return to the Colab notebook and paste the command into a cell, prefixed with '!'.

```
!kaggle competitions download -c siim-covid19-detection
```

I stopped the download because the data is over 80 GB.

Competition data is often extremely large. I therefore usually work with the entire data on Kaggle, and use Colab to test my code on a small subset of the data or to try out modified packages made by other participants.
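For the small-subset case, one option is to fetch a single file rather than the whole archive. The sketch below is a minimal illustration, not the only way to do it: it builds the `kaggle competitions download` command with the CLI's `-f` (single file) and `-p` (destination folder) options. The helper names `build_download_command` and `download`, the `data` folder, and the file name in the example are assumptions made for illustration.

```python
import subprocess


def build_download_command(competition, file_name=None, dest="data"):
    """Build the kaggle CLI argument list for a competition download."""
    cmd = ["kaggle", "competitions", "download", "-c", competition, "-p", dest]
    if file_name is not None:
        # -f limits the download to one file instead of the full archive
        cmd += ["-f", file_name]
    return cmd


def download(competition, file_name=None, dest="data"):
    """Run the download; needs the kaggle package and the credentials set above."""
    subprocess.run(build_download_command(competition, file_name, dest), check=True)


# The file name here is an assumed example; list the real ones with
#   !kaggle competitions files -c siim-covid19-detection
print(build_download_command("siim-covid19-detection", "train_study_level.csv"))
```

Calling `download(...)` with the same arguments runs the command. Keeping the command builder separate makes it easy to check what will be executed before starting a long transfer.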

Google Colab -> Kaggle

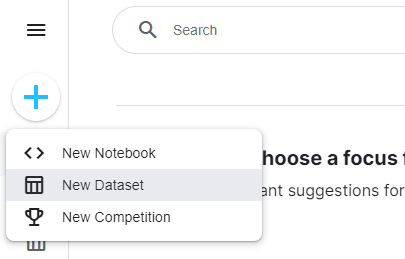

Unfortunately, as far as I know, there is no way to move data from Colab to Kaggle directly. When I need data I created on Colab, I first download it and then upload it to Kaggle as a Dataset.

Kaggle recommends uploading data in compressed form; if an uploaded file is recognised as a compressed archive, it is extracted automatically.
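To prepare such a compressed file on Colab, the output folder can be zipped before downloading it. This is a minimal sketch using only the standard library; the helper name `zip_for_upload` and the paths in the usage comment are hypothetical.

```python
import shutil
from pathlib import Path


def zip_for_upload(src_dir, archive_stem):
    """Zip the contents of src_dir into '<archive_stem>.zip' and return its path."""
    src = Path(src_dir)
    if not src.is_dir():
        raise NotADirectoryError(f"{src} is not a directory")
    # Keep archive_stem outside src_dir so the archive does not include itself
    return shutil.make_archive(str(archive_stem), "zip", root_dir=str(src))


# Usage on Colab (hypothetical paths):
#   path = zip_for_upload("/content/output", "/content/my_dataset")
#   from google.colab import files
#   files.download(path)
```

The resulting zip file is what gets uploaded on the Kaggle Dataset page.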
