Algorithms

We can think of an algorithm as a recipe that describes the exact rules or steps a computer needs to solve a problem. We use algorithms every day without realizing it, whether we're following a recipe, going grocery shopping, or giving directions from point A to point B. In each case we conceptually map out the steps needed to do a job or task. We can also think of an algorithm as a function that transforms a certain input data structure into a certain output data structure, with the function body holding the instructions for that transformation.
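To make the "function from input to output" idea concrete, here is a minimal sketch. The function name `findLargest` and the sample data are made up for illustration; the input is an array of numbers and the output is a single number.

```javascript
// Hypothetical example: an algorithm as a function from input to output.
// Input: an array of numbers. Output: the largest number in that array.
function findLargest(numbers) {
  if (numbers.length === 0) {
    throw new Error("findLargest needs at least one number");
  }
  let largest = numbers[0]; // assume the first number is the largest so far
  for (const n of numbers) {
    // look at each number in turn and remember any bigger one
    if (n > largest) largest = n;
  }
  return largest; // hand back the result
}

console.log(findLargest([3, 9, 4])); // 9
```

Each line in the function body is one step of the "recipe", and the same steps work for any non-empty array of numbers.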

Why have so many different algorithms?

As stated before, an algorithm is just a plan for solving a problem, and as we know from coding, there are usually multiple ways to solve the same problem. The reason we don't stick with one algorithm is that there is often a better, more efficient way to reach the solution. We are always looking for that way, and hoping to be the one who finds it. There are steps we take to find that better way.
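As a small illustration of "multiple solutions to the same problem", here is a sketch with two ways to add up the numbers from 1 to n. Both function names are hypothetical, chosen only for this example.

```javascript
// Approach 1: add the numbers one at a time (n additions).
function sumWithLoop(n) {
  let total = 0;
  for (let i = 1; i <= n; i++) {
    total += i;
  }
  return total;
}

// Approach 2: use the closed-form formula n * (n + 1) / 2 (a fixed number of operations).
function sumWithFormula(n) {
  return (n * (n + 1)) / 2;
}

console.log(sumWithLoop(100));    // 5050
console.log(sumWithFormula(100)); // 5050
```

Both functions give the same answer, but the loop does more work as n grows, while the formula does the same small amount of work for any n.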

Step 1: Creating the algorithm

We do this by first grasping our problem and forming a plan of attack to solve it. Once we have our steps in place, we move on to the next step.

Step 2: Pseudocode

We take our technical, program-based algorithm and turn it into plain English, breaking it into smaller steps and terms that anyone could understand.

Step 3: Code

This is the part where we implement our plan.
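Here is a rough sketch of how Steps 2 and 3 can fit together: the pseudocode is kept as comments, and the code underneath follows it line by line. The task (counting how often a value appears in a list) and the name `countOccurrences` are assumptions made up for this illustration.

```javascript
// Pseudocode (Step 2), written as plain-English comments:
//   start a counter at zero
//   for each item in the list
//     if the item equals the target, add one to the counter
//   give back the counter

// Code (Step 3): the same plan in JavaScript.
function countOccurrences(items, target) {
  let count = 0;
  for (const item of items) {
    if (item === target) count += 1;
  }
  return count;
}

console.log(countOccurrences(["jam", "bread", "jam"], "jam")); // 2
```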

Step 4: Debugging

During the debugging stage, we fix any problems with our code and make it work.

Step 5: Efficiency

After we get a working solution, we can go back and see how efficient our code is and what we can do to make it better. Once we figure that out, we can rework the algorithm to reflect it and write more dynamic and efficient code for the problem. Let’s see an everyday example of making an algorithm more efficient.

Three ways to make a peanut butter and jelly sandwich

In this example you can see that every time we changed the algorithm, making a sandwich became more efficient because we cut the steps in half. Of course this is an exaggerated example, but it did the job of proving there is more than one way to produce the same result, and now I’m hungry.

Ideas that bloom from basic algorithms

From the original algorithms, we develop new algorithms that build on and improve them.

To see this, think about the different versions of JavaScript that come out: developers keep realizing that there are better ways to do things.

We can think of the different inheritance patterns, from functional to pseudoclassical: they came to be because someone had an idea for improving on an existing approach. The same can be said for the different ways we store and access data with different data structures. For example, if we want to traverse a tree with plain nested loops, we would need one nested loop per level, so the code depends on how deep the tree is. With recursion, the same function keeps working no matter how deep the tree goes.

Another example is searching through a graph data structure. We have two algorithms: Breadth First Search and Depth First Search. Breadth First Search is usually written with a loop and a queue, and Depth First Search is usually written with recursion. Both can reach our end goal; depending on the shape of the graph, one search method can find what you’re looking for in fewer steps and less time. When we think about the time efficiency of an algorithm, we can think about Big O Notation.
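The traversal ideas above can be sketched in a few lines. This is only an illustration under assumptions: the tree and graph shapes, and the names `collectValues`, `breadthFirst`, and `depthFirst`, are made up for this example, and the graph is stored as a simple adjacency list.

```javascript
// Recursion on a tree: the same function handles any depth.
const tree = {
  value: 1,
  children: [
    { value: 2, children: [{ value: 4, children: [] }] },
    { value: 3, children: [] },
  ],
};

function collectValues(node) {
  const values = [node.value];
  for (const child of node.children) {
    values.push(...collectValues(child)); // recurse into each child
  }
  return values;
}

// A small graph as an adjacency list: each key lists its neighbors.
const graph = { A: ["B", "C"], B: ["D"], C: ["D"], D: [] };

// Breadth First Search: a loop plus a queue, visiting nearest nodes first.
function breadthFirst(graph, start) {
  const visited = new Set([start]);
  const queue = [start];
  const order = [];
  while (queue.length > 0) {
    const node = queue.shift(); // take the oldest node in the queue
    order.push(node);
    for (const next of graph[node]) {
      if (!visited.has(next)) {
        visited.add(next);
        queue.push(next);
      }
    }
  }
  return order;
}

// Depth First Search: recursion, following one path as far as it goes.
function depthFirst(graph, node, visited = new Set(), order = []) {
  visited.add(node);
  order.push(node);
  for (const next of graph[node]) {
    if (!visited.has(next)) depthFirst(graph, next, visited, order);
  }
  return order;
}

console.log(collectValues(tree));      // [1, 2, 4, 3]
console.log(breadthFirst(graph, "A")); // ["A", "B", "C", "D"]
console.log(depthFirst(graph, "A"));   // ["A", "B", "D", "C"]
```

Both searches visit every node reachable from the start, just in a different order. Which one reaches a particular node sooner depends on where that node sits in the graph.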

Runtime Analysis of Algorithms

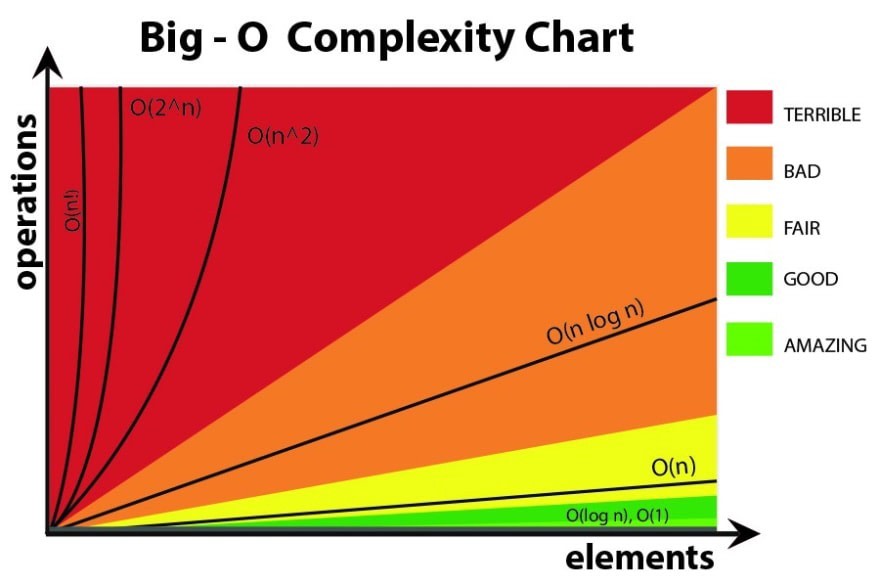

Big O Notation is the language we use to describe the complexity of an algorithm.

It’s how we compare the efficiency of different approaches to a problem.

It uses a set of rules to determine where on the graph's spectrum an algorithm falls. In that sense, we can think of Big O Notation as a grading scale for other algorithms.
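To see what this grading looks like in practice, here is a hedged sketch that counts how many items two search approaches look at in a sorted array. The step-counting functions are hypothetical helpers written for this illustration; linear search is commonly described as O(n) and binary search as O(log n).

```javascript
// Linear search: check items one by one. Work grows in step with the array size, O(n).
function linearSearchSteps(sorted, target) {
  let steps = 0;
  for (const value of sorted) {
    steps += 1;
    if (value === target) break;
  }
  return steps;
}

// Binary search: halve the remaining range each time, O(log n).
// Assumes the array is sorted in ascending order.
function binarySearchSteps(sorted, target) {
  let steps = 0;
  let low = 0;
  let high = sorted.length - 1;
  while (low <= high) {
    steps += 1;
    const mid = Math.floor((low + high) / 2);
    if (sorted[mid] === target) break;
    if (sorted[mid] < target) low = mid + 1;
    else high = mid - 1;
  }
  return steps;
}

const numbers = Array.from({ length: 1000 }, (_, i) => i + 1); // 1 through 1000

console.log(linearSearchSteps(numbers, 1000)); // 1000
console.log(binarySearchSteps(numbers, 1000)); // 10
```

Both functions find the same item, but for this input one looks at every element while the other needs only a handful of checks. Big O Notation describes that difference in growth without depending on a particular machine or input.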

Conclusion

When thinking about algorithms, the main thing to focus on is which route is the best route for our program. There is always room to improve your code, making it better and more efficient at solving the problem for the next person.
