This was originally posted on the FlexMR Dev Blog.

At FlexMR we make heavy use of a ‘prototype-first’ approach. We find it’s easier to produce a standalone implementation of an integration with a third-party tool (or idea) than to spend time folding it into what we have, only to decide to throw it away and try a different way.

As you’d expect, this can produce a lot of apps. The configuration for NGINX (the ‘junction box’ of the server) started to become unwieldy. New (and old) server configs and SSL certs started to pile up. Moving the NGINX config to a separate git repository was tidier, but it didn’t solve the underlying issue. I started to look around for a solution that better fitted our use case.

Our infrastructure setup for prototypes (and as it happens, an internal tool called Hairy Slackbot — more on that in the future) lives on a dedicated server which is running Docker Swarm mode (so it is ready to scale when we need to).

My wish list for a reverse proxy solution:

- Minimal configuration, ideally on the app side

- Scalable

- Easily use LetsEncrypt for certificate generation (provision and renew)

- Nice to have (only because we don’t need it right now): Load balancing

I’d looked at the popular nginx-proxy alongside docker-letsencrypt-nginx-proxy-companion; however, in the spirit of experimentation, I thought I’d give a newer option, Træfik, a whirl.

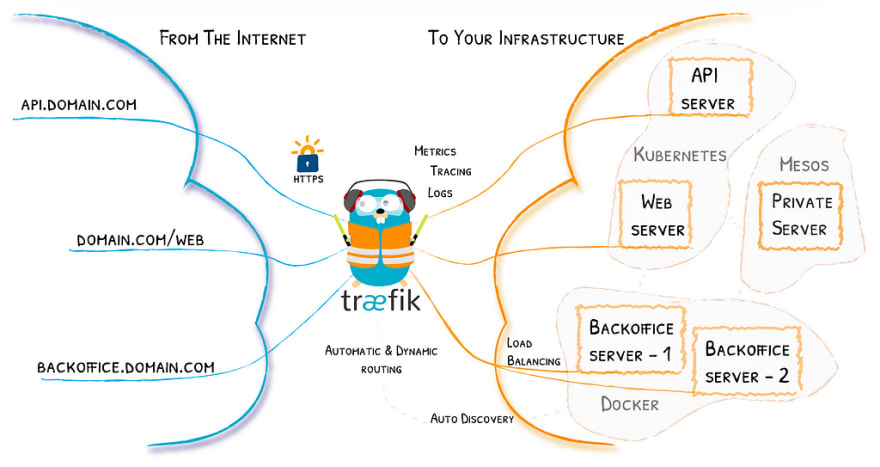

Træfik

Træfik is a modern HTTP reverse proxy and load balancer that makes deploying microservices easy. Træfik integrates with your existing infrastructure components and configures itself automatically and dynamically. Pointing Træfik at your orchestrator should be the only configuration step you need.

We’re only going to touch a small portion of Træfik’s functionality in the example below. What we’re aiming to achieve here is:

- New services can be deployed to our development server without having to make any changes outside of that app’s repository.

- New SSL certs are provisioned (and renewed) automatically by LetsEncrypt.

Show me some code!

Or, if you’re really keen there is a repository and example walkthrough in the next section.

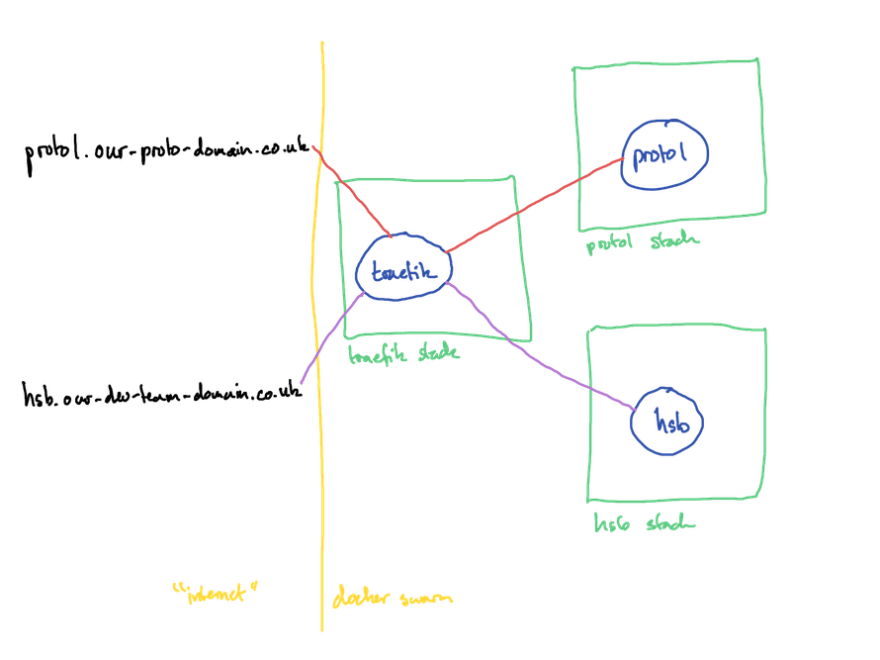

What we had

In the example I described above, I defined separate stacks for both the hsb and proto1 apps as well as nginx. nginx used to be part of the hsb stack by virtue of it being the first stack on the development server; it was split out once we started adding more apps.

Each app has its own git repository in which the docker stack file defines how it runs within the swarm (how many instances, memory and CPU restrictions, network details). We deploy via CI.

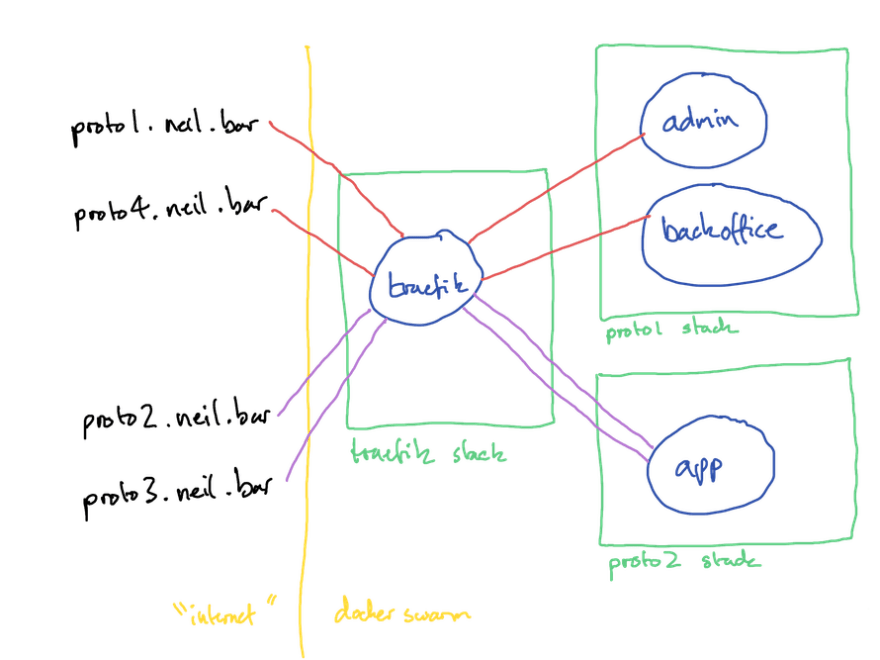

The new world

Stack 1: træfik

Deployed with: docker stack deploy --compose-file traefik-stack.yml --with-registry-auth traefik
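As a rough illustration of what a traefik-stack.yml for this kind of setup might contain, here is a minimal sketch. It assumes Traefik 1.7 (the series that uses traefik.frontend.rule labels and an [acme] block in traefik.toml). The network name proxy and the host paths under /opt/traefik are placeholders of mine, not taken from our actual stack file.

```yaml
# traefik-stack.yml (illustrative sketch, not the original file)
# Assumptions: Traefik 1.7, a stack named "traefik", hypothetical host paths.
version: "3.3"

services:
  traefik:
    image: traefik:1.7
    command:
      - --configFile=/etc/traefik/traefik.toml  # entry points and [acme] live here
      - --docker                                # enable the Docker provider
      - --docker.swarmMode                      # read labels from Swarm services
      - --docker.watch                          # pick up new services as they deploy
      - --api                                   # dashboard/API, served on :8080
    ports:
      - "80:80"
      - "443:443"
      - "8080:8080"   # keep this firewalled; reach it over an SSH port forward
    volumes:
      # Traefik needs the Docker socket of a manager node to list services.
      - /var/run/docker.sock:/var/run/docker.sock:ro
      # Hypothetical host locations for the config and certificate store.
      - /opt/traefik/traefik.toml:/etc/traefik/traefik.toml:ro
      - /opt/traefik/acme.json:/acme.json
    networks:
      - proxy
    deploy:
      placement:
        constraints:
          - node.role == manager

networks:
  # Created as "traefik_proxy" when the stack is deployed under the name "traefik".
  proxy:
    driver: overlay
```

With this layout, app stacks would join the traefik_proxy network as an external network so that Traefik can reach their containers. The acme.json file generally has to exist on the host with 600 permissions before Traefik will use it.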

Stack 2: hsb

Deployed with: docker stack deploy --compose-file hsb-stack.yml --with-registry-auth hsb

Stack 3: proto1

Deployed with: docker stack deploy --compose-file proto1-stack.yml --with-registry-auth proto1

Visual view of configuration

Traefik comes with a rather bonny UI, which we’ve configured to serve on port 8080 of the host. I access this via an SSH port forward, e.g. ssh -L8080:localhost:8080 ..., as I don’t make it available externally (and neither should you!).

Worked Example

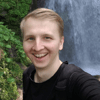

The accompanying repo below uses a different setup to the hsb/proto1 setup above. I’ve done this to illustrate multiple services in the same stack and how single services can be assigned multiple domains.

I’m making use of jwilder/whoami. This provides a web service which just echoes its container id.

Stack 1 is traefik, as above.

Stack 2 is proto1, which has admin (proto1.neil.bar) and backoffice (proto4.neil.bar) services.

Stack 3 is proto2, which has a single service, app (proto2.neil.bar and proto3.neil.bar).
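To make the layout above more concrete, a stack file for proto1 could look something like the sketch below. It assumes Traefik 1.x label syntax and an overlay network called traefik_proxy created by the traefik stack (the network name is my placeholder). jwilder/whoami listens on port 8000, which is why traefik.port is set to that value.

```yaml
# proto1-stack.yml (illustrative sketch, not the file from the repo)
# Assumptions: Traefik 1.x labels, external overlay network "traefik_proxy".
version: "3.3"

services:
  admin:
    image: jwilder/whoami
    networks:
      - traefik_proxy
    deploy:
      # In Swarm mode Traefik reads labels from the service (deploy.labels),
      # not from the individual containers.
      labels:
        - traefik.port=8000
        - traefik.docker.network=traefik_proxy
        - traefik.frontend.rule=Host:proto1.neil.bar

  backoffice:
    image: jwilder/whoami
    networks:
      - traefik_proxy
    deploy:
      labels:
        - traefik.port=8000
        - traefik.docker.network=traefik_proxy
        - traefik.frontend.rule=Host:proto4.neil.bar

networks:
  traefik_proxy:
    external: true
```

For proto2, where one service answers on two domains, the same pattern should work with a comma-separated host list in the rule, for example traefik.frontend.rule=Host:proto2.neil.bar,proto3.neil.bar.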

Because we’ve set up the [acme] block in our traefik.toml, Traefik will automatically request, provision and renew LetsEncrypt certificates for the hosts defined in the traefik.frontend.rule label of each service.

Adding services doesn’t require any changes to the traefik stack, and the LetsEncrypt integration makes provisioning certificates for them trivial.
