Recently at work I’ve been involved in a learning group for developers new to python, and I’ve realised that the tools I’m used to using are pretty confusing for newcomers:

- Lots of them have very similar names but do different things, so it’s easy to confuse them in casual conversation (virtualenv, venv, pyenv, pipenv)

- There’s not one, obvious way to do everyday development tasks

- There’s a lot of active development around python packaging tools, so the best way to do something today probably won’t be the best way in one or two years’ time

- If you google stuff you have to trawl through a lot of out of date information

Because of this, I decided to demo some different tools to give an idea of what is available and why you might want to use each one. You can read all of my notes on github.

A few of the developers I’m working with are familiar with Ruby, and I realised my development workflow is basically identical in python and ruby, so I put together this rosetta stone for ruby and python tools:

| Ruby thing | Python thing | Description |
|---|---|---|
| Rubygems | PyPI | The centralised package repository |
| `gem install bundler` | `pip install pipenv` | Install a package |
| Gemspec | setup.py | Specify metadata and dependencies for a package |
| Gemfile | Pipfile | Specify dependencies for an application |
| Gemfile.lock | Pipfile.lock | Pinned versions for the full dependency tree of an application |
| `bundle install` | `pipenv install` | Install packages for a project |
| rbenv | pyenv | Manage multiple versions of the interpreter |
| `bundle init` | `pipenv --python 3.7` | Start a new application project |
| `bundle gem` | ??? | Generate a package skeleton |
| rspec | pytest | Popular testing framework |
| rubocop | flake8 | Popular linting tool |
| `binding.pry` | `breakpoint()` (3.7+) | Built in debugger |

I also learned some new things myself while preparing this demo:

1. Virtualenv is not really needed anymore

In modern versions of python you can just use python -m venv to create a virtualenv. So you don’t need to install a tool through pip anymore (hurray).
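The same functionality is exposed inside python through the standard library's `venv` module. Here is a minimal sketch; the helper name `create_environment` is something I made up for illustration, and skipping pip is just a choice to keep the example fast:

```python
import venv
from pathlib import Path


def create_environment(env_dir: Path) -> Path:
    """Create a virtual environment at env_dir, roughly like `python -m venv env_dir`.

    create_environment is a hypothetical helper name used for illustration.
    """
    # with_pip=False skips bootstrapping pip into the new environment;
    # `python -m venv` installs pip by default.
    venv.create(env_dir, with_pip=False)
    return env_dir
```

Calling `create_environment(Path(".venv"))` should leave a `pyvenv.cfg` file in the new directory, which is how the interpreter recognises it as a virtual environment.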

Tools like pipenv use virtualenvs under the hood so you don't need to manage them yourself. Which is great, because as long as projects are isolated from each other, who cares where the packages are installed to?
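If you are ever curious whether your code is running inside one of these environments, the interpreter can tell you. This is a small sketch that assumes an environment created by `venv`; the function name is my own, and older releases of the separate virtualenv tool may report this differently:

```python
import sys


def in_virtual_environment() -> bool:
    """Return True when the running interpreter belongs to a venv-style environment."""
    # Inside a venv, sys.prefix points at the environment, while
    # sys.base_prefix still points at the python installation it was created from.
    return sys.prefix != sys.base_prefix
```

Printing `sys.prefix` alongside the result shows where packages for the current interpreter get installed.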

If PEP 582 is accepted, then instead of using virtualenvs there will be a standard folder in the project directory that packages can be installed to, a bit like node_modules in javascript.

2. Pipenv will probably stick around a while

For my demo I wanted to mention alternative tools even if I hadn’t used them personally. I was conscious that while pipenv does seem to be the direction of travel, there is also a lot of doubt about it online.

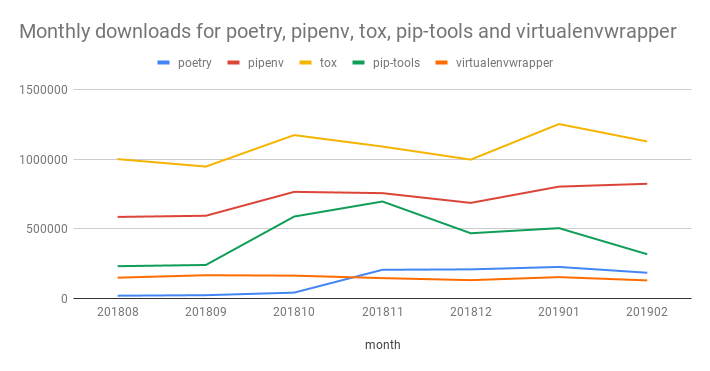

I was curious to see whether this was influencing adoption, so I used the PyPI downloads dataset on google bigquery to count the number of downloads for a handful of tools:

Note: the data point for February isn’t a whole month, and this counts all downloads even if the package is downloaded as a dependency of another tool

While there are definitely other contenders, pipenv still seems like a decent choice if you are starting a new python project in 2019.

3. Semantic versioning requirements will make your life a lot easier

One of the tasks I wanted to focus on for this demo was updating a project’s dependencies. This is important because not being able to keep up with package updates is a form of technical debt. It accumulates, and over time it becomes hard to update anything.

This is particularly problematic when you depend on frameworks like Django or Flask. Using components with known vulnerabilities is listed as one of the ten most critical application security risks in the OWASP Top 10 2017 (item A9).

To be able to manage dependencies over time, you should be able to separate information about what your application actually cares about (direct dependencies) from the full dependency graph at any point in time.

The former is all about flexibility - you want to define how your app can change as new versions of your dependencies get released. This is what the Pipfile is for. The latter is all about specificity - it makes sure that you run the same code on different machines. This is what the Pipfile.lock file is for. I’m using pipenv as an example, but this applies regardless of the tool you’re using.

Python introduced a syntax to describe version requirements way back in PEP 440. If a library follows semantic versioning, then you can write something like requests~=2.2 to mean any version greater than or equal to 2.2, but less than 3. If you want to be stricter you can use requests~=2.2.1 which means anything greater than or equal to 2.2.1 but less than 2.3 (i.e. you’ll only accept bug fixes).
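To make the `~=` rule concrete, here is a simplified sketch of the comparison. It only handles plain dotted numeric versions (no pre-releases, epochs or local versions), so treat it as an illustration rather than a PEP 440 implementation; the function names are my own:

```python
from typing import Tuple


def parse(version: str) -> Tuple[int, ...]:
    """Turn a plain dotted version such as '2.2.1' into (2, 2, 1)."""
    return tuple(int(part) for part in version.split("."))


def compatible(candidate: str, spec: str) -> bool:
    """Return True if candidate satisfies `~=spec` (simplified, numeric versions only)."""
    cand = parse(candidate)
    base = parse(spec)
    if len(base) < 2:
        raise ValueError("~= needs at least two version segments, e.g. 2.2")

    # Everything except the last segment of the spec must match exactly.
    prefix = base[:-1]

    # Pad with zeros so that 2.2 and 2.2.0 compare as equal.
    width = max(len(cand), len(base))
    cand_padded = cand + (0,) * (width - len(cand))
    base_padded = base + (0,) * (width - len(base))

    return cand_padded >= base_padded and cand_padded[: len(prefix)] == prefix
```

With this sketch, `compatible("2.9.0", "2.2")` is true while `compatible("3.0", "2.2")` is false, and `compatible("2.2.5", "2.2.1")` is true while `compatible("2.3.0", "2.2.1")` is false. In a real project you would let pip or pipenv do this resolution for you.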

If you follow this pattern, then routine updates to apply bug fixes and security patches can be completely automated. I am a huge fan of dependabot because it does all the boring work for you: it monitors for changes in your dependencies and then creates pull requests for you to review.
