You don't always think about mathematicians building stuff like engineers do, but the truth is mathematicians build things all the time. It's just that the things they build exist mostly in their minds (and published papers). They're not concrete things you can look and feel. They do this to prove theorems or properties. Turing did just that when he "built" the Turing machine.

To set the scene, imagine it's around 1928 and no programmable computer was invented yet. But mathematicians did study algorithms and computable things. Since computers were so limited, the only way to figure out universal properties of all programs was to think really hard.

One of those properties of algorithms that they couldn't figure out was something I wrote about before: The Halting Problem. The halting problem asks whether or not you can write a program that can tell if another program will freeze or not. (Spoiler alert: You can't!)

The Halting Problem is hard to solve because there's an infinite amount of programs. To answer it, we need a computer that can execute an infinite amount of programs, and that's what Turing did.

Turing's machine is not a real machine. It's a mathematical model, a concept, just like state machines, automata or combinational logic. It exists purely in the abstract. (Although "real" implementations of the Turing machine do exist, like in this foundational computer science paper.)

How a Turing Machine Works

The Turing machine has three main parts:

- The star of the show is an infinite roll of tape. This tape can be written on, and written symbols can be erased or rewritten with different ones.

- The thing that's doing the writing is the head. The head can move up and down the tape and write, erase or re-write symbols on the tape. Like the head in a hard disk.

- There's also a state register, which is the memory of the machine. It holds the current state the machine is in.

All a Turing machine does is read and write from a piece of tape. At any step, it can write a symbol and move left or right. But even with this limited set of actions it can do all kinds of things. In fact, it can do all the things that any programming language does.

There's a table of instructions that tells the machine what to do and when. This table has five columns. The first column checks the current state the machine is in. The second column checks which symbol is currently below the head of the machine. These two columns determine the different combinations of inputs the machine can have.

The next three columns are the next symbol to be written, where the head should move and what's the next state. These three columns determine the actions performed by the machine for the given first two columns.

For instance, if the machine is currently in state "A", and the head is above the symbol "0", the machine should write "1" in that place and move right, transitioning to state B.

If you follow the instruction table at the top, you'll see that for state A all 0s are replaces with 1s and vice-versa. For B, the 0s and 1s stay the same. A always transitions to B, and B transitions to A. This leads to a machine that will invert every other symbol of a string. So for "1111" you'll get "0101".

Let's look at a more complex example to see how this really works.

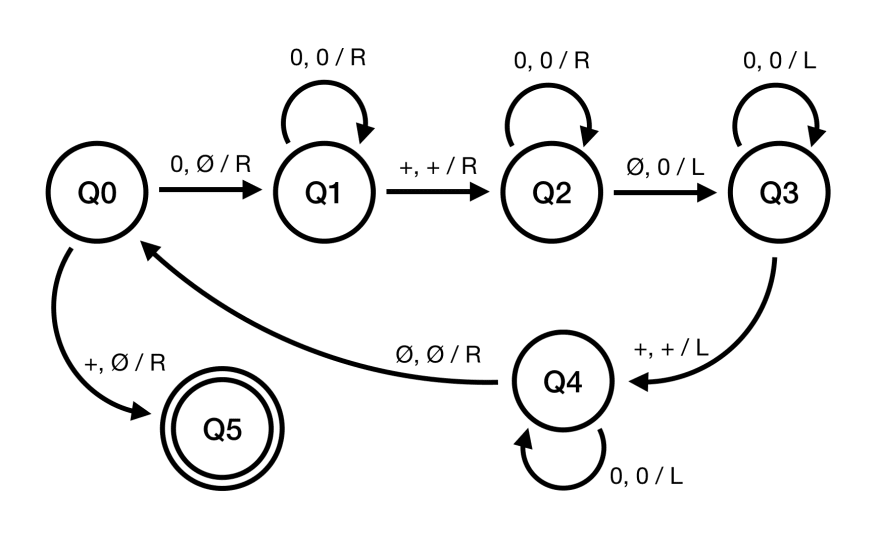

That doesn't look like your standard C program, does it? An instruction table doesn't really scale well. A much better way to write a Turing machine is with a diagram like above. If you've ever dealt with automata, this will look familiar.

The image above is a Turing machine that does addition, given a string like "00+000" (2 + 3), it will output "000000" (5).

The circles in the image are states, names Q0 trough Q4. States are connected with arrows that represent transitions from one state to another. On the arrow you see three symbols, like "0, X / R". You can look at the first symbol (in our case "0") as an if condition that checks the current symbol on the tape. If the symbol on the tape is "0", the transition will be performed. If the machine reads a symbol and there's no transition from that symbol in its current state, the machine halts.

The first state is marked with an arrow. The goal of the machine is to move to the final state, a state so nice we circle it twice. If the machine stops anywhere other than the final state, it means either our machine is wrong or our input syntax is wrong. This is similar to a way a compiler validates your code. If it gets stuck before it compiles, it means you have a syntax error.

We define the symbols a Turing machine works with. For this example, we'll use four symbols: "0", "1", "+" and "∅", to represent a blank space.

The second two symbols tell the machine what to do. The first symbol after the comma is what will be written down on the tape. "0, X / R" means "if I'm reading a 0, replace it with X". The symbol after the slash tells the head where to go. R stands for right and, naturally, L stands for left. So in total "0, X / R" means "if you read a 0, replace it with X and move right". Once the transition is performed the machine moves to the next state, the one the transition arrow points to. Every time the machine reads a symbol, it will either move to another state or halt.

Now that we have the fundamentals, let's move trough the process of adding 000 and 00 step by step. Here's a high level overview of what the machine does:

- Replace the first 0 with a blank space.

- Move to the end of the string.

- Add a "0" to the end of the string.

- Go back to the start of the string and back to step 1.

- If the first symbol is a "+", remove the "+" and complete.

Let's go trough an addition step by step. We take a piece of (imaginary) tape and write on it (with an abstract sharpie) we write "00+000". We give the machine the tape, and position the head at the first 0.

"00+000"

^(Q0)

The machine is initially in state Q0. State Q0 has two transitions, one for "0" and another one for "+". The machine reads "0", replaces it with a blank space, and moves right.

" 0+000"

^(Q1)

The machine is now in Q1. Again, we have two transitions, and one of them is a loop. Just like in programming languages, Turing machines have loops. The loop replaces all 0s with 0s, and moves right. In other words, it just skips over all the 0s. "X, X / R" is Turing-machine-speak for "skip".

" 0+000"

^(Q1)

The machine skipped all the 0s and is now at a "+", but still in Q1. The transition to Q2 simply skips over the "+".

" 0+000"

^(Q2)

Now we have similar transitions as in Q1: the machine will first skip over all the 0s in a loop.

" 0+000 "

^(Q2)

Once it gets to the blank space, it will replace the blank space with a 0, and move left, transitioning to Q3.

" 0+0000"

^(Q3)

Q3 will once again skip over all the 0s until it reaches a "+", but this time moving left. Once "+" is reached, the machine will move left one space and transition to Q4.

" 0+0000"

^(Q4)

Q4 will skip over all 0s and transition back to Q0 and move right if it reaches a blank space (i.e. one space past the start of the string).

" 0+0000"

^(Q0)

The same loop we just described happens again. Can you see what the machine is doing? Essentially, the machine replaces a 0 in front of "+" with a blank space, moves the head to the end of the string and adds a "0". It then goes back to the first 0 from the left and does the same thing. It keeps doing it until it replaces all 0s left of "+" with blank spaces.

Loop 1: 00+000

Loop 2: 0+0000

Loop 3: +00000

^(Q1)

After three loops the machine finds itself back in Q1, but this time it reads a "+". The final step of the machine replaces the "+" with a blank state and moves to Q5, the final state.

00000

^(Q5)

And there we go! We gave the machine "00+000" and we got "00000". Granted, it's not the most useful calculator ever, but it shows that with such a simple set of tools and rules we can construct a machine that can do calculation! And it works with an arbitrarily long number or 0s!

Why is this important?

Turing didn't come up with a machine. Turing came up with an abstract model for all machines. In other words, any algorithm ever can be built on a Turing machine. From "2 + 2" all the way to the latest Assassin's Creed, a Turing machine can run it. (But the latter will require a lot of tape. Like, a lot a lot.)

It's a theoretical description of computers. This is exactly what Turing used Turing machines for, after all. He thought of them so he can prove properties of computable things in general. Before that, we didn't really know anything about the limits of algorithms. We didn't know what exactly can be computed.

What's cool is that this amazing invention is just a byproduct of Turing trying to answer some fundamental mathematical questions, but in the process he created the basis of all computers we use today.

This all sounds well duh to us sitting here reading this on our general purpose computers. But keep in mind Turing is writing this in 1936. This was before any programmable computers. In fact, at the time, "computer" refered to a person, not a machine. And the thinking at the time was that computers can only be purpose-built for one task. But Turing believed otherwise. He believed that you could build a single machine that can be programmed to do any task. Turns out that was true, because you're currently using one.

The existence of machines with this property has the important consequence that, considerations of speed apart, it is unnecessary to design various new machines to do various computing processes. They can all be done with one digital computer, suitably programmed for each case. - Turing, 1950.

Oh, and did I mention he thought of all this while he was still a 23 year old student? Answering a fundamental question of mathematics and computer science and by doing so inventing an abstract way to describe all computers before they're even a thing is a pretty cool idea for a summer project.

Note: This is a repost from programmingwords.com. It's a blog about about different computer science topics explained in a simple, understandable way. Check it out if you're interested in compsci, compilers or programming languages! :)

References

Turing's paper:

https://londmathsoc.onlinelibrary.wiley.com/doi/abs/10.1112/plms/s2-42.1.230

The Annotated Turing (a great book that explains all of Turing's work better than I did and with historical context)

http://theannotatedturing.com

Turing Machines Explained - Computerphile (video)

https://www.youtube.com/watch?v=dNRDvLACg5Q

Curch-Turing Thesis

https://plato.stanford.edu/entries/church-turing/

Computing Machinery and Inteligence, Turing's paper on machine intelligence

https://www.csee.umbc.edu/courses/471/papers/turing.pdf

Top comments (3)

Hi Marin, great article!

I read it only today, my RSS reader hates me 😅.

I love to know that there are other developers fascinated by Turing machine, it’s an extraordinary mathematical model, and the Turing paper that describes them it’s mathematical art, it’s so elegant...

P.S.: I actually built a real, working, Turing machine for my high-school degree

Awesome! If you haven’t already, check out the book The Annotated Turing. It’s a full reprint of Turing’s paper explained sentence by sentence, with some interesting history behind Turing’s work. It’s a fun read.

Thanks for the suggestion, I’ll add it to my reading list immediately ✌🏻.