We've come a long way since the Apple II, the computer that birthed a new generation of personal computers. I often find myself thinking about the history behind the tiniest personal computer we carry with us today: the mobile phone.

Where it all started

Thousands of years ago stones were used to perform simple calculations. The word 'calculate' derives from the Latin 'calculus', which means small stone. Eventually people realised that this method of counting was inefficient: as their tasks grew, larger calculations required more and more stones.

A more coherent and well-organised system was needed. This was when the abacus came along, a wooden tool that allowed people to count and perform more complex computations. When this 'computer' was introduced and who invented it are unknown and heavily debated. You might have seen an abacus in a toy store; they are still used today.

One step forward: The invention of the mechanical calculator

In the 17th century the mechanical calculator was invented by Blaise Pascal, a French mathematician, physicist, inventor and writer. Although a well-known pessimist, he was one of the greatest minds in science and mathematics. A child prodigy, Pascal is said to have worked out the first 32 propositions of Euclid by the age of 12. He went on to help found the mathematics of probability and made significant contributions to the field. You may have heard of Pascal's triangle.

The syringe we use today was amongst his many inventions. At the age of nineteen Pascal began inventing calculating machines, and over 13 years he built 20 of them. This included the first mechanical calculator, which he created to help his father, who was a tax collector. It was not only very expensive but also impractical for everyday use, and it became more of a status symbol for the wealthy.

The programming language 'Pascal' was named in his honour.

Gottfried Leibniz

Considered one of the most important logicians of his time, Gottfried Wilhelm von Leibniz created his own calculating machine, the Step Reckoner, in 1673. It was inspired by and built on Pascal's work, and it could perform all four arithmetic operations (addition, subtraction, multiplication and division).

He was also the first to refine the concept of binary arithmetic and was an advocate of the binary, or base-2, system that is at the heart of digital computing.
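To see what a base-2 system looks like in practice, here is a minimal Python sketch. It is only an illustration and not how Leibniz's machine worked; the function names `to_binary` and `add_binary` are made up for this example. It converts a number to binary digits and adds two binary numbers column by column, carrying just as you would in decimal.

```python
def to_binary(n):
    """Convert a non-negative integer to its base-2 digit string."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # the remainder is the next binary digit
        n //= 2                    # move on to the next power of two
    return "".join(reversed(digits))


def add_binary(a, b):
    """Add two binary digit strings column by column, carrying as needed."""
    result = []
    carry = 0
    i, j = len(a) - 1, len(b) - 1
    while i >= 0 or j >= 0 or carry:
        total = carry
        if i >= 0:
            total += int(a[i])
            i -= 1
        if j >= 0:
            total += int(b[j])
            j -= 1
        result.append(str(total % 2))  # digit that stays in this column
        carry = total // 2             # digit carried to the next column
    return "".join(reversed(result))


print(to_binary(13))               # 1101
print(add_binary("1101", "110"))   # 10011, i.e. 13 + 6 = 19
```

Every digit is either 0 or 1, which is why the system maps so neatly onto switches that are either off or on.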

Punch Cards

Looms were used to weave yarn into fabric and to create very complex patterns on carpet and cloth, but a lot of manual labour was involved.

In the early 1800s a weaver by the name of Joseph Marie Jacquard invented a programmable loom, the Jacquard loom, that used punch cards to control each thread individually as it moved back and forth through the loom. This let him create complex, repetitive patterns without a skilled weaver setting them by hand. Soon enough his invention was acquired by the French government, and he earned a royalty on every loom sold. Weavers who feared unemployment attempted to destroy Jacquard's looms.

The punch cards used by this machine would go on to play a huge role in the development of computers, as they were later adapted for computer data input.

Charles Babbage and his difference engine

Known as the father of the modern computer, Charles Babbage was a polymath: a gifted mathematician, inventor and mechanical engineer who designed what he called the Difference Engine. He stopped working on it to pursue a more ambitious invention that built on the Difference Engine, known as the Analytical Engine.

Inspired by Jacquard, Babbage used punch cards in his Analytical Engine so that calculations could be performed automatically instead of being entered by hand. It is worth mentioning that his ideas never became reality, largely due to a lack of funding.

Ada Lovelace: The world's first programmer

It took another mathematician and pioneer of early computing, Ada Lovelace, to realise that the machine could be used for more than just calculations. She had been working with Charles Babbage on the Analytical Engine and developed the first algorithm for it. An algorithm is a series of steps for solving a specific problem. Because she came up with that algorithm in the 1800s, she is known as the first programmer.

It was through Lovelace's insight that algorithms could be programmed into the Analytical Engine that it came to be seen as the very first design for a general-purpose computing machine in history.
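To make the idea of an algorithm as a series of steps concrete, here is a minimal Python sketch. It computes Bernoulli numbers, the subject of the program in Lovelace's published notes, but it uses a standard textbook recurrence rather than her exact sequence of operations. The function name `bernoulli_numbers` is made up for this illustration.

```python
from fractions import Fraction
from math import comb  # available in Python 3.8+


def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (convention B_1 = -1/2)."""
    b = [Fraction(1)]  # step 1: start from B_0 = 1
    for m in range(1, n + 1):
        # step 2: combine all previously computed values
        total = sum(comb(m + 1, k) * b[k] for k in range(m))
        # step 3: solve the recurrence for the next number
        b.append(-total / (m + 1))
    return b


for index, value in enumerate(bernoulli_numbers(4)):
    print(f"B_{index} = {value}")
```

Each pass through the loop reuses the results of the earlier passes, which is exactly the kind of repeated, mechanical procedure a programmable engine is suited to.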

Herman Hollerith: The Census Tabulator

Herman Hollerith, an American inventor, invented the first punched-card electromechanical census tabulator, inspired by the work of Babbage. This machine, built for punch cards, could read data up to 65 at a time and tally up the results. It was so successful that he went on to found the Tabulating Machine Company, which eventually became part of IBM.

Alan Turing and the Universal machine

You may have seen the movie 'The Imitation Game' and so know about one of the greatest minds in the history of computer science, Alan Turing. Turing studied mathematics at the University of Cambridge, and after graduating he was elected to a fellowship at King's College.

In 1936 Turing proposed the concept of a universal machine, which was later named the Turing machine. The modern computer is largely based on Turing's ideas.

Konrad Zuse: Inventor of the modern day computer

Konrad Zuse began building the world's first programmable computer, the Z1, in 1936. His machines were the first to use Boolean logic and binary arithmetic to make decisions, with later models such as the Z3 built from relays. Zuse's programs were fed in on punched tape.

In 1942 Zuse began work on the Z4, which was completed in 1945 and later became the world's first commercial digital computer. He is considered today to be the inventor of the world's first modern computer.

Howard Aiken and The Mark I

Inspired by Babbage's Analytical Engine, Dr. Howard Aiken, in collaboration with IBM, began working on the Harvard Mark I calculating machine. It was composed of nearly 1 million parts. One of the first programmers of the Mark I was Grace Hopper, a pioneer in computer programming. In 1947 operators of its successor, the Mark II, traced a fault to a literal computer bug: a dead moth trapped in one of the machine's relays. Hopper is often credited with popularising the word "debugging".

The Beginning of Modern computing: Vacuum tubes

John Atanasoff and a graduate student, Clifford E. Berry, invented the ABC (Atanasoff-Berry Computer), the first electronic digital computer. It was a purely digital machine that used vacuum tubes, binary arithmetic and Boolean logic to solve up to 29 equations at a time.

The Colossus, World War II, Alan Turing and the Enigma Code

In 1943 the Colossus, designed by engineer Tommy Flowers, was built to help break encrypted German messages.

When the war broke out, governments began pouring money and resources into computing research. They wanted to develop technologies that would give them advantages over other countries. As a result many advancements were made in fields like cryptography.

During the war, computers were used to process secret enemy messages. A team of elite mathematicians, including Alan Turing and Gordon Welchman, was tasked with cracking Enigma once it became clear that the German messages could not be broken with traditional codebreaking techniques: there were far too many possible combinations. With the help of his fellow mathematicians, Turing built a machine, known as the Bombe, which eventually cracked the codes.

The movie 'The Imitation Game', released in 2014, highlights Alan Turing's life and the role he played in cracking Enigma.

ENIAC: The first high speed electronic digital computer

Completed in 1946 and consisting of about 18,000 vacuum tubes, the ENIAC was the first successful high-speed electronic computer. It took concepts from Atanasoff's ABC and elaborated on them on a larger scale.

The Transistor and the TRADIC

In 1947 the first transistor was invented at Bell Labs, and by 1954 the first transistorised digital computer, the TRADIC, had been built from about 800 transistors.

In this era many contributions were made to both the hardware and software aspects of computing.

The First programming language

Assembly, introduced in 1949, was the first programming language. It was a way to communicate with the machine using short symbolic instructions instead of raw machine language (binary). The first widely used programming language, however, was Fortran, whose development John Backus began at IBM in 1954. Assembly is a low-level language whilst Fortran is a high-level language.

Grace Hopper: Inventor of the First Compiler

Translating code into machine code by hand was a very expensive and time-consuming process. This all changed when Grace Hopper invented a compiler, which removed the need to manually convert programming code into machine code (binary).

She also assisted with the development of the programming language COBOL.

Integrated circuit: A hardware revolution

Whilst transistors were an improvement over vacuum tubes, they still had to be individually soldered together. The more complex computers became, the more complex the connections between transistors became.

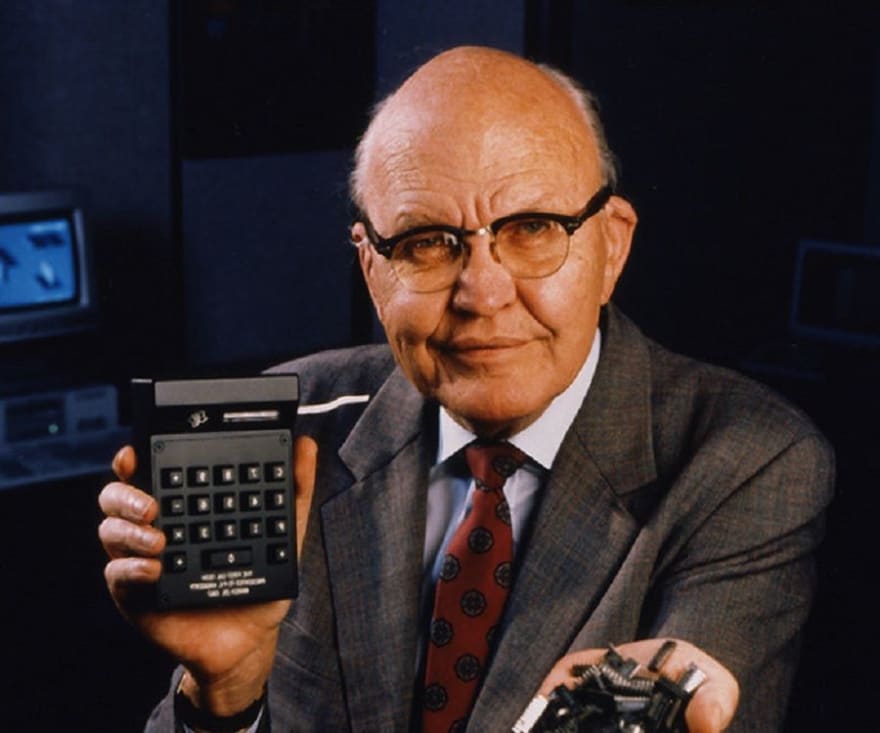

In 1958 this all changed with Jack Kilby of Texas Instruments and his invention of the integrated circuit. It was a way to pack many transistors onto a single chip instead of wiring them individually. Integrated circuits sparked a hardware revolution and helped the development of many other electronic devices, such as the mouse invented by Douglas Engelbart in 1964. Engelbart also went on to demonstrate the first GUI.

Xerox Alto

The first computer designed to support an operating system based on a graphical user interface (GUI) was the Xerox Alto. It would go on to influence the design of personal computers, the Macintosh in particular.

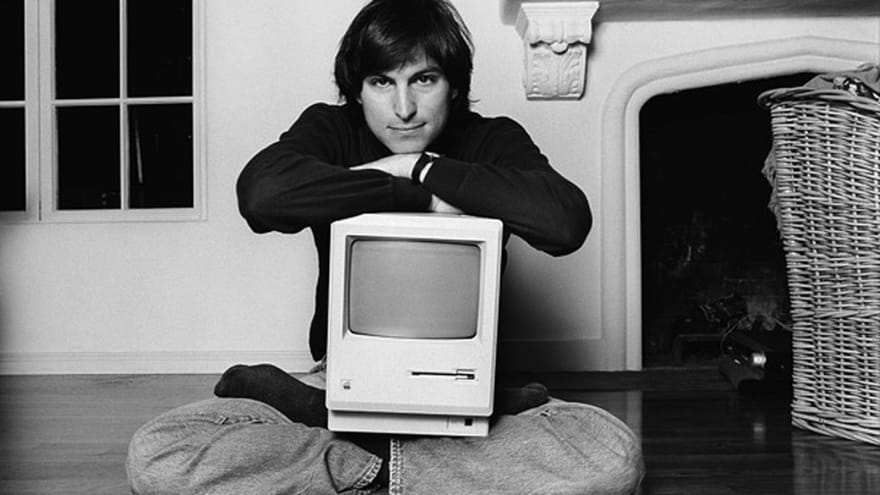

Steve Jobs and the Personal Computer

Steve Jobs was a pioneer of the personal computer, known as one of the greatest inventions of the 20th century. He founded Apple Inc. along with Ronald Wayne and Steve Wozniak in 1976. Jobs and Wayne had worked together at Atari. At that time many computers were still the size of a room, and the founders had a vision of making computers small enough for people to use at home or in the office.

The Apple I was created in 1976 and sold only as a circuit board. Ronald Wayne left the company 12 days after the founding of Apple and sold his shares to Jobs and Wozniak for $800. In 1977 the Apple II was released. It was greatly improved, with a proper keyboard and, later, floppy disk drives.

Apple then visited Xerox PARC and drew on its GUI for future products. The Apple III and the Lisa followed, and both failed. It was the release of the Macintosh in 1984 that changed the way people saw computers forever. The response to the release was a standing ovation.

Fast forward to 2007, when the iPhone was introduced and kicked off the smartphone market.

Computers as we know them today

We've come a long way, from one of the most revolutionary inventions, the computer, to the birth of the personal computer. It has changed the way we do everything today, from the way we communicate via email, chat, video and social media on the internet to the way we function in our daily lives, with applications that let us order food or catch a ride within minutes. We've come to rely on technology, and it will continue to dominate various sectors for generations to come.
