Azure Storage Account Overview

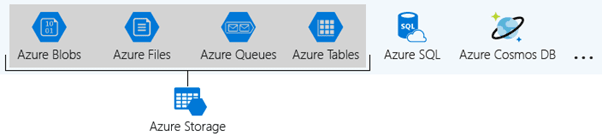

Each organization has specific requirements for its cloud-hosted data. It may need to store data within a particular region or need separate billing for different data categories. Azure storage accounts help to formalize these types of policies and apply them to the Azure data. Azure offers many ways to store data. There are several database options, such as Azure SQL Database, Azure Cosmos DB, and Azure Table Storage. Azure provides various ways to store and send messages, such as Azure Queues and Event Hubs. Even loose files can be stored using services like Azure Files and Azure Blobs.

What is an Azure Storage Account?

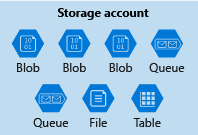

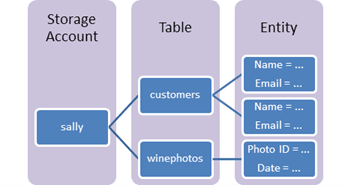

A storage account is a container that groups a set of Azure Storage services together. Only data services from Azure Storage can be included in a storage account. Combining data services into a storage account allows the user to manage them as a group. The settings specified while creating the account, or any setting changed after creation, apply to everything in the account. Deleting the storage account deletes all the data stored inside it.

Let us consider a machine manufacturing company that produces machinery and instruments such as pulleys, screws, and wedges. The products are marketed to stores, which then sell them to consumers.

The designs and manufacturing processes of the company are kept as trade secrets. The spreadsheets, documents, and instructional videos that record this information are critical to the business and require redundant storage. This data is primarily accessed from the main factory, so the company would prefer to store it in a nearby datacentre. The cost of this storage should be billed to the manufacturing department.

The company also has a sales group that creates demonstration and advertisement videos to promote the products to consumers. The priority for this data is low cost rather than redundancy or location. This storage should be billed to the sales team. Such business needs require multiple Azure storage accounts, and each storage account will have the appropriate settings for the data it holds.

Types of Azure Storage Accounts

Azure Storage provides different types of storage accounts. Each type supports unique features and has its own pricing model. Consider these differences before creating a storage account to determine the best account type for the applications. The types of storage accounts are:

• General-purpose v2 accounts: Basic storage account type for blobs, files, queues, and tables. Recommended for most scenarios using Azure Storage.

• General-purpose v1 accounts: Legacy account type for blobs, files, queues, and tables. Use general-purpose v2 accounts instead when possible.

• Block Blob Storage accounts: Storage accounts with premium performance characteristics for block blobs and append blobs. Recommended for scenarios with high transaction rates, or scenarios that use smaller objects or require consistently low storage latency.

• File Storage accounts: Files-only storage accounts with premium performance characteristics. Recommended for enterprise or high-performance scale applications.

• Blob Storage accounts: Legacy Blob-only storage accounts. Use general-purpose v2 accounts instead when possible.

Core Storage Services

Core storage services provide an enormously scalable object store for data objects, disk storage for Azure virtual machines (VMs), a file system service for the cloud, a messaging store for reliable messaging, and a NoSQL store.

The Azure Storage platform comprises the following data services:

• Azure Blobs are a massively scalable object store for text and binary data.

• Azure Files are managed file shares for cloud or on-premises deployments.

• Azure Queues are a messaging store for reliable messaging between application components.

• Azure Tables are a NoSQL store for schema-less storage of structured data.

• Azure Disks are block-level storage volumes for Azure Virtual Machines.

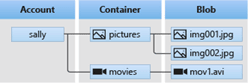

Azure Blob Storage

Azure Blob storage is an object storage solution designed for the cloud. Blob storage is optimized for storing massive amounts of unstructured data. Unstructured data is data that does not adhere to a specific data model or definition, such as text or binary data. Blob storage objects can be accessed by a user or client application via HTTP/HTTPS from anywhere in the world. Objects in Blob storage can be accessed through the Azure Storage REST API, Azure PowerShell, Azure CLI, or an Azure Storage client library. The resources of Blob storage and the relationship between them are pictured in the accompanying diagram.

Blob Storage consists of a storage account with containers residing in it. Each container holds its blobs.
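
The account, container, and blob hierarchy is reflected in the address of each blob. The following is a minimal sketch, assuming the default public endpoint form `https://<account>.blob.core.windows.net/<container>/<blob>`; the account, container, and blob names are hypothetical and the helper `blob_url` is not part of any Azure library.

```python
from urllib.parse import quote


def blob_url(account: str, container: str, blob_name: str) -> str:
    """Build the HTTPS address of a blob from its account, container, and name."""
    # Blob names may contain "/" to mimic folders, so "/" is left unescaped.
    return (
        f"https://{account}.blob.core.windows.net/"
        f"{container}/{quote(blob_name, safe='/')}"
    )


# Hypothetical names, used only for illustration.
print(blob_url("myaccount", "gameplay-clips", "user42/clip 001.mp4"))
```

The same three parts are what a client library or the REST API needs to locate an object, which is why a blob can be fetched from any platform that can issue an HTTPS request.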

Azure Storage supports three types of blobs; they are:

Block Blobs

Block blobs are designed to store text and binary data. They are made up of blocks of data that can be managed independently. A block blob can store up to about 4.75 TiB of data. Larger block blobs, with a capacity of up to about 190.7 TiB, were in preview at the time of writing.

Append Blobs

Append blobs are made up of blocks like block blobs but are optimized for append operations. Append blobs are preferred for scenarios like logging data from virtual machines.

Page Blobs

Page blobs store random-access files of up to 8 TiB in size. These blobs store virtual hard drive (VHD) files and serve as disks for Azure virtual machines.

Azure Blob Use case

Let us consider an augmented-reality gaming company. The game runs on every mobile platform. The scenario here is to add a new feature that allows users to record video clips of their gameplay and upload them to the servers. Users can watch the clips either directly in-game or through the game website. Every upload and viewing is logged to support analytics and traceability.

The requirement is a storage solution that can handle thousands of simultaneous uploads, a large volume of video data, and constantly growing log files. Viewing functionality is also needed in all the mobile apps and the website, so the solution requires API access from multiple platforms and languages. Azure Blob storage could be an ideal solution for this application.

Azure Blob Storage was designed to serve specific needs. If the business use case needs to store unstructured data such as audio, video, or images, then Blob Storage is probably the right option. The objects stored in Blob Storage do not necessarily have a file extension.

The following points describe the use case scenarios:

• Serving images or documents directly to a browser

• Storing files for distributed access

• Streaming video and audio

• Writing to log files

• Storing data for backup, restore, disaster recovery and archiving

• Storing data for analysis by an on-premises or Azure-hosted service

Azure Blob Pricing

Azure storage offers various access tiers, which allow blob object data to be stored in a cost-effective manner. The available access tiers include:

• Hot - Optimized for storing frequently accessed data.

• Cool - Optimized for storing less frequently accessed data that is stored for at least 30 days.

• Archive - Optimized for storing rarely accessed data that is stored for at least 180 days and has flexible latency requirements.

Hot Access Tiers

The storage cost for the hot access tier is higher than the storage costs of the cool and archive tiers, but its access cost is the lowest. Typical uses for the hot access tier include:

• Data that is in active use or expected to be accessed often, that is, data that is read from and written to frequently.

• Data that is staged for processing and eventual migration to the cool access tier.

Cool Access Tiers

Compared to the hot access tier, the cool access tier has lower storage costs and higher access costs. This tier is intended for data that will remain in the cool tier for at least 30 days. Typical uses for the cool access tier include:

• Short-term backup and disaster recovery datasets

• Older media content that is not viewed regularly anymore but is expected to be easily available when accessed

• Large data sets that must be stored cost-effectively while more data is being collected for future processing. For example, long-term storage of scientific data or raw telemetry data from a manufacturing firm

Archive Access Tiers

The storage cost of the archive access tier is the lowest, but its data retrieval cost is higher than that of the hot and cool tiers. Data must remain in the archive tier for at least 180 days or be subject to an early deletion charge. Retrieving data from the archive tier can take considerably longer, depending on the priority of the rehydration. Typical uses for the archive access tier include:

• Long-term backup, secondary backup, and archival datasets

• The preservation of original raw data even after processing it into the final usable form

• Long-term storage of compliance and archival data, which is hardly ever accessed
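
The minimum retention periods described for the tiers can be captured in a few lines. The following is a rough sketch, not an official pricing rule: the 30-day and 180-day minimums come from the text above, while the helper names and the read-frequency thresholds in `suggest_tier` are hypothetical values chosen only for illustration.

```python
# Minimum retention in days per tier, as stated in the tier descriptions.
MIN_RETENTION_DAYS = {"hot": 0, "cool": 30, "archive": 180}


def days_short_of_minimum(tier: str, days_stored: int) -> int:
    """Return how many days short of the tier's minimum retention a blob is."""
    return max(0, MIN_RETENTION_DAYS[tier] - days_stored)


def suggest_tier(expected_reads_per_month: float, expected_days_stored: int) -> str:
    """Hypothetical rule of thumb; the read thresholds are illustrative only."""
    if expected_days_stored >= 180 and expected_reads_per_month < 0.1:
        return "archive"
    if expected_days_stored >= 30 and expected_reads_per_month < 1:
        return "cool"
    return "hot"


# A blob removed from the archive tier after 45 days is 135 days short.
print(days_short_of_minimum("archive", 45))
print(suggest_tier(expected_reads_per_month=0.5, expected_days_stored=60))
```

A non-zero result from `days_short_of_minimum` signals that moving or deleting the blob now would fall inside the early deletion window for that tier.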

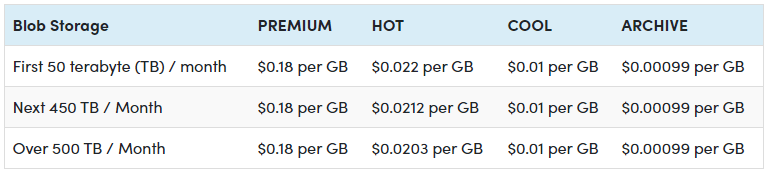

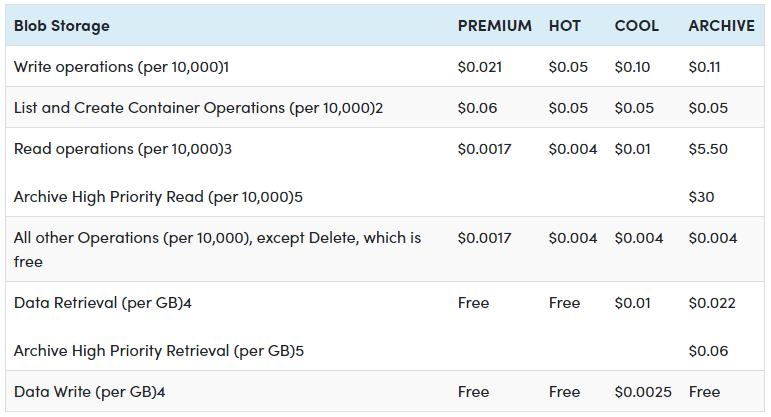

Data Storage Prices

Operations and Data Transfer Prices

Check out Azure Page Blobs Storage Pricing for more details

Azure Storage Files

Azure Files provides fully managed file shares in the cloud that are accessible via the industry-standard Server Message Block (SMB) protocol. Azure file shares can be mounted concurrently by cloud or on-premises deployments of Windows, Linux, and macOS. They can also be cached on Windows servers with Azure File Sync for quicker access. This lets the user set up highly available network file shares, and multiple VMs can share the same files with both read and write permissions.

One difference between Azure Files and files on a corporate file share is that the user can access the files from anywhere by using a URL that points to the file and contains a shared access signature (SAS) token. SAS tokens can be generated by the user; they grant specific access to a private asset for a specific period of time.
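
Because a SAS token travels as query parameters on the URL, its time limit can be inspected without any Azure library. The following is a minimal sketch, assuming the token carries its expiry in the `se` parameter in second-resolution UTC form; the URL, the share and file names, and the `sig` value are placeholders, not a real token.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs, urlsplit


def sas_expiry(url: str) -> datetime:
    """Read the expiry time from the `se` query parameter of a SAS URL."""
    params = parse_qs(urlsplit(url).query)
    # Assumes the second-resolution UTC form, e.g. 2030-01-01T00:00:00Z.
    expiry = datetime.strptime(params["se"][0], "%Y-%m-%dT%H:%M:%SZ")
    return expiry.replace(tzinfo=timezone.utc)


def sas_is_expired(url: str, now: datetime) -> bool:
    """Return True when the token's expiry time has been reached."""
    return now >= sas_expiry(url)


# Hypothetical URL; the signature is a placeholder and grants nothing.
example = (
    "https://myaccount.file.core.windows.net/reports/q1.xlsx"
    "?sv=2022-11-02&sp=r&se=2030-01-01T00:00:00Z&sig=PLACEHOLDER"
)
print(sas_is_expired(example, datetime.now(timezone.utc)))
```

The storage service itself enforces the expiry and validates the signature; a client-side check like this is only useful for deciding when to request a fresh token.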

File shares are one type of data that can be stored in an Azure Storage account. File shares can be used in many real-world scenarios:

• Many on-premises applications rely on file shares. Azure Files makes it easier to migrate applications that share data to Azure. If the file share is mounted to the same drive letter that the on-premises application uses, the part of the application that accesses the file share should work with minimal, if any, changes.

• Configuration files can be stored on a file share and accessed from multiple VMs. Tools and utilities used by multiple developers in a group can be stored on a file share, ensuring that everybody can find them and uses the same version.

• Resource logs, metrics, and crash dumps are just three examples of data that can be written to a file share and processed or analyzed later.

Azure Storage File Use case

In some file-sharing scenarios, end users should not access copies of a file through its URI; instead, the share needs to be mapped locally on their computers. This is where Azure File Storage fits the customer's needs. If the business use case deals mostly with standard file extensions like *.docx, *.png, and *.bak, then File Storage is probably the right option.

Let us consider a financial company that is migrating an application to Azure that creates reports and data exports for users and other systems to consume. The company stores these reports and data exports on both NAS devices and Windows file shares. The files are stored this way so they can be easily shared between systems. The company wants to consolidate the storage of these files into a native cloud service. It wants to continue to use Server Message Block (SMB) to access the files securely. The main concern is to reduce the impact on existing applications, systems, and users. The company wants a drop-in replacement for its existing SMB shares and expects that no code changes will be required to support the moved data.

The following are the solutions provided by Azure Files for the use case scenario:

"Lift and shift" applications

The foremost challenge faced by the company is moving its application to Azure. Azure Files eases the "lift and shift" of applications to the cloud when the application or user data is stored in a file share.

Replace or supplement on-premises File servers

After moving the application to the cloud, the company wants to consolidate the storage of these files into a native cloud service. Azure Files can be used to completely replace or supplement on-premises file servers or NAS devices.

Simplify cloud development

The company's final requirement is to continue using Server Message Block (SMB) to access its files securely. With Azure Files, the user can also store development and debugging tools that need to be accessed from many virtual machines.

Azure Blob Storage vs File Storage

Azure Blob Storage and Azure File Storage each have their own properties and suit different scenarios. Azure Files provides fully managed cloud file shares that can be accessed from anywhere. Azure Blob Storage stores unstructured data that can be accessed at a massive scale.

Consider a development environment where every developer needs access to an IDE and tools without downloading them from the internet. In this situation, Azure Blob Storage would meet the need: a developer can store the development tools in a blob container and give the team a link to the blob location.

To implement a file server in an organization, the user should choose Azure Files. A file server is used to share files across departments in an organization. In this kind of file sharing, end users should not access copies of a file through its URI; instead, the share needs to be mapped locally on their computers. This is where Azure File Storage fits the organization's needs.

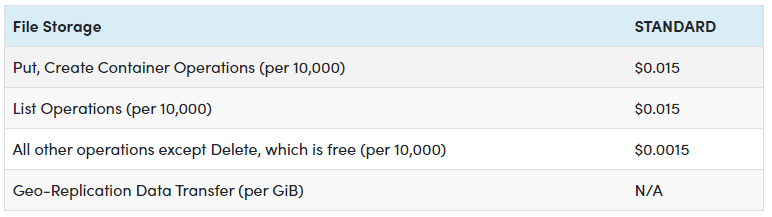

Azure Storage File Pricing

Below are prices for Data storage and Operations and Data Transferring in Azure File,

Pricing for Operations and Data Transferring

Check out Azure Files Pricing for more details

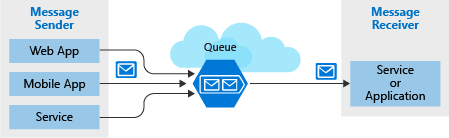

Azure Storage Queues

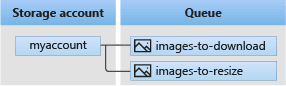

Azure Queue storage is an Azure service that implements cloud-based queues. Each queue maintains a list of messages. Application components access a queue using a REST API or an Azure-supplied client library. Typically, there are one or more sender components and one or more receiver components. Sender components add messages to the queue. Messages are retrieved from the front of the queue for processing by receiver components. The illustration shows multiple sender applications adding messages to the Azure Queue and one receiver application retrieving the messages. Storage Queues are part of the Azure Storage infrastructure and feature a simple REST-based GET/PUT/PEEK interface, providing reliable, persistent messaging within and between services.

Concepts of Queue service

URL format:

Queues are addressable using the following URL format:

https://.queue.core.windows.net/

The following URL addresses a queue within the diagram:

https://myaccount.queue.core.windows.net/images-to-download
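
The example address above combines a storage account name with a queue name. The following is a small sketch that builds and splits such an address, assuming the default public endpoint `queue.core.windows.net`; the helper names are hypothetical and are not part of any Azure library.

```python
from urllib.parse import urlsplit


def queue_url(account: str, queue: str) -> str:
    """Build the address of a queue from the account name and queue name."""
    return f"https://{account}.queue.core.windows.net/{queue}"


def parse_queue_url(url: str) -> tuple:
    """Split a queue address back into (account, queue)."""
    parts = urlsplit(url)
    account = parts.hostname.split(".")[0]
    queue = parts.path.strip("/")
    return account, queue


print(queue_url("myaccount", "images-to-download"))
# prints https://myaccount.queue.core.windows.net/images-to-download
print(parse_queue_url("https://myaccount.queue.core.windows.net/images-to-download"))
# prints ('myaccount', 'images-to-download')
```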

Storage account: All access to Azure Storage is done through a storage account.

Queue: A queue contains a set of messages. The queue name must be all lowercase.

Message: A message, in any format, of up to 64 KB. Before version 2017-07-29, the maximum time-to-live allowed is seven days. For version 2017-07-29 or later, the maximum time-to-live can be any positive number, or -1 indicating that the message doesn't expire. If this parameter is omitted, the default time-to-live is seven days.

Azure Storage Queues Use case

Imagine the user works as a developer for a major news organization that reports breaking news alerts. The company employs a worldwide network of journalists that are constantly sending updates through a web portal and a mobile app. A middle-tier web service layer then takes those alert updates and publishes them online through several channels. However, it's been noticed the system is missing alerts when globally significant events occur.

The middle tier provides plenty of capacity to handle normal loads. However, a look at the server logs revealed the system was overloaded when several journalists tried to upload larger breaking stories at the same time. Some writers complained the portal became unresponsive, and others said they lost their stories altogether. The user has spotted a direct correlation between the reported issues and the spike in demand on the middle-tier servers.

Clearly, the user needs a way to handle these unexpected peaks. In such a situation, the user doesn't want to add more instances of the website and middle-tier web service because they are expensive and, under normal conditions, redundant. Instances could be spun up dynamically, but this takes time, and the problem would persist while waiting for new servers to come online.

This problem can be solved by using Azure Queue storage. A storage queue is a high-performance message buffer that can act as a broker between the front-end components and the middle tier. The front-end components place a message for each new alert into a queue. The middle tier then retrieves these messages one at a time from the queue for processing. At times of high demand, the queue may grow in length, but no stories will be lost, and the application will remain responsive. When demand drops back to normal levels, the web service will catch up by working through the queue backlog.
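
The buffering behaviour described here can be illustrated with a small in-memory simulation. This is only a sketch of the pattern using a Python `deque` in place of a real storage queue; the arrival numbers and the per-tick capacity of the middle tier are made up for the example.

```python
from collections import deque


def simulate(arrivals_per_tick, capacity_per_tick):
    """Return the backlog length after each tick and the total processed."""
    backlog = deque()
    lengths = []
    processed = 0
    for tick, arrivals in enumerate(arrivals_per_tick):
        # Front end: enqueue one message per new alert.
        for n in range(arrivals):
            backlog.append((tick, n))
        # Middle tier: take messages from the front, up to its capacity.
        for _ in range(min(capacity_per_tick, len(backlog))):
            backlog.popleft()
            processed += 1
        lengths.append(len(backlog))
    return lengths, processed


# A burst of 10 alerts arrives at tick 2; the middle tier handles 3 per tick.
lengths, processed = simulate([2, 2, 10, 1, 0, 0], capacity_per_tick=3)
print(lengths)    # prints [0, 0, 7, 5, 2, 0]
print(processed)  # prints 15
```

The backlog grows during the burst and drains afterwards, and the number of processed messages equals the number of alerts submitted, which is the property the news organization needs.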

Azure Storage Queue properties

• Azure Storage Queues can be used when the application needs to store more than 80 GB of messages in a queue.

• Azure Storage Queues log all transactions executed against the queue, which can be used for analytics or audit purposes.

• If the application requires load balancing, fault tolerance, and increased scalability, then Azure Storage Queues are the best choice.

• Storage Queues provide flexible and reliable delegated access control mechanisms. Users can grant access at the Storage Account level or at the entity level.

• When it comes to scalability, the Storage Queues can store up to 200 TB of messages. It is also possible to create an unlimited number of Storage Queues in a Storage Account.

• Allowed characters in Storage Queue names are lowercase letters, numbers, and hyphens, with a length ranging from 3 to 63 characters.

• The maximum message size in Storage Queues is 64 KB. If the messages are base64 encoded, then the maximum message size is 48 KB. Large message sizes are supported by combining queues with blobs, through which messages up to 200 GB can be enqueued as a single item.

• Whenever the Storage Queue messages are retrieved more than the specified Dequeue Count, the messages are then moved to the configured dead-letter queue.
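
Two of the limits above, the naming rule and the message size, are easy to check before calling the service. The following is a minimal sketch under stated assumptions: the regular expression encodes the lowercase, digit, and hyphen rule with a length of 3 to 63, and additionally assumes that a name starts and ends with a letter or digit and has no consecutive hyphens. The function names are hypothetical.

```python
import base64
import re

# Lowercase letters, digits, and hyphens; 3 to 63 characters in total.
# The alphanumeric first/last character and the ban on "--" are extra
# assumptions beyond the rule stated in the list above.
_QUEUE_NAME = re.compile(r"^(?!.*--)[a-z0-9][a-z0-9-]{1,61}[a-z0-9]$")

MAX_MESSAGE_BYTES = 64 * 1024


def is_valid_queue_name(name: str) -> bool:
    """Check a queue name against the naming pattern above."""
    return _QUEUE_NAME.fullmatch(name) is not None


def fits_in_message(payload: bytes, base64_encode: bool = True) -> bool:
    """Check whether a payload fits in one message, optionally after base64."""
    size = len(base64.b64encode(payload)) if base64_encode else len(payload)
    return size <= MAX_MESSAGE_BYTES


print(is_valid_queue_name("images-to-download"))  # prints True
print(is_valid_queue_name("Images"))              # prints False
print(fits_in_message(b"x" * (48 * 1024)))        # prints True
print(fits_in_message(b"x" * (48 * 1024 + 1)))    # prints False
```

Base64 turns every 3 bytes into 4 characters, which is why a 48 KB payload is the largest that still fits in a 64 KB message once encoded.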

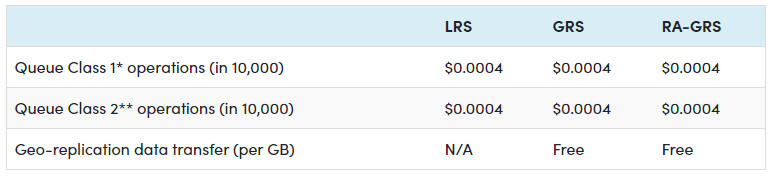

Azure Storage Queue Pricing

Below are prices for Data storage and Operations and Data Transferring in Azure Storage Queue,

Pricing for Data Storage

Pricing for Operations and Data Transferring

Check out Azure Queues Storage Pricing for detailed pricing

Azure Table Storage

Azure Table storage is a service that stores structured NoSQL data in the cloud, providing a key/attribute store with a schema-less design. Because Table storage is schema-less, it is easy to adapt your data as your application's needs evolve. Access to Table storage data is fast and cost-effective for many types of applications, and is usually lower in cost than traditional SQL for similar volumes of data.

• Table: A table is a group of entities. Tables do not enforce a schema on entities, which means that one table can contain entities that have different sets of properties.

• Entity: An entity is a collection of properties, similar to a database row. An entity in Azure Storage can be up to 1 MB in size. An entity in Azure Cosmos DB can be up to 2 MB in size.

• Properties: A property is a name-value pair. Each entity can include up to 252 properties to store data. Each entity also has three system properties that specify a partition key, a row key, and a timestamp. Entities with the same partition key can be queried more quickly and inserted or updated in atomic operations. An entity's row key is its unique identifier within a partition.
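
The relationship between tables, entities, and keys can be pictured with a small in-memory model. This is only an illustrative sketch, not the Table service API: the class `TableSketch` and the sample entities are hypothetical, while `PartitionKey` and `RowKey` are the names of the key properties.

```python
from collections import defaultdict


class TableSketch:
    """In-memory stand-in for a table: entities grouped by partition key."""

    def __init__(self):
        self._partitions = defaultdict(dict)

    def upsert(self, entity: dict) -> None:
        # Only the two key properties are required; the rest is free-form.
        pk, rk = entity["PartitionKey"], entity["RowKey"]
        self._partitions[pk][rk] = dict(entity)

    def get(self, partition_key: str, row_key: str) -> dict:
        return self._partitions[partition_key][row_key]

    def query_partition(self, partition_key: str) -> list:
        return list(self._partitions[partition_key].values())


table = TableSketch()
# Entities in the same table may carry different sets of properties.
table.upsert({"PartitionKey": "sales", "RowKey": "001", "Name": "Ada", "Phone": "555-0100"})
table.upsert({"PartitionKey": "sales", "RowKey": "002", "Name": "Lin", "Region": "EMEA"})
table.upsert({"PartitionKey": "factory", "RowKey": "001", "Device": "press-7"})

print(len(table.query_partition("sales")))   # prints 2
print(table.get("factory", "001")["Device"])  # prints press-7
```

The row key only needs to be unique within its partition, so `"001"` can appear in both the `sales` and `factory` partitions without conflict.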

Azure Table Storage Use case

Table storage is used to store flexible datasets such as user data for web applications, address books, device information, or other metadata that the service requires. Users can store any number of entities in a table. A storage account may contain any number of tables, up to the capacity limit of the storage account. Azure tables are well suited to storing structured, non-relational data. Common uses of Table storage include:

• Storing datasets that do not require complex joins, foreign keys, or stored procedures and may be denormalized for fast access

• Quickly querying data using a clustered index

• Accessing data using the OData protocol and LINQ queries with WCF Data Service .NET Libraries

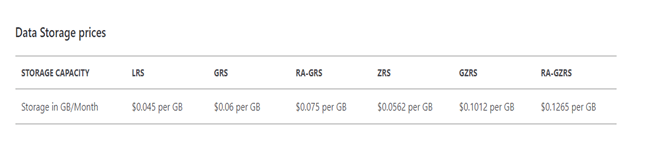

Azure Table Storage Pricing

Below are prices for Data storage and Operations and Data Transferring in Azure Storage,

Pricing for Data Storage

Pricing for Operations and Data Transferring

Azure charges $0.00036 per 10,000 transactions for tables. Any operation against the storage is counted as a transaction, including reads, writes, and deletes.
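
As a quick worked example of the transaction rate quoted above, the following sketch estimates the charge for a month of table operations. The workload of 5 million transactions is a hypothetical figure, and the calculation covers transactions only, not data storage.

```python
PRICE_PER_10K_TRANSACTIONS = 0.00036  # USD, the figure quoted above


def transaction_cost(transactions: int) -> float:
    """Estimate the transaction charge in USD for a number of operations."""
    return transactions / 10_000 * PRICE_PER_10K_TRANSACTIONS


# Hypothetical workload: 5 million reads, writes, and deletes in a month.
print(f"${transaction_cost(5_000_000):.2f}")  # prints $0.18
```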

Check out Azure Tables Storage Pricing for detailed pricing

Azure Disk Storage

Azure managed disks are block-level storage volumes that are managed by Azure and used with Azure Virtual Machines. Managed disks are like physical disks in an on-premises server, but virtualized. With managed disks, all the user has to do is specify the disk size and the disk type and provision the disk. Once the disk is provisioned, Azure handles the rest. Each disk can take one of three roles in a virtual machine:

• OS disk. One disk in each virtual machine contains the operating system files. When creating a virtual machine, the user selects a virtual machine image, which determines the operating system and the OS disk attached to the new machine. The OS disk has a maximum capacity of 2,048 GB.

• Data disk. The user can add one or more virtual data disks to each virtual machine to store data. For example, database files, website static content, or custom application code should be stored on data disks. The number of data disks that can be added depends on the virtual machine size. Each data disk has a maximum capacity of 32,767 GB.

• Temporary disk. Each virtual machine contains a single temporary disk used for short-term storage such as page files and swap files. The contents of temporary disks are lost during maintenance events, so do not use them for critical data. These disks are local to the server and are not stored in a storage account.

Azure Disk Storage Use case

Suppose the user manages a healthcare organization that is beginning a lift-and-shift migration to the cloud, where many of its systems will run on Azure virtual machines. These systems have a variety of usage and performance profiles and hold highly confidential data. The user is concerned about storage and does not want that data to be accessible from outside the virtual machine.

To address these needs, the organization's go-to option is Azure Disk Storage. Azure Disk Storage makes it possible to:

• "Lift and shift" applications that use native file system APIs to read and write data to persistent disks.

• Preserve data that does not need to be accessed from outside the virtual machine to which the disk is attached.

Azure Disk Storage Pricing

Azure Managed Disks are the new and recommended disk storage offering for use with Azure virtual machines for persistent data storage. Customers can use multiple Managed Disks with each virtual machine. Azure offers four types of Managed Disks: Ultra Disk, Premium SSD Managed Disks, Standard SSD Managed Disks, and Standard HDD Managed Disks. Azure Managed Disks are billed on an hourly basis.

Check out Pricing - Managed Disks for detailed pricing

Azure Storage Security

Azure Storage accounts provide several high-level security benefits for the data in the cloud:

• Protect the data at rest

• Protect the data in transit

• Support browser cross-domain access

• Control who can access data

• Audit storage access

Encryption at rest

All data written to Azure Storage is automatically encrypted by Storage Service Encryption (SSE) with a 256-bit Advanced Encryption Standard (AES) cipher. SSE automatically encrypts data when writing it to Azure Storage. When data is read from Azure Storage, Azure Storage decrypts the data before returning it. This process incurs no additional charges and doesn't degrade performance. It cannot be disabled.

Encryption in transit

Keep the data secure by enabling transport-level security between Azure and the client. It is advisable to use HTTPS to secure communication over the public internet. When the REST APIs are called to access objects in storage accounts, the user can enforce HTTPS by requiring secure transfer for the storage account. After secure transfer is enabled, connections that use HTTP are refused. This flag also enforces secure transfer over SMB by requiring SMB 3.0 for all file share mounts.

CORS support

Azure Storage supports cross-domain access through cross-origin resource sharing (CORS). CORS uses HTTP headers so that a web application at one domain can access resources from a server at a different domain. By using CORS, web apps ensure that they load only authorized content from authorized sources. CORS support is an optional flag that can be enabled on Storage accounts.

Role-based access control

Azure Storage supports Azure Active Directory and role-based access control (RBAC) for both resource management and data operations. The user can assign RBAC roles that are scoped to the storage account to security principals. Use Active Directory to authorize resource management operations, such as configuration. Active Directory is supported for data operations on Blob and Queue storage.

Auditing access

Auditing is another part of controlling access. The user can audit Azure Storage access by using the built-in Storage Analytics service. Storage Analytics logs every operation in real time, and the logs can be searched for specific requests. Logs can be filtered based on the authentication mechanism, the success of the operation, or the accessed resource.

Azure Monitoring

Azure Monitor maximizes the availability and performance of applications and services by delivering a comprehensive solution for collecting, analyzing, and acting on telemetry from cloud and on-premises environments. It helps us understand how the applications are performing and proactively identify issues affecting them and the resources they depend on.

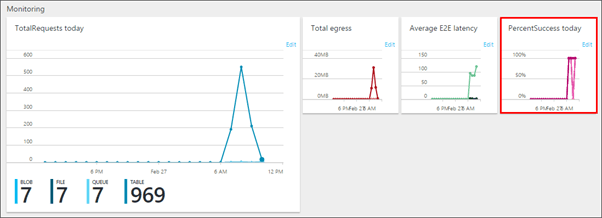

Azure Storage Account Monitoring

Azure Storage Analytics performs logging and provides metrics data for a storage account. The user can use this data to trace requests, analyze usage trends, and diagnose issues with the storage account. To use Storage Analytics, the user must enable it individually for each service to be monitored. This can be done from the Azure portal.

The gathered data is stored in a well-known blob for logging and in well-known tables for metrics, which can be accessed using the Blob service and Table service APIs. Storage Analytics has a 20 TB limit on the amount of stored data, independent of the total limit for the storage account.

After opening the Diagnostics option under the Monitoring section of the desired Storage Account, the user defines the type of metrics data to monitor and the retention policy for the data. A default set of metrics is displayed in charts on the Storage Account blade and the individual service blades. Once metrics are enabled, it may take up to an hour for data to appear in the charts.

For more details on Azure Storage Monitoring, see Monitor a storage account in the Azure portal.

Manage and Monitor Azure Storage Account with Serverless360

Managing the activities in a service like Azure Storage Account, which deals with different storage services, requires a tool with outstanding management and monitoring capabilities. Serverless360 comes into the picture to fulfil all the complex management needs required by the Azure services. Learn more about Serverless360 to deal with Azure Storage Account in real-time.

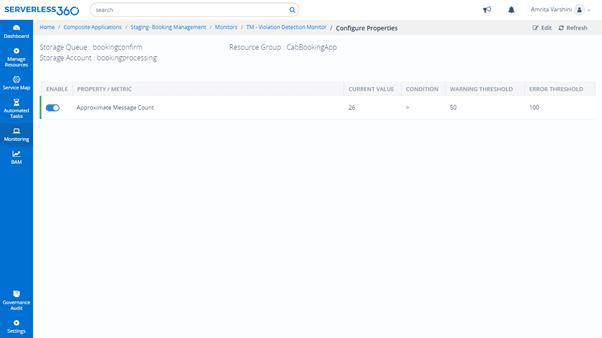

Monitor Azure Storage Services with Serverless360

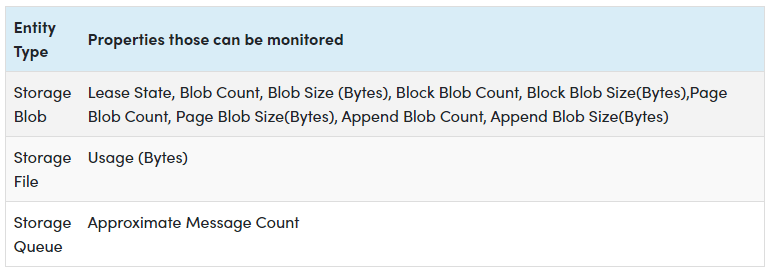

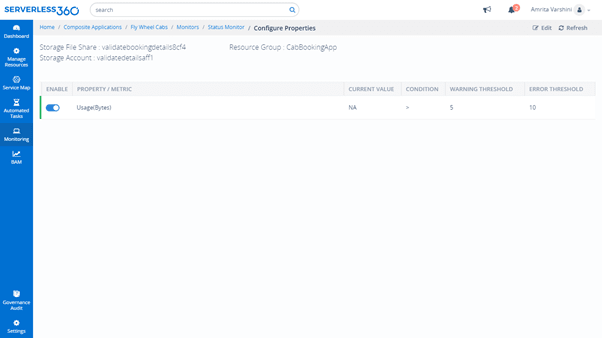

With Serverless360 monitoring abilities, the user can monitor the Storage Files, Storage Blobs and Storage Queues in a Status or Threshold monitor. Below is the list of properties on which these entities can be monitored.

Status Monitor generates a report at specific times in a day representing the entities' state against the desired values. Threshold Monitor generates a report when certain properties violate desired values for a specified period. Predominantly, Serverless360 monitors the Blobs, Files and Queues based on its properties rather than its metrics, unlike Azure Portal.

The images below depict the Blob, Queue, and Files configuration in the Status and Threshold Monitor of the Serverless360 application.

Azure Storage Queue Configuration,

Manage Azure Storage Queues with Serverless360

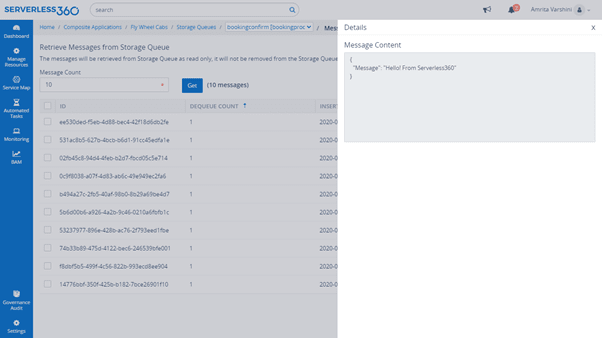

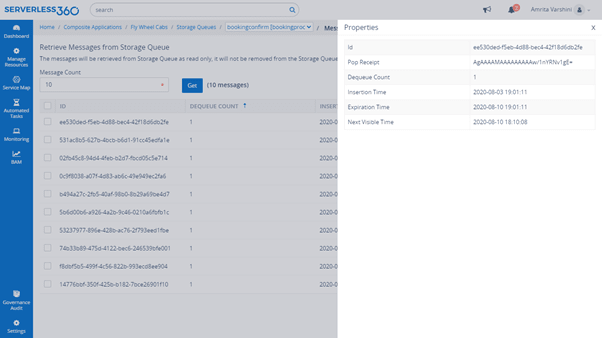

View Messages

With Serverless360, users can view the message content in the Storage Queues by retrieving the messages.

It is also possible to view the properties of the Storage Queue messages like dequeue count, Insertion time and more.

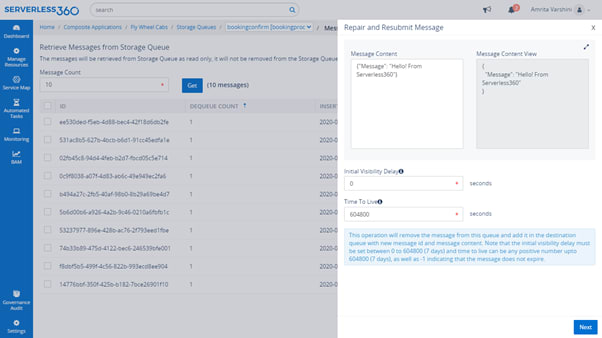

Message Processing in Storage Queues

Consider a need to move messages from one queue to another or to purge old messages from a queue. With message processing in Serverless360, the user can update messages, resubmit them, repair and resubmit them, or delete them.

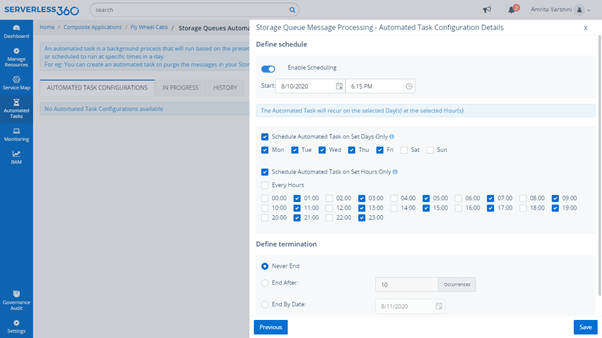

Automated Tasks to Purge Storage Queue Messages

Suppose the number of messages in the Storage Queue exceeds the limit that can be deleted manually. In that case, an automated task can be created to purge the Storage Queue periodically when dealing with a huge volume of messages.

Webinars/ Docs links

Azure Storage Account Monitoring and Management - Serverless360

Monitoring Storage Accounts - Azure Storage Accounts

Azure Storage documentation - Microsoft Docs
