The Modern Data Stack is a set of tools that lets engineers process data flexibly. Each tool has a specific job: Snowflake stores the data, Airflow orchestrates, Airbyte loads the data, dbt transforms it, and Superset visualizes it. Complex frameworks are needed to deal with complex data. With so many tools around, it's easy to lose sight of the big picture. In fact, it's a crowded market now.

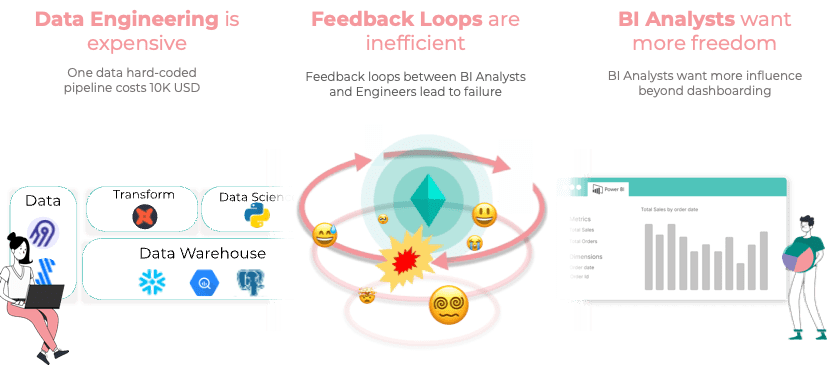

In the past, a BI analyst could analyze data in Excel or MySQL and then create a report. Now the analyst is just the person who visualizes data and briefs the IT team. The BI analyst is left out, and the engineering department is left alone and overloaded with tasks. The important dialogue between business and IT is missing, and so is a fast, iterative process. No wonder most data projects fail, don't deliver ROI, or take way too long. Cross-functional teams suffer from speaking different languages. A BI analyst wants to use dbt, but can't. An engineer wants more support so they don't have to work through ad-hoc and redundant tasks all the time.

For this very reason, Matti and I have built an open-source tool that lets BI analysts build advanced data pipelines directly through an abstraction layer. BI analysts can build workflows from data blocks (data sources, with Airbyte under the hood), transformation blocks (dbt under the hood), and data science blocks, and then connect them to a dashboard. Here is an overview of all integrations: https://www.kuwala.io/data-libary.
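To make the block idea more concrete, here is a minimal sketch of a workflow as a graph of connected blocks, where a block runs only after everything upstream of it has run. This is not Kuwala's actual data model or API: the `Block` class, the `execution_order` function, and all block names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Block:
    """Hypothetical canvas block; not Kuwala's real class."""
    name: str
    kind: str  # e.g. "data", "transformation", or "export"
    upstream: List["Block"] = field(default_factory=list)


def execution_order(target: Block) -> List[str]:
    """Return block names so that every block comes after its upstream blocks."""
    order: List[str] = []
    seen = set()

    def visit(block: Block) -> None:
        if block.name in seen:
            return
        seen.add(block.name)
        for parent in block.upstream:
            visit(parent)
        order.append(block.name)

    visit(target)
    return order


# Two data blocks feed a join, which feeds a filter, which feeds a dashboard.
orders = Block("orders", "data")
customers = Block("customers", "data")
joined = Block("orders_with_customers", "transformation", [orders, customers])
recent = Block("recent_orders", "transformation", [joined])
dashboard = Block("sales_dashboard", "export", [recent])

print(execution_order(dashboard))
```

Running this prints the blocks in dependency order, starting with the two data sources and ending with the dashboard. On a real canvas the analyst only draws the connections; the ordering is something the tool can work out on its own.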

This creates a clean codebase under the hood. For example, dbt projects are created and built automatically. Our idea is to build for the data analytics space what Webflow is for web designers. An experienced engineer can customize the dbt models or create new ones and make them available on the Kuwala Canvas for no-coders. How to do this (for engineers) is described here: https://docs.kuwala.io/contributing/adding-transformations. Did I mention we are open source? 😅 It would be awesome to work on a PR together with you.

Back to the topic: this flexibility is absolutely necessary because every data project is different, grows over time, and needs to be customizable.

Our current project state covers the following points:

💯 The setup of Kuwala is now straightforward. Just clone the repo and pull the Docker images!

🗂 You can now easily connect to Snowflake, PostgreSQL, or BigQuery.

🧱 20+ transformations covering merging, aggregating, and filtering data. Under the hood, we generate clean dbt models! (https://www.kuwala.io/data-libary)

🛠 You can now also easily add a new transformation using dbt-core. (https://docs.kuwala.io/contributing/adding-transformations)
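To give a feel for what "a transformation block becomes a dbt model" can mean, here is a small sketch that renders the SQL of a dbt model for a simple filter. It is an illustration under assumptions, not Kuwala's real generator: `render_filter_model` and its parameters are hypothetical names, and the only dbt feature it relies on is the standard `{{ ref('...') }}` Jinja function for referencing another model.

```python
ALLOWED_OPERATORS = {"=", "!=", "<", "<=", ">", ">="}


def render_filter_model(source_model: str, column: str, operator: str, value: str) -> str:
    """Return the SQL text of a dbt model that filters rows of another model.

    Hypothetical helper for illustration; not part of Kuwala or dbt.
    """
    if operator not in ALLOWED_OPERATORS:
        raise ValueError(f"Unsupported operator: {operator}")
    # Escape single quotes so the value stays a valid SQL string literal.
    escaped = value.replace("'", "''")
    return (
        "select *\n"
        f"from {{{{ ref('{source_model}') }}}}\n"
        f"where {column} {operator} '{escaped}'\n"
    )


# A "filter" block on the canvas could carry exactly these four settings.
sql = render_filter_model("orders", "country", "=", "DE")
print(sql)
```

The returned text is what you would save as a `.sql` file in the `models` directory of a dbt project. An engineer who wants something more advanced can edit that file directly, which is the customization path described above.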

Want to give Kuwala a try? It's easy:

- Visit our GitHub repo: https://github.com/kuwala-io/kuwala

- Clone our repo: https://github.com/kuwala-io/kuwala.git

- cd to the root directory...

- Start Kuwala from there in the terminal: `docker-compose --profile kuwala up`

- Open http://localhost:3000 in your browser

We are now looking for more people to use it and build separate parts, so we can grow as a community. We are set. Are you?

Start hacking! Send us your issues! Start contributing! And if something doesn't work, join our Slack community and we will help you 🚀 https://kuwala-community.slack.com/ssb/redirect
