Organizations today are redesigning their infrastructure and development approaches. It is a continuous process of rethinking, unlearning, and relearning. With application-specific business transformation now common, technology teams are constantly on their toes to deliver regular upgrades to their software.

According to this report, 19% of respondents to the Global Survey believe that containerization is already playing a strategic role in driving their business growth.

Instagram first launched in 2010 as an iOS app and arrived on Android in April 2012. LinkedIn was first established as a website and, as it gained momentum, launched apps for both iOS and Android (2015) to increase its reach and improve the mobile experience.

As these cases show, successful products are reworked constantly to make them available on every platform. What shortens this process is following the “Write Once, Run Anywhere” philosophy for the code we write, and this is where application containerization comes into the picture. Application containerization can also be described as an alternative form of virtualization, one that is lighter and more flexible.

What is Application Containerization?

Containerization is the process of creating a packaged unit (a container) consisting of an application and its dependencies, such as files, libraries, and configuration files, so that it becomes an independent executable unit. In other words, a ‘container’ is an application bundled with its own runtime environment, which allows it to run reliably across multiple computing environments while the containers on a host partition a shared operating system.
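To make the idea of "an application plus its dependencies" concrete, here is a minimal sketch that renders a Dockerfile from a small application description. The `AppSpec` class, the `render_dockerfile` function, the `python:3.12-slim` base image, and the `flask` dependency are assumptions chosen for illustration; they are not taken from any particular project.

```python
from dataclasses import dataclass, field


@dataclass
class AppSpec:
    """Hypothetical description of an application to be packaged."""
    base_image: str                                         # runtime the container starts from
    source_dir: str                                         # application files to copy in
    dependencies: list[str] = field(default_factory=list)   # libraries to install
    env: dict[str, str] = field(default_factory=dict)       # configuration values
    command: list[str] = field(default_factory=list)        # process to start


def render_dockerfile(spec: AppSpec) -> str:
    """Render the spec as Dockerfile text: runtime, libraries, files, config, entry point."""
    lines = [f"FROM {spec.base_image}", "WORKDIR /app"]
    if spec.dependencies:
        # Assumes a Python application whose libraries are installed with pip.
        lines.append("RUN pip install --no-cache-dir " + " ".join(spec.dependencies))
    lines.append(f"COPY {spec.source_dir} /app")
    for key, value in spec.env.items():
        lines.append(f"ENV {key}={value}")
    if spec.command:
        quoted = ", ".join(f'"{part}"' for part in spec.command)
        lines.append(f"CMD [{quoted}]")
    return "\n".join(lines) + "\n"


spec = AppSpec(
    base_image="python:3.12-slim",
    source_dir=".",
    dependencies=["flask"],
    env={"APP_ENV": "production"},
    command=["python", "main.py"],
)
print(render_dockerfile(spec))
```

Everything the application needs is declared in one place, which is what lets the same image run unchanged on a laptop, a test server, or a cloud host.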

Containerized applications are becoming an increasingly important reason for adopting the cloud native development model. Comparing containers with virtual machines: a VM contains a full guest OS running on a virtual copy of the hardware, plus the application and its dependencies, whereas containers virtualize the OS (mostly Linux or Windows). A container therefore holds only the application and its dependencies, and runs by leveraging the resources of the host OS.

This makes containers lightweight and portable, and a viable option for developers addressing application management issues. It also lets teams upgrade and improve applications individually.

From a business perspective, there are several areas where containerization helps keep a business nimble in a dynamic market. Let’s discuss each of them briefly.

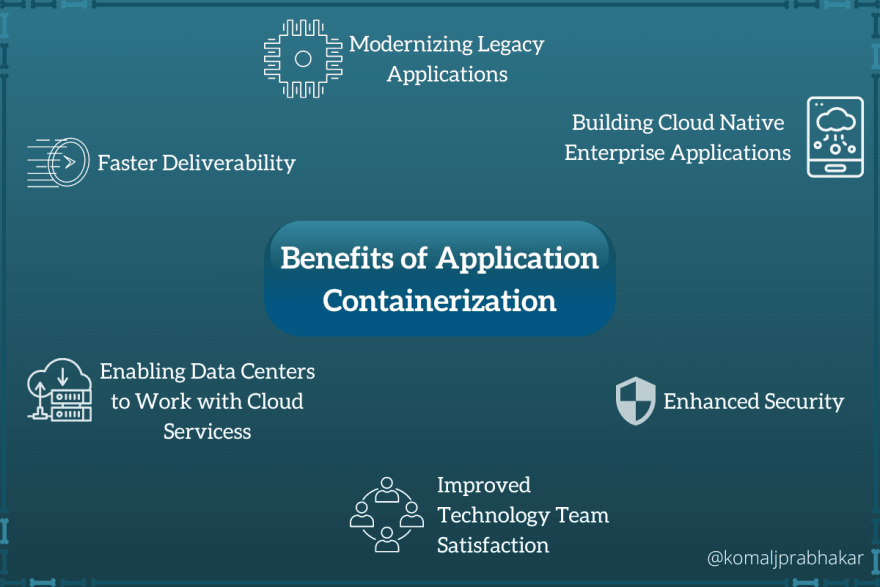

Benefits of Application Containerization

- Modernizing Legacy Applications

- Building Cloud Native Enterprise Applications

- Enabling Data Centers to Work with Cloud Services

- Faster Deliverability

- Enhanced Security

- Improved Technology Team Satisfaction

Modernizing Legacy Applications

Legacy applications are often monolithic. What makes them undesirable for a scaling modern business is that they are difficult and expensive to update and scale.

This difficulty can be attributed to their architectural complexity. In a monolithic architecture, all the components are integrated and shipped together, so if one component faces performance challenges, the entire application must be scaled up just to relieve that one demanding component. This is a clear waste of resources, both time and money.

If the architecture is instead composed of containers that each hold a single application component, each one can be developed and scaled as required, which gives us far more flexibility and more efficient use of resources.
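The waste described above can be shown with a small worked calculation. The component names, CPU sizes, and load figures below are hypothetical, and the model is deliberately simple: a monolith instance carries every component, so the busiest component dictates how many full instances are needed.

```python
import math

# Hypothetical components: CPU cores per replica, current load, and the load
# one replica can absorb (requests per second). All figures are illustrative.
COMPONENTS = {
    "catalog":  {"cpu": 2, "load": 80,  "capacity": 100},
    "checkout": {"cpu": 2, "load": 90,  "capacity": 100},
    "search":   {"cpu": 4, "load": 450, "capacity": 100},
}


def replicas_needed(component: dict) -> int:
    """Smallest number of replicas that can absorb the component's load."""
    return math.ceil(component["load"] / component["capacity"])


def monolith_cpu(components: dict) -> int:
    """Every instance contains all components, so the busiest one sets the count."""
    instances = max(replicas_needed(c) for c in components.values())
    cpu_per_instance = sum(c["cpu"] for c in components.values())
    return instances * cpu_per_instance


def containerized_cpu(components: dict) -> int:
    """Each component is scaled on its own, so only the busy one gets replicas."""
    return sum(replicas_needed(c) * c["cpu"] for c in components.values())


print(monolith_cpu(COMPONENTS))       # 40 cores: 5 instances x 8 cores each
print(containerized_cpu(COMPONENTS))  # 24 cores: 2 + 2 + 5 x 4
```

With these assumed numbers, only the search component needs five replicas, yet the monolith pays for five copies of everything.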

Building Cloud Native Enterprise Applications

What are cloud native applications?

Cloud native applications are built from discrete, reusable, single-function components known as microservices. They are designed to integrate easily into any cloud environment: they are built to run in the cloud and are structured to be scalable and platform agnostic.

Why cloud native applications?

The aim is to meet the demand for better application performance while adding flexibility and extensibility. Some further advantages:

- Compared to monolithic applications, they are easier to manage, and iterative improvements can be introduced through Agile and DevOps processes.

- Being composed of microservices makes it possible to add new functionality incrementally. Improvements are incorporated non-intrusively, with little or no disruption to the end-user experience.

- Scaling up and down is much easier with the elastic infrastructure of the cloud.

- New updates for a single function can be rolled out without disrupting the performance of other services.
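One common way to roll out an update without disruption is to replace the replicas of a service in small batches while the rest keep serving traffic. The following is a minimal simulation of that idea; the function name `rolling_update` and the parameter `max_unavailable` are hypothetical names for this sketch, not the API of any orchestrator.

```python
from typing import Iterator


def rolling_update(
    replicas: list[str], new_version: str, max_unavailable: int = 1
) -> Iterator[tuple[int, list[str]]]:
    """Replace replicas batch by batch.

    Yields (replicas still serving during the batch, state after the batch).
    """
    if max_unavailable < 1:
        raise ValueError("max_unavailable must be at least 1")
    current = list(replicas)
    for start in range(0, len(current), max_unavailable):
        batch = range(start, min(start + max_unavailable, len(current)))
        # Only the replicas in this batch are down; the others keep serving.
        serving = len(current) - len(batch)
        for index in batch:
            current[index] = new_version
        yield serving, list(current)


for serving, state in rolling_update(["v1"] * 4, "v2"):
    print(f"serving during step: {serving}, state after step: {state}")
```

With four replicas and one replica replaced at a time, three replicas are always available, so users of this service, and of every other service, see no outage.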

Though cloud native applications offer many advantages, managing them can be challenging and cumbersome. Maintaining them demands a robust DevOps pipeline with additional tool sets, which often replace traditional monitoring systems.

Enabling Data Centers to Work with Cloud Services

A data center is the central facility of an enterprise’s IT operations. Keeping it secure is essential to ensure the continuity of business operations. When enterprises migrate their workloads to cloud data centers, they no longer have to maintain that facility themselves: cloud service providers take responsibility for its upkeep and offer shared access to virtualized computing resources.

Advantages of Cloud Data Centers

- Reduced Capital Expenditures: We pay on an as-needed basis and can choose from a variety of subscription models to suit our specific needs.

- Efficient Use of Resources: Because public cloud services offer shared access, individual enterprises don’t have to build and maintain computing and storage resources just to cover occasional periods of peak user traffic.

- Managed Cloud Services: The cloud providers assume responsibility for maintaining the cloud environments and for securing critical resources.

- Global Network of Data Centers: Major cloud service providers have data centers located across multiple regions and continents. This gives customers a consistent data processing experience and helps them meet the security and compliance requirements of their own customers, irrespective of geographic location.

- Rapid Deployment and Scalability: Whether rolling out new updates or scaling an existing application to meet higher demand, the cloud makes it possible in a fraction of the time it would take in on-premise data centers.
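The point about occasional peak traffic in the list above can be illustrated with simple arithmetic. The demand profile and the price are hypothetical, and the sketch assumes the same price per server-hour in both cases, which real pricing will not match exactly.

```python
# Hypothetical demand over one day: servers needed in each of the 24 hours.
hourly_demand = [2] * 8 + [4] * 8 + [10] * 2 + [4] * 6

PRICE_PER_SERVER_HOUR = 1.0  # assumed flat rate, for illustration only


def provisioned_for_peak(demand: list[int], price: float) -> float:
    """On-premise style: own enough servers for the busiest hour, all day long."""
    return max(demand) * len(demand) * price


def pay_as_needed(demand: list[int], price: float) -> float:
    """Cloud style: pay only for the servers actually used in each hour."""
    return sum(demand) * price


print(provisioned_for_peak(hourly_demand, PRICE_PER_SERVER_HOUR))  # 240.0
print(pay_as_needed(hourly_demand, PRICE_PER_SERVER_HOUR))         # 92.0
```

In this made-up profile the peak lasts two hours, yet sizing for it means paying for ten servers around the clock.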

Faster Deliverability

When containers are measured, monitored, and scaled individually, we avoid disruptions to the end-user experience. Moreover, because each service is treated separately, we can scale specific services to exactly the level we require.
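Scaling each service from its own metric can be sketched with a simple ratio rule: the replica count grows or shrinks in proportion to how far the observed metric is from its target. This mirrors the rule documented for the Kubernetes Horizontal Pod Autoscaler, but the sketch leaves out its tolerances and stabilization behavior, and the service names and numbers are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Ratio rule: ceil(current_replicas * current_metric / target_metric), clamped."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical services: (current replicas, average CPU utilization in percent).
services = {"search": (4, 90.0), "checkout": (2, 30.0)}
TARGET_CPU = 60.0

for name, (replicas, cpu) in services.items():
    print(name, desired_replicas(replicas, cpu, TARGET_CPU))
```

Here the overloaded search service grows from 4 to 6 replicas while the idle checkout service shrinks from 2 to 1, each decision made independently of the other.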

Enhanced Security

When functionalities are separated into different containers, each running in its own self-contained environment, this adds a layer of security. Put simply, if one container is compromised, the isolation between containers limits how far the intrusion can spread to the others. On top of that, containers are isolated from the host operating system and interact with the host’s computing resources only in limited ways, which makes deployments more secure by design.

Improved Technology Team Satisfaction

When every update or piece of new code can be delivered to customers easily, without disrupting the entire application or affecting other functionality, the team gains time and is encouraged to keep innovating.

Conclusion

Though application containerization offers many advantages over a traditional monolithic architecture, implementing it comes with several challenges.

- Designing and Maintaining Templates for Container Creation: In the long run, when container adoption expands beyond simple or regular use cases, these templates become the roadmap for simplified implementation.

- Expansion of the Governance Model: Application layers are often shared among different containers. On one hand this means efficient use of resources, but on the other it makes the containers vulnerable to interference and security breaches.

- Choosing the Right Open-Source Container Orchestration Platform: The container orchestrator is at the forefront of setting up and managing a containerized application. If it is not chosen wisely, deployments can be slow and error-prone.

- Integration with the DevOps Environment: These containers are maintained through the DevOps methodology, and incorporating them into the DevOps lifecycle requires knowledge and skill.

A container orchestrator is a necessity when dealing with a containerized application. It simplifies the handling and management of containers by automating installation and scaling, and it assists in rolling out new features and bug fixes.
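At its core, this automation is often described as a control loop: compare the desired number of containers with what is actually running and correct the difference. The sketch below is a simplified illustration under that assumption; the `Container` class, the `reconcile` function, and the `web-N` naming are hypothetical and do not represent how any specific orchestrator is implemented.

```python
from dataclasses import dataclass
from itertools import count
from typing import Iterator


@dataclass
class Container:
    name: str
    healthy: bool = True


def reconcile(desired: int, running: list[Container], ids: Iterator[int]) -> list[Container]:
    """One pass of the loop: drop failed containers, start replacements, trim extras."""
    alive = [c for c in running if c.healthy]
    while len(alive) < desired:
        alive.append(Container(name=f"web-{next(ids)}"))
    return alive[:desired]


ids = count(1)
pool = reconcile(3, [], ids)            # initial installation
print([c.name for c in pool])           # ['web-1', 'web-2', 'web-3']

pool[1].healthy = False                 # simulate a crash
pool = reconcile(3, pool, ids)          # the failed container is replaced
print([c.name for c in pool])           # ['web-1', 'web-3', 'web-4']

pool = reconcile(2, pool, ids)          # scale down
print([c.name for c in pool])           # ['web-1', 'web-3']
```

The operator only states the desired count; installation, recovery, and scaling all fall out of the same comparison.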

The most popular choice for this is Kubernetes. The commonly cited reasons are:

- fully open-source

- complete granular control over scaling of each container

- and lastly, its most prominent feature: support for load balancing and self-healing.

However, the complexity and distributed nature of Kubernetes make it tough to manage. An intuitive platform that offers seamless management of Kubernetes clusters looks quite convincing when aiming for an automated work environment. Such a platform not only enables smooth delivery and maintenance of containerized applications but also helps in building custom automation specific to your business needs.
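The load balancing mentioned above can be illustrated with a health-aware round-robin picker: requests rotate across the replicas of a service, and replicas marked unhealthy are skipped. This is a minimal sketch and not Kubernetes code; the `RoundRobinBalancer` class and the `pod-a`, `pod-b`, `pod-c` names are hypothetical.

```python
class RoundRobinBalancer:
    """Rotate requests across backends, skipping any that are marked unhealthy."""

    def __init__(self, backends: list[str]) -> None:
        self.backends = list(backends)
        self.healthy = set(backends)
        self._next = 0

    def mark_unhealthy(self, backend: str) -> None:
        self.healthy.discard(backend)

    def mark_healthy(self, backend: str) -> None:
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self) -> str:
        # Try each backend at most once per call, starting from the rotation point.
        for _ in range(len(self.backends)):
            candidate = self.backends[self._next]
            self._next = (self._next + 1) % len(self.backends)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends available")


balancer = RoundRobinBalancer(["pod-a", "pod-b", "pod-c"])
print([balancer.pick() for _ in range(4)])   # ['pod-a', 'pod-b', 'pod-c', 'pod-a']

balancer.mark_unhealthy("pod-b")
print([balancer.pick() for _ in range(3)])   # ['pod-c', 'pod-a', 'pod-c']
```

Traffic keeps flowing to the remaining replicas while the failed one is replaced, which is how load balancing and self-healing work together.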

Have a look at this Kubernetes Cheatsheet

Big tech companies such as Twitter, Netflix, and Amazon have adopted containers. This should not be seen as something reserved for tech giants, but as an example of how containerization helps in scaling and expanding infrastructure.
