In a perfect world, systems or automated tests would never fail – everything in software test reports would, literally, “go green.”

In reality, tests do fail. And to add to the stress, they rarely fail for the same reason twice.

There’s something different every time: bugs in the application under test (AUT), errors in the test case itself, or infrastructure problems that cause timeouts during execution. This is where software test reports come into play, acting as a trusted companion for QA engineers and developers as they search for the root cause and make follow-up fixes.

Even so, as the digital economy accelerates and the demand for quality software grows, the traditional approach of skimming every line of a log is no longer sufficient. Read on to understand when traditional execution logs need an upgrade to a Smart Test Report.

Software Testing Logs: What Do They Report?

In its most basic form, a software test log reports two kinds of information about a run against the application under test (AUT): the Execution Environment and the Test Execution Log.

Execution Environment

This section summarizes the key details of the environment:

- Host Name: account or administrator ID of the machine in use

- OS: the operating system that the machine is running on

- Browser: the web browser, and its version, selected to execute the tests

Test Execution Log

The Execution Log gives the results of your tests at the Test Suite and Test Case levels, with each status coded in a traffic-light color scheme: Passed = Green, Failed = Red, Incomplete = Yellow and Skipped = Grey.

At the Test Suite level, results are shown as an overview that summarizes the:

- Full Name: title or name of a Test Suite

- Start / End / Elapsed: the exact date, start and end times, and the total time taken to run all of the Test Cases in a Test Suite

- Status: total number of Test Cases in a Test Suite and their final results

In contrast, the Test Case area goes into the specifics of each Test Case in a Test Suite:

- Full Name: title or name of a Test Case

- Description: notes about the purpose or objective of a Test Case

- Start / End / Elapsed: the date, start and end times, and the total time taken to execute all of the Test Steps in a Test Case
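
To make the structure above concrete, here is a minimal Python sketch of how such a log could be modeled. It is not Katalon’s actual report format: the class names (`Status`, `CaseResult`, `SuiteResult`), the field names and the sample "Login Suite" data are all assumptions made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import Enum
from typing import Dict, List


class Status(Enum):
    # Traffic-light colors as described in the text (hypothetical encoding).
    PASSED = "green"
    FAILED = "red"
    INCOMPLETE = "yellow"
    SKIPPED = "grey"


@dataclass
class CaseResult:
    full_name: str
    description: str
    start: datetime
    end: datetime
    status: Status

    @property
    def elapsed(self) -> timedelta:
        return self.end - self.start


@dataclass
class SuiteResult:
    full_name: str
    cases: List[CaseResult] = field(default_factory=list)

    @property
    def start(self) -> datetime:
        return min(case.start for case in self.cases)

    @property
    def end(self) -> datetime:
        return max(case.end for case in self.cases)

    @property
    def elapsed(self) -> timedelta:
        return self.end - self.start

    def status_summary(self) -> Dict[str, int]:
        # Total number of Test Cases plus a count per final status.
        counts = Counter(case.status for case in self.cases)
        summary = {"TOTAL": len(self.cases)}
        summary.update({status.name: counts[status] for status in Status})
        return summary


# Hypothetical sample data.
t0 = datetime(2024, 1, 1, 9, 0, 0)
suite = SuiteResult("Login Suite", [
    CaseResult("Valid login", "User signs in with correct credentials",
               t0, t0 + timedelta(seconds=12), Status.PASSED),
    CaseResult("Invalid password", "User sees an error message",
               t0 + timedelta(seconds=12), t0 + timedelta(seconds=20), Status.FAILED),
])

print(suite.elapsed)           # 0:00:20
print(suite.status_summary())  # {'TOTAL': 2, 'PASSED': 1, 'FAILED': 1, 'INCOMPLETE': 0, 'SKIPPED': 0}
```

Deriving the suite-level Start, End and Elapsed values from the Test Cases keeps the two levels consistent, but a real log may record them independently.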

What Traditional Test Logs Lack

To be upfront, if you’re just looking to do some on-the-fly fixes for a failed step, scrolling down to that red line is already enough. As for tracing and monitoring test performance over time, you’d have to manually enter every single execution log into a spreadsheet or CSV file.

A process like that only works in the short term, or when your project has just a few test suites to handle. What if your client and stakeholders ask for a much more complex application, built on user stories with a large number of features to develop and tests to design?

Your QA team now has hundreds or even thousands of Test Suites to manage. And so far we’re only talking about Test Suites. Break that down into the volume of Test Cases and Test Logs? You do the math.

On top of that, reporting back to your project managers or stakeholders on tests that you ran months ago would be a real hassle to trace back.

To help you picture how manual software test reporting will impact your team’s productivity, we’ve made a shortlist of the expected drawbacks.

- Slower Root Cause Analysis: For every Test Suite, Test Case or Test Step added, the lines of logs to go through multiply. Working out whether a test failed due to an actual bug or was only a false positive takes more and more time.

- Pressure on disk space: Exporting and saving reports as PDF, HTML or CSV files on your local machine is meant for one-time use. If this is done long-term, your hard drive will run out of storage much faster.

- Miscommunication: Each member of the team is occupied with their own tasks and might miss the report file you’ve sent. Miscommunication then becomes much more frequent, leading to release delays.

- Incomplete picture of quality and traceability: With individual test reports, bug reports and requirements documents scattered all over, project managers will have a harder time assessing build quality and release readiness.

Smart Test Reporting – Katalon TestOps to Do QA Like a Boss

Katalon TestOps is a comprehensive test orchestration platform that harnesses the power of analytics to enable QAs, developers and project managers to make informed decisions.

Intuitive Dashboard

The TestOps Dashboard offers teams a helicopter view of release-essential metrics.

- Monitor project pace and progress with a delivery-date countdown, dates and a build-specific pass/fail ratio for each version

- View test activities based on various timeframes

- Visualize trends in execution duration and in passed/failed tests

Collaborative and Centralized Workspace

Regardless of the testing platform, CI/CD system or frameworks in your team’s toolchain, Jira integration is available to:

- Synchronize and gather Test Run data: Status, ID, Name, Duration and Date, the number of Pass/Fail/Exempted/Incomplete Test Cases, and Assignee/Author

- Instantly find the exact Test Suite, Test Suite Collection, Status, Profile, Assignee or ID you want

Smart Test Reports

Re-run Report

Retry Failed Execution Immediately is a feature in Katalon Studio, the market-leading test automation tool to create, execute and maintain low-code tests, that helps spot unreliable results in software testing. In short, it allows you to set retry logic and re-run failed Test Cases a set number of times.

As a built-in reporting tool in Katalon Studio, TestOps automatically stores all of Studio’s re-run test results and consolidates them into a final Pass/Fail status.
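
The general idea of retrying a failed test and consolidating the attempts into one final status can be sketched in a few lines of Python. This is only an illustration of the concept, not how Katalon Studio or TestOps implement it: `run_with_retries`, its `max_retries` parameter and the rule "the last attempt decides the final status" are assumptions.

```python
from typing import Callable, List, Tuple


def run_with_retries(test: Callable[[], bool], max_retries: int) -> Tuple[str, List[bool]]:
    """Run `test` once, then re-run it immediately while it fails,
    up to `max_retries` extra times.

    Returns the consolidated final status and the outcome of every attempt.
    """
    attempts: List[bool] = []
    for _ in range(1 + max_retries):
        passed = test()
        attempts.append(passed)
        if passed:
            break  # stop retrying as soon as the test passes
    # Assumption: the last attempt decides the final status.
    final_status = "PASSED" if attempts[-1] else "FAILED"
    return final_status, attempts


# Hypothetical unreliable test: fails twice, then passes.
outcomes = iter([False, False, True])
status, attempts = run_with_retries(lambda: next(outcomes), max_retries=3)
print(status)    # PASSED
print(attempts)  # [False, False, True]
```

Keeping the per-attempt outcomes alongside the final status matters: a test that only passes on its third attempt is exactly the kind of unreliable result worth flagging.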

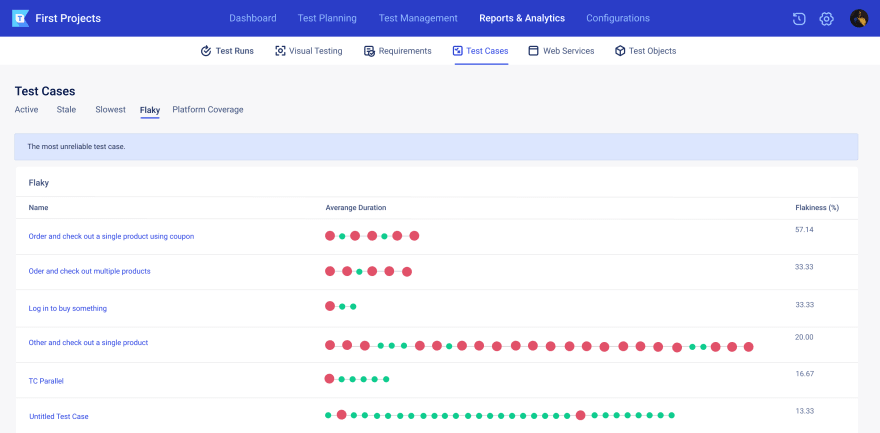

Flaky Test Report

A flaky test is a test that sometimes passes and sometimes fails with no code modifications. This can be a pain for your testing team, as it takes a significant amount of time and effort to retrigger whole builds on CI.

TestOps calculates the flakiness rate with the formula: _Flakiness % = (# of times a test result has changed / total # of test results) × 100._
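
As a worked example of the formula, here is a minimal Python sketch. It assumes that "a test result has changed" means a run whose result differs from the run immediately before it; the function name `flakiness_percent` and the sample history are hypothetical, and TestOps may count changes differently.

```python
from typing import Sequence


def flakiness_percent(results: Sequence[str]) -> float:
    """Flakiness % = (# of result changes / total # of results) * 100."""
    if not results:
        return 0.0
    # Count runs whose result differs from the previous run (assumed definition).
    changes = sum(1 for prev, cur in zip(results, results[1:]) if prev != cur)
    return changes / len(results) * 100


# Four runs of the same test with no code changes in between.
history = ["PASSED", "FAILED", "PASSED", "PASSED"]
print(flakiness_percent(history))  # 50.0
```

In this history the result changes twice (PASSED to FAILED, then FAILED to PASSED) across four results, so the flakiness rate is 2 / 4 × 100 = 50%.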

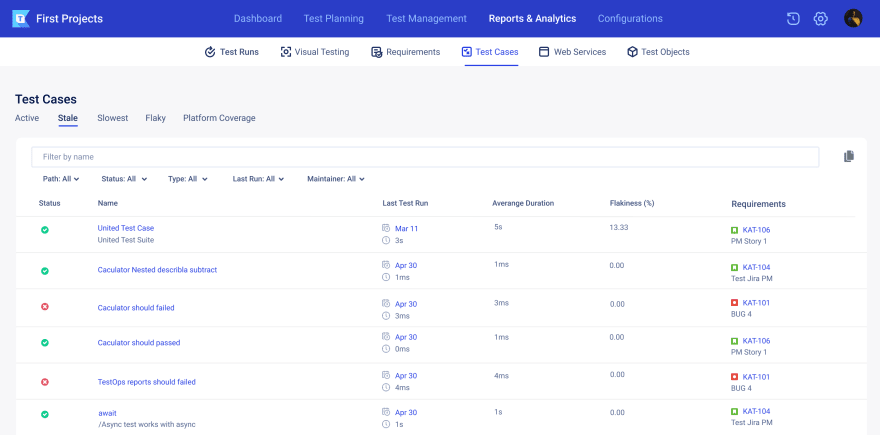

Stale Test Report

A stale test is an outdated or obsolete test case. TestOps organizes stale tests in one place, so that running them does not introduce bugs into the testing cycle.

You can review those stale tests, decide whether each one needs to be updated or retired from the testing cycle, and make instant adjustments.

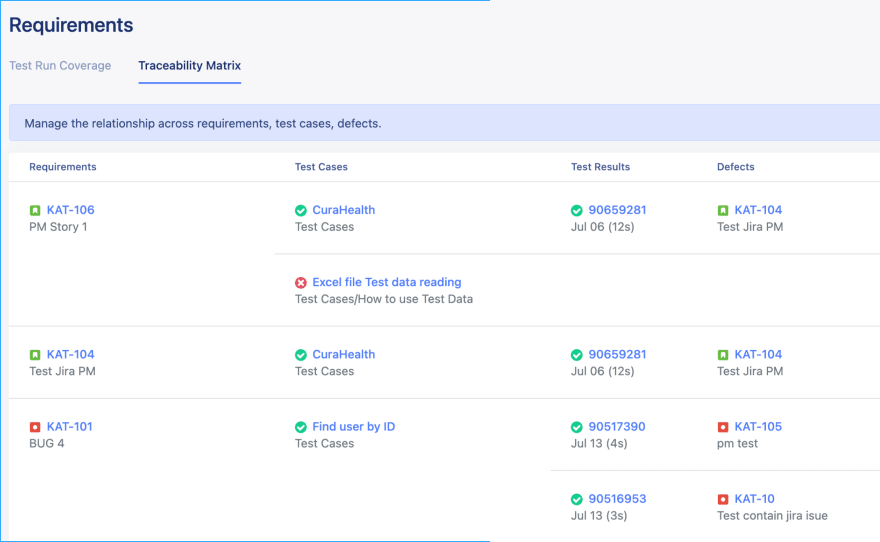

Traceability Matrix

To perform effective test monitoring and control, traceability should be established and maintained throughout the test process between each element of the test basis and the various test work products.

TestOps makes tracing quicker and easier with Jira integration, synchronizing data from test requirements through to test conditions, test cases and test results.
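
Conceptually, a traceability matrix is just a mapping from each requirement to the tests that cover it and their latest results. The Python sketch below illustrates that idea only; it does not use the TestOps or Jira APIs, and the function names, the "NOT RUN" placeholder and the `REQ-…` / `TC-…` identifiers are all hypothetical.

```python
from typing import Dict, List


def build_traceability_matrix(
    requirement_to_tests: Dict[str, List[str]],
    latest_results: Dict[str, str],
) -> Dict[str, Dict[str, str]]:
    """Map each requirement to its test cases and their latest results."""
    return {
        requirement: {test: latest_results.get(test, "NOT RUN") for test in tests}
        for requirement, tests in requirement_to_tests.items()
    }


def uncovered_requirements(matrix: Dict[str, Dict[str, str]]) -> List[str]:
    """Requirements with no test case linked to them."""
    return [requirement for requirement, tests in matrix.items() if not tests]


# Hypothetical requirement and test case IDs.
links = {
    "REQ-1": ["TC-login-valid", "TC-login-invalid"],
    "REQ-2": ["TC-checkout"],
    "REQ-3": [],
}
results = {"TC-login-valid": "PASSED", "TC-login-invalid": "FAILED"}

matrix = build_traceability_matrix(links, results)
print(matrix["REQ-1"])                 # {'TC-login-valid': 'PASSED', 'TC-login-invalid': 'FAILED'}
print(matrix["REQ-2"])                 # {'TC-checkout': 'NOT RUN'}
print(uncovered_requirements(matrix))  # ['REQ-3']
```

Even in this toy form, the matrix answers the two questions a project manager usually asks: which requirements have no tests at all, and which ones have tests that failed or never ran.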

Smart Test Reporting – Start Now to See Long-term Results

Failures don’t always occur in the same way or for the same reason. When an issue arises, software test reports may be relied upon to help software engineers and/or developers find the cause of the problem and implement fixes.

Standard execution logs may come in handy, but to keep up with the ever-changing digital race, consider a Smart Test Reporting solution like Katalon TestOps. By integrating with common testing frameworks, TestOps acts as a robust orchestration solution, giving teams comprehensive access to their tests, resources, and environments.

The post Smart Test Reporting appeared first on Katalon Solution.
