This is an opportunity to analyze historical bicycle trip data in order to identify trends. Understanding how casual riders behave differently from paid riders with annual membership is important.

This analysis will help executives to make decisions about marketing programs and strategies to convert casual riders to riders with annual memberships.

Background:

Cyclistic is a bike-share program that features more than 58,000 bicycles and 600 docking stations. Cyclistic sets itself apart by also offering reclining bikes, hand tricycles, and cargo bikes, making bike-share more inclusive to people with disabilities and riders who can't use a standard two-wheeled bike. The majority of riders opt for traditional bikes; about 8% of riders use the assistive options. Cyclist users are more likely to ride for leisure, but about 30% use them to commute to work each day.

Stakeholders and Team

Lily Moreno: Director of Marketing

Marketing Analytics Team: Team responsible for collecting, analyzing, and reporting data.

Cyclistic Executive Team: The notoriously detailed-oriented executive team will decide whether to approve the recommended marketing program.

About the Company:

Cyclistic has 3 different pricing tiers: single-ride passes, full-day passes and annual memberships. Customers who purchase single ride or full day passses are referred to as causal riders. Customer who purchase annual memberships are Cyclistic members.

Cyclistic's finance analysts have determined that annual members are much more profitable than casual riders. Moreno believes that maximizing the number of annual members will be key to future growth. Rather than creating a marketing campaign that targets all new customers, Moreno believes there is a very good chance to convert casual riders into members.

Ask

Three questions will guide the future marketing program:

1) How do annual members and casual riders use Cyclistic bikes differently?

2) Why would casual riders buy Cyclistic annual memberships

3) How can Cyclistic use digital media to influence casual riders to become members.

1st step- Importing the data

Before any analysis or processing of the data can be done, we first need to import the data. I am choosing to use R for this analysis as the data is too large for spreadsheets. Below we are using the requisite packages and library in order to import the data.

We have imported all the requisite files. However, they are all still separated into individual months. We need them in one singular table.

yearly_bike_data <- rbind(Jan_5_21, Feb_4_2021,March_9_2021, April_8_2021,May_7_2021,June_11_2021, July_15_2021,Aug_11_2020,Sept_4_2020,Oct_13_2020,Nov_4_2020, Dec_4_2020)

Let us now install all our requisite packages to start processing the data

install.packages("pacman")

library(pacman)

install.packages("tidyverse")

library(tidyverse)

install.packages("janitor")

library(janitor)

dim(yearly_bike_data)

We have now imported, and combined all the data from the last 12 months of bike data we have available.

Data Exploration

Now that we combined all the data, we should explore it before cleaning.

glimpse(yearly_bike_data)

Clean the data

We have lots of "NAs" in our data. Let us keep only the completed rows.

yearly_bike_data <- yearly_bike_data[complete.cases(yearly_bike_data), ] # Keep only the complete rows

dim(yearly_bike_data)

We have now removed 434,648 rows that had NAs. This will five us a more accurate data set with which to make calculations with:

We now need to make some of our columns more accessible:

#The below code is determining the ride length in seconds.

yearly_bike_data <- yearly_bike_data %>% mutate(ride_length = yearly_bike_data$ended_at - yearly_bike_data$started_at)

head(yearly_bike_data)

The above code gives us the length of each bike ride in seconds. Let us now determine the ride length in mins and hours by using the seconds_to_period function:

install.packages("lubridate")

library(lubridate)

yearly_bike_data <- yearly_bike_data %>% mutate(ride_length_time = seconds_to_period(yearly_bike_data$ride_length))

A useful thing to add would be the days of the week. Let us extrapolate and add that data to our data set:

yearly_bike_data <- yearly_bike_data %>% mutate(day_of_week = weekdays(yearly_bike_data$started_at))

Now let us see what columns we have available to us:

colnames(yearly_bike_data)

We now need to clean up the started_at and ended_at columns by separating the date and time. This will make for easier analysis further on. In order to do this, we will use the separate function.

yearly_bike_data <- separate(yearly_bike_data,"started_at",into=c('start_date','start_time'), sep=' ')

yearly_bike_data <- separate(yearly_bike_data,"ended_at",into=c('end_date','end_time'), sep=' ')

We now have 4 new columns, start_date, start_time,end_date and end_time.

Now we have added 4 new columns to the table. We need to do further cleaning of the data to ensure data integrity.

min_ride <- min(yearly_bike_data$ride_length)

min_ride

I used the min function on the ride_length column and noticed that the shortest ride was in the negatives. Upon further investigation, I noticed that several rows contained negative numbers in the ride_length column:

min_ride <- arrange(yearly_bike_data,ride_length)

head(min_ride)

Based on the tibble above we can see that the ride_length column that has several thousand negative values, because, for a lot of cases, the rides appeared to be started in Dec 2020,but ending in November 2020. Since we can't time travel yet, we will need to remove those rows for the sake of data integrity:

yearly_bike_data <- yearly_bike_data[yearly_bike_data$ride_length > 0, ]

yearly_bike_data

In the code above, we removed all rows that contained time below 0. Now we run the minimum function again to make sure it worked.

min_ride <- min(yearly_bike_data$ride_length)

min_ride

After removing the negative numbers in the ride_length columns we now have a minimum time of 1 second.

Running this code resulted in 10,952 rows of inaccurate being removed. We still have a substantial amount of data to work with however, since we have over 4 million rows.

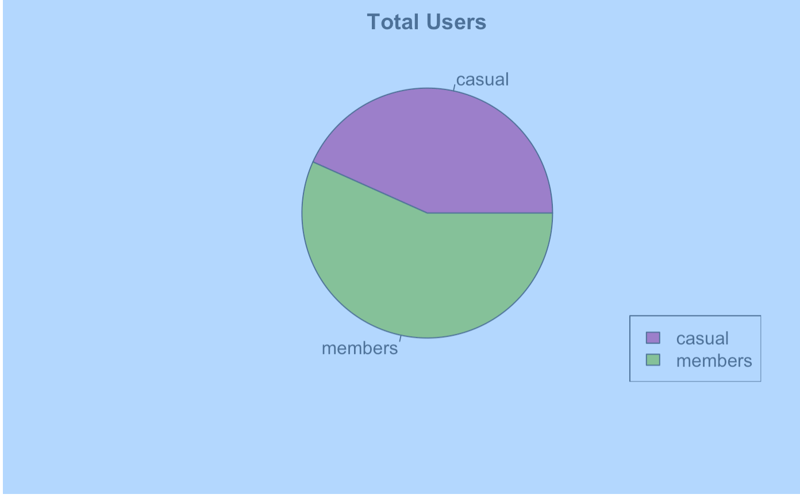

Let us now determine how many people use Cyclistic in total:

In order to determine the total number of users we are going to count the users and use this information to fill out a pie chart:

table(yearly_bike_data['member_casual'])

Casual:1,724,745 Members:2,290,711

total_number_of_users <- c(1926094, 2523705)

pie(total_number_of_users)

pie_labels <- c("casual", "members")

colors <- c("#C5007C", "#8CC702")

pie(total_number_of_users, label=pie_labels, main = "Total Users", col=colors)

legend("bottomright", pie_labels, fill = colors)

From the code and visualization above, we can see that we currently have more members than casual users:

Let us create a months column as well:

yearly_bike_data <- yearly_bike_data %>% mutate(Months =

case_when(

month(yearly_bike_data$start_date)==01 ~ "Jan",

month(yearly_bike_data$start_date)==02~"Feb",

month(yearly_bike_data$start_date)==03~"March",

month(yearly_bike_data$start_date)==04~"April",

month(yearly_bike_data$start_date)==05~"May",

month(yearly_bike_data$start_date)==06~"June",

month(yearly_bike_data$start_date)==07~"July",

month(yearly_bike_data$start_date)==08~"Aug",

month(yearly_bike_data$start_date)==09~"Sept",

month(yearly_bike_data$start_date)==10~"Oct",

month(yearly_bike_data$start_date)==11~"Nov",

month(yearly_bike_data$start_date)==12~"Dec"

)

)

colnames(yearly_bike_data)

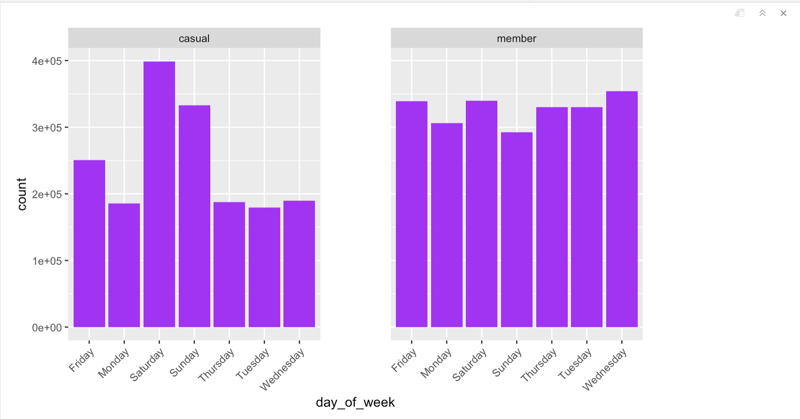

Let us plot a bar chart to easily show stakeholders the most popular day of biking:

#for both casual and members

ggplot(data=yearly_bike_data) + geom_bar(mapping=aes(x=day_of_week), fill="purple")+facet_wrap(~member_casual) +

theme(panel.spacing = unit(4, "lines"), axis.text.x=element_text(angle=45, hjust=1))

Interpretation of Data for Weekdays

From the bar charts above,we can answer one of our main questions: "How do annual members and casual riders use Cyclistic bikes differently?"

It is clear that casual users of Cyclistic use the app much more on weekends than the rest of the week. The members, however, tend to use the app more uniformly throughout the entire week. This suggests that members are more likely to use the service as their main form of transport, while casual users of the app tend to use it more for recreational purposes (as highlighted by the dramatic increase of use on the weekend for that group)

To aid in our analysis further down the road, I decided to to break the 12 months worth of data into the 4 seasons:

yearly_bike_data <- yearly_bike_data %>% mutate(Seasons =

case_when(

month(yearly_bike_data$start_date)==12|

month(yearly_bike_data$start_date)==01|

month(yearly_bike_data$start_date)==02 ~ "Winter",

month(yearly_bike_data$start_date)==03|

month(yearly_bike_data$start_date)==04|

month(yearly_bike_data$start_date)==05 ~ "Spring",

month(yearly_bike_data$start_date)==06|

month(yearly_bike_data$start_date)==07|

month(yearly_bike_data$start_date)==08 ~ "Summer",

month(yearly_bike_data$start_date)==09|

month(yearly_bike_data$start_date)==10|

month(yearly_bike_data$start_date)==11 ~ "Fall"

)

)

colnames(yearly_bike_data)

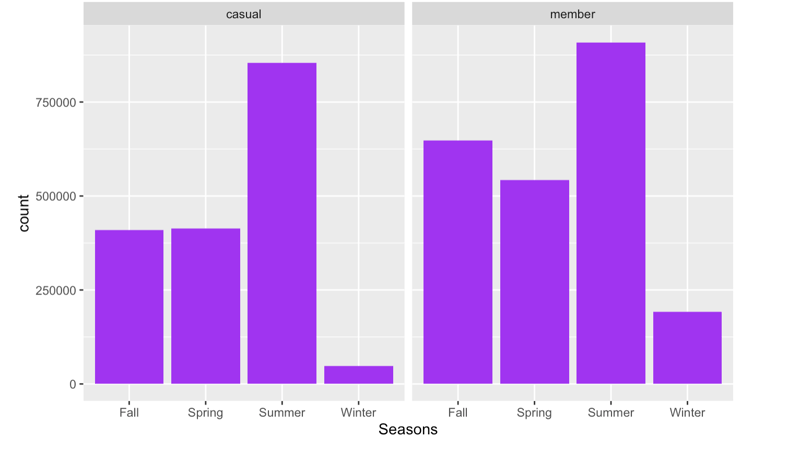

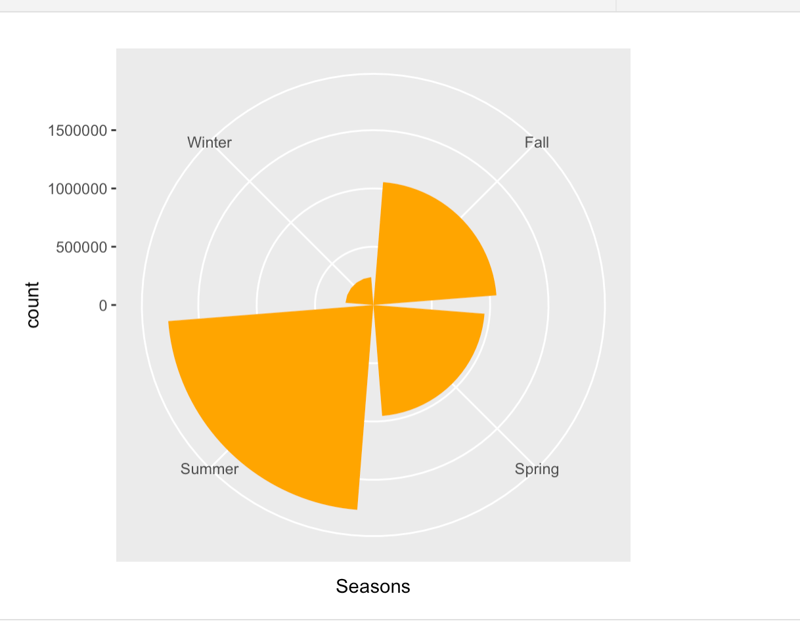

Importance of Seasons for biking

We will be now be able to use the Seasons column to determine which season is the most popular for biking, and therefore, help determine a marketing strategy that will take this into consideration:

ggplot(data=yearly_bike_data) + geom_bar(mapping=aes(x=Seasons), fill="purple") + facet_wrap(~member_casual)

Based on the charts above, we can infer that, for both groups, Winter is the least popular month for biking, while Summer is the most popular month for biking.

Members tend to bike more in the fall than casual users.

Let us now do a count of the number of rows and columns we have currently:

dim(yearly_bike_data)

We have 4015456 rows and 19 columns of data.

Going back to our business requirements, the first ask was to determine "How do annual members and casual riders use Cyclistic bikes differently. We can also answer this question by finding the average, minimum , and maximum ride_length of each group of riders.

ride_summary <-

yearly_bike_data %>%

group_by(member_casual) %>%

summarize(average_ride_time=mean(ride_length),

min_ride_length=min(ride_length),

max_ride_length=max(ride_length))

ride_summary

Analysis of Findings:

Though we have more members than casual users of the app, casual users actually ride for longer than members. The average ride time of a

casual user is 2511.95 seconds while that of a member is 891.89 seconds. The min ride length is the same at 1 second, and the max ride length of a casual user is 3,356,649 seconds and the max ride length of a member is 2,005,282 seconds.

We can also find the mode of day_of_week to show which day is the most popular for biking.

names(sort(-table(yearly_bike_data$day_of_week)))[1]

From this code, we can tell that Saturday is the most popular day of the week for biking.

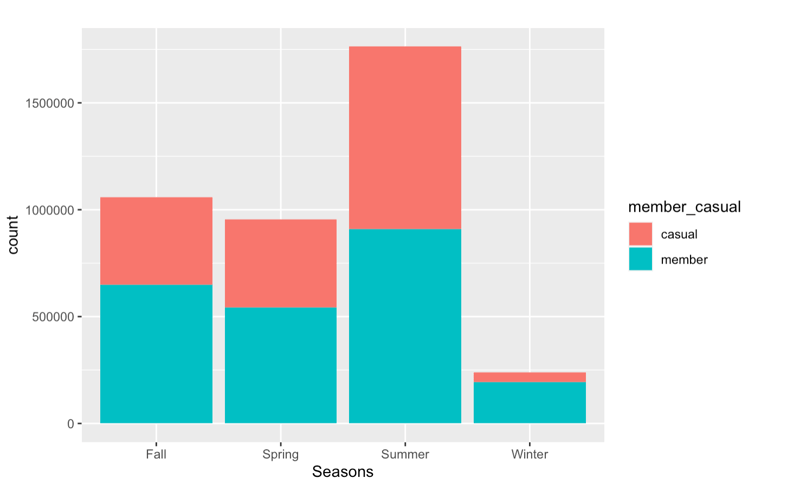

Now, we want to find out which season is the most popular for biking:

(names(sort(-table(yearly_bike_data$Seasons)))[1])

The mode once again tells us that Summer is the most popular month for biking. Let us find out the second most popular month:

names(sort(-table(yearly_bike_data$Seasons)))[2]

The second most popular season for biking is fall. Let's check the third most popular season for biking:

names(sort(-table(yearly_bike_data$Seasons)))[3]

Spring is the third most popular season, which means winter is the least popular season for biking.

Let us make a chart to show this visually:

ggplot(data=yearly_bike_data)+

geom_bar(mapping=aes(x=Seasons,fill=member_casual))

ggplot(data=yearly_bike_data)+

geom_bar(mapping=aes(x=Seasons), fill="orange") +

coord_polar("x")

The pie chart above shows how little the Cyclistic app is used in winter, as opposed to the rest of the months.

Based on my analysis of the data, I have some recommendations for the Cyclistics marketing team:

1) Converting casual users to members is a good idea, and should be moved forward with since the usage of the app is similar among groups.

2) I would increase marketing to the casual groups during the summer and point how much they would be saving if they were to become members. Since summer is when this group bikes the most, then they would be spending the most money on the app during this time and would probably appreciate a way to lower costs. For all users, I would incorporate a Spotify-esque approach where I would send them a "year in review" on how they used the app. This could include personalized details like: how much calories they burned for the year using Cyclistic, how much money saved by riding (instead of driving) etc.

3)For winter months I would give a discount to encourage members to continue using the app, and to encourage casual users to become users during this time.

4)The data also showed that casual users used the app more so on the weekends than on weekdays, where members were more likely to use the app every day. I would encourage casual users to use the app more in the weekdays by offering discounts on the weekdays, which would then translate to them using the app more and so being more inclined to become members.

Top comments (0)