(Heroku and Salesforce - From Idea to App, Part 9)

This is the ninth article documenting what I’ve learned from a series of 13 Trailhead Live video sessions on Modern App Development on Salesforce and Heroku. In these articles, we’re focusing on how to combine Salesforce with Heroku to build an “eCars” app—a sales and service application for a fictitious electric car company (“Pulsar”). eCars allows users to customize and buy cars, service techs to view live diagnostic info from the car, and more. In case you missed my previous articles, you can find the links to them below.

- Modern App Development on Salesforce and Heroku

- Jumping into Heroku Development

- Data Modeling in Salesforce and Heroku Data Services

- Building Front-End App Experiences with Clicks, Not Code

- Custom App Experiences with Lightning Web Components

- Lightning Web Components, Events and Lightning Message Service

- Automating Business Processes Using Salesforce Flows and APEX

- Scale Salesforce Apps Using Microservices on Heroku

Just as a quick reminder: I’ve been following this Trailhead Live video series to brush up and stay current on the latest app development trends on these platforms, which are key for my career and business. I’ll be sharing each step for building the app, what I’ve learned, and my thoughts from each session. These series reviews are for my own edification as well as for others who might benefit from this content.

The Trailhead Live sessions and schedule can be found here:

https://trailhead.salesforce.com/live

The Trailhead Live sessions I’m writing about can also be found at the links below:

https://trailhead.salesforce.com/live/videos/a2r3k000001n2Jj/modern-app-development-on-salesforce

https://www.youtube.com/playlist?list=PLgIMQe2PKPSK7myo5smEv2ZtHbnn7HyHI

Last Time…

Last time we explored connecting microservices hosted on Heroku with Salesforce to offload high-cost operations and processes. It turns out this topic is important enough to warrant a second video and article dedicated to the same subject!

This time we are going to cover some pretty cool Heroku features and integrations with Heroku apps, including Progressive Web Apps (PWAs), using Apache Kafka to scale MQTT-based data streaming, and capturing change events in near real time.

The very first video and article of the series previewed some potential use cases for these Heroku platform features, but now we’re going to see actual examples of their use and implementation!

As a reminder, the GitHub repo for this series and the eCars application used throughout is available at the following URL. There are a number of other sample apps in the overall repo as well.

https://github.com/trailheadapps/ecars

Architecture of the eCars Application

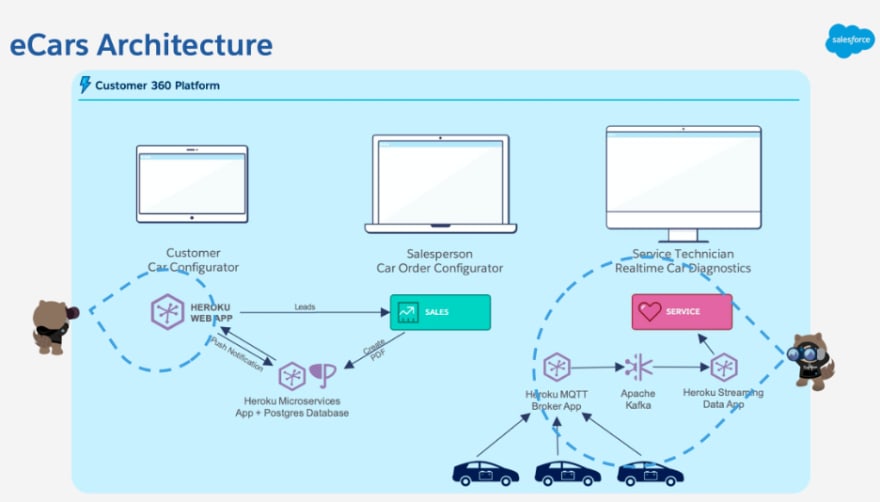

So far, the application has centered on two major parts, or “clouds”: Sales Cloud, where leads configure their potential cars, and now Service Cloud, for certain customer service use cases. Both of the Salesforce clouds have external integrations. On the Sales Cloud side, we have a Progressive Web App (PWA) for the car configurator, a Heroku microservice that handles PDF generation, and a component for push notifications. On the Service Cloud side, we will have a streaming data Heroku app that leverages MQTT, Internet-of-Things (IoT) data, and Apache Kafka while integrating with Salesforce via web service APIs.

eCars Application Architecture

In this session we look at three demos, use cases, or integrations with Heroku apps and microservices:

- A PWA car configurator that sends leads to Salesforce, manages push notifications, and crosses boundaries of native and web applications.

- A real-time Heroku streaming data app that automatically opens a case and provides real-time IoT data from the car’s sensors that are reporting issues.

- Data synchronization between Salesforce and an external data store using change data capture.

Subscription and Push Notifications PWA Demo

First, let’s look at the Heroku PWA that handles the car configurator. It does two things: it pushes a new lead to Salesforce Sales Cloud, and it subscribes that lead to notifications using the contact information they provide.

An example lead completing their car configuration and providing contact info

Once the user completes the information on this page, a lead is sent to Salesforce via the Salesforce REST API and JSForce, a JavaScript library that provides an abstraction layer for the Heroku app to make API calls to Salesforce.
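As a rough sketch of what that hand-off might look like, the snippet below maps a configurator submission to standard Lead fields. The form field names (`firstName`, `model`, and so on), the sample values, and the `toLeadRecord` helper are assumptions for illustration, not code from the eCars repo. The commented-out line shows how JSForce's `sobject(...).create(...)` call would typically send the record once a connection is authenticated.

```javascript
// Sketch only: maps a (hypothetical) configurator form to Salesforce Lead fields.
function toLeadRecord(form) {
  if (!form.lastName || !form.email) {
    throw new Error("lastName and email are required");
  }
  return {
    FirstName: form.firstName || "",
    LastName: form.lastName,
    Email: form.email,
    // Lead requires a Company value, so fall back to a placeholder.
    Company: form.company || "Individual",
    LeadSource: "Web",
    Description: `Configured car: ${form.model} (${form.color})`,
  };
}

// Hypothetical submission from the configurator page.
const lead = toLeadRecord({
  firstName: "Sam",
  lastName: "Rivera",
  email: "sam@example.com",
  model: "Model One",
  color: "Pearl White",
});
console.log(lead);

// With an authenticated JSForce connection (`conn`), the record could then be
// created with something like:
//   const result = await conn.sobject("Lead").create(lead);
```

Keeping the mapping in a small pure function makes it easy to validate the payload before any API call is made.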

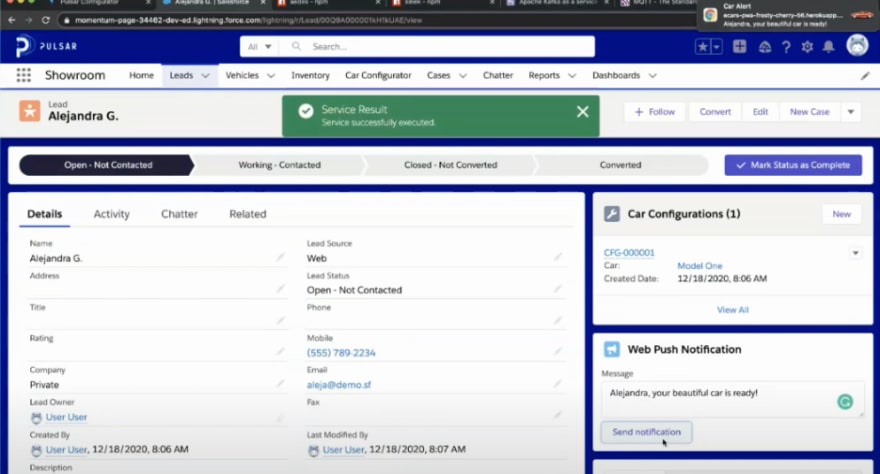

Once the lead is in Salesforce, the salesperson can manually send a web push notification back to the prospective buyer through the web application. This could also be invoked automatically using Salesforce automation tools such as Flows.

Sending the notification from Salesforce results in the web notification in the top right corner

In this architecture, we effectively have real-time notifications between Salesforce and the prospective buyer’s machine. This is possible thanks to something called a “Service Worker,” a JavaScript script that the browser runs in the background, separately from the web page, so that notifications can be received even when the page isn’t open. I bet this is partially why my internet browser is so RAM-hungry!
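To make the Service Worker idea a little more concrete, here is a minimal sketch of a push handler. The payload shape (`title`, `body`, `url`), the default title, and the `buildNotification` helper are assumptions for illustration and are not taken from the eCars repo. The `push` event, `event.waitUntil`, and `registration.showNotification` are standard browser APIs, and the wiring is guarded so the file also loads outside a Service Worker.

```javascript
// Sketch only: turns the text of a push message into notification arguments.
// The payload fields (title, body, url) are hypothetical.
function buildNotification(payloadText) {
  let payload;
  try {
    payload = JSON.parse(payloadText);
  } catch {
    payload = null; // not JSON, treat the text as the message body
  }
  if (payload === null || typeof payload !== "object") {
    payload = { body: String(payloadText) };
  }
  return {
    title: payload.title || "Pulsar eCars",
    options: {
      body: payload.body || "",
      data: { url: payload.url || "/" },
    },
  };
}

// Only register the listener when actually running inside a Service Worker.
if (
  typeof ServiceWorkerGlobalScope !== "undefined" &&
  self instanceof ServiceWorkerGlobalScope
) {
  self.addEventListener("push", (event) => {
    const text = event.data ? event.data.text() : "";
    const { title, options } = buildNotification(text);
    // Keep the worker alive until the notification has been shown.
    event.waitUntil(self.registration.showNotification(title, options));
  });
}
```

Because the worker is event-driven, the browser can wake it up for an incoming push message without the page being open.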

Another very cool feature of PWAs is that they are not just web apps: they can be installed on a desktop OS or a mobile device and run like a native app, providing the same experience regardless of the platform they run on! This is great because a PWA frees you from having to maintain separate codebases for your web app and your native mobile and desktop apps.

Finally, the PWA we see in the demo is actually built using Lightning Web Components, so it isn’t some specialized Node.js app that’s far removed from the Salesforce ecosystem.

Under the Hood of the eCars PWA

Customer-facing PWA app architecture

Taking a look under the hood of the PWA, there are a few components to explore. In the graphic above, the boxes show, from left to right, the client’s browser, the Heroku server, and the Salesforce instance.

Without diving too far into the weeds of the actual code (which you can explore in the GitHub repo), here are some notable highlights:

- The Heroku server uses Express to run an HTTPS server that serves up the PWA pages.

- The Service Worker lives completely in the client’s browser and manages subscriptions, push notifications, and communications with the HTTPS server.

- The web-push library facilitates communication between the Service Worker and the Salesforce Apex classes, which make web service callouts.

- The Service Worker, which runs on a separate thread in the client’s browser, provides some offline capabilities as well as precaching, runtime caching, and background synchronization.

I have found that the Composite Graph API is potentially very useful on the Salesforce side. Typically, when making inbound calls to create multiple related records via the Salesforce API, you have to make multiple API calls: one call to create the parent, another call to create the child objects, and so on. Then you need to handle cases that require rolling back the entire transaction, because maybe the parent insert succeeded but only some of the child records inserted successfully while the rest errored out. With the Composite Graph API, you can make a single callout that strings together otherwise separate calls and manage the success or failure of the entire composite call as one. This should really be the gold standard when it comes to operations that insert multiple records!
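To illustrate the parent-and-children case, here is a sketch that builds a Composite Graph request body for one parent and several child records. The Account/Contact pairing, the `buildAccountGraph` helper, the reference IDs, and the API version are assumptions chosen for illustration; the eCars app may structure its calls differently. The body would be sent in a single POST to the `/composite/graph` REST resource.

```javascript
// Sketch only: builds a Composite Graph body for one parent and N children.
const API_VERSION = "v50.0"; // assumption: any version that supports Composite Graph

function buildAccountGraph(account, contacts) {
  const base = `/services/data/${API_VERSION}/sobjects`;
  const compositeRequest = [
    {
      method: "POST",
      url: `${base}/Account`,
      referenceId: "newAccount",
      body: account,
    },
    ...contacts.map((contact, i) => ({
      method: "POST",
      url: `${base}/Contact`,
      referenceId: `newContact${i + 1}`,
      // "@{newAccount.id}" is resolved by Salesforce to the parent's new Id.
      body: { ...contact, AccountId: "@{newAccount.id}" },
    })),
  ];
  // All requests in one graph succeed or fail together.
  return { graphs: [{ graphId: "accountWithContacts", compositeRequest }] };
}

const graphBody = buildAccountGraph(
  { Name: "Example Fleet Customer" },
  [{ LastName: "Rivera" }, { LastName: "Chen" }]
);
console.log(JSON.stringify(graphBody, null, 2));
// POST this body to /services/data/v50.0/composite/graph
```

The `referenceId` values are what let later requests in the graph point at records that do not exist yet when the call is made.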

Real-Time IoT eCars Data Demo

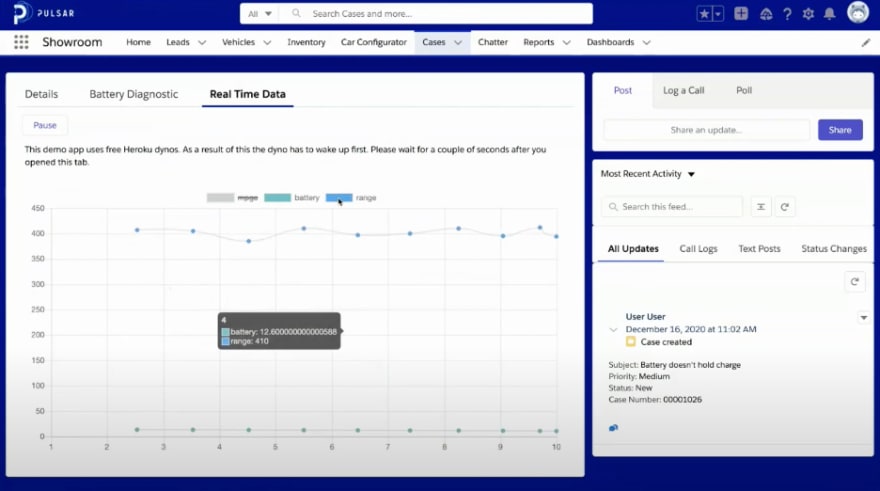

In the next demo, we’re looking at a scenario that involves getting IoT data from the cars’ sensors and piping that information to our app in real time. This will enable us to take some preemptive action on certain triggering events (a rapidly draining battery in this case) and empower the service agents with that data.

An automatically opened case due to battery anomaly with real-time data accessible in the case view

So, we’re looking at a Salesforce Service Cloud case that was automatically opened because the IoT data our application received from a particular car showed it was not performing within expected thresholds. Then, in two of the tabs on the case, we can see Lightning components that stream battery diagnostics and real-time data to the case for the service agents to access.

Imagine that we then combine this with Salesforce automation to send the owner of the vehicle a preemptive email notifying them that Pulsar is aware of the issue and is looking into it. We can also combine this with what we did in the PWA and send a push notification with the same content! This would take customer service and the experience of the Pulsar brand to a whole new level.
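The threshold check that opens the case could be sketched roughly as follows. The drain-rate threshold, the reading shape (`timestamp`, `batteryPct`), the sample VIN, and both helper functions are hypothetical; the session does not spell out the actual rule used by the demo. The returned object uses standard Case fields and is the kind of payload that could then be sent to Salesforce.

```javascript
// Sketch only: flags a rapidly draining battery and drafts a Case payload.
const MAX_DRAIN_PER_MINUTE = 1.5; // hypothetical threshold, in percentage points

// readings: [{ timestamp: <ms since epoch>, batteryPct: <0-100> }, ...]
function drainRate(readings) {
  if (readings.length < 2) return 0;
  const first = readings[0];
  const last = readings[readings.length - 1];
  const minutes = (last.timestamp - first.timestamp) / 60000;
  if (minutes <= 0) return 0;
  return (first.batteryPct - last.batteryPct) / minutes;
}

function caseForReadings(vin, readings) {
  const rate = drainRate(readings);
  if (rate <= MAX_DRAIN_PER_MINUTE) return null; // within expected range
  return {
    Subject: `Battery anomaly on ${vin}`,
    Priority: "High",
    Description: `Battery draining at ${rate.toFixed(1)} points per minute.`,
  };
}

const healthy = caseForReadings("VIN-EXAMPLE-1", [
  { timestamp: 0, batteryPct: 90 },
  { timestamp: 120000, batteryPct: 89.5 },
]);
const draining = caseForReadings("VIN-EXAMPLE-1", [
  { timestamp: 0, batteryPct: 90 },
  { timestamp: 120000, batteryPct: 80 },
]);
console.log(healthy, draining);
```

A real rule would likely smooth over noisy readings, but the shape of the decision (compare a computed metric to a threshold, then create a record) stays the same.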

Under the Hood of Real-Time IoT eCars Data

So how do we get all this rich IoT data from the cars all the way back to Salesforce? Let’s take a look under the hood (pun intended) and see what kinds of components are making this magic happen.

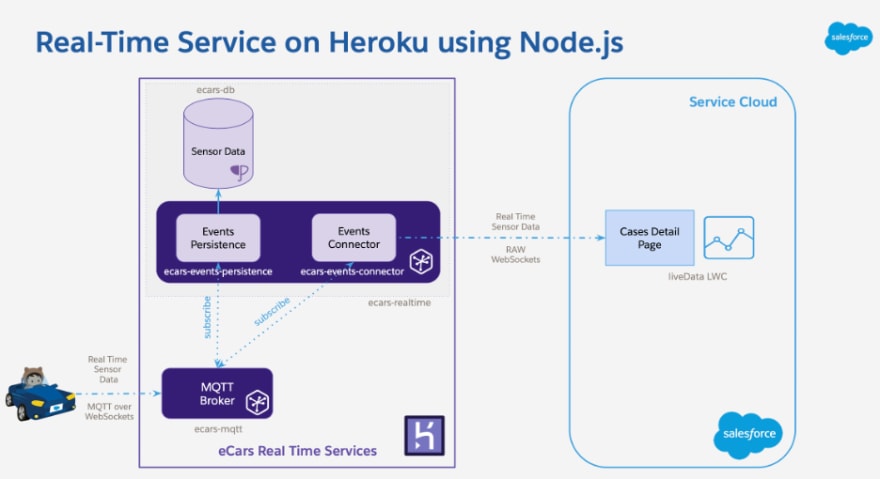

Here are some components to highlight. (Remember that all the code running behind these components can be found in the eCars app GitHub repo.)

MQTT Broker – The MQTT broker is a standalone application running on its own Heroku dyno. MQTT is an extremely lightweight messaging protocol for IoT use cases. It’s a simple publish/subscribe messaging transport that is ideal for connecting remote devices with very little code and minimal network bandwidth. The broker receives data from the cars’ sensors and publishes it to the clients that subscribe to that data. The specific MQTT library used for the eCars app is called Aedes, a barebones MQTT server. More information on Aedes is available in the links at the end of the article.

eCars Real Time – The eCars real-time application is another standalone application running on its own dyno. As you can see, it comprises an events persistence module and an events connector module. The events persistence module takes the data from the MQTT broker and persists it to a sensor data database, where it can be stored permanently, queried like a normal SQL database, and perhaps moved into a data warehouse later. The events connector module subscribes to the same MQTT broker but has a different job: communicating with the Salesforce liveData LWC via a raw WebSocket API. This allows the service user on the Salesforce side to access the sensor data in real time.
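The publish/subscribe flow between the broker and the two modules can be sketched with a tiny in-memory stand-in. This is not Aedes and not the eCars code: the `MiniBroker` class, the topic names, and the two arrays standing in for the database and the WebSocket feed are all assumptions. The `+` and `#` wildcards, however, follow the standard MQTT topic filter rules.

```javascript
// Sketch only: MQTT-style topic filter matching ("+" = one level, "#" = the rest).
function topicMatches(filter, topic) {
  const f = filter.split("/");
  const t = topic.split("/");
  for (let i = 0; i < f.length; i++) {
    if (f[i] === "#") return true; // multi-level wildcard matches everything after
    if (i >= t.length) return false; // filter is longer than the topic
    if (f[i] !== "+" && f[i] !== t[i]) return false;
  }
  return f.length === t.length;
}

// In-memory stand-in for a broker: delivers each message to matching subscribers.
class MiniBroker {
  constructor() {
    this.subscriptions = [];
  }
  subscribe(filter, handler) {
    this.subscriptions.push({ filter, handler });
  }
  publish(topic, message) {
    for (const { filter, handler } of this.subscriptions) {
      if (topicMatches(filter, topic)) handler(topic, message);
    }
  }
}

const broker = new MiniBroker();
const sensorTable = []; // stand-in for the sensor data database
const liveFeed = []; // stand-in for frames sent over a WebSocket

// "Events persistence": stores battery readings only (hypothetical topic layout).
broker.subscribe("cars/+/battery", (topic, msg) => sensorTable.push({ topic, ...msg }));
// "Events connector": forwards every car event to the live feed.
broker.subscribe("cars/#", (topic, msg) => liveFeed.push(JSON.stringify({ topic, ...msg })));

broker.publish("cars/VIN123/battery", { pct: 71 });
broker.publish("cars/VIN123/speed", { kph: 88 });
console.log(sensorTable.length, liveFeed.length);
```

Both modules subscribe to the same broker independently, so adding a third consumer later would not require changing the cars or the existing subscribers.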

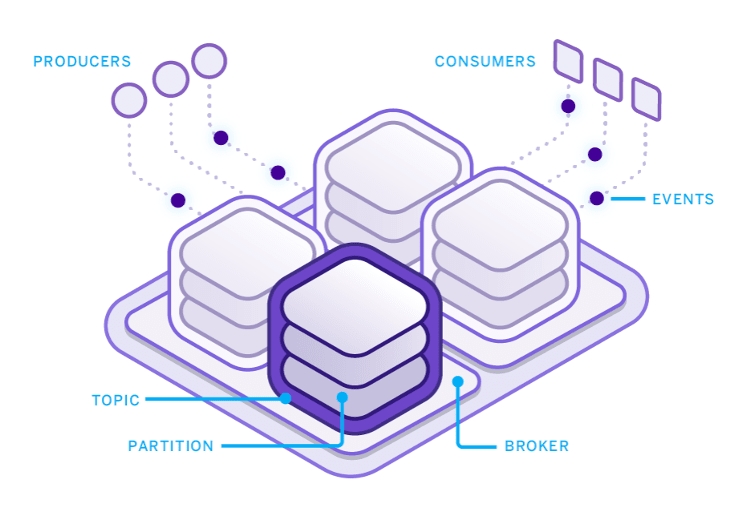

Of course, the amount of data throughput can get pretty crazy as Pulsar sells more cars and more of them are on the road, all broadcasting sensor data. In order to scale this application, Apache Kafka comes into play. Apache Kafka is a distributed commit log for rapid, fault-tolerant communication between producers and consumers using message-based topics. It provides the backbone for building distributed applications capable of handling billions of events and millions of transactions.

Apache Kafka for when you need to handle massive throughput

Adding Apache Kafka helps the app increase real-time data throughput and allows other microservices to consume/subscribe to the data without producing additional overhead. You don’t need Apache Kafka on your Heroku app for the eCars app to function, as it’s only needed for massive scaling use cases in production.
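The "commit log" idea is easier to picture with a toy model. The sketch below is an in-memory illustration only, not a Kafka client: the `TopicLog` class, the simple string hash (Kafka's own partitioner works differently), and the consumer group names are all assumptions. It shows two properties the paragraph above relies on: records with the same key land in the same partition in order, and each consumer group tracks its own position, so adding a consumer does not disturb the others.

```javascript
// Sketch only: a toy partitioned, append-only log with per-group offsets.
class TopicLog {
  constructor(partitionCount) {
    this.partitions = Array.from({ length: partitionCount }, () => []);
    this.offsets = new Map(); // group name -> next offset to read, per partition
  }

  // Simple illustrative hash: the same key always maps to the same partition.
  partitionFor(key) {
    let hash = 0;
    for (const ch of key) hash = (hash * 31 + ch.charCodeAt(0)) >>> 0;
    return hash % this.partitions.length;
  }

  append(key, value) {
    const p = this.partitionFor(key);
    this.partitions[p].push({ key, value });
    return p;
  }

  // Returns records this group has not seen yet and advances its offsets.
  poll(group) {
    if (!this.offsets.has(group)) {
      this.offsets.set(group, this.partitions.map(() => 0));
    }
    const offsets = this.offsets.get(group);
    const records = [];
    this.partitions.forEach((log, p) => {
      records.push(...log.slice(offsets[p]));
      offsets[p] = log.length;
    });
    return records;
  }
}

const sensorLog = new TopicLog(3);
sensorLog.append("VIN-A", 1);
sensorLog.append("VIN-B", 10);
sensorLog.append("VIN-A", 2);
sensorLog.append("VIN-A", 3);

const alertsFirst = sensorLog.poll("alerts"); // everything so far
const alertsSecond = sensorLog.poll("alerts"); // nothing new
const analyticsFirst = sensorLog.poll("analytics"); // independent position
console.log(alertsFirst.length, alertsSecond.length, analyticsFirst.length);
```

Since records are never removed when they are read, a new microservice can start from the beginning of the log without the producers having to send anything again.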

Data Synchronization with Change Data Capture

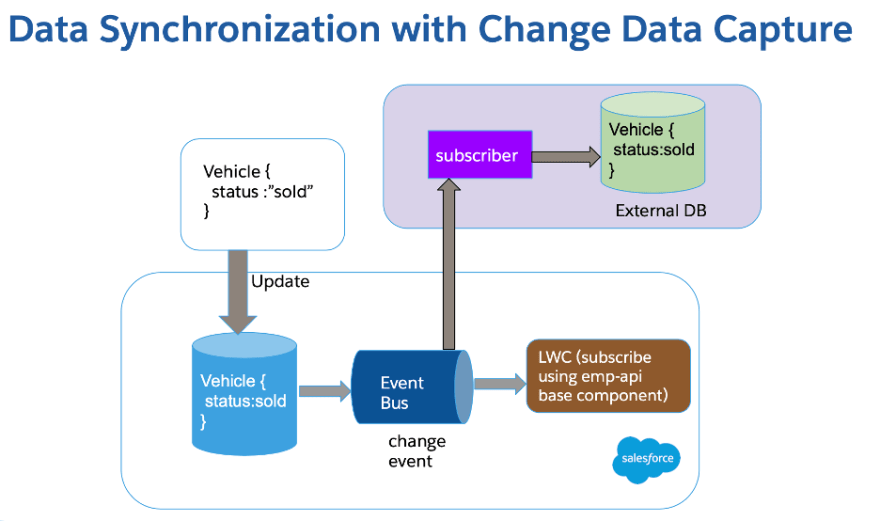

The final use case example is related to data synchronization and change data capture. This is similar to the previous “publish/subscribe” framework of the IoT example but can be applied to any use case where you might want to synchronize data between disparate applications or data stores.

Data synchronization with change data capture

The “event bus” is key to this architecture. It is where change data events are published and where subscribers pick them up. The event bus plays the same role as the MQTT broker in the previous example. In our model above, the event is a vehicle changing status to “sold.” This update is committed to the database but also published to the event bus, which can then serve up the change events to multiple subscribers.

In our eCars app, there is an LWC that uses the empApi base component to subscribe to the events. However, a subscriber can also sit outside of the application and subscribe to the same event bus to then sync the data to an external database.

The main advantage of this model is that point-to-point integrations between multiple systems to keep the data in sync are no longer required. The event bus can serve as the singular hub for distributing this information.
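As a minimal sketch of that hub-and-subscribers model, the snippet below publishes change events to an in-memory bus with two subscribers: one standing in for the LWC and one that keeps an external store in sync. The `EventBus` class, the `Vehicle__c` object, the `Status__c` field, and the record ID are hypothetical. The `ChangeEventHeader` fields (`entityName`, `changeType`, `recordIds`, `changedFields`) and the `/data/...ChangeEvent` channel naming mirror how Salesforce change events are structured.

```javascript
// Sketch only: an in-memory event bus with fan-out to multiple subscribers.
class EventBus {
  constructor() {
    this.subscribers = new Map(); // channel -> array of handlers
  }
  subscribe(channel, handler) {
    if (!this.subscribers.has(channel)) this.subscribers.set(channel, []);
    this.subscribers.get(channel).push(handler);
  }
  publish(channel, event) {
    for (const handler of this.subscribers.get(channel) || []) handler(event);
  }
}

// Applies one change event to an external store (a Map keyed by record ID).
function applyChangeEvent(store, event) {
  const { ChangeEventHeader: header, ...fields } = event;
  for (const id of header.recordIds) {
    if (header.changeType === "DELETE") {
      store.delete(id);
    } else {
      // CREATE, UPDATE, UNDELETE: merge the fields carried by the event.
      store.set(id, { ...(store.get(id) || {}), ...fields });
    }
  }
}

const CHANNEL = "/data/Vehicle__ChangeEvent"; // hypothetical custom object channel
const bus = new EventBus();
const externalDb = new Map(); // stand-in for an external database
const uiLog = []; // stand-in for what a subscribed LWC would display

bus.subscribe(CHANNEL, (e) => uiLog.push(e.ChangeEventHeader.changeType));
bus.subscribe(CHANNEL, (e) => applyChangeEvent(externalDb, e));

bus.publish(CHANNEL, {
  ChangeEventHeader: { entityName: "Vehicle__c", changeType: "CREATE", recordIds: ["veh-001"] },
  Name: "Demo vehicle",
  Status__c: "Available",
});
bus.publish(CHANNEL, {
  ChangeEventHeader: {
    entityName: "Vehicle__c",
    changeType: "UPDATE",
    recordIds: ["veh-001"],
    changedFields: ["Status__c"],
  },
  Status__c: "Sold",
});
console.log(externalDb.get("veh-001"), uiLog);
```

Neither subscriber knows about the other, which is the point: a new system that needs the same data only has to subscribe to the channel.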

Concluding Thoughts

The demos covered for the eCars app are pretty advanced in terms of sophistication and integration compared with typical Salesforce implementations. Using a PWA or scaling massively with Apache Kafka may not be common. However, the purpose of this content is to expand everyone’s idea of the realm of possibilities that exist in the Salesforce ecosystem.

As a Salesforce administrator or developer, I find that it’s easy to get tunnel vision and feel “stuck” in our thinking about what’s possible when architecting an application in the ecosystem. Therefore, I find it refreshing to look at examples that really push the edges of what is possible to build.

For more information, Trailhead modules, and advanced topics related to scaling Salesforce apps with Heroku and event-driven architecture, check out the resources below:

- Composite Graph APIs

- Change Data Capture Basics

- Build Connected Apps with LWC Base Components NPM

- Deep Dive Into Progressive Web Apps

- Explore WorkBox for PWA

- MQTT: The Standard IoT Messaging

- aedes: Barebone MQTT server

- sinek: High Level Kafka Client

- Apache Kafka on Heroku

- The WebSocket API

In the next article, we’ll walk through unit testing methods and tools for JavaScript applications.

If you haven’t already joined the official Chatter group for this series, I certainly recommend doing so. This will give you the full value of the experience and let you pose questions and start discussions with the group. There are often valuable discussions and additional references available, such as presentation slides and other resources.

About me: I’m an 11x certified Salesforce professional who’s been running my own Salesforce consultancy for several years. If you’re curious about my backstory on accidentally turning into a developer and even competing on stage on a quiz show at one of the Salesforce conventions, you can read this article I wrote for the Salesforce blog a few years ago.
