The Big O notation in a nutshell is what programmers use to define the runtime of a piece of code or an algorithm. It is how we measure how long something will take to finish running depending on how big its input is. The formal definition is Many programmers might be introduced this topic during school hopefully during a Data Structures & Algorithm Course, or might have just found out about it to prepare for their upcoming technical interview. So why might we care how long a piece of code will take to run? As long as it runs and gives us the right answer it should clearly not matter but that?

Common Analogy

Here is a common analogy to try to understand Big O.

Imagine you are trying to deliver a file of code to your friend who lives across the country. How would you deliver it to him/her?

If it is a small file (>5GB), then most people would email it. It may take some time but it will arrive before the day ends! But if our file is more then say 1 Terabyte. This may take a longer time to email it, so it would probably be faster to jump on a plane and hand deliver a Hard Drive (From Cracking The Coding Interview By Gayle Laakmann).

If you have to wait a longtime on a website to load the information you asked for, then that must mean the runtime of the code is not the best! Or your internet is lagging. But it is not a good user experience to wait too long for a response. So here we will show you how to calculate Big O notation or Time Complexity for some pieces of code.

What is a Good Runtime

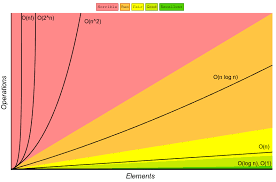

The common runtimes from worst to best is

O(n!) < O( 2^n ) < O( n^2 ) < O( nlogn ) < O( n ) < O( logn ) < O(1)

There are other runtimes but these are one of the more common ones. When reading these out it is read as "O of n factorial" or etc.

Generally we want to get as close as possible to get to O(1) or constant runtime, but some problems may be asking for so much that we may not be able to get close to that, so we may have to do just the best we can.

How Do We Actually Calculate Runtime

When we are working with Data Structures and Algorithms, we will generally have an expected runtime, best runtime, and worst runtime. This is usually dependent on the size of the input.

For Example, let us look at Quick Sort for an array of numbers, where we choose a pivot point, and then move all the numbers smaller than the pivot before it, and all the numbers larger than it after. When all numbers are placed before and after the pivot, choose a new pivot and repeat this partial sort until the array is fully sorted.

Expected Case

This sort is considered Divide and Conquer, and we would consider the expected runtime to be O( nlogn ). ( logn ) because we are choosing a pivot and sorting the array, and then choosing an other pivot. Since we are almost cutting the array in half after sorting to the pivot, this would double then quadruple and so on. And we get the ( n ) because we are changing our pivot constantly, which would finally give us O( nlogn ).

Best and Worst Case

The Worst and Best case would occur depending on the size of our array, how we choose our pivot, and the elements in our array. The worst case would be when the pivot is either the biggest or largest element in the array, which would slow our runtime down. The best case would actually be close to the expected runtime if we always picked the most middle element.

Identifying n! Runtime

These next parts will talk about how to tell the other runtimes apart starting with O( n! ). This is one of the more slower runtimes and can be easily spotted. In Math, it is the product of

n*(n-1)(n-2)... until n = 0

For a code example it would be

def factorial(n):

result = 1

while n > 1:

result *= n

n -= 1

print result

If you find yourself doing an operation on the same input then decrementing it until it disappears, then you should probably rethink your life.

2^n runtime

Exponential runtime is the next one down after O(n!) for slowest runtime. But this runtime can be remembered by solving a popular puzzle called, Tower of Hanoi. It depends on how you solve the problem but you can get close to having a O( 2^n ) runtime. The rules of the game can be found here. But basically we have 3 pegs, with the leftmost peg having disk, we must find a way to move all the disk to the far right peg, while only moving 1 disk at a time.

Here is a general solution to solving this

def TowerOfHanoi(n , from_rod, to_rod, aux_rod):

if n == 1:

print "Move disk 1 from rod",from_rod,"to rod",to_rod

return

TowerOfHanoi(n-1, from_rod, aux_rod, to_rod)

print "Move disk",n,"from rod",from_rod,"to rod",to_rod

TowerOfHanoi(n-1, aux_rod, to_rod, from_rod)

This is a recursive solution and can not be done any faster than O( 2^n ) since we need a recursion to solve 2 smaller problems which in this case is moving the disks one at a time, and at same time checking our disks do not violate our rules.

n^2 Runtime

O( n^2 ) runtime grows exponentially as the program runs on. One of the easiest indicators of n^2 runtime is going through a loop 2 times through an array for example.

array = []

for i in array:

for j in array:

(do something)

Don't worry about what you do within the loop but the idea is that you go through an array 2 times which makes it O( n^2 ) runtime.

Constant Time and Linear Time

The easiest to identify is O( n ) and O( 1 ). Linear Time O( n ), is

directly proportional to the input. If you have 10 items in your array then it will do 10 things, If there are 1,000 items, then it will do 1,000 things.

array = [0] * 5

for i in array:

print i

# => Will print 5 zeroes

Constant Time on the other hand will only take 1 step to complete. If your array has 5 items, 1 step. Array has million items, 1 step.

array = [0] * alot

print 'Hello World'

It is not the case that the program does not touch the input. The program can touch the first element in an array and it will still be constant time.

Closing Thoughts

these are not every runtime you will find, these are of the more common ones you may come across. Keep in mind also that my code snippets are not perfect or exact they are to show the idea for the runtime.

Top comments (1)

It is really helpful not only for beginners who would like to know a bit more about DS and alrg. Thanks.