Photo by Kaleidico on Unsplash

Growing businesses are moving quickly. Modern data-driven companies use data to build KPIs and make decisions. Those fast-paced organisations need a state-of-the-art data platform that can adapt to all new offers, relationships, acquisitions and other changes in the business. Today we will answer the question of what DataOps is, and how it helps companies get the numbers they need quicker, cheaper and more reliably. Let’s start with a bit of history.

Assembly lines

Before the Industrial Revolution, most products were made on demand by hand. A different person would create every piece of the product. In the end, a final person would assemble the parts by modifying them until they fitted and worked together.

Then assembly lines were invented. Every individual in the line did exactly one thing in the exact same way. If someone’s job was to tighten bolts, they would do only that, the whole day, every day. Standardising the process made the products more consistent, more reliable and cheaper, but it left people’s potential unused.

Nowadays, we have large factories full of robots that produce enormous amounts of cars. This revolution freed more time for people to use their most powerful tool — their brains. Instead of spending time doing the same mindless tasks over and over, they can focus on making cars safer, more comfortable, and more aesthetic.

Photo by Remy Gieling on Unsplash

While software engineering teams borrowed many production processes from manufacturing, data teams are just getting up to speed with assembly lines. They dump data into spreadsheets, cleanse it, categorise it, create the charts and send them via email. What’s worse is that all that work is being done manually for every single report.

What counts as modern today is a chain of responsibilities in which each person works with just one type of data and doesn’t know what the others on the line do. Software engineers work with application data, data engineers are interested in producing a kind of raw data, and business analysts build business objects. In the end, no one really knows what the PM needs.

Only in the last couple of years have we started to see a more holistic view of how data is processed and used. We call this Data Operations, or DataOps for short. Let’s see what exactly that is.

DataOps values

DataOps is a set of principles that combine people, technologies, and processes and lead to better data insights. The DataOps Manifesto consists of 18 principles and five values. I grouped them into three main areas.

Image by Datalytyx

Tooling and Processes

According to a survey by Datafold, almost 40% of the respondents believe that too much manual work is the top reason for low productivity of data teams. The same study shows that nearly 60% of the teams validate data manually before using it.

A large piece of DataOps engineers’ job is to streamline the process from ingestion to dashboard. They are responsible for building the infrastructure, automation, orchestration and monitoring. They also create pipelines and models and advise on architecture and security. Basically, they support everyone who needs these sweet insights. To do that, DataOps engineers need to choose the best tools for the job, continuously evaluate the stack, and optimise the processes. Obviously, this means they also train the team on those tools and invent procedures around them.
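To make the automation point concrete, here is a minimal sketch of how the manual validation mentioned above could be turned into code. Everything in it is an assumption for illustration: the `Check` class, the `validate` function, the sample `orders` rows and the two rules are hypothetical and do not come from any specific tool.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Row = dict[str, object]


@dataclass
class Check:
    """A named rule that every row is expected to satisfy (hypothetical helper)."""
    name: str
    predicate: Callable[[Row], bool]


def validate(rows: Iterable[Row], checks: list[Check]) -> dict[str, int]:
    """Return the number of failing rows for each check."""
    failures = {check.name: 0 for check in checks}
    for row in rows:
        for check in checks:
            if not check.predicate(row):
                failures[check.name] += 1
    return failures


# Made-up sample data standing in for a freshly ingested table.
orders = [
    {"order_id": 1, "amount": 25.0, "country": "BG"},
    {"order_id": 2, "amount": -5.0, "country": "DE"},
    {"order_id": None, "amount": 10.0, "country": "DE"},
]

checks = [
    Check("order_id_not_null", lambda r: r["order_id"] is not None),
    Check(
        "amount_non_negative",
        lambda r: isinstance(r["amount"], (int, float)) and r["amount"] >= 0,
    ),
]

report = validate(orders, checks)
print(report)
```

A script like this can run after every load, so nobody has to eyeball the data before using it. In practice a team would probably pick an existing data-testing tool rather than write its own, but the idea is the same.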

Photo by Adeolu Eletu on Unsplash

This holistic view of the tooling allows DataOps practitioners to build a homogeneous platform that does not feel like a Frankenstein. Those people have to decide which process should be used: ETL, ELT, or a combination of the two. Depending on that, they have to choose the tooling for ingestion, transformation and storage. They also have to build the orchestration and provide the visualisation layer. Ideally, all this should be put in version-controlled code, which can be executed easily on a local machine. This code should then be run in different environments and deployed via CI/CD processes. All this provides us with better visibility, stability and reproducibility.
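As a rough illustration of "the same code in every environment", here is a small ELT-style sketch that uses only the Python standard library. The environment names, the `PIPELINE_ENV` variable, the `warehouse.db` file and the sample rows are all assumptions made up for this example. A real platform would use its own warehouse and configuration files.

```python
import os
import sqlite3

# Hypothetical per-environment settings. In a real project these would live
# in version-controlled configuration files.
ENVIRONMENTS = {
    "local": {"database": ":memory:"},
    "ci": {"database": ":memory:"},
    "production": {"database": "warehouse.db"},
}


def extract() -> list[tuple[str, float]]:
    """Stand-in for an ingestion step; returns hard-coded sample rows."""
    return [("books", 12.5), ("books", 7.5), ("games", 30.0)]


def load(conn: sqlite3.Connection, rows: list[tuple[str, float]]) -> None:
    """Load the raw rows untouched; this is the 'L' before the 'T' in ELT."""
    conn.execute("CREATE TABLE IF NOT EXISTS raw_sales (category TEXT, amount REAL)")
    conn.executemany("INSERT INTO raw_sales VALUES (?, ?)", rows)


def transform(conn: sqlite3.Connection) -> None:
    """Build the reporting table inside the database, after loading."""
    conn.execute("DROP TABLE IF EXISTS sales_by_category")
    conn.execute(
        "CREATE TABLE sales_by_category AS "
        "SELECT category, SUM(amount) AS total FROM raw_sales GROUP BY category"
    )


def run_pipeline(env: str) -> list[tuple[str, float]]:
    """Run the same steps against whichever environment is selected."""
    conn = sqlite3.connect(ENVIRONMENTS[env]["database"])
    try:
        load(conn, extract())
        transform(conn)
        conn.commit()
        query = "SELECT category, total FROM sales_by_category ORDER BY category"
        return conn.execute(query).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    # The environment is chosen from outside the code, e.g. by a CI/CD job.
    print(run_pipeline(os.environ.get("PIPELINE_ENV", "local")))
```

Because the only thing that changes between environments is the settings entry, the run on a laptop, the run in CI and the run in production all exercise the same code path.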

People

Tooling is cool and important, but people are critical. Statistics show that entry-level employees typically cost 50% of their salary to replace.

A DataOps practitioner needs to understand that they provide their services to real people, not just some squares in Zoom or Slack. They should value quality, and one of the best ways to achieve that is by delivering early and seeking feedback. It’s not enough to do tasks and blindly follow instructions. DataOps means a deep understanding of customers’ needs and advising on the best solution.

Photo by krakenimages on Unsplash

We don’t work only with stakeholders. There are also people in the team with different experiences and skills. That is great, as different backgrounds and opinions increase innovation and productivity. On the other hand, this brings some challenges when it comes to tooling versus people. Overall, we should find a balance between people and “good practices” but lean toward people’s preferences. I can give you an example from my life. I like having a clean and tidy Git history of commits and pull requests. It helps us connect all pieces of a task, trace the history, and get a better understanding of the project’s evolution. This process works well for a small team of senior engineers, but it appears to confuse more junior members of the team. To be honest, I’m still struggling to find the balance with this exact use case. My current solution is to allow everyone to commit changes in the way that fits them individually. That looks like small chaos but helps us move forward with the project, and people learn best practices slowly.

How would you deal with that?

Communication

Speaking of code documentation processes, good commit messages are non-negotiable to me. I don’t think we have to document every single piece of the project. On the contrary, the DataOps culture values shipping code early over waiting for the documentation. Why, then, is this so important to me? Communication is the heart of DataOps. It’s the glue that connects people and technologies. Clear, concise and open communication is the key to a successful analytics team. A study from Zippia shows that 86% of employees in leadership positions cite lack of collaboration as the top reason for workplace failures.

Within the team, we need to communicate what we have done and even more importantly, why we have done it. We can achieve that via good commit messages, pull requests, tests, daily stand-ups and knowledge sharing sessions. That way, we reduce confusion and repetitive work, provide more opportunities for learning and increase engagement. I don’t have to tell you how much team spirit increases productivity.

Photo by LinkedIn Sales Solutions on Unsplash

Cross-team communication is equally important. We should interact with our stakeholders on a daily basis. Only by understanding their needs can we provide the best possible service. When I say communication, I don’t just mean calls and email. I also mean automated status reports, SLA dashboards, and all kinds of monitoring. We ought to communicate what data we can bring, what we currently have and how our customers are using that data.
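An automated status report does not have to be complicated. The sketch below compares the last load time of each table with an agreed freshness SLA and produces one line per table. The table names, timestamps, SLA values and the `freshness_report` function are hypothetical and only show the shape of such a report.

```python
from datetime import datetime, timedelta, timezone


def freshness_report(
    last_loaded: dict[str, datetime],
    sla: dict[str, timedelta],
    now: datetime,
) -> list[str]:
    """Return one status line per table, marking tables older than their SLA."""
    lines = []
    for table in sorted(last_loaded):
        age = now - last_loaded[table]
        status = "OK" if age <= sla[table] else "LATE"
        hours = age.total_seconds() / 3600
        lines.append(f"{table}: {status} (last loaded {hours:.1f}h ago)")
    return lines


# Made-up values; a real report would read these from pipeline metadata.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "orders": datetime(2024, 1, 1, 11, 0, tzinfo=timezone.utc),
    "customers": datetime(2023, 12, 31, 6, 0, tzinfo=timezone.utc),
}
sla = {
    "orders": timedelta(hours=2),
    "customers": timedelta(hours=24),
}

status_lines = freshness_report(last_loaded, sla, now)
for line in status_lines:
    print(line)
```

The resulting lines could be posted to a chat channel or shown on a dashboard, so stakeholders see the state of the data without having to ask.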

Processes, people and communication are the three main ingredients of the DataOps culture. Only by combining these three ingredients can we guarantee we are providing the best service we can. We could spend a lot more time discussing that matter, but this is the baseline we all should agree on if we want a modern analytics process. Do you see the point in these values? Great! Let’s see what kind of people we need.

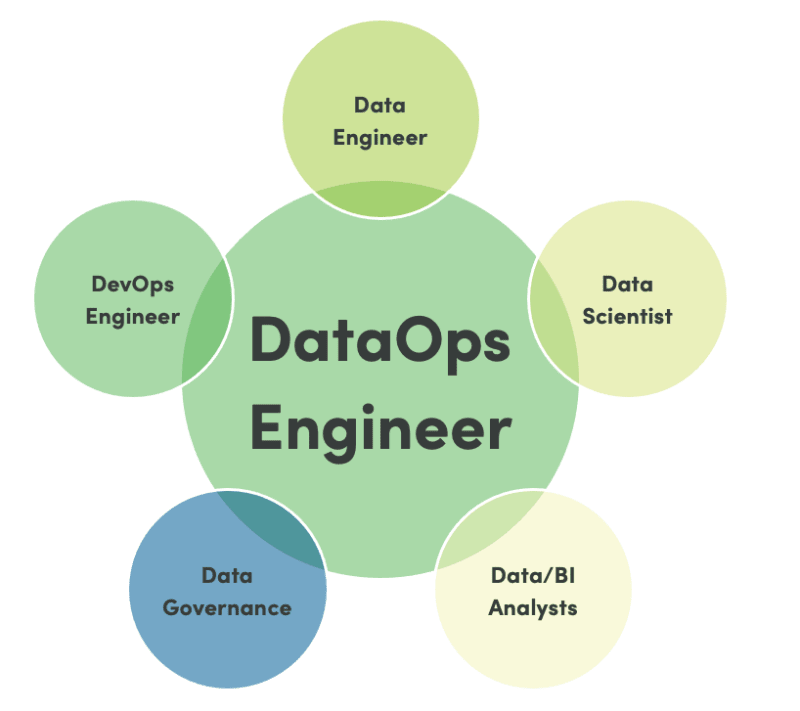

Who owns DataOps in the organisation?

As we already discussed, DataOps advocates are responsible for all processes from request to dashboard. They own the ingestion, transformation, orchestration and the other needed tools. They are responsible for getting the whole context through meetings and written messages, and for keeping the software running, the environments in sync and the data secure. As you can see, we need people with diverse skills: programming, server infrastructure, communication, organisational skills, and many more. They need to learn fast and adapt to changes in business requirements to produce graphs and charts as quickly, cheaply and reliably as possible. These people can increase productivity not just in the team but in the whole company if we allow them to execute their ideas.

Image by DataKitchen

Very rarely, maybe a couple of times in our lives, do we find all of these skills combined in a single person. Most of the time, we have a whole team of data engineers, analytics engineers and business analysts led by some sort of Head: Head of Data Engineering, Head of BI or Head of Data. Even more often, we’d see at least two of these Head positions under a larger analytics team. That helps individuals focus on the areas they are interested in but still understand what happens around them. On top of that, we still have someone who has the whole context. In the end, we are all responsible, from the CEO to every individual contributor.

Conclusion

Slowly but surely, data teams are adopting processes from manufacturing and software engineering. The increase in productivity and visibility of all departments in data-driven companies gives them a great advantage over competitors. A strong analytics team following the DataOps principles is crucial for every modern business. I bet we’ll see an increasing need for people with data operation skills in the years to come.

What is your opinion on that matter? What does your organisation do to make sure it provides insights most efficiently? Share your thoughts in the comments below. Oh, and don’t forget to subscribe for new articles!
