Architectures are moving towards separation. What does that mean for the way frontend engineers develop and test, and how can you go about tackling it?

The age of Separation

In the last couple of years, system architectures, independent of actual scale, have shifted towards separation. In many production-grade applications we see today, separation means a mix of different (micro-)services that aim to model the different bounded contexts, if you will, of a system and its interactions with the world.

Especially in larger organizations, separation translates into diverse, more specialized and efficient teams that focus on and are responsible for their domain service. Naturally, each of these teams will be developing one or more services to interface with the rest of the system.

This approach has, as expected, led to re-evaluating the way the frontend part of a system is architected and how many tenants it needs to communicate with in order to reason about its behaviour.

A typical modern frontend

What has the shift towards separation meant for modern frontend applications and the way we as frontend engineers work on them?

Let's first establish the basic responsibilities of a common "frontend".

The frontend part of a system has, at the very least, the responsibility to:

- Present a normalized state of the system to the user.

- Dispatch service actions that are generated by the user completing the application's goals, for example creating an account or booking a hotel room.

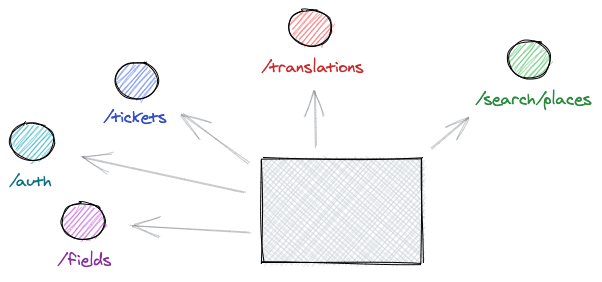

To create a presentable and solid view layer, a frontend application needs to reach out to the system services (which could be many) and retrieve the data required to generate the state for the interface.

Your application will probably also need to reach external services for concerns like translations, authentication and other third-party data APIs (e.g. Google Maps).

Remember that all of the above are only the services we pull data from.

Consequently, when we start developing our part of the application, we need some of these data sources to be available, even partially or as sample data, to build upon.

Afterwards come styling, optimizations, broader compatibility efforts and all the other nice stuff we love working on.

Frontend development and testing for those environments

While working (or planning to work) in an environment like that, you will quickly understand that in order to create a new feature or subsystem in isolation, you must not depend on external services being available. There are times when they will not be, and even when they are, they might not be up to date.

Developing in isolation 👨‍💻

Some common ways that teams choose to deal with service dependencies* during development are:

- Using staging environment API endpoints for their services.

- Running a local copy of their backend monolith.

- Using Docker to spin-up multiple local services.

* External data-fetching services are sometimes not even available in staging/development environments.

If some of the above are done meticulously with a light setup, that is a great place to be. But sadly this is rarely the case.

Most people will need to fight their way through setting up their development environment, with lots of "hacks" that are to be maintained ad infinitum.

This process is even becoming part of the onboarding for new team members. A pretty poor initiation ritual if you ask me 🤦.

Testing your feature against the system 🔧

On the testing side, apart from unit and integration tests, there should also be tests in place that validate the application-level workflows your feature contributes to. These are usually referred to as end-to-end tests. As the name implies, the way to write and reason about those tests is tightly related to the external services the system depends on.

Furthermore, this type of testing and how it should be conducted can still become a heated matter 🔥 in conversations between technical members of a team.

Should/Could it be run during development?

Should we only run these tests on the CI server, where all system components are built independently?

Should QA or the engineers be writing and validating these tests?

...

All the above concerns are valid but it is not up to an individual or the community to define what best suits your system. Go with what suits your team.

One common caveat (and misconception in my opinion) around these tests is that they require a full-blown backend/service system to be up and running. Due to the nature of the modern architectures we discussed, this is becoming more and more complex, "heavy" or sometimes impossible to fully set up.

As a result, teams are drawn away from end-to-end tests and do not validate how the whole application behaves with the new addition until the final step of the build pipeline. So much lost potential for improvement.

After all the hurdles mentioned, what can a team experiment with and eventually adopt in order to alleviate the pains that multi-service-dependent frontend applications bring to engineers?

Here is my proposal... just mock it off!

Mock it off 🤷‍♂️

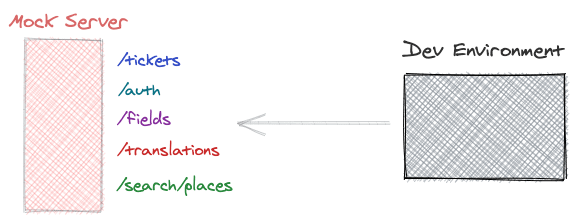

A tried and true solution to these issues, chosen by many teams, is mocking or otherwise stubbing those service API responses that your frontend application requires.

API mocking is the process of simulating a set of endpoints and replaying their expected responses to the caller, without the referenced API system actually being present.

By doing that, you can have a defined API schema with example responses bundled up for the services you depend on and available for consumption during development and testing.

The consumption of these "fake" responses usually happens through a static server that, given the endpoints, gives you back the matching payloads. The mocking schemas could be hosted and updated by different members of the team, stored in another application like Postman, or even be part of the frontend repository.
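To make the idea a bit more concrete, here is a minimal sketch of the lookup such a mock server performs. The endpoint paths, payloads and the `resolveMock` helper are all made up for illustration; a real setup would wire something like this into whichever HTTP server or mocking tool your team prefers.

```typescript
// Hypothetical mock definitions: paths and payloads are invented for this example.
type HttpMethod = "GET" | "POST";

interface MockResponse {
  status: number;
  headers: Record<string, string>;
  body: unknown;
}

// The "schema": each key is "<METHOD> <path>" and maps to a canned response.
const mocks = new Map<string, MockResponse>([
  [
    "GET /api/hotels",
    {
      status: 200,
      headers: { "Content-Type": "application/json" },
      body: [{ id: 1, name: "Sea View Hotel" }],
    },
  ],
  [
    "POST /api/bookings",
    {
      status: 201,
      headers: { "Content-Type": "application/json" },
      body: { bookingId: "abc-123", status: "confirmed" },
    },
  ],
]);

// Return the canned response for a request; endpoints without a mock get a 404,
// so a missing definition is easy to spot during development.
function resolveMock(method: HttpMethod, path: string): MockResponse {
  const key = `${method} ${path}`;
  return (
    mocks.get(key) ?? {
      status: 404,
      headers: { "Content-Type": "application/json" },
      body: { error: `No mock defined for ${key}` },
    }
  );
}
```

Calling `resolveMock("GET", "/api/hotels")` gives back the hotel list with a 200 status, while any endpoint that has no entry falls through to the 404 response.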

As a frontend engineer, you just want to open your development server and see the interface you will be tasked to work on. Now the weird parts of setting up and consuming a service, when at the end of the day you only needed its API response, are abstracted away from you.

Depending on the needs and the mocking server implementation, you should have the ability to also tweak the payloads and validate your frontend against special cases.

What if a service returns a different "Content-Type" header? Or decides to randomly start streaming "video/mp4" data? Sounds unlikely, but you can experiment with many cases that might break your implementation. Doing so will surely leave it in a more flexible and reliable state than before.
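One way to play with such special cases is to keep a baseline response and layer named "scenarios" on top of it. The following is only a sketch under my own assumptions: the scenario names, the `withScenario` helper and the `isJson` guard are hypothetical and not part of any particular mocking tool.

```typescript
interface MockResponse {
  status: number;
  headers: Record<string, string>;
  body: unknown;
}

// The happy-path response for a hypothetical hotel listing endpoint.
const baseline: MockResponse = {
  status: 200,
  headers: { "Content-Type": "application/json" },
  body: [{ id: 1, name: "Sea View Hotel" }],
};

// Named edge cases (invented for this example); each overrides only part of the baseline.
const scenarios: Record<string, Partial<MockResponse>> = {
  serverError: { status: 500, body: { error: "Internal Server Error" } },
  wrongContentType: { headers: { "Content-Type": "video/mp4" } },
  emptyList: { body: [] },
};

// Apply a scenario on top of a base response. Headers are merged key by key,
// and an unknown or missing scenario name leaves the base response unchanged.
function withScenario(base: MockResponse, name?: string): MockResponse {
  const override = name !== undefined ? scenarios[name] : undefined;
  return {
    ...base,
    ...override,
    headers: { ...base.headers, ...override?.headers },
  };
}

// The kind of frontend-side guard these scenarios are meant to exercise.
function isJson(res: MockResponse): boolean {
  return (res.headers["Content-Type"] ?? "").startsWith("application/json");
}
```

With this in place, `withScenario(baseline, "wrongContentType")` keeps the 200 status but swaps the header, so you can check that the frontend refuses to parse the body as JSON instead of blowing up.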

Additionally, the setup for a new frontend engineer will become a breeze. Just clone the repository, start the API mock server and you can start working. This can also hold true for backend engineers working on these separated service architectures, since they have the set of endpoints readily available for all the connected services. Sounds sweet 🍰!

Going a step further, think of all the nice things we have nowadays. By using something like Google's Puppeteer, you can even run end-to-end tests really fast, with the mock server supporting you by filling in for all those services that would otherwise need to be present.

Of all the benefits though, the one that in my opinion holds the largest stake is the reliability of your development environment. It becomes portable and independent of the availability of external systems. You can even code on a plane without an internet connection!

For sure there are tradeoffs

As with most things we juggle every day, there is no silver bullet, and mocking does not claim to be one. It proves to be immensely helpful in abstracting away many intricacies of the system, but there are maintenance costs and communication costs when trying to introduce it to a team workflow.

So should you?

Considering all the benefits and expected drawbacks, you can hopefully make an informed decision on whether and when it is the right time to try out API mocking in similar environments. The tools available are many, with approachable offerings and successful track records. In my opinion it is definitely worth a try.

If you feel like it, I have written about a way that I have found makes mocking a breeze for some use cases:

How to mock your frontend application environment in about a minute!

Peter Perlepes ・ May 7 ・ 4 min read

Drawings made in the amazing excalidraw
