At the end of May I attended Nordic Testing Days in Tallinn, Estonia. It was the first time I spoke at a conference outside of Sweden, and I had a great time. There was one day of tutorials, followed by two days of workshops and regular half-hour talks. Here are my impressions:

Talks I Liked

Why Should Exploratory Testing Even Be the Subject of a Keynote? This opening keynote by Alex Schladebeck was the best talk of the conference. Exploratory testing often seems driven by intuition – “maybe I’ll try this”, or “I wonder if it works if I do that”. But in order to teach how to do it well, we need to be aware of why we do these things – what the basis for the intuition is. Some tips for doing this are to narrate what you are doing, reflect on why you take the actions you do, label the rule you followed, and practice recalling what you did.

For the second part of the talk, Alex gave many excellent examples of heuristics that she uses. Examples include:

- Ifs are iffy – ifs in real life often lead to ifs in the code, and that is fertile ground for bugs, so worth exploring.

- A rose by any other name – if there are overlapping names for a concept (such as infant vs baby when booking a flight) there can be problems.

- You can never go back – for web apps, try going backwards and forwards, do and undo, and watch out for problems.

- Break the chain – deleting is hard, especially if the object you delete is linked, referenced, copied, appears in lists etc – make sure it is gone in all those contexts as well.

- Yellow is interesting – when states have more than two values, such as green, yellow and red, there can often be bugs because the logic gets more complicated.
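To make the “Yellow is interesting” heuristic a bit more concrete, here is a minimal Python sketch of my own (not from the talk). The `Status` enum and the `overall_status` function are hypothetical; the point is only that with three states it is cheap to check every combination, including the yellow ones that are easy to forget.

```python
from enum import Enum
from itertools import product


class Status(Enum):
    # Hypothetical three-valued state, ordered from best to worst.
    GREEN = 0
    YELLOW = 1
    RED = 2


def overall_status(statuses):
    """Return the worst status among the components (GREEN if there are none)."""
    return max(statuses, key=lambda s: s.value, default=Status.GREEN)


def check_all_pairs():
    """Check every pair of states, so the YELLOW combinations are covered too."""
    checked = 0
    for a, b in product(Status, repeat=2):
        result = overall_status([a, b])
        assert result.value == max(a.value, b.value), (a, b, result)
        checked += 1
    return checked
```

With only two states there are four pairs to think about; with three there are nine, and the mixed cases (green plus yellow, yellow plus red) are where the logic tends to go wrong.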

These heuristics are really useful, and you can start using them in your own testing right away. Alex also gave them catchy names, possibly thanks to her background in language and linguistics. I really enjoyed this talk because it made me think of exploratory testing in a new way – why did I decide on this action now? – and because it gave me lots of useful test heuristics. The delivery was also top-notch.

Utilizing Component Testing For Ultra Fast Builds. Tim Cochran talked about how to divide up, and speed up, the testing. He recommended testing the functionality and edge cases in component tests (where a component is, for example, one microservice), and using end-to-end tests for the integration between components (the critical path) and for configuration. I think this is fairly standard advice, but it is good to be reminded of it, and it made me think about how we test the application I work with.

Ideas for speeding up the testing included running component tests in parallel, and a discussion of in-process tests (which use function calls) versus out-of-process tests (which use API calls). He also referred to the book Accelerate on the importance of fast tests. I liked that this talk was based on actual experience helping companies set up CI/CD pipelines. The best quote from the talk was “Testability is a feature. Don’t be afraid to change the code to make it more testable”. I wholeheartedly agree with this!
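As an illustration of the difference between the two kinds of test, here is a small Python sketch of my own (not from the talk). The `convert` function and the `/convert` endpoint are made up. The first helper calls the logic directly; the second goes through HTTP and JSON, the way an out-of-process test would. For brevity the server runs in a background thread here, whereas a real out-of-process test would start the service separately.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


def convert(amount, rate):
    """Hypothetical component logic: convert an amount at a given rate."""
    return round(amount * rate, 2)


class ConvertHandler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper around convert(), used only by the API-level call."""

    def do_POST(self):
        length = int(self.headers["Content-Length"])
        body = json.loads(self.rfile.read(length))
        result = convert(body["amount"], body["rate"])
        payload = json.dumps({"result": result}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # keep the test output quiet


def call_in_process():
    """In-process style: a plain function call, no network involved."""
    return convert(100, 1.1)


def call_over_http():
    """Out-of-process style: the same logic reached through an API call."""
    server = HTTPServer(("127.0.0.1", 0), ConvertHandler)  # port 0 = any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        request = urllib.request.Request(
            f"http://127.0.0.1:{server.server_port}/convert",
            data=json.dumps({"amount": 100, "rate": 1.1}).encode(),
            headers={"Content-Type": "application/json"},
        )
        # An empty ProxyHandler makes sure the local call bypasses any proxy.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(request) as response:
            return json.loads(response.read())["result"]
    finally:
        server.shutdown()
        server.server_close()
```

Both helpers exercise the same logic, but the second one also pays for server start-up, serialization and a network round trip, which is why the in-process variant is the faster one to run in bulk.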

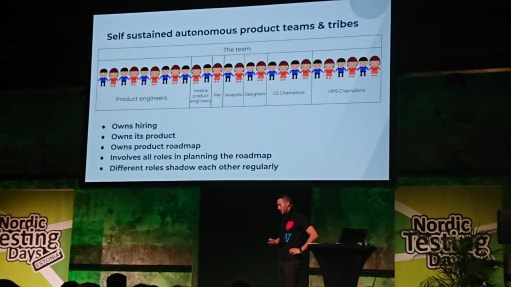

Life After QA. Erik Kaju is head of engineering for payment cards at TransferWise. He has moved from testing roles to developer, tech lead and engineering manager. At TransferWise there are no dedicated testers – testing is everybody’s responsibility. He went on to talk about how they work. I thought it was really interesting to hear, and to compare to how we work at TriOptima. Here are the most interesting facts:

- Customer support is part of the product engineering team (to make sure the feedback is received).

- Different roles shadow each other regularly – e.g. handling customer support for a few days.

- Currently they have 169 microservices, and they release 1500 times per month.

- They use continuous deploys to the test environments, and manual deploys to production.

- They keep statistics on all PRs and releases – smaller ones are faster.

- Developers can deploy to prod, but cannot access customer data.

Learning From Bugs

The title of my talk was Learning From Bugs, and I gave it after lunch on the last day. There were four other talks and workshops at the same time, and I think about 90 people attended my talk. I was quite happy with how it went. Several people smiled and nodded when I covered certain bugs, which was very encouraging. All attendees were encouraged to rate the talks they listened to using the app Attendify. After the conference I got all the ratings and comments for my talk. The scale was from 1 to 5 stars, and I got one 2-star, seven 3-star, 24 4-star and 18 5-star ratings, for an average rating of 4.18. I am really grateful for getting this rating! 16 people also wrote comments, and most of them were quite positive. This one made me especially happy: “Good, relaxed talk, ideal after lunch. You actually have some content in those slides, a rare thing”. Getting this feedback was great. I rated all the talks I attended, but now I wish I had written comments on all of them as well, because it is such valuable feedback.
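For anyone who wants to check the arithmetic, the average is just a weighted mean of the star counts above. A few lines of Python (the variable names are my own) reproduce it:

```python
# Number of ratings received for each star value, as listed above.
ratings = {2: 1, 3: 7, 4: 24, 5: 18}

total_votes = sum(ratings.values())
total_stars = sum(stars * count for stars, count in ratings.items())
average = round(total_stars / total_votes, 2)
```

That is 209 stars from 50 ratings, which gives 4.18.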

This was my first time speaking outside of Sweden. I had given the same presentation at the Jfokus conference in Stockholm in February, and at a local test conference organized by the Swedish Association for Software Testing. Before that, I had given a version of the talk at several meetups in Stockholm (FooCafé, Stockholm Exploratory Testing Meetup, and Sthlm Web Dev Meetup). Speaking at the meetups was good practice for speaking at conferences. I found out about Nordic Testing Days on Twitter, and decided to submit a proposal. I had submitted to other conferences before, but had not been accepted. For Jfokus and Nordic Testing Days I spent quite a bit of time on the description of the talk. I looked at the descriptions from previous years’ conferences to get a feel for what a description should contain.

Odds and Ends

- A common theme of many talks was developers and testers sharing responsibility for quality assurance.

- Great venue – Kultuuri Katel, an old power plant, had great atmosphere and good conference rooms.

- Very good food.

- Friendly vibe.

- Two other blog posts about the conference: by Bailey Hanna and by Ali Hill.
