The world is full of data. Everything we do, our plans and decisions are based on data, even if we are not aware of it. No doubt that IT systems also run on data, yet we – its creators – don’t pay a lot of attention to it, or we do?

Data is not just what we have on record regarding, for example, our products or users. Data is also the technology itself. Let’s look at source code as an example. We won’t do anything with the data if our code stops working for some reason.

And the risks are significant – starting from simple human error, infrastructure problems to ransomware attacks. We cannot afford to lose data in any way. While database backup is a usual practice, can we say the same about source code backup?

The old ways…

Both the problem and the attempts to solve this issue are not new. It’s not like I’m reinventing the wheel now. The source code is an asset. It is a product we are working on. And as such, we need to take care of it and secure it. The most natural, the easiest (apparently) and also the cheapest, again apparently, way is to build a script to do it for us.

Well, after all, what is the problem? We make a backup script that periodically performs for us the “clone” operation of our entire repository. Alternatively, we have to figure out how to perform authorization, but that’s basically the only difficulty. A few lines of code and we’re done.

However, are we sure? What if we have several hundred repositories? Or new ones are created regularly? Let’s assume that we can handle that too, but what about the topic concerning metadata? After all, we don’t want to lose that either. That’s not why we use Pull Requests, Issues, etc. to allow ourselves to lose them. That’s what a simple “clone” won’t do for us.

Back to the topic of authorization. I skipped over it earlier, but after all, it is a key issue because it is related to security. The wrong solution puts us at great risk of exposing our credentials. I hope I don’t need to tell you what that means.

How to write a GitHub backup script

Now let’s move on to practice. Before we fire up such a backup script we must first analyze what should be in it. After all, the “clone” command and authorization data alone are definitely not enough. Below is a list of necessary elements:

- address of the repository (or the entire organization)

- credentials – user and password or access token

- storage – the place where we will store our backups

- filename convention – mechanism for versioning

This is the bare minimum for our solution to make sense. But you should also consider a few more elements, such as:

- hostname – if we use our own hosting service

- reports – who and when performed a backup, and whether it was successful or not

- persistence – if we want to delete old data (e.g. older than 30 days)

- scheduling – when and how often we want to perform our backup

As it turns out, it is not as easy as it might seem at first. And yet still such a script is not flexible. If we want, for example, to make a backup after each code merge to the specific branch, what then? Such a file does not allow us to do much.

Let me use a very popular script (323 stars and 144 forks – as of November 2022). It does what it is supposed to do and is, let’s call it, an acceptable solution. You can find GitHub backup script on GitHub Gist.

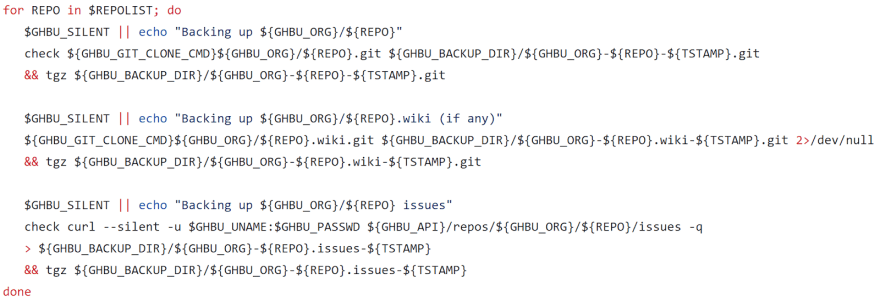

As you can see there we have some simple configurations to set up, then there are some validations, creating directories/files, downloading a list of repositories (max 100) and so on. What is most relevant you can see in the image below:

That is, for a given list of our repositories, we execute the “clone” command to get the repository itself, all wikis and issues. Not that bad. However, you can see that this is not the simplest solution. Nor is it flexible, as I mentioned earlier.

I also recommend getting acquainted with a similar script written in Python. It may not be as popular, but it has as many as 50 stars, so it is worth exploring this solution as well.

GitHub backup tools

According to the formal definition, backup is a copy of computer data taken and stored elsewhere. In case of emergency it may be used to restore the original data. We can say a good backup should have such features as:

- automation

- encryption

- versioning

- data retention

- recovery process

- scalability

So, does the backup script for GitHub created in this way meet this definition? Unfortunately, not really. Here we have a problem with scalability, encryption, and we haven’t even touched on recovery yet. And after all, this is a key issue! Why do we have a backup if we don’t know how to restore it quickly?

It is also worth noting that, admittedly, we rarely actually lose data. Our risk and cost is the time it takes to recover that data or restore the system to operation. Nowadays, downtime is unacceptable, so lightning-fast response and the ability to quickly restore a backup are extremely important to us. It would be difficult to achieve all these features with a rather simple, manually created backup scripts.

Why you should pick a backup tool instead of the script

To solve these problems, we can go further in writing more scripts and extending existing ones. But we can also use already existing third-party tools. This comes at a price, but allows us to overcome many obstacles.

Creating your own Git backup scripts may seem cheaper, faster and better, especially in the early stages of a project or organization. And certainly the biggest advantage of this approach is customization. It’s our program, so we can do what we want and how we want. We have full control over it. But in my opinion, that’s where the advantages of this solution ends.

The large (and constantly increasing) maintenance costs in the future, or the time required to manage and administer such scripts, are some of the main downsides of this approach. It is also worth noting that we have no guarantee of the reliability of the backup. I described one of such use cases in this article, the title of which already shows my opinion about writing backup scripts for GitHub.

I’ve already mentioned how critical Disaster Recovery is, and yet for that we would need another script. And probably more, to do the backup of the script…. making backups. It sounds absurd. After all, that’s not what we should be spending our money and our specialists’ (or ourselves) time on.

Benefits of GitProtect

With help comes a third-party tool called GitProtect. Okay, I’m sure some of us will cringe, because it’s another tool over which we don’t have full control and for which we have to pay. Apparently so, but after all, we have to pay for the time spent writing our own scripts too!

Among the advantages of this tool, we have, for example, central management, virtually unlimited configuration options or the ability to assign permissions and roles in the team. The latter allows us to conveniently manage who has access to what, so we can separate responsibility and management of the backup topic, for example, by teams or departments of our organization.

In terms of convenience and transparency, we have email (or Slack) notifications available here, as well as daily reports with compliance or audit information. And, of course, last but not least – Disaster Recovery from the real deal.

Conclusion

Let’s summarize what I have described above by just changing the title of this article. We should not be asking: “How to write a GitHub backup script and why (not) to do it?”, but rather “Why should you never ever use a GitHub backup script?”.

Let’s respect our time and allow ourselves to focus on developing our own business. Let’s not spend it on necessary, although boring, administrative topics, when someone can do it for us. And do it better!

Top comments (0)