Around two years ago I wrote an article on the perils of relying on big-O notation, and in it I focused on a comparison between comparison-based sorting (via std::sort) and radix sort, based on the common bucketing approach.

Recently I came across a video on radix sort which presents an alternate counting-based implementation at the end, and claims that the tradeoff point between radix and comparison sort comes much sooner. My intuition said that even counting-based radix sort would still be slower than a comparison sort for any meaningful input size, but it’s always good to test one’s intuitions.

So, hey, it turns out I was wrong about something. (But my greater point still stands.)

Here are my implementations of bucket-based and count-based radix sort, in C++:

#include <vector>

typedef std::vector<uint64_t> TestCase;

typedef std::vector<uint64_t> Bucket;

template<size_t K = 8> void radix_bucket_sort(TestCase& input)

{

constexpr size_t slots = 1 << K;

constexpr TestCase::value_type mask = slots - 1;

constexpr size_t bits = sizeof(TestCase::value_type) * 8;

for (int r = 0; r < bits; r += K) {

Bucket radixes[slots];

for (auto n : input) {

radixes[(n >> r) & mask].push_back(n);

}

input.clear();

for (auto& bucket : radixes) {

input.insert(input.end(), bucket.begin(), bucket.end());

}

}

}

template<size_t K = 8> void radix_count_sort(TestCase& input)

{

constexpr size_t slots = 1 << K;

constexpr TestCase::value_type mask = slots - 1;

constexpr size_t bits = sizeof(TestCase::value_type) * 8;

TestCase output(input.size());

for (int r = 0; r < bits; r += K) {

size_t counts[slots] = {0};

for (auto n : input) {

++counts[(n >> r) & mask];

}

size_t accum = 0;

for (auto& n : counts) {

n += accum;

accum = n;

}

for (auto iter = input.rbegin(); iter != input.rend(); ++iter) {

output[--counts[(*iter >> r) & mask]] = *iter;

}

std::swap(input, output);

}

}

For the full source, see big-o-2.cpp

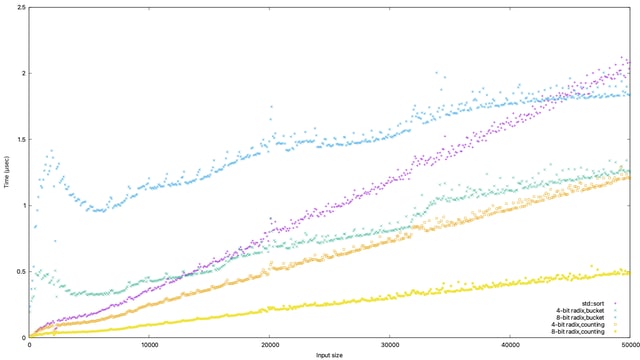

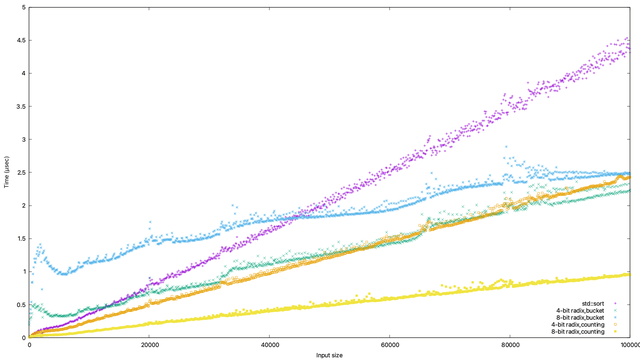

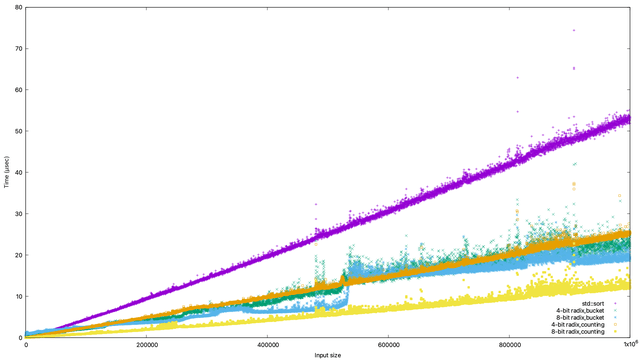

And here are the time comparisons between std::sort, and both bucket and counting radix sort using a 4- and 8-bit radix: (raw data)

So, what’s going on here? And why are even the bucket-radix sort graphs different than last time?

It’s hard to do a like-for-like comparison with the previous set of implementations; this time around was running on a very different computer (a Mac mini running on the M1 chip), a newer version of the C++ compiler, a newer version of the C++ standard library, and who knows how many other differences. It’s pretty interesting that the power-of-two allocation overhead from bucketed radix, in particular, has more or less gone away; there’s possibly something about the M1 architecture which makes the vector resize take much less time, and also making use of clang’s robust C++17 support may have also reduced some of the copy overhead due to implicit move semantics being used.

But it’s pretty interesting to me that the following things are pretty apparent:

- A 4-bit radix+bucket sort breaks even with

std::sortat around N=13000 - An 8-bit radix+bucket sort breaks even at around N=44000

- Both 4- and 8-bit radix+count sort break even pretty much immediately (around N=600 and N=100, respectively)

Now, all that said, this still demonstrates a problem with just assuming that a lower big-O factor is better. All four of those radix sort implementations are (\mathcal{O}(N)), but the bucket-based ones still are slower than the (\mathcal{O}(N lg N)) std::sort until fairly large input sizes, even with all of the overall performance improvements which have happened (after all, std::sort has gotten faster too).

And, of course, all four radix sorts have the same time complexity as each other, but they all scale with different factors; in particular, it doesn’t take that long for the radix+bucket sorts to overtake the 4-bit counting sort (which is, frankly, pretty surprising to me).

As always, the fact that an algorithm scales better in the long term doesn’t mean it’s suitable for all input sizes. Even in this best-case situation, std::sort still wins for input sizes of a few hundred values, and of course the maintenance overhead of using std::sort is way lower.

It’s also important to remember that these sorting functions can still only work on unsigned integers, or signed integers with a little tweaking. They are not applicable to floating-point values, much less things with more complicated tiebreaking rules (such as database rows).

And, heck, it’s also really easy to write code which is (\mathcal{O}(N)) but not optimal! As we saw with the previous article.

So, my conclusion is still the same: practical concerns trump theoretical complexity. But as a bonus conclusion, it’s okay to revisit things to see if they’ve changed.

Oh, and you might also want to consider that just because your parts of the algorithm have a certain complexity factor doesn’t mean that’s what the runtime performance will be; it’s easy to make something accidentally quadratic.

Top comments (1)

Radix sort vs. std::sort shows that real-world performance isn’t just about Big-O. Hardware, compilers, and memory allocation play a huge role. Also, if you're coding a lot, firacode.org/ can boost readability with ligatures—worth checking out!